The Trust Verification Audit: Beyond Rankings to Prove Your SEO Agency Actually Drives Revenue

Majestic introduced its Trust Flow metric as a way to score link quality back in 2012. Fourteen years later, the concept of "trust" between a business and its SEO agency still runs on roughly the same logic: you're supposed to take their word for it. The agency sends a monthly PDF with ranking charts and traffic graphs, you scan the green arrows, and everyone agrees things are going well. The problem is that this ritual has survived long past the point where it tells you anything useful about whether organic search is actually making your business money. What follows is the chronological trace of how this gap opened, how the industry ignored it, and what a proper trust verification audit looks like when you stop accepting ranking reports as proof of value.

When Ranking Reports Were the Whole Story

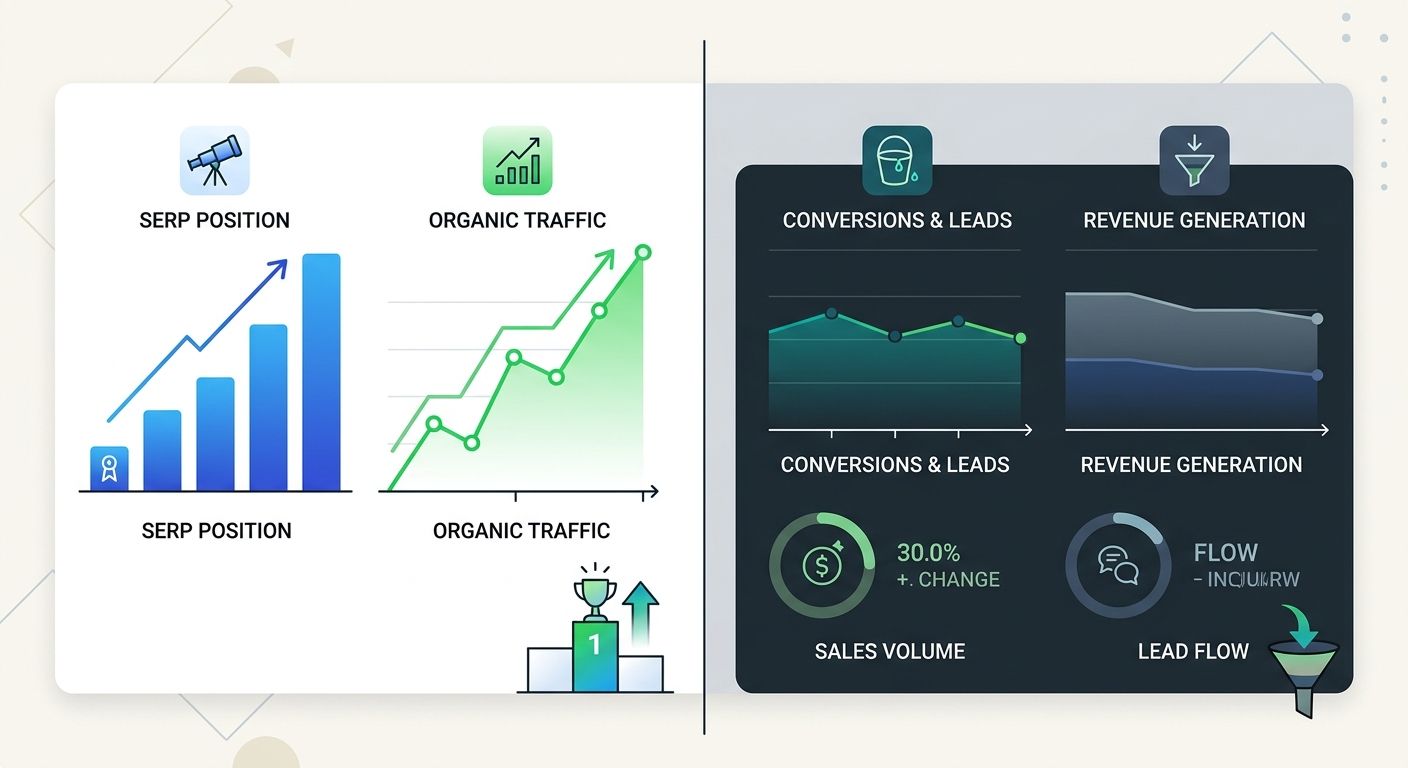

For most of the 2010s, the SEO agency performance metrics conversation started and ended with keyword positions. An agency would agree to target a list of keywords, report their positions monthly, and everyone would celebrate when a term hit page one. The implicit assumption was straightforward: higher rankings meant more traffic, more traffic meant more leads, and more leads meant more revenue. Each link in that chain felt logical enough that nobody bothered to verify the connections.

And for a while, the model worked well enough. Search was simpler. Organic click-through rates on page-one results were reliably high. If you ranked #3 for a high-volume term, you could predict with reasonable accuracy how much traffic that position would send. Agencies built their entire reporting infrastructure around this premise, and clients bought it because the alternative required actual measurement work that neither side wanted to do.

The first cracks appeared when agencies started gaming the reporting itself. I saw this repeatedly during my years on the operations side: agencies would cherry-pick keywords that were easy to rank for but had no commercial intent, then present those wins as proof of progress. A B2B software company ranking #1 for "what is ERP" looked great on paper. Whether that ranking produced a single qualified lead was a question nobody in the room was asking.

Traffic Became the Proxy Everyone Accepted

Google Analytics adoption pushed the industry toward a slightly more sophisticated proxy: sessions. Agencies shifted from pure ranking reports to traffic-focused dashboards. The pitch evolved from "we got you to page one" to "organic traffic is up 34% quarter over quarter." This felt like progress. Traffic was at least one step closer to business outcomes than keyword positions alone.

But the substitution created its own blind spots. Agencies optimized for volume without qualifying the traffic they were driving. A blog post about a trending topic might generate 50,000 sessions in a month and produce zero leads. Meanwhile, a well-optimized service page pulling 200 sessions might convert at 8% and generate serious pipeline. The monthly report treated both pages the same way because traffic was traffic, and the number went up.
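The arithmetic behind that comparison is worth making explicit. The sketch below uses the session counts and conversion rates from the example above; the page labels and the `expected_leads` helper are illustrative names, not part of any reporting tool.

```python
# Illustrative only: figures come from the example in the text above.
pages = {
    "trending blog post": {"sessions": 50_000, "conversion_rate": 0.0},
    "service page": {"sessions": 200, "conversion_rate": 0.08},
}


def expected_leads(sessions: int, conversion_rate: float) -> float:
    """Leads a page produces: sessions multiplied by conversion rate."""
    return sessions * conversion_rate


leads_by_page = {
    name: expected_leads(p["sessions"], p["conversion_rate"])
    for name, p in pages.items()
}

for name, leads in leads_by_page.items():
    print(f"{name}: {pages[name]['sessions']:,} sessions, {leads:.0f} leads")
```

A traffic-only report ranks the blog post 250 times higher than the service page. A lead-based report puts it last.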

This is the era when optimizing for search volume over search intent became the industry's default setting. Agencies built content calendars around keyword difficulty and monthly search volume. Whether the people typing those queries would ever buy anything was a secondary concern, if it was a concern at all.

Semrush's research on user engagement reinforces why this mattered. A bounce rate below 40% signals that visitors find content credible and relevant, while anything significantly higher suggests they landed, didn't find what they expected, and left. Agencies reporting traffic growth without segmenting by engagement quality were presenting a number that could mean almost anything.

The Revenue Disconnect Nobody Wanted to Measure

The honest reason most agencies avoided revenue-driven SEO measurement is simple: it's hard. Tracking a visitor from an organic search result through a multi-touch buying process that might involve email nurture sequences, sales calls, and a three-month decision cycle requires infrastructure that most marketing teams don't have. As one analysis puts it bluntly, revenue takes more effort to track, so many agencies avoid it.

That avoidance was comfortable for agencies because it kept accountability vague. If rankings went up, they could claim success. If revenue didn't follow, the blame could land on the client's sales team, their website design, their pricing, or any other variable outside the agency's scope. The measurement gap became a shield.

The discomfort intensified as search itself changed. Google's shift toward task fulfillment and AI-generated answers meant that traditional ranking metrics started missing what search was actually becoming. A featured snippet might answer a user's question completely, meaning a #1 ranking could generate impressions without producing a single click. Traffic dashboards showed one reality. Business outcomes showed another.

By this point, the trust signals in SEO agency relationships had become almost entirely superficial. Clients trusted agencies based on reporting artifacts that the agencies controlled. The data was real, but the story it told was incomplete at best and misleading at worst.

Building the Audit Framework That Connects Search to Revenue

The trust verification audit didn't emerge as a single framework from a single source. It developed in pieces as the gap between ranking success and revenue reality became too obvious to ignore. What I'm describing here synthesizes approaches I've tested across roughly forty agency evaluations over the past three years, combined with the attribution models that the measurement-focused corner of the industry has been refining.

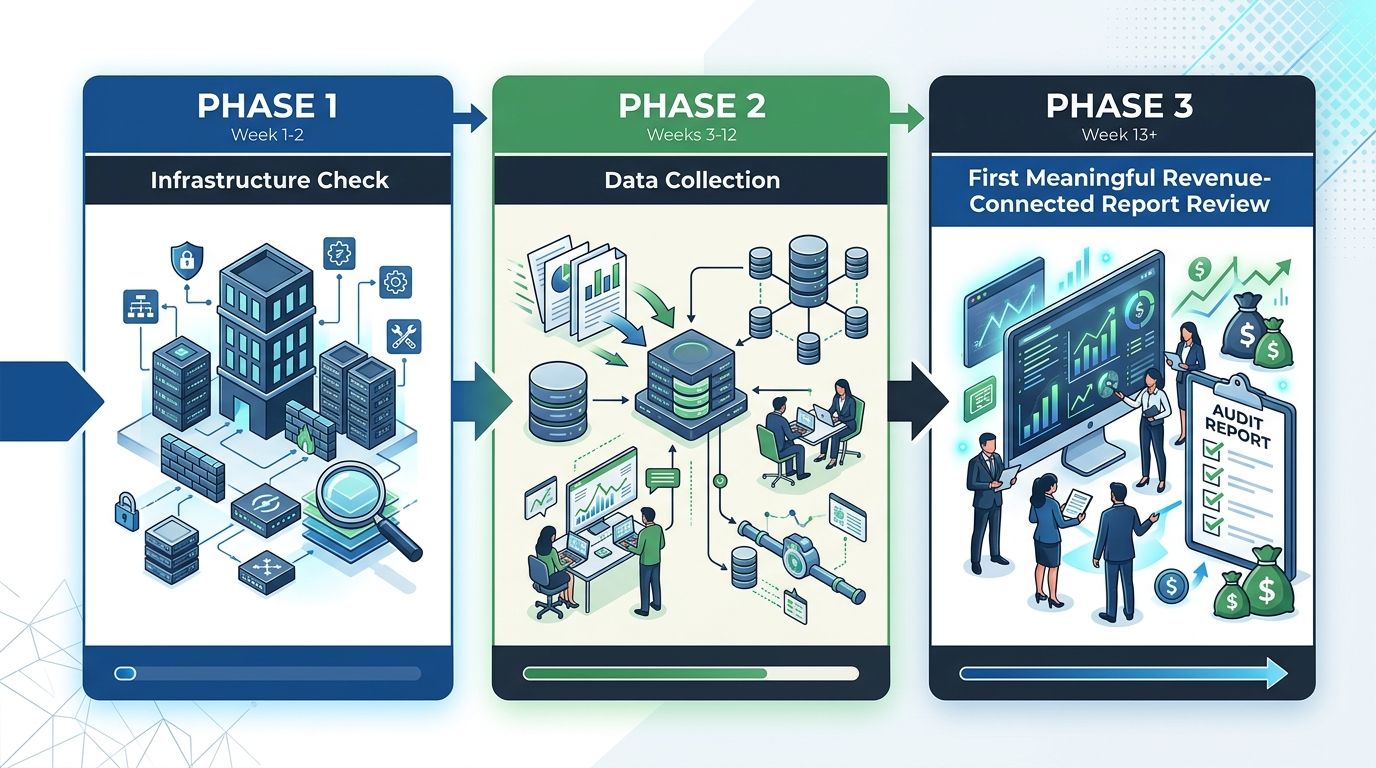

The audit works in three layers, and each one builds on the layer before it.

Layer 1: Attribution Infrastructure Check

Before you evaluate a single metric, you need to verify that the measurement plumbing actually exists. This means confirming that your analytics setup can trace a visitor's path from organic search entry through to a conversion event that has a dollar value attached. If your agency has been reporting for twelve months and you still can't pull a report showing revenue attributed to organic search through both last-click and multi-touch models, the attribution infrastructure is broken. Full stop.
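To see why it matters that both models are available, here is a minimal sketch comparing last-click attribution with a linear multi-touch model (only one of several multi-touch schemes). The journeys, channel names, and deal values are hypothetical, and real analytics platforms apply their own rules.

```python
from collections import defaultdict

# Hypothetical converted journeys: ordered channel touches plus deal value.
journeys = [
    {"touches": ["organic_search", "email", "direct"], "revenue": 9000.0},
    {"touches": ["paid_search", "organic_search"], "revenue": 4000.0},
    {"touches": ["organic_search"], "revenue": 2000.0},
]


def last_click(journeys):
    """Give 100% of each deal's revenue to the final touch."""
    totals = defaultdict(float)
    for j in journeys:
        totals[j["touches"][-1]] += j["revenue"]
    return dict(totals)


def linear_multi_touch(journeys):
    """Split each deal's revenue equally across every touch."""
    totals = defaultdict(float)
    for j in journeys:
        share = j["revenue"] / len(j["touches"])
        for channel in j["touches"]:
            totals[channel] += share
    return dict(totals)


last = last_click(journeys)
linear = linear_multi_touch(journeys)
print("last-click organic revenue:", last.get("organic_search", 0.0))
print("linear organic revenue:", linear.get("organic_search", 0.0))
```

With this sample data, organic search is credited differently under each model because it opened the largest deal but did not close it. An agency that can only show one view is hiding part of the picture, whether or not it means to.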

What to verify:

- Goal tracking in your analytics platform is configured with actual monetary values, not just event counts
- UTM parameters and channel groupings correctly separate organic search from other traffic sources
- CRM integration exists so that leads generated from organic traffic can be followed through the sales pipeline to closed/won status
- The agency has access to this data and references it in reporting

An agency that claims great results but has never requested CRM access is an agency that can't prove those results connect to revenue.
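The CRM check can be sketched as a simple join between analytics leads and CRM deals. Everything here is an assumption for illustration: the field names (`lead_id`, `channel`, `stage`, `amount`) and the sample records do not reflect any specific platform's export format.

```python
# Hypothetical exports: field names are assumptions, not a real platform schema.
analytics_leads = [
    {"lead_id": "L1", "channel": "organic_search"},
    {"lead_id": "L2", "channel": "paid_search"},
    {"lead_id": "L3", "channel": "organic_search"},
    {"lead_id": "L4", "channel": "organic_search"},
]
crm_deals = {
    "L1": {"stage": "closed_won", "amount": 12000.0},
    "L2": {"stage": "closed_won", "amount": 5000.0},
    "L3": {"stage": "closed_lost", "amount": 0.0},
    # L4 never reached the CRM: a gap in the integration.
}


def organic_pipeline(leads, deals):
    """Follow organic leads into the CRM and total their closed/won revenue."""
    organic_ids = [l["lead_id"] for l in leads if l["channel"] == "organic_search"]
    untracked = [i for i in organic_ids if i not in deals]
    won_revenue = sum(
        deals[i]["amount"]
        for i in organic_ids
        if i in deals and deals[i]["stage"] == "closed_won"
    )
    return {
        "organic_leads": len(organic_ids),
        "untracked": untracked,
        "won_revenue": won_revenue,
    }


result = organic_pipeline(analytics_leads, crm_deals)
print(result)
```

The `untracked` list is the part to watch. Organic leads that never appear in the CRM are revenue nobody can attribute, in either direction.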

Layer 2: The Three Revenue-Connected Reports

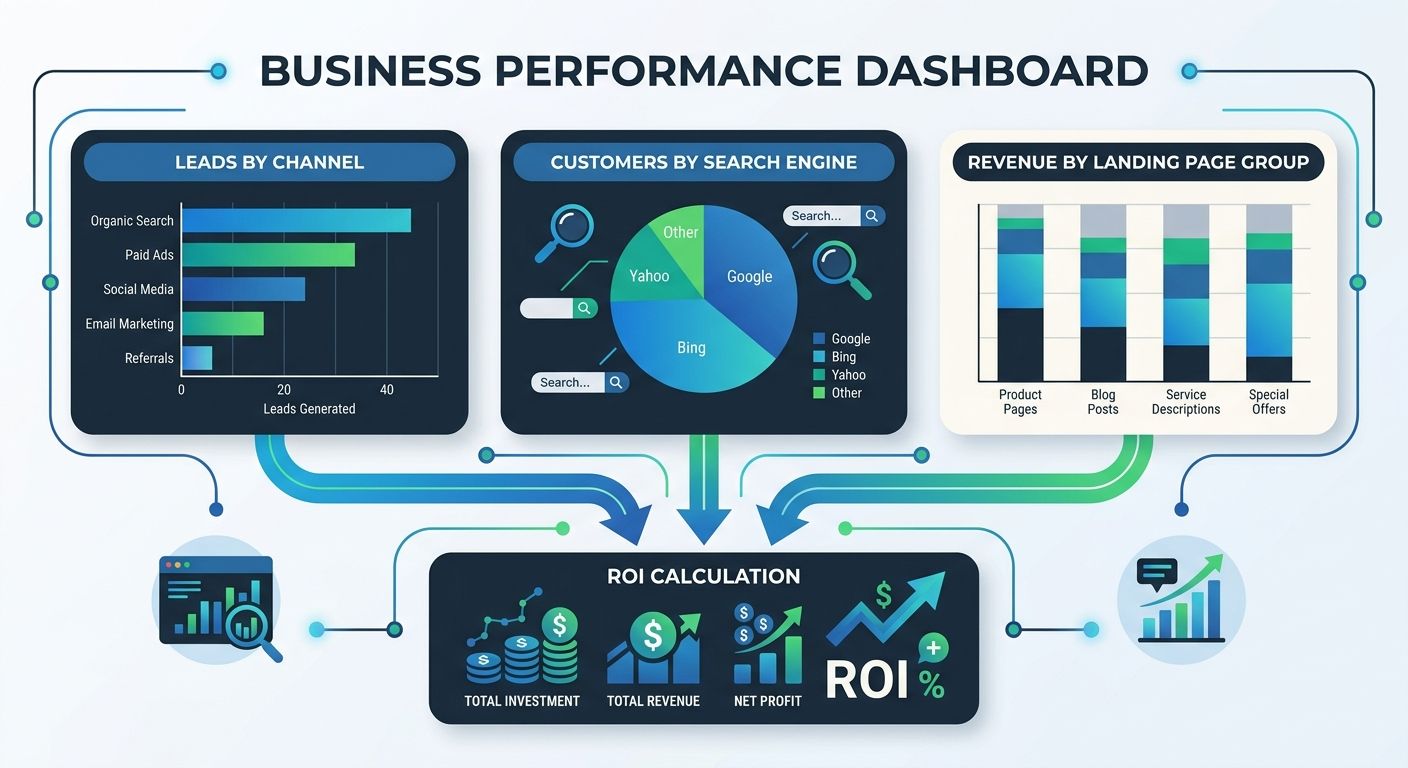

The SEO attribution research from SEO.com identifies three specific reports that move beyond ranking metrics into genuine ROI territory:

Leads by Channel compares organic search performance against paid search, social, email, and direct traffic. This isn't about proving SEO is better than other channels. It's about understanding SEO's proportional contribution and whether that contribution is growing or shrinking relative to spend.
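A rough version of this report needs only two numbers per channel. The sketch below computes each channel's share of leads and its cost per lead; the channel names, lead counts, and spend figures are invented for illustration.

```python
# Hypothetical quarterly figures per channel.
channels = {
    "organic_search": {"leads": 120, "spend": 18000.0},
    "paid_search": {"leads": 200, "spend": 50000.0},
    "email": {"leads": 80, "spend": 4000.0},
}


def leads_by_channel(channels):
    """Return each channel's share of total leads and its cost per lead."""
    total_leads = sum(c["leads"] for c in channels.values())
    report = {}
    for name, c in channels.items():
        report[name] = {
            "lead_share": c["leads"] / total_leads if total_leads else 0.0,
            # Cost per lead is undefined for a channel with no leads.
            "cost_per_lead": c["spend"] / c["leads"] if c["leads"] else None,
        }
    return report


report = leads_by_channel(channels)
for name, row in report.items():
    print(name, f"{row['lead_share']:.0%}", row["cost_per_lead"])
```

Run the same calculation each quarter and compare. The trend in organic's share relative to its spend is the signal, not any single quarter's value.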

Customers from SEO by Search Engine breaks down which search engines are actually sending people who buy. With AI-driven search platforms like Perplexity and Bing's Copilot features gaining traction, this report reveals whether your agency's optimization strategy accounts for the full search ecosystem.

Revenue from SEO by Landing Page Group is where the conversation gets genuinely useful. It shows which content categories and page types drive actual dollars. A blog section generating 70% of organic traffic but 4% of organic revenue tells a completely different story than an agency report that lumps all organic sessions together.
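Here is a minimal sketch of that report, assuming you can export organic sessions and attributed revenue per landing page. Grouping by the first URL path segment is one simple convention, not the only one, and the paths and figures are made up to reproduce the 70% / 4% split described above.

```python
from collections import defaultdict

# Hypothetical rows; the URL paths and figures are illustrative assumptions.
rows = [
    {"landing_page": "/blog/seo-trends", "sessions": 5000, "revenue": 1000.0},
    {"landing_page": "/blog/what-is-erp", "sessions": 2000, "revenue": 1000.0},
    {"landing_page": "/services/implementation", "sessions": 2000, "revenue": 30000.0},
    {"landing_page": "/pricing", "sessions": 1000, "revenue": 18000.0},
]


def page_group(path: str) -> str:
    """Group pages by their first URL path segment (an assumed convention)."""
    first = path.strip("/").split("/")[0]
    return first or "home"


def revenue_by_group(rows):
    """Compare each group's share of organic sessions with its share of revenue."""
    sessions = defaultdict(int)
    revenue = defaultdict(float)
    for r in rows:
        group = page_group(r["landing_page"])
        sessions[group] += r["sessions"]
        revenue[group] += r["revenue"]
    total_sessions = sum(sessions.values())
    total_revenue = sum(revenue.values())
    return {
        g: {
            "session_share": sessions[g] / total_sessions if total_sessions else 0.0,
            "revenue_share": revenue[g] / total_revenue if total_revenue else 0.0,
        }
        for g in sessions
    }


shares = revenue_by_group(rows)
for group, s in shares.items():
    print(f"{group}: {s['session_share']:.0%} of sessions, {s['revenue_share']:.0%} of revenue")
```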

Layer 3: Forward-Looking AI Visibility Metrics

The audit's final layer addresses what's coming, not what's already happened. Adobe's 2026 analysis recommends measuring AI citation frequency, generative AI referral traffic, assisted conversions, and share of model compared to competitors. These metrics barely existed two years ago, and most agencies still don't track them.
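These metrics do not yet have settled definitions, so treat the following as one plausible way to compute two of them from a sample of AI-generated answers. The prompts, brand names, and both formulas are assumptions for illustration and are not taken from Adobe's analysis.

```python
# Hypothetical sample: brands mentioned in each AI answer to a tracked prompt.
responses = [
    {"prompt": "best erp for manufacturers", "brands": ["Acme", "Globex"]},
    {"prompt": "erp implementation cost", "brands": ["Globex"]},
    {"prompt": "erp vs crm", "brands": []},
    {"prompt": "top erp vendors", "brands": ["Acme", "Globex", "Initech"]},
]


def citation_frequency(responses, brand):
    """Fraction of sampled responses that mention the brand at least once."""
    if not responses:
        return 0.0
    return sum(brand in r["brands"] for r in responses) / len(responses)


def share_of_model(responses, brand):
    """The brand's mentions as a fraction of all brand mentions in the sample."""
    total = sum(len(r["brands"]) for r in responses)
    if total == 0:
        return 0.0
    return sum(r["brands"].count(brand) for r in responses) / total


print("Acme citation frequency:", citation_frequency(responses, "Acme"))
print("Acme share of model:", round(share_of_model(responses, "Acme"), 3))
```

Whatever definition your agency uses, the useful questions are whether it is written down and whether the prompt sample stays fixed from one period to the next.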

Ask your agency whether they monitor how often your brand appears in AI-generated search responses. Ask whether they track referral traffic from AI search interfaces separately from traditional organic. If the answer is no, the agency's measurement framework is already outdated.
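Separating AI referrals from traditional organic traffic can start as a simple referrer lookup. The sketch below is a minimal version; the hostname lists are illustrative assumptions that would need regular maintenance, and many AI interfaces pass no referrer at all, so this undercounts.

```python
from urllib.parse import urlparse

# Illustrative hostname lists (assumptions): extend and maintain for real use.
AI_HOSTS = {"perplexity.ai", "chatgpt.com", "copilot.microsoft.com", "gemini.google.com"}
SEARCH_HOSTS = {"google.com", "bing.com", "duckduckgo.com"}


def classify_referrer(referrer: str) -> str:
    """Label a referrer URL as ai_search, organic_search, or other."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in AI_HOSTS:
        return "ai_search"
    if host in SEARCH_HOSTS:
        return "organic_search"
    return "other"


for ref in [
    "https://www.perplexity.ai/search?q=erp+vendors",
    "https://www.google.com/",
    "https://news.example.com/article",
]:
    print(ref, "->", classify_referrer(ref))
```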

Applying the Audit to an Active Agency Relationship

Running a trust verification audit against your current agency doesn't need to be adversarial. Position it as a calibration exercise. You're aligning on what success actually means, and you're building the reporting infrastructure to prove it. Good agencies welcome this because it gives them better data to optimize against. Bad agencies resist it because measurement clarity threatens the vague success narrative they've been selling.

The performance metrics trap in agency selection applies here too. Agencies that present only the metrics they control are constructing a narrative, not demonstrating results. A trust verification audit forces the conversation into territory where narratives have to be backed by numbers that connect to your P&L.

The practical timeline looks like this: the attribution infrastructure check (Layer 1) should take one to two weeks. If your analytics and CRM aren't properly configured, fixing that comes before any other measurement work. Layers 2 and 3 require a full quarter of data collection before the reports become meaningful. Don't expect to run the audit and have answers in a week.

SaaS companies provide a useful benchmark here. 2026 ROI data shows SaaS businesses averaging 702% ROI on SEO with break-even periods as short as seven months. If your agency has been engaged for longer than that and you still can't calculate your organic search ROI, the measurement gap should concern you.
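Both numbers in that benchmark are simple to compute once revenue attribution exists. The sketch below uses the standard ROI formula, (revenue minus cost) divided by cost, and finds the first month where cumulative revenue covers cumulative cost. The monthly figures are constructed for illustration and are not drawn from the cited data.

```python
def roi_percent(revenue: float, cost: float) -> float:
    """Standard ROI as a percentage: (revenue - cost) / cost * 100."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (revenue - cost) * 100 / cost


def break_even_month(monthly_costs, monthly_revenues):
    """First 1-indexed month where cumulative revenue >= cumulative cost, else None."""
    cum_cost = 0.0
    cum_revenue = 0.0
    for month, (cost, revenue) in enumerate(zip(monthly_costs, monthly_revenues), start=1):
        cum_cost += cost
        cum_revenue += revenue
        if cum_revenue >= cum_cost:
            return month
    return None


# Hypothetical engagement: flat retainer, revenue ramping up over time.
costs = [5000.0] * 8
revenues = [0.0, 0.0, 1000.0, 2000.0, 4000.0, 6000.0, 22000.0, 25000.0]

print("ROI on 100k spend returning 802k:", roi_percent(802_000.0, 100_000.0))
print("Break-even month:", break_even_month(costs, revenues))
```

If neither function can be fed real inputs after the engagement has run this long, the problem is the measurement, not the math.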

I've seen this play out with architects and other professional services firms where the disconnect between ranking success and signed contracts can be particularly stark. An architecture firm ranking well for "modern office design" might see solid traffic numbers while generating zero qualified project inquiries. The trust verification audit would catch this within the first quarterly review by showing revenue by landing page group.

The Resistance Pattern

Every agency I've seen pushed through this audit process follows a predictable resistance arc. The first response is usually enthusiasm: "We'd love to move to revenue-based reporting!" The second phase involves technical excuses about why the CRM integration is harder than expected, why the attribution model needs more customization, or why the client's sales cycle makes revenue tracking impractical. The third phase is where you learn what kind of agency you're actually working with.

Agencies that push through the technical challenges and deliver revenue-connected reports within a quarter are agencies worth keeping. Agencies that stall indefinitely in phase two are telling you something important about their confidence in the results they'd have to show you.

Transparent audit processes, as organizational risk research confirms, allow both parties to uncover problems that go unnoticed when reporting stays superficial. A collaborative approach to the audit surfaces real performance data. A combative approach surfaces the agency's priorities, and those priorities are worth knowing.

The State of Play

Agency accountability frameworks in SEO are still in early adoption. The majority of agencies continue to report rankings, traffic, and backlink counts because those metrics are easy to generate and hard for clients to challenge. The trust verification audit described here requires work from both the agency and the client. It requires analytics infrastructure that many businesses don't have, CRM integration that many marketing teams haven't prioritized, and a willingness from both sides to look at numbers that might be uncomfortable.

But the gap between what agencies report and what businesses actually need to know about their organic search investment is widening, not shrinking. AI search interfaces are fragmenting the click path between search and conversion. Traditional ranking positions carry less predictive value for revenue than they did even two years ago. The agencies that will survive this shift are the ones building revenue-driven SEO measurement into their standard reporting, voluntarily, before clients force the issue.

The audit itself is straightforward. Three layers. Verified attribution infrastructure, three revenue-connected reports, and forward-looking AI visibility tracking. Any agency that's genuinely driving revenue for your business should be able to pass it within a quarter. If yours can't, you now have a structured, specific way to determine whether the problem is technical (fixable) or fundamental (not fixable). That distinction is worth more than another twelve months of ranking reports.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
