Google's Task Fulfillment Update: Why Traditional Ranking Metrics Miss What Search Is Becoming in 2026

Google announced new task-based search features on April 21, explicitly describing search as "a platform for navigating modern life." Then, just hours ago, Search Engine Journal published a follow-up assessment confirming that the reporting surfaces businesses rely on haven't kept pace with what search is actually doing. The mechanism underneath these changes is what the industry loosely calls "task fulfillment," and it represents a fundamental shift in what a search result is supposed to accomplish. Traditional ranking metrics measure where you appear on a page. Task fulfillment measures whether the searcher actually got their thing done. Those are different questions, and they demand different measurement systems.

How Search Became a Task Engine

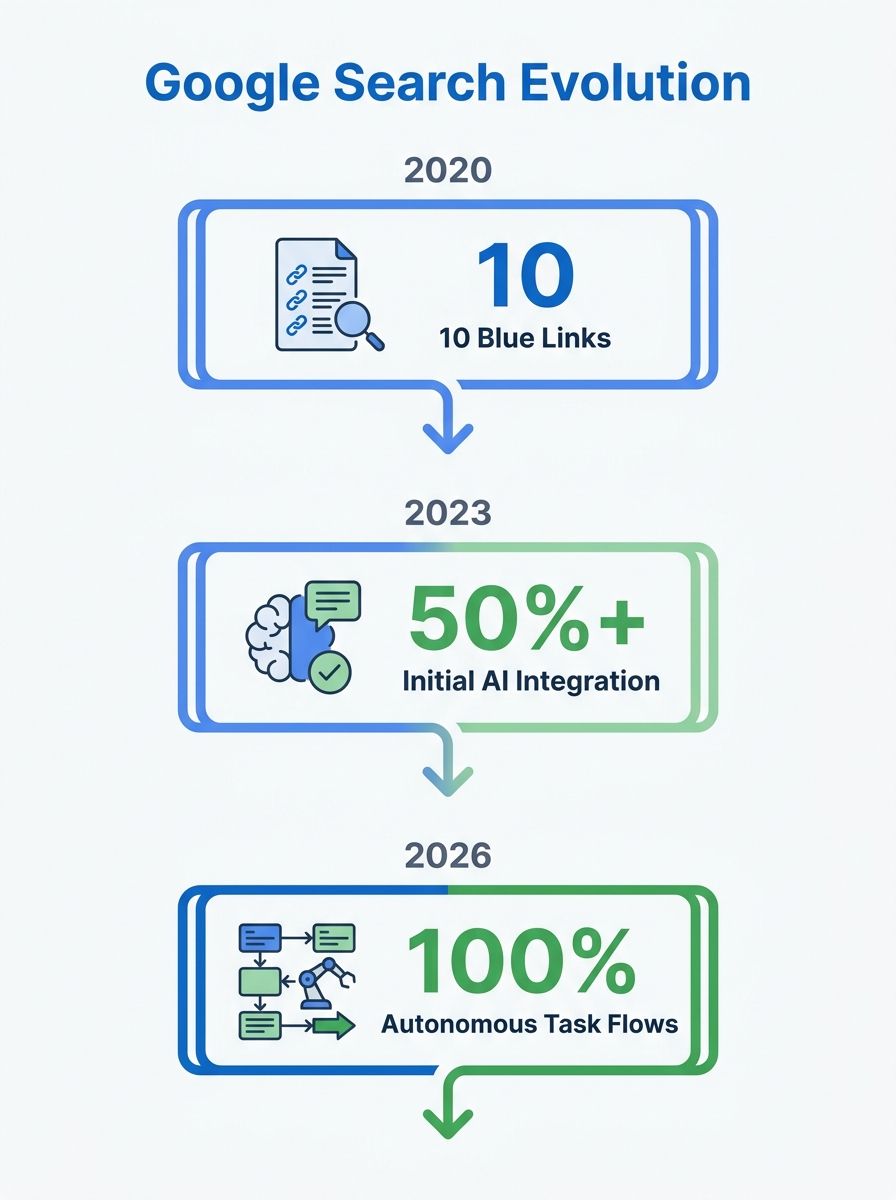

For two decades, Google operated on a link-retrieval model. You typed a query, Google returned ten blue links, you clicked one. Success was defined by the click itself. The searcher's actual outcome (did they book the appointment, compare the prices, fix the leaky faucet?) was invisible to Google's systems.

As Primotech documented this month, Google Search is actively becoming a task engine. The March 2026 Core Update, which rolled out between March 27 and April 8 (12 days and 4 hours in total), refined Google's ability to assess whether a page fully satisfies user intent, including anticipating follow-up questions. The task-based features announced on April 21 push this even further, allowing users to complete multi-step processes directly within the search interface.

The implication for anyone running or hiring an SEO agency is uncomfortable: if your monthly reports still center on keyword position tracking, you're measuring an artifact of the old model. And the gap between what those reports show and what's actually happening in search widens every week.

Five Signals Driving Google Task Completion Ranking

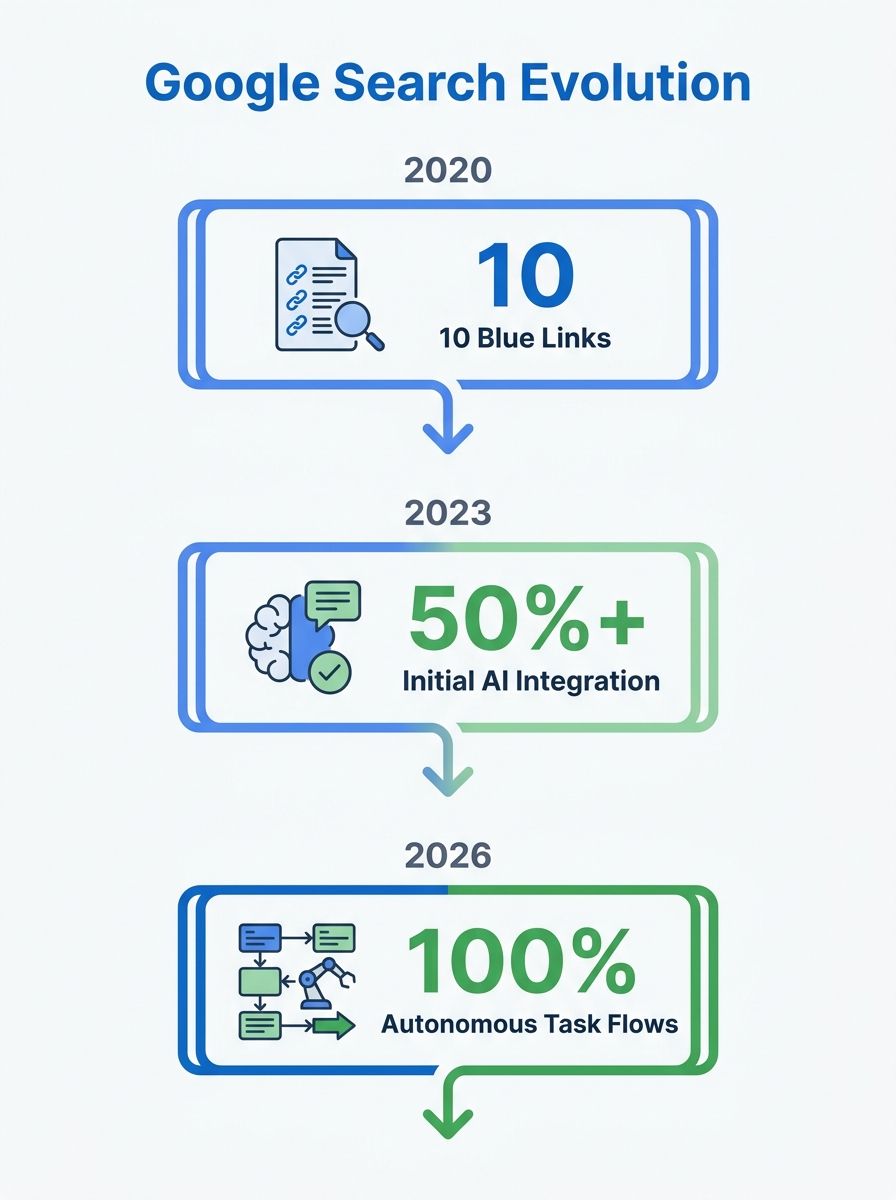

ALM Corp published research this month identifying five data-backed signals behind organic growth in 2026: products, task completion, proprietary assets, topical focus, and brand strength. That list is worth unpacking because it reveals how far the ranking model has drifted from traditional keyword-and-backlink thinking.

Task completion sits at the center of this framework. Google's systems now evaluate whether a piece of content enables the user to finish what they came to do. For an informational query, that means answering the primary question and the probable follow-ups. For a transactional query, it means providing enough information to make a purchase decision without bouncing back to the SERP.

Proprietary assets matter because original data, tools, and calculators signal to Google that your content offers something a user can't get by reading three other pages. If your agency is producing rewritten summaries of competitor content, note that the March 2026 update penalized exactly that approach through its information gain scoring.

Brand strength connects to the brand signal gap that Google's April 2026 Core Update now rewards. Google is increasingly using brand recognition as a trust proxy, especially for YMYL queries where credibility determines whether a searcher can safely act on the information.

Topical focus and product signals round out the model by rewarding depth over breadth. Sites that try to rank for everything rank for less. Sites that own a specific topic cluster and back it with real products or services are seeing disproportionate gains.

What Task Fulfillment Signals SEO Teams Should Actually Track

If rankings don't capture what's happening, what does? Moving to 2026 SEO metrics beyond rankings means retooling your measurement stack around user outcomes rather than SERP positions.

Search Engine Journal's research on engagement metrics points to one reliable indicator: long engaged sessions signal to search engines that your page satisfies user intent, which feeds back into your rankings. But session duration alone is a crude proxy. The real task fulfillment signals break down into several layers.

Completion rate by intent type

Segment your content by the intent it serves (informational, navigational, transactional, commercial investigation) and track whether users take the expected next action. For a "how to" article, completion might mean the user doesn't return to Google for the same query within 30 minutes. For a product page, it's adding to cart or clicking through to a spec sheet. If you're still building content strategies around volume rather than matching specific intent types, this segmentation will expose the mismatch quickly.
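As a rough illustration of the segmentation described above, here is a minimal sketch that computes a completion rate per intent type. The session records, the intent labels, and the `completion_rate_by_intent` helper are all hypothetical; in practice the "completed" flag would come from whatever next-action event your own analytics defines for each intent.

```python
from collections import defaultdict

# Hypothetical session records: (intent, completed_expected_action).
# Real data would come from your own analytics export.
sessions = [
    ("informational", True),
    ("informational", False),
    ("transactional", True),
    ("transactional", True),
    ("commercial", False),
]

def completion_rate_by_intent(records):
    """Return {intent: completed / total} for each intent type seen."""
    totals = defaultdict(int)
    completed = defaultdict(int)
    for intent, done in records:
        totals[intent] += 1
        if done:
            completed[intent] += 1
    return {intent: completed[intent] / totals[intent] for intent in totals}

rates = completion_rate_by_intent(sessions)
for intent, rate in sorted(rates.items()):
    print(f"{intent}: {rate:.0%}")
```

Reporting the rate per intent, rather than one blended number, is what exposes a mismatch: a site can look healthy overall while one intent type completes far less often than the others.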

Pogo-sticking and return-to-SERP rate

GoDaddy's research confirms that Google bounces its listings around as part of its algorithm to test which best meet the searcher's intent. A rank report might say you're at position #2, but when you check manually, you're at #8 or gone from page one entirely. This volatility is the mechanism in action. Google is running live experiments with your listings, and the ones where users don't return to the SERP get promoted over time.

AI citation frequency

iPullRank's framework for designing AI search metrics asks a critical question: does the LLM perceive you as a trusted source for a specific entity? With AI Overviews now appearing for a growing share of queries, whether your content gets cited in those summaries is becoming a primary visibility metric. If you're working on search intent fulfillment optimization without tracking AI citation, you're missing where a growing portion of your visibility actually lives.
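One simple way to turn AI citation into a trackable number is the share of monitored queries whose AI-generated answer cites your domain. The sketch below assumes you already collect, by whatever means, a list of cited domains per query; the queries, the domains, and the `citation_rate` function are illustrative assumptions, not part of any tool's API.

```python
def citation_rate(observations, domain):
    """Share of tracked queries whose AI answer cites `domain`.

    `observations` maps query -> list of cited domains (an empty list
    means no AI answer appeared or nothing was cited).
    """
    if not observations:
        return 0.0
    cited = sum(1 for sources in observations.values() if domain in sources)
    return cited / len(observations)

# Hypothetical observations for four tracked queries.
observations = {
    "best crm for architects": ["example.com", "othersite.com"],
    "crm pricing comparison": ["othersite.com"],
    "how to migrate crm data": [],
    "crm onboarding checklist": ["example.com"],
}

rate = citation_rate(observations, "example.com")
print(f"Cited in {rate:.0%} of tracked queries")
```

Tracked over time and reported next to organic positions, this kind of ratio shows whether visibility is moving into AI summaries even when rank reports look unchanged.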

Why Position Reports Create a False Sense of Security

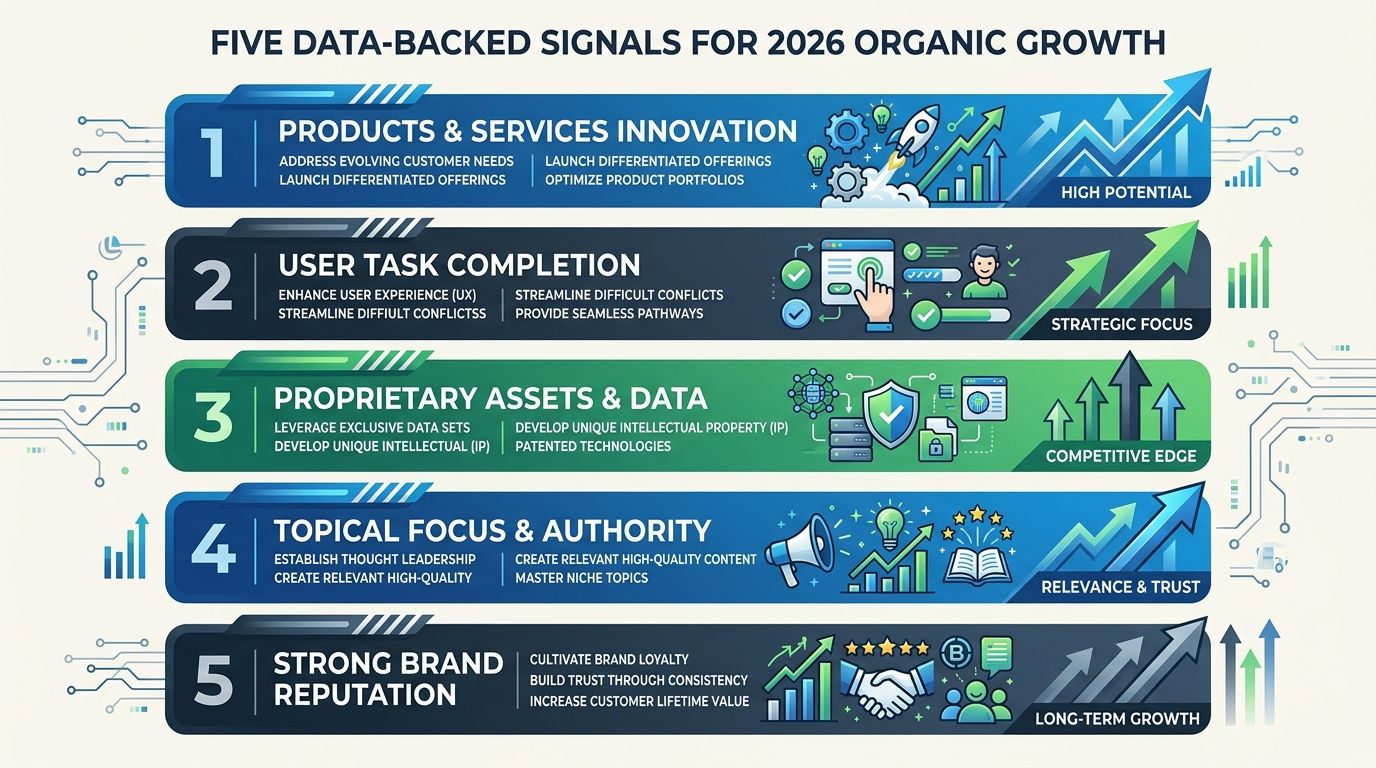

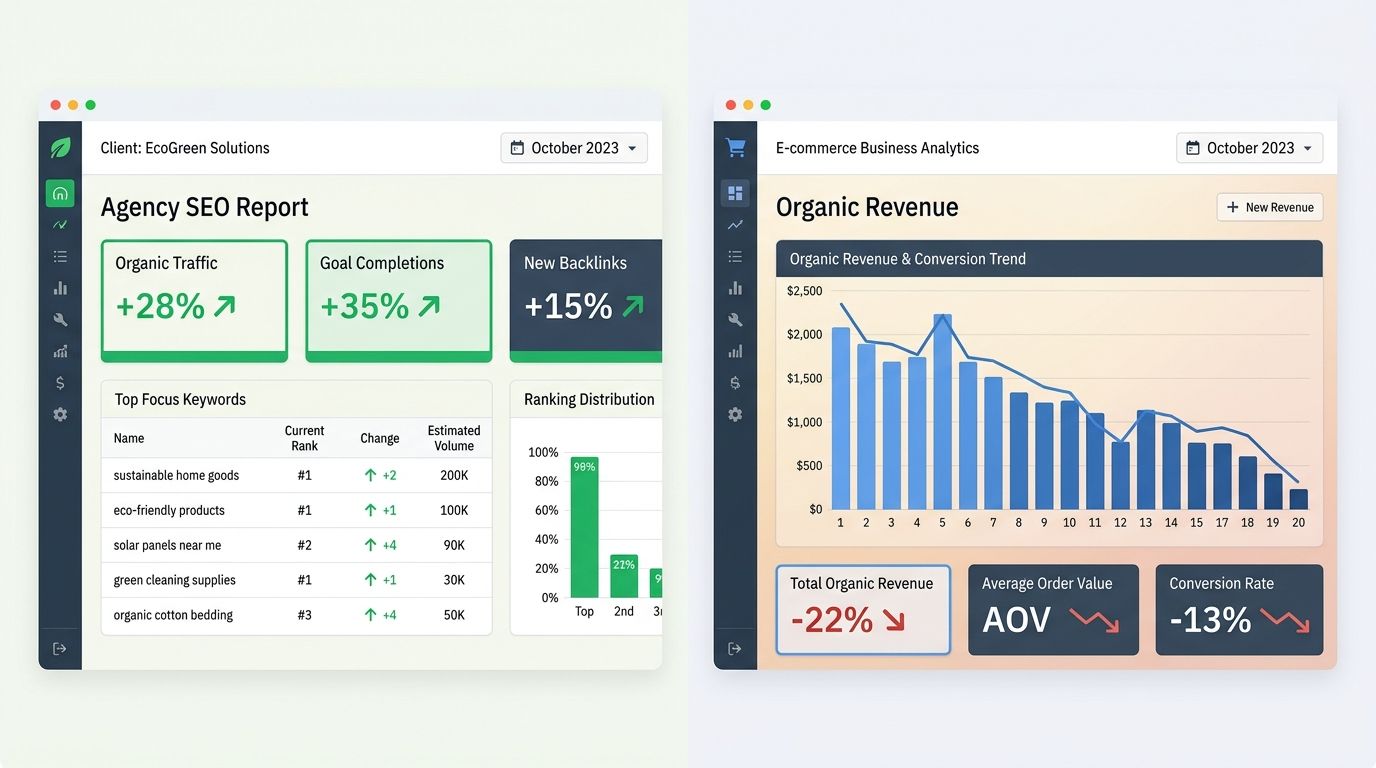

I've reviewed over 200 SEO agencies in my career, and the single most common reporting failure I encounter is this: agencies deliver beautiful rank-tracking dashboards showing steady improvement while the client's actual organic revenue declines. I wrote about this exact pattern in the context of how traditional SEO metrics fail architecture firms, but it applies across every industry now.

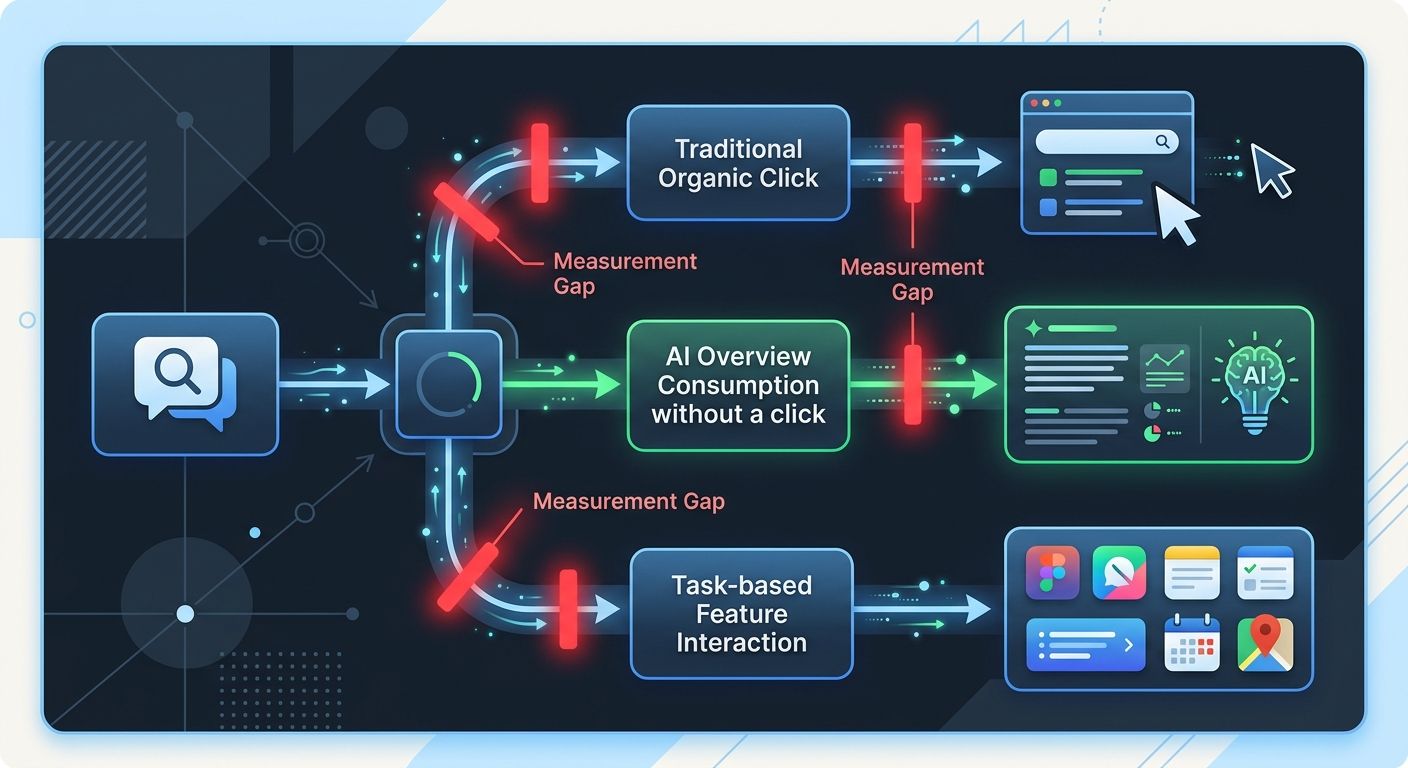

The problem is structural. Position tracking assumes a static SERP. The 2026 SERP is anything but static. Between AI Overviews, featured snippets, People Also Ask expansions, and now task-based features, a "position #1" organic result might sit below three AI-generated answer blocks and a shopping carousel. The click-through rate for that position #1 has eroded significantly compared to even two years ago.

And here's what makes this worse for agency accountability: the rank tracker still shows #1. The client still sees a green arrow. But the phone isn't ringing, form submissions are down, and nobody can explain why because the dashboard says everything is fine. If you're evaluating agencies right now, ask them specifically how they measure task fulfillment signals for SEO campaigns. Ask them what happens when a keyword they're tracking gets absorbed into an AI Overview. If they can't answer that question clearly, their reporting model is already outdated.

The Behavioral Testing Mechanism Under the Hood

Here's how this works at the systems level, based on observable ranking volatility patterns and Google's documented behavior.

Google serves a query result to a cohort of users. It then monitors behavioral signals: did the user click through? How long did they stay? Did they return to the SERP and click a different result? Did they refine their query? Did they complete a transaction or take a measurable action?

Based on those signals, Google adjusts the ranking for the next cohort. This explains the ranking volatility that SEO tools have been flagging this week, as the system constantly runs micro-experiments. The amplitude of fluctuations increases after major core updates as Google recalibrates its baseline assumptions about which content fulfills which tasks.

The March 2026 Core Update amplified this testing cycle. Analysis from WSI World confirms the update shifted visibility toward credible businesses rather than content volume. Credibility here is measured partly through task fulfillment: does the content enable the user to act, or does it inform without resolution?

This mechanism explains something that confuses a lot of SEO teams. A page can have perfect on-page optimization, strong backlinks, and high domain authority, yet lose rankings to a page that's technically weaker on all those traditional signals. The weaker page wins because users who land on it complete their task and don't come back to Google. That behavioral signal overrides the technical signals over time, which is why understanding Google task completion ranking matters more than chasing backlink counts.
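The feedback loop described in this section can be illustrated with a toy model. It is a sketch of the general idea only, not Google's actual algorithm: the starting scores, the `learning_rate`, the two URLs, and the definition of satisfaction as "clicks that did not return to the results page" are all assumptions made for the example.

```python
def update_scores(scores, cohort_stats, learning_rate=0.5):
    """Nudge each result's score toward its observed satisfaction rate.

    `cohort_stats` maps url -> (clicks, returns_to_serp). Satisfaction is
    the share of clicks that did NOT return to the results page.
    """
    new_scores = dict(scores)
    for url, (clicks, returns) in cohort_stats.items():
        if clicks == 0:
            continue  # no behavioral data, so the score stays unchanged
        satisfaction = 1 - returns / clicks
        new_scores[url] = scores[url] + learning_rate * (satisfaction - scores[url])
    return new_scores

def rank(scores):
    """Order URLs from highest to lowest score."""
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical starting point: the page with stronger traditional
# signals begins with the higher score.
scores = {"strong-links.example": 0.8, "task-solver.example": 0.6}
# Hypothetical cohort: most visitors to the first page return to the SERP.
cohort = {"strong-links.example": (100, 70), "task-solver.example": (100, 10)}

for _ in range(3):  # three successive cohorts with the same behavior
    scores = update_scores(scores, cohort)

print(rank(scores))
```

In this toy setup the page that starts lower overtakes the other once behavioral data accumulates. Note that a page with zero clicks never has its score updated at all.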

How AI Overviews Accelerate the Shift

AI Overviews deserve separate attention because they represent Google's most aggressive move toward task completion within the SERP itself. When Google generates an AI Overview for a query, it's attempting to fulfill the task without requiring a click at all. We've been tracking how AI Overviews reshape visibility for local businesses, and the pattern holds for every vertical.

For businesses, this creates a measurement blind spot. You can't track impressions or clicks for traffic that never reaches your site because Google answered the question directly. Traditional SEO reporting has no line item for "queries where our content was synthesized into an AI answer and the user never visited us." Yet that interaction may still build brand awareness and trust, and it may influence the user's eventual purchase decision through a different channel.

The agencies that have adapted are tracking AI Overview inclusion as a distinct metric, separate from organic rankings. They're monitoring whether their client's content gets cited as a source within the AI-generated answer. And they're measuring downstream brand search volume as an indicator of whether AI Overview exposure translates into direct visits later.
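A minimal sketch of that last comparison, assuming you keep a weekly log of whether the client was cited in an AI Overview alongside branded search volume. The figures and the `brand_lift` helper are hypothetical, and the result is a correlation to investigate further, not proof that citations caused the difference.

```python
from statistics import mean

# Hypothetical weekly log: (cited_in_ai_overview, branded_search_volume).
weekly = [(True, 120), (False, 90), (True, 130), (False, 100), (True, 110)]

def brand_lift(log):
    """Relative difference in mean branded volume, cited vs. uncited weeks.

    Returns None when either group is empty, since no comparison is possible.
    """
    cited = [volume for was_cited, volume in log if was_cited]
    uncited = [volume for was_cited, volume in log if not was_cited]
    if not cited or not uncited:
        return None
    return mean(cited) / mean(uncited) - 1

lift = brand_lift(weekly)
print(f"Branded search lift in cited weeks: {lift:.1%}")
```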

The agencies that haven't adapted are still sending rank reports. The gap between what they measure and what matters widens with every update.

Where the Reporting Infrastructure Falls Short

RS Web Solutions reported this month that no major SEO platform has articulated a clear approach to measuring task fulfillment interactions. Until that changes, businesses will be optimizing for interactions they cannot monitor. Further signals are expected at Google I/O and Microsoft Build over the next three weeks, which may finally give the tool ecosystem something concrete to build on.

This infrastructure gap is the real problem. The conceptual framework exists. We understand that task fulfillment signals in SEO favor content resolving the user's need. We know the behavioral signals Google tracks. But the tools most agencies use were built for position tracking, and they haven't caught up. Even the routine SEO tasks suitable for automation still center on rank tracking and reporting. An agency that has automated its reporting but hasn't rethought what it reports is efficient at producing the wrong information.

Where the Model Breaks

Task fulfillment as a ranking mechanism has real limitations, and anyone selling it as a clean replacement for traditional metrics is oversimplifying.

The first limitation: task fulfillment is extremely difficult to measure for informational queries with no clear conversion event. If someone searches "why do leaves change color," how does Google determine whether the task was fulfilled? Session duration is a weak proxy. Lack of pogo-sticking is slightly better. Neither captures whether the user actually learned what they needed. Google is inferring satisfaction from behavioral signals that are, at best, correlated with actual task completion.

The second limitation: the mechanism creates a rich-get-richer dynamic. Pages that already receive traffic generate behavioral signals that Google uses to refine rankings. Pages that don't receive traffic can't generate those signals. New content from new sites faces a cold-start problem that traditional link-based ranking at least partially addressed through crawling and indexing.

The third limitation: task fulfillment optimization can conflict with business goals. Google wants the user's task completed as quickly as possible, ideally within the SERP. Your business wants the user on your website, engaging with your brand, entering your funnel. These objectives are increasingly misaligned, and no amount of search intent fulfillment optimization resolves that fundamental tension.

The model also struggles at the edges of personalization. Task fulfillment for one user (a quick answer) can be task failure for another user on the same query (who needed depth). Google attempts to segment these users through search history and behavior patterns, but the segmentation is imperfect. The ranking volatility we're seeing may partly reflect the system struggling to serve multiple user types from a single ranked list.

None of this means the direction is wrong. Google is moving toward outcome-based ranking, the tools will eventually follow, and the agencies that adapt their measurement frameworks around the I/O and Build announcements this May will serve their clients better than those clinging to position reports. But treating task fulfillment as a solved, complete model rather than an evolving, imperfect mechanism will produce its own category of expensive strategic mistakes.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
