The Agency Selection Data Trap: Why Performance Metrics Alone Won't Predict Your SEO Success

Every agency pitch deck I've reviewed across 200+ evaluations follows the same choreography. Slide seven (it's always slide seven, give or take) shows a hockey-stick traffic graph. Slide nine displays keyword ranking tables with green arrows pointing upward. Slide twelve lands on the domain authority score, bold and oversized, as if that single number should close the deal. And if you're evaluating SEO providers based on those slides alone, you're making the same mistake that lands companies in twelve-month contracts with agencies that look spectacular on paper and deliver nothing in practice.

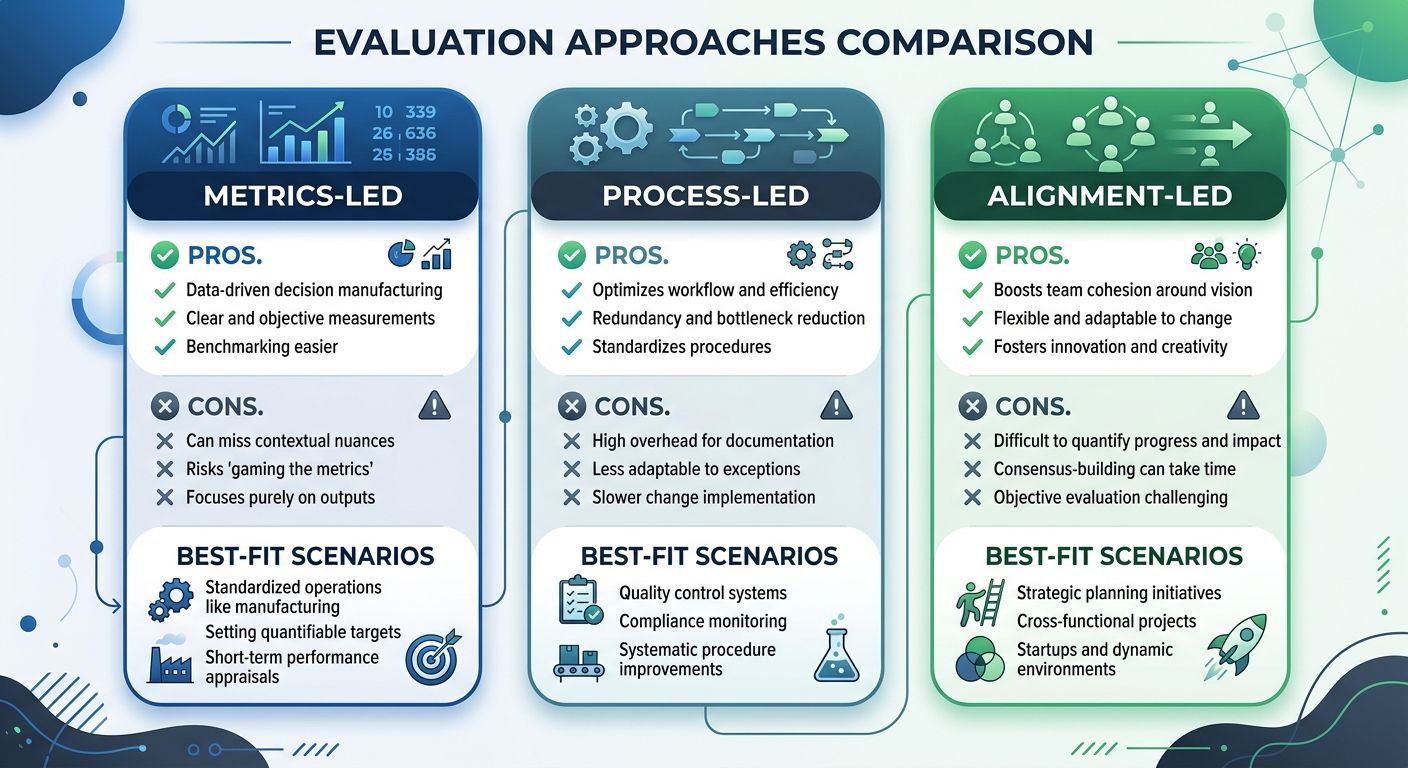

The SEO agency selection criteria that most companies rely on create what I call the data trap: an over-reliance on backward-looking performance metrics that tell you what an agency has done for someone else, in a different market, under different conditions, without revealing whether they can do anything meaningful for you. Three distinct evaluation approaches exist for vetting SEO agencies, and each carries specific tradeoffs worth understanding before you commit budget and trust to a partner.

Evaluating by the Dashboard

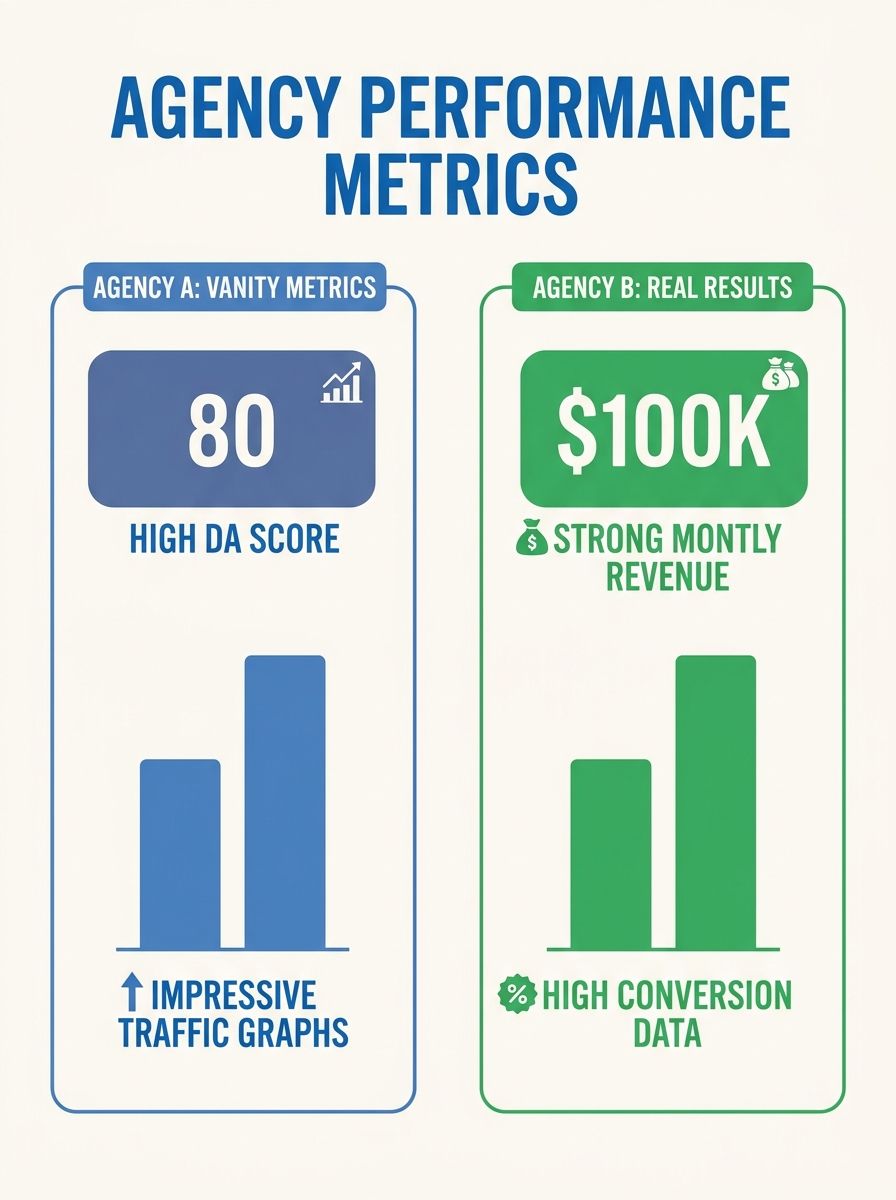

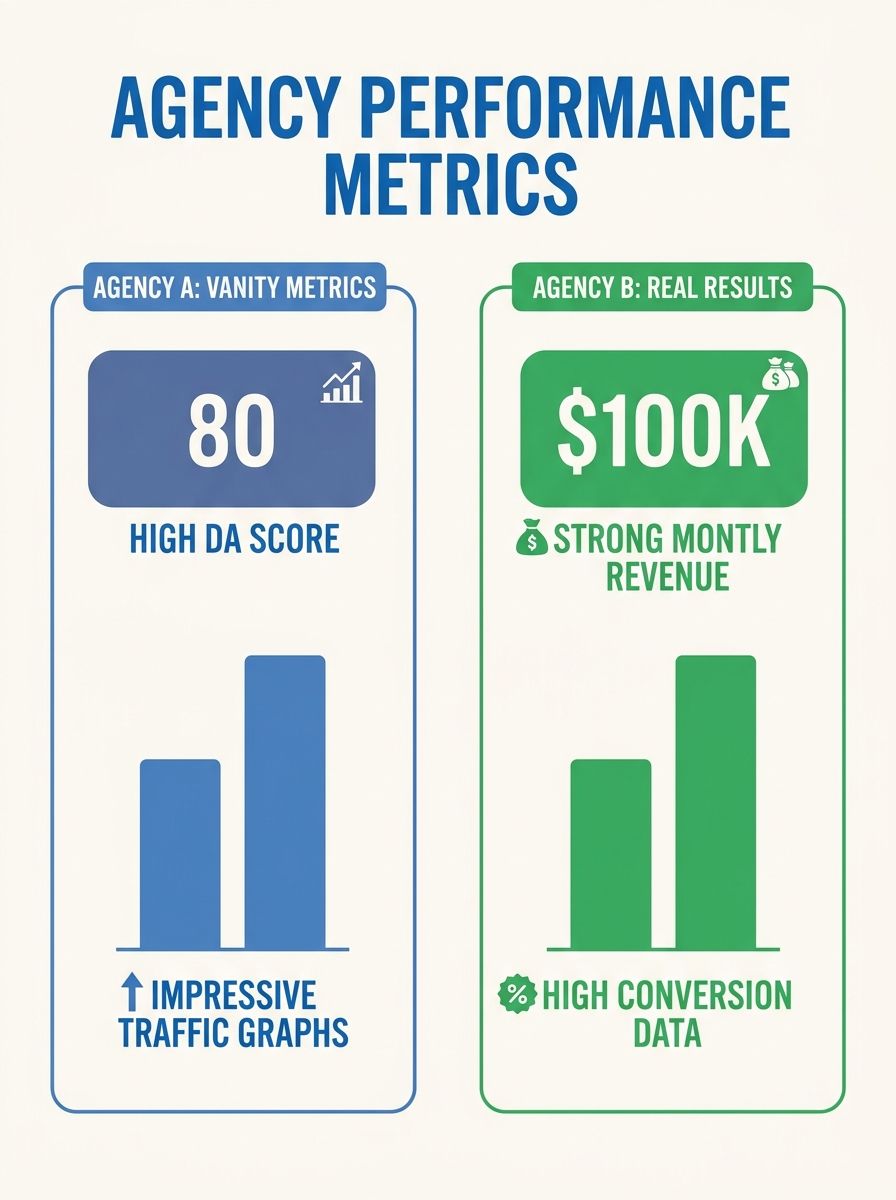

The most common approach to agency selection is metrics-led: you ask for case studies, review traffic growth percentages, examine keyword ranking improvements, and compare domain authority scores across your shortlist. This approach feels rigorous. It looks like due diligence. It has numbers, after all.

And it works well enough for eliminating obvious bad actors. An agency that can't show any measurable results for any client is probably not worth your time. But the approach breaks down quickly once you move past that baseline filter.

Here's why. Domain Authority and Domain Rating are proprietary scores created by Moz and Ahrefs, respectively. Google doesn't use them. A site with a DA of 35 can outrank a DA 65 competitor if its content better matches search intent, and this happens constantly. Yet I've watched procurement teams disqualify agencies because their client portfolios didn't include enough high-DA domains. As Digital Authority Partners notes in their analysis, keyword rankings and DA are among the most overweighted metrics in agency evaluation, and they frequently mislead decision-makers.

Traffic growth percentages have a similar problem. An agency can show you a 200% organic traffic increase for a client, but that number is meaningless unless you know how much of that traffic actually converted. I reviewed an agency proposal where the flagship case study showed massive traffic growth for an HVAC company, but when I dug into the details, the growth came entirely from informational blog posts targeting "how does a furnace work" and similar queries. Revenue from organic traffic was flat. The agency wasn't lying about the traffic. They were lying by omission about what the traffic was worth.
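One way to run this check on any case study is to put traffic growth next to organic revenue growth for the same period. The Python sketch below is a minimal illustration under my own assumptions: the `OrganicSnapshot` fields, the function names, and the sample figures are hypothetical and are not taken from the HVAC proposal.

```python
from dataclasses import dataclass


@dataclass
class OrganicSnapshot:
    """Organic-channel totals for one reporting period (hypothetical fields)."""
    sessions: int
    revenue: float


def growth(before: float, after: float) -> float:
    """Fractional change from before to after; 2.0 means +200%."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (after - before) / before


def traffic_quality_check(before: OrganicSnapshot, after: OrganicSnapshot) -> dict:
    """Compare traffic growth with revenue growth over the same period."""
    return {
        "traffic_growth": growth(before.sessions, after.sessions),
        "revenue_growth": growth(before.revenue, after.revenue),
        "revenue_per_session_before": before.revenue / before.sessions,
        "revenue_per_session_after": after.revenue / after.sessions,
    }


# Illustrative numbers only: sessions triple while organic revenue stays flat.
before = OrganicSnapshot(sessions=10_000, revenue=50_000.0)
after = OrganicSnapshot(sessions=30_000, revenue=50_000.0)
report = traffic_quality_check(before, after)

for name, value in report.items():
    print(f"{name}: {value:.2f}")
```

If an agency can supply the two revenue figures, a large gap between `traffic_growth` and `revenue_growth` is the pattern described above. If they can't supply them, that tells you something too.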

The metrics-led approach also struggles with the zero-click reality of modern search. AI Overviews now appear in roughly 47% of Google searches, and about 60% of all searches end without a click to any website. An agency can technically rank you in position one for a high-value term, but if an AI Overview answers the query before anyone scrolls to organic results, that ranking delivers a fraction of the value it would have delivered two years ago. Traditional reporting dashboards rarely account for this shift.

When the metrics-led approach works best: screening out agencies with no track record at all, or comparing agencies within the same niche where you can normalize results. If you're evaluating five agencies that all serve SaaS companies, and one consistently drives higher conversion rates from organic traffic across multiple clients, that signal has real weight.

Where it falls apart: any situation where you're comparing across different industries, different company sizes, or different competitive landscapes. The numbers simply aren't apples-to-apples.

When Methodology Tells You More Than Any Report

The second approach flips the evaluation entirely. Instead of asking "what results have you gotten?" you ask "how do you do the work?" This process-led approach examines the agency's frameworks, workflows, strategic thinking, and adaptability.

I've become increasingly convinced that this approach, while harder to execute, produces better hiring outcomes. The reasoning is straightforward: results are partially a function of the client's existing authority, budget, competitive landscape, and internal responsiveness. Methodology is the variable the agency actually controls.

A strong agency vetting methodology here involves asking pointed questions about how the agency structures its approach. According to Kreativ Street's 2026 agency selection guide, the right question to ask is: "Can you show us how you structure content for our ICP's decision-making process?" Their research found that quality SEO agencies build intent pyramids rather than keyword lists, prioritizing how their content maps to buyer decision stages over raw keyword volume.

Here's what I look for when evaluating an agency's methodology:

Intent mapping over keyword counting. Does the agency organize work around what your buyers are trying to accomplish, or do they hand you a list of 500 keywords sorted by volume? The difference matters enormously. An agency that structures content around decision-making stages will drive revenue. An agency that chases keyword volume will drive traffic that may never convert.

AI visibility strategy. With 700 million weekly ChatGPT users performing search-like queries and 94% of B2B buyers using LLMs during their buying process, any agency that doesn't have a clear approach to visibility in AI-generated answers is working with an incomplete playbook. Ask whether they track brand mentions across AI platforms and how they structure content to earn citations in AI Overviews.

Governance and measurement alignment. As Siteimprove's enterprise guide highlights, half of enterprise SEO problems stem from teams using different definitions for the same metrics. Marketing calls something a conversion while sales calls it a lead. If an agency can't articulate how they'll align their reporting definitions with your internal teams, you'll spend months arguing about whether the engagement is actually working.

Technical audit depth. Ask to see a redacted audit from a previous client engagement. You'll quickly learn whether the agency produces surface-level site health scores or digs into crawl architecture, rendering issues, and structured data implementation. If you've dealt with debugging invisible ranking losses, you know the difference between shallow and deep technical work.

When the process-led approach works best: complex engagements where the agency needs to integrate with your internal teams, enterprise environments with multiple stakeholders, or situations where you've been burned by a previous agency that delivered impressive reports but unclear business impact.

Where it falls apart: the approach requires you to have enough SEO knowledge to evaluate whether a methodology is actually sound. A persuasive presenter can make a mediocre framework sound brilliant. This is where the third approach fills the gap.

Alignment Factors That Never Make It Into the RFP

The third approach focuses on organizational fit: how well the agency aligns with your industry, your internal workflows, your communication expectations, and your risk tolerance. I've seen technically excellent agencies fail spectacularly because they operated at a tempo that didn't match their client's decision-making speed, or because they had no experience navigating the regulatory constraints of a specific vertical.

Industry experience is an obvious alignment factor, but it goes deeper than most procurement teams realize. An agency that's worked with healthcare clients understands HIPAA content constraints, the sensitivity of YMYL (Your Money or Your Life) signals in medical content, and the particular E-E-A-T standards Google applies to health information. Hiring a generalist agency for a healthcare practice means paying them to learn your industry on your budget. There's a reason healthcare practices need specialized criteria when evaluating potential SEO partners.

The same principle applies to e-commerce, where catalog-scale technical SEO, structured data for product pages, and seasonal optimization cycles create a different operational rhythm than B2B lead generation. ZealousWeb's provider evaluation framework emphasizes that expertise in your specific industry ensures a tailored approach, and that balancing cost and quality means avoiding extremely cheap services that will inevitably cut corners in areas you can't afford to compromise.

Beyond industry fit, here are the alignment factors I always investigate:

Communication cadence and escalation paths. How frequently does the agency report? Who's your day-to-day contact, and what's their seniority level? I've watched agencies assign senior strategists during the pitch and hand the account to a junior coordinator after the contract is signed. Get the names of the people who'll actually work on your account, written into the contract.

Contract structure and exit terms. Pricing for SEO services varies dramatically. Monthly retainers for mid-market agencies typically range from $3,000 to $10,000, while enterprise engagements can run $15,000 to $50,000 or higher. But the real trap is in contract duration and exit clauses. A twelve-month contract with no performance review at the six-month mark is a red flag. Performance-based models where compensation ties partially to conversion metrics or assisted revenue can guard against misaligned incentives, but they come with their own complexity around attribution.

Rate transparency and vendor relationships. Some agencies prefer to place work with tools, freelancers, or content vendors they have friendly rate relationships with, and will prioritize those over what's the best fit for your project. As one vetting guide warns, beware of agencies that don't allow direct communication between you and their subcontractors, and always ask for a breakdown of gross and net costs.

How they handle algorithm volatility. Google's algorithm changes are constant, and an agency's reaction to ranking drops tells you more about their quality than their reaction to ranking gains. Do they have a documented process for diagnosing and responding to updates? If you've tracked the history of Google's algorithm changes, you know that agencies need structured response protocols, not just optimism.
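To put the contract-structure point above into numbers, the sketch below computes how much spend you are locked into before your first chance to walk away. The retainer figures are the mid-market range quoted above. The function name is hypothetical, and the sketch assumes that a six-month performance review comes with a real right to exit, which you should confirm in the contract language.

```python
def committed_spend(monthly_retainer: float, months_until_first_exit: int) -> float:
    """Total spend you are obligated to before the first exit opportunity."""
    if months_until_first_exit < 0:
        raise ValueError("months_until_first_exit cannot be negative")
    return monthly_retainer * months_until_first_exit


# Mid-market monthly retainer range quoted in the text above.
MID_MARKET_RETAINERS = (3_000, 10_000)

exposure = {}
for retainer in MID_MARKET_RETAINERS:
    exposure[retainer] = {
        # Twelve-month contract with no review: locked in for the full term.
        "no_review": committed_spend(retainer, 12),
        # Assumption: the six-month review includes an exit clause.
        "six_month_review": committed_spend(retainer, 6),
    }

for retainer, figures in exposure.items():
    print(retainer, figures)
```

At the quoted range, a six-month review point halves the locked-in spend, from $36,000 to $18,000 at the low end and from $120,000 to $60,000 at the high end.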

When the alignment-led approach works best: regulated industries, companies that have fired a previous agency, and situations where internal team capacity to manage the agency relationship is limited. The better the alignment, the less management overhead you'll carry.

Where it falls apart: overweighting cultural fit can lead you to hire an agency you enjoy working with but that lacks the technical depth to move the needle. Comfort isn't a KPI.

How To Choose Between These Three

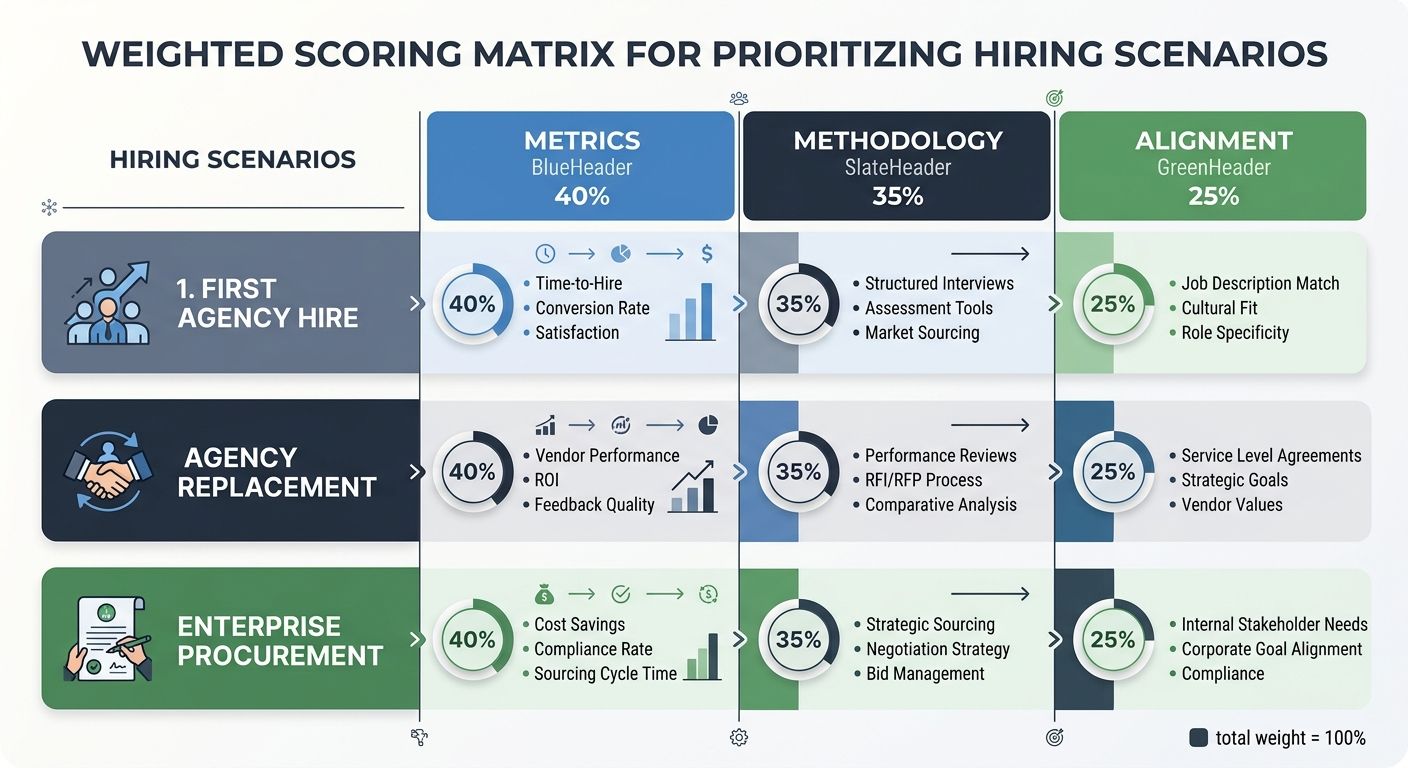

The honest answer is that no single approach is sufficient, and the right blend depends on your situation. But after evaluating over 200 agencies across my career, I've settled on a weighted model that I recommend to every client.

For companies hiring their first SEO agency: weight alignment at 50%, methodology at 35%, and metrics at 15%. You probably don't have enough SEO sophistication to fully evaluate methodology, and you're most at risk of a mismatch that wastes your first six months on onboarding friction. Start with agencies that know your vertical and can demonstrate clear communication practices. Use metrics only as a baseline screen to eliminate agencies with no credible case studies at all.

For companies replacing a previous agency: weight methodology at 50%, alignment at 30%, and metrics at 20%. You've already learned what a bad fit feels like, and you likely have clearer requirements now. Dig deep into how the new agency does its work differently from your previous partner. Effective vetting methodology for enterprise agencies prioritizes framework evaluation over portfolio review for exactly this reason.

For enterprise organizations running formal procurement: weight methodology at 40%, metrics at 35%, and alignment at 25%. Enterprise procurement teams typically have the internal expertise to evaluate frameworks, and the scale of engagement justifies demanding detailed case studies with verifiable revenue impact. Alignment matters less because enterprise engagements usually involve enough contractual structure to force communication standards.
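The three weightings above translate directly into a simple scorecard. Here is a minimal Python sketch: the weights come from the scenarios just described, while the profile keys, the 0-10 rating scale, and the two example agencies with their ratings are hypothetical placeholders for your own shortlist.

```python
# Weights from the three scenarios above; each profile sums to 1.0.
WEIGHTS = {
    "first_agency": {"alignment": 0.50, "methodology": 0.35, "metrics": 0.15},
    "replacing_agency": {"methodology": 0.50, "alignment": 0.30, "metrics": 0.20},
    "enterprise_procurement": {"methodology": 0.40, "metrics": 0.35, "alignment": 0.25},
}


def weighted_score(scores: dict, profile: str) -> float:
    """Combine per-dimension ratings into one score for the chosen profile."""
    weights = WEIGHTS[profile]
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[dim] * scores[dim] for dim in weights)


# Hypothetical ratings on a 0-10 scale, for illustration only.
agencies = {
    "Agency A": {"metrics": 9, "methodology": 5, "alignment": 4},
    "Agency B": {"metrics": 6, "methodology": 8, "alignment": 8},
}

profile = "first_agency"
ranking = sorted(
    agencies,
    key=lambda name: weighted_score(agencies[name], profile),
    reverse=True,
)

for name in ranking:
    print(f"{name}: {weighted_score(agencies[name], profile):.2f}")
```

In this made-up example, Agency A has the stronger dashboard but ranks below Agency B once alignment and methodology carry most of the weight. The score is only as good as the ratings you feed it, so treat it as a way to make your judgment explicit, not as a substitute for it.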

The through line across all three scenarios is this: performance metrics are the loudest signal in agency selection, but they're also the most easily manipulated and the least predictive of your specific outcomes. An agency can show you stunning numbers from a client engagement where the real driver was a domain migration done well, or a technical fix that unlocked crawling for thousands of orphaned pages, or simply a brand that already had strong authority and needed only basic optimization to rank. None of those outcomes transfer to your situation unless the underlying conditions match.

Hidden agency evaluation factors like communication quality, contract flexibility, industry-specific knowledge, and adaptability to AI-driven search changes are harder to assess but more predictive of long-term success. The agencies worth hiring are the ones that welcome these deeper questions. The ones that deflect them and redirect you back to their traffic graphs are telling you something important about how the relationship will work once you've signed.

Build your scorecard, weight it for your specific situation, and resist the pull of the prettiest dashboard. The data trap closes when you remember that the point of hiring an SEO agency was never to accumulate metrics. It was to grow your business.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.