Synthetic Content Is Poisoning AI Search Results: What This Means for Your SEO Strategy in 2026



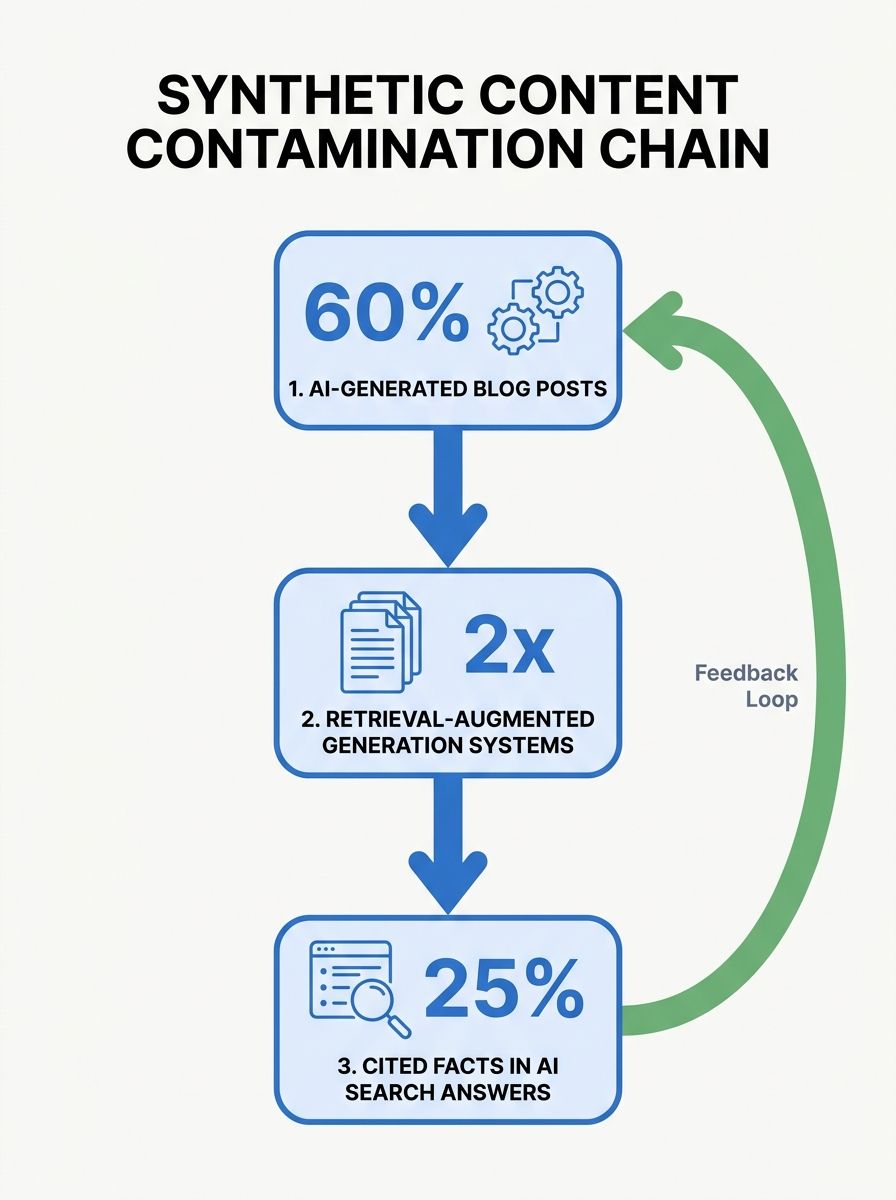

BBC journalist Thomas Germain invented a fictional event called the "2026 South Dakota International Hot Dog Championship" and published it in a satirical blog post this past February. Within 24 hours, both Google's AI Overviews and ChatGPT were citing the fake championship as fact. Around the same time, Perplexity AI confidently reported a "September 2025 Perspective Core Algorithm Update" that never happened, sourced from SEO agency blog posts that had themselves hallucinated the update. And this week, Search Engine Journal published a piece with a headline that should make every agency operator pause: "AI Search Is Eating Itself & The SEO Industry Is The Source."

The SEO impact of synthetic content has moved from a theoretical concern to documented, measurable contamination of the retrieval layer that powers answer engines. Google's March 2026 core update, which finished rolling out April 8, caused 79.5% of top-3 results to shift and dropped over 24% of top-10 pages entirely out of the top 100. The spam update that preceded it, completing in under 20 hours on March 24-25, specifically targeted scaled content abuse. If you're running an SEO practice or managing organic visibility for clients, the operating environment just changed. These seven rules are how I'm advising every client and agency partner right now.
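If you track your own keywords, you can sketch comparable volatility numbers from two rank-tracking snapshots taken before and after an update. The Python below is a minimal illustration, not the methodology behind the 79.5% and 24% figures: the source doesn't say how those datasets define a "shift," so here a top-3 shift means any change in the ordered top three URLs, and a dropout is a pre-update top-10 URL missing from the post-update top 100. The snapshot shape (a dict mapping each keyword to its ordered URL list) is a hypothetical export format.

```python
# Hypothetical snapshot shape: keyword -> URLs in ranking order (position 1 first).
Snapshot = dict[str, list[str]]


def top3_shift_rate(before: Snapshot, after: Snapshot) -> float:
    """Share of keywords whose ordered top-3 URLs changed between snapshots."""
    keywords = [k for k in before if k in after]
    if not keywords:
        return 0.0
    changed = sum(1 for k in keywords if before[k][:3] != after[k][:3])
    return changed / len(keywords)


def top10_dropout_rate(before: Snapshot, after: Snapshot) -> float:
    """Share of pre-update top-10 (keyword, URL) pairs missing from the post-update top 100."""
    pairs = [(k, url) for k in before if k in after for url in before[k][:10]]
    if not pairs:
        return 0.0
    dropped = sum(1 for k, url in pairs if url not in after[k][:100])
    return dropped / len(pairs)


before = {"crm software": ["a.com", "b.com", "c.com", "d.com"],
          "crm pricing": ["x.com", "y.com", "z.com"]}
after = {"crm software": ["b.com", "a.com", "c.com"],
         "crm pricing": ["x.com", "y.com", "z.com"]}
print(top3_shift_rate(before, after))     # 0.5: one of two keywords reordered
print(top10_dropout_rate(before, after))  # d.com fell out: 1 of 7 pairs
```

Counting a reorder as a shift is a deliberately strict choice; if you only care about new entrants, compare `set()`s of the top three instead.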

Audit every published page as if an AI will cite it tomorrow

The old content audit looked at traffic, bounce rates, and conversion paths. That framework is incomplete now. Every page on your site is a potential source for AI retrieval systems, and if any of those pages contain thin, templated, or inaccurate information, answer engines may surface that content as authoritative answers attributed to your domain.

I've seen this hit real clients in embarrassing ways. An agency I evaluated earlier this year had a law firm client whose AI-generated FAQ pages contained subtly incorrect legal definitions. Those definitions started appearing in Perplexity answers, attributed to the firm. The managing partner found out when a colleague asked why his firm was publishing wrong information. The pages had been live for months with nobody reviewing them.

The practical step: pull up every page that ranks for informational queries and read it as if an AI system will quote it verbatim. Because that's increasingly what happens. According to an Ahrefs analysis of 600,000 pages, AI-generated content itself won't tank your rankings. But inaccurate AI-generated content that gets amplified by answer engines will damage your brand's credibility in ways that no ranking recovery can fix.

This rule breaks when you have a small, tightly controlled site with fewer than 50 pages, all manually written. In that case, periodic review is enough. But if you've been publishing at scale, you need a page-by-page audit within the next 30 days.
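To keep a 30-day audit manageable, it helps to build an explicit review queue. The sketch below assumes a hypothetical `Page` record assembled from your rank tracker and CMS (the field names are illustrative, not any tool's schema), uses an arbitrary 180-day cutoff for "recently read by a human," and encodes the under-50-pages exception described above.

```python
import math
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Page:
    url: str
    ranks_for_informational: bool      # hypothetical flag from your rank-tracking export
    monthly_clicks: int
    last_human_review: Optional[date]  # None means no person has ever read it


def needs_full_audit(total_pages: int, all_manually_written: bool) -> bool:
    """Small, fully hand-written sites (< 50 pages) can rely on periodic review instead."""
    return not (total_pages < 50 and all_manually_written)


def build_audit_queue(pages: list[Page], today: date, max_age_days: int = 180) -> list[Page]:
    """Informational pages with no recent human review, highest traffic first."""
    cutoff = today - timedelta(days=max_age_days)
    due = [
        p for p in pages
        if p.ranks_for_informational
        and (p.last_human_review is None or p.last_human_review < cutoff)
    ]
    return sorted(due, key=lambda p: p.monthly_clicks, reverse=True)


def reviews_per_day(queue_length: int, deadline_days: int = 30) -> int:
    """Daily quota needed to clear the queue within the deadline."""
    return math.ceil(queue_length / deadline_days)


pages = [
    Page("/what-is-probate", True, 1200, None),
    Page("/pricing", False, 900, None),
    Page("/statute-of-limitations", True, 400, date(2025, 6, 1)),
    Page("/faq/wills", True, 300, date(2026, 4, 1)),
]
queue = build_audit_queue(pages, today=date(2026, 4, 20))
print([p.url for p in queue])  # ['/what-is-probate', '/statute-of-limitations']
```

Sorting by clicks puts the pages an answer engine is most likely to quote at the front of the queue, so the riskiest errors get read first.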

Treat AI search quality degradation as your competitive opportunity

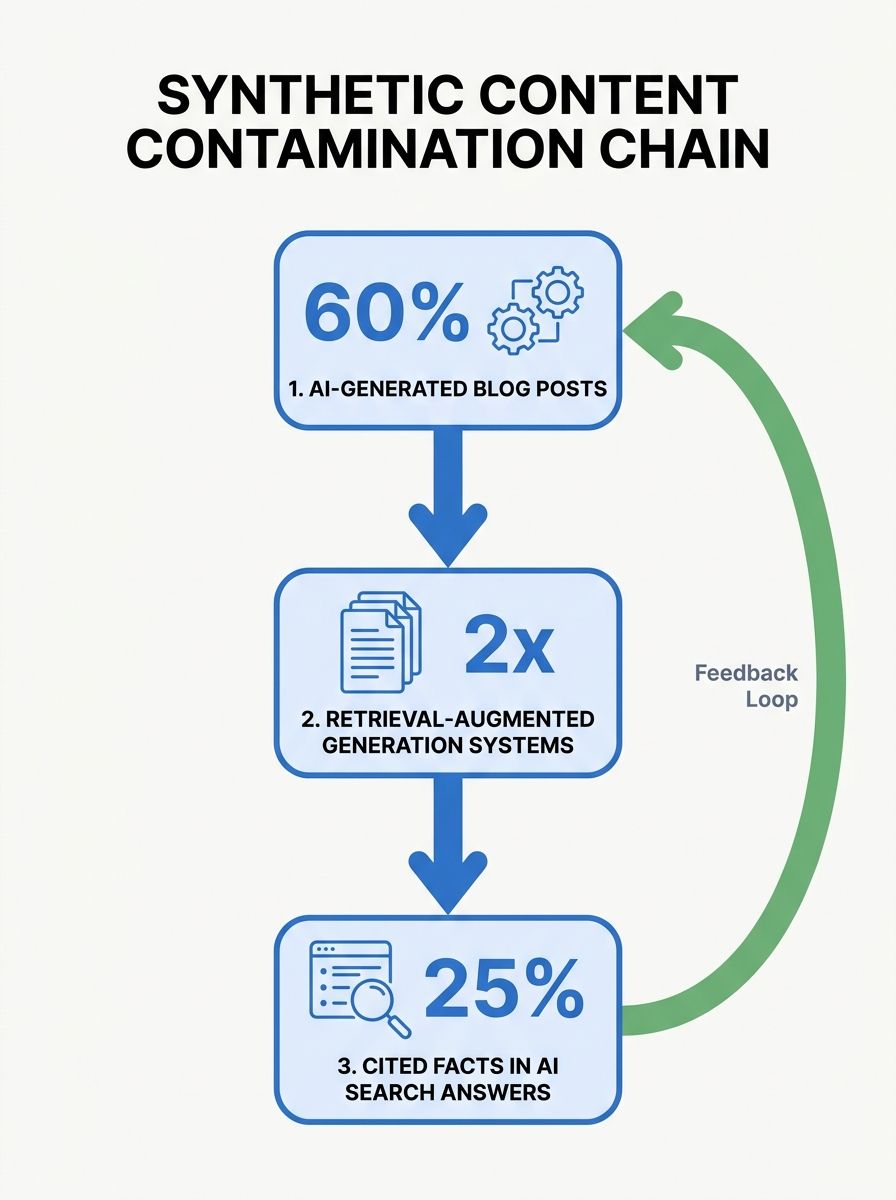

When answer engines serve contaminated results, the brands that maintain rigorous editorial standards gain disproportionate trust. Google's own guidance on AI-generated content states that their systems look to surface high-quality information from reliable sources regardless of how content is produced. The emphasis is on reliability, and reliability has become scarce.

The Oumi analysis commissioned by The New York Times tested 4,326 simple questions across Google's AI systems. Gemini 3 answered 91% correctly, up from 85% with Gemini 2. But citation integrity moved in the opposite direction: 56% of Gemini 3's answers were ungrounded, meaning the cited sources didn't actually support the claims. The system got better at sounding right while getting worse at being verifiably right.

This creates an opening for sites that can demonstrate verifiable accuracy. I'm telling every agency I work with to treat E-E-A-T signals as if they're worth three times what they were worth 12 months ago. Author bios with real credentials. Case studies with named clients (where permitted). Original data and methodology descriptions. These are the signals that AI retrieval systems are increasingly weighting, and the signals that synthetic content farms can't replicate at scale. If you've been working through how generative AI search intersects with answer engine optimization, this shift makes those frameworks even more urgent.

Stop publishing content that an LLM could generate identically

Here's the test I use with clients: take your latest blog post, strip the byline and branding, and ask yourself whether a prompt like "write a 1,500-word guide on [your topic]" would produce something structurally identical. If the answer is yes, that content has zero defensibility against AI search quality degradation.

The Semrush data study on AI content rankings found that 87% of SEO teams report their content is either fully created by humans or heavily human-led. But "human-led" covers a wide spectrum, from genuine expertise poured into original analysis to a human clicking "publish" on a lightly edited ChatGPT draft. The March 2026 core update made the distinction between those two scenarios financially painful for sites that fell on the wrong side.

This rule has a nuance worth respecting: AI tools remain excellent for research assistance, structural editing, grammar checking, and ideation. As the Ahrefs study noted, penalizing all AI involvement would be absurd when even Google Docs has built-in AI. The line is between using AI as a tool in a human-driven process and using AI as the process itself. Agency teams who've thought carefully about content production standardization already understand this distinction.

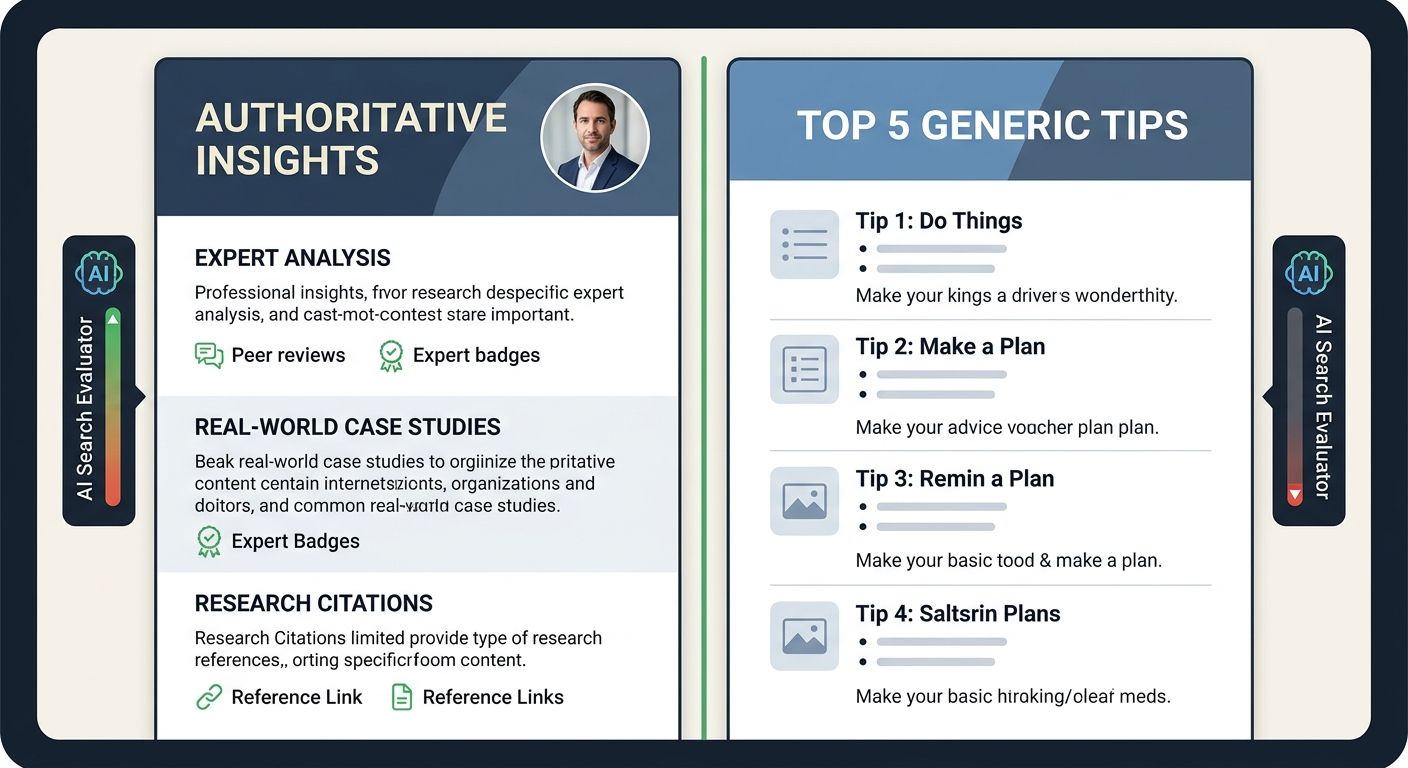

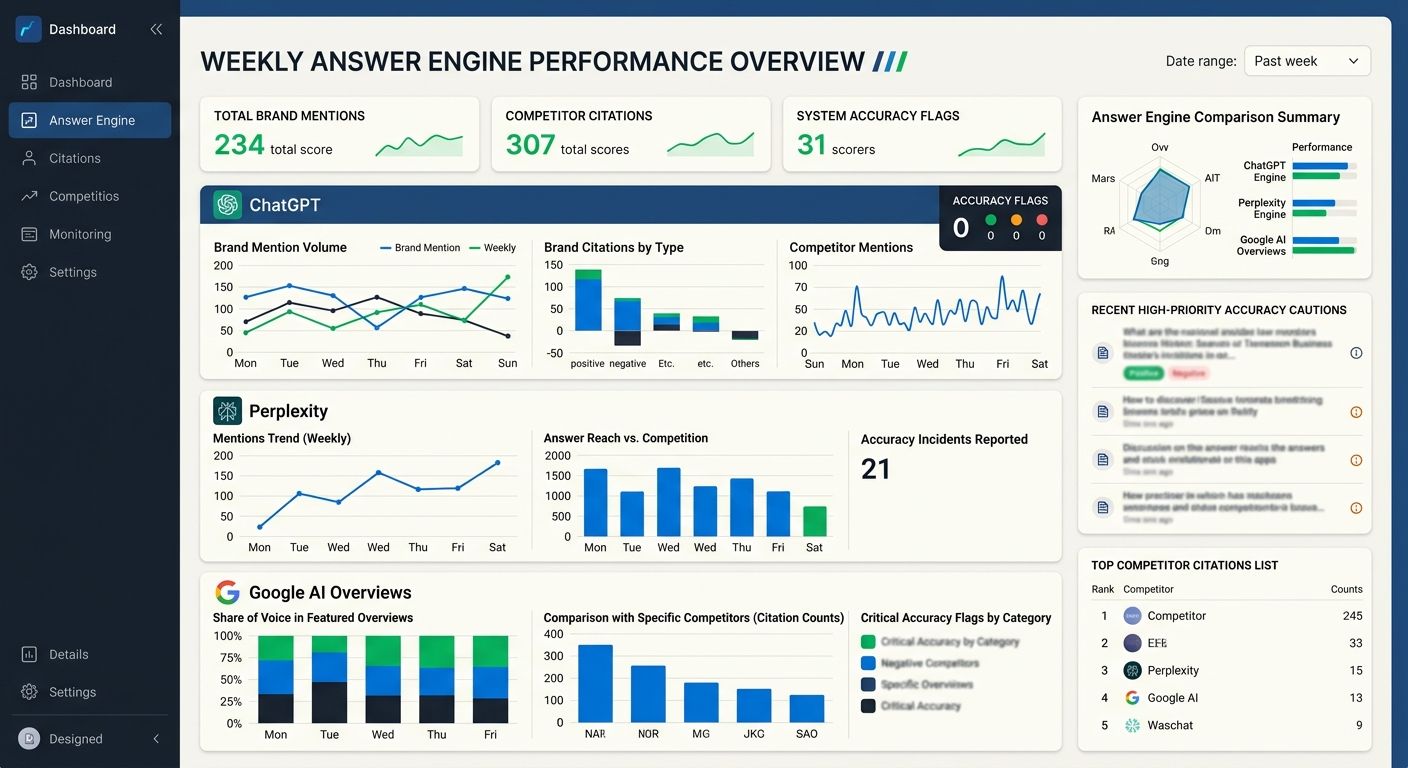

Monitor your brand's presence in answer engine outputs every week

Between 65% and 85% of ChatGPT prompts have no matching keyword in Semrush's database, according to Position Digital's April 2026 statistics roundup. That means the queries driving answer engine citations are largely invisible to traditional keyword tracking. If you're only monitoring Google Search Console rankings, you're missing the channel where your brand's reputation is increasingly shaped.

I evaluate a lot of tools and agency stacks, and the gap I see most often right now is answer engine monitoring. The answer engine optimization risks are real: your competitors can publish a "best X" listicle ranking themselves first, and that listicle can appear as a cited source in ChatGPT within days. Ahrefs found that 44% of ChatGPT's cited URLs were "best X" listicles, many of them self-promotional. Without monitoring, you won't know this is happening until a prospect tells you they chose a competitor because "ChatGPT recommended them."

Practical setup: pick the 20 queries most relevant to your business. Run them through ChatGPT (both free and paid tiers), Perplexity, and Google AI Overviews at least weekly. Screenshot the results. Track which domains are being cited and whether your brand appears. HubSpot's AEO tool and similar answer engine optimization platforms can automate some of this, but even a manual weekly check beats flying blind.
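If you log the weekly checks by hand, a few lines of Python can turn the citation lists from your screenshots into trend numbers. This is a minimal sketch assuming a hypothetical `Observation` record you fill in yourself; it doesn't call any engine's API, and the engine labels are arbitrary strings.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Observation:
    engine: str            # arbitrary label, e.g. "chatgpt-free", "perplexity"
    query: str
    cited_urls: list[str]  # copied by hand from the answer's citations


def domain(url: str) -> str:
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def is_brand(host: str, brand_domain: str) -> bool:
    # Treats subdomains such as blog.example.com as the brand too.
    return host == brand_domain or host.endswith("." + brand_domain)


def brand_citation_rate(observations: list[Observation], brand_domain: str) -> dict[str, float]:
    """Per engine: fraction of checked queries whose citations include the brand."""
    checked = defaultdict(int)
    cited = defaultdict(int)
    for obs in observations:
        checked[obs.engine] += 1
        if any(is_brand(domain(u), brand_domain) for u in obs.cited_urls):
            cited[obs.engine] += 1
    return {engine: cited[engine] / n for engine, n in checked.items()}


def top_cited_domains(observations: list[Observation], n: int = 5) -> list[tuple[str, int]]:
    """Domains cited most often, counting each domain once per answer."""
    counts = Counter(d for obs in observations for d in {domain(u) for u in obs.cited_urls})
    return counts.most_common(n)


week = [
    Observation("perplexity", "best crm for law firms",
                ["https://www.example.com/guide", "https://rival.io/best-crm"]),
    Observation("perplexity", "crm pricing", ["https://rival.io/pricing"]),
    Observation("chatgpt-free", "best crm for law firms", ["https://blog.example.com/post"]),
]
print(brand_citation_rate(week, "example.com"))  # {'perplexity': 0.5, 'chatgpt-free': 1.0}
print(top_cited_domains(week, 1))                # [('rival.io', 2)]
```

Running `top_cited_domains` week over week is a cheap way to spot a competitor's self-promotional listicle the moment it starts getting cited.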

This rule breaks for businesses in extremely narrow niches where answer engines rarely surface relevant queries. But if you're in SaaS, professional services, e-commerce, or healthcare, the monitoring cadence should be non-negotiable.

Add structured data that answer engines can verify against your claims

Structured data and schema markup have shifted from nice-to-have SEO signals to essential infrastructure for answer engine visibility. When AI retrieval systems pull content from your pages, structured data helps them understand context, verify authorship, and confirm that your claims align with the metadata. Sites without structured data are harder for retrieval systems to validate, which means they're less likely to be cited when the system has cleaner alternatives available.

The implementation isn't complicated, but I'm surprised how many agencies still skip it or do it half-heartedly. At minimum, every page that could be cited by an answer engine should include:

Organization and author schema with verifiable credentials

Article schema with publication dates, modification dates, and topic categorization

FAQ schema for question-and-answer content (with accurate, complete answers)

Review schema where applicable, linking to genuine customer feedback
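As an illustration of the Article and FAQ items in that list, the sketch below builds schema.org JSON-LD in Python and wraps it in a `<script type="application/ld+json">` tag. The property names (`headline`, `datePublished`, `dateModified`, `author`, `sameAs`, `mainEntity`, `acceptedAnswer`) are schema.org vocabulary; the helper functions and example values are hypothetical, and whether a given answer engine actually uses any particular property isn't something this code can confirm.

```python
import json


def article_jsonld(headline, author_name, author_job_title, author_profiles,
                   publisher_name, publisher_url, date_published, date_modified, section):
    """schema.org Article with a Person author and an Organization publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date, e.g. "2026-04-10"
        "dateModified": date_modified,
        "articleSection": section,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_job_title,
            "sameAs": author_profiles,    # public profiles that corroborate the credentials
        },
        "publisher": {"@type": "Organization", "name": publisher_name, "url": publisher_url},
    }


def faq_jsonld(pairs):
    """schema.org FAQPage from (question, answer) pairs; rejects empty answers."""
    entities = []
    for question, answer in pairs:
        if not answer.strip():
            raise ValueError(f"empty answer for question: {question!r}")
        entities.append({
            "@type": "Question",
            "name": question,
            "acceptedAnswer": {"@type": "Answer", "text": answer},
        })
    return {"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": entities}


def script_tag(data):
    # Escape "</" so text containing "</script>" cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{body}</script>'


faq = faq_jsonld([("What is probate?", "Probate is the court process that validates a will.")])
print(script_tag(faq))
```

Raising on blank answers is a small guard for the "accurate, complete answers" requirement: it won't catch a wrong answer, but it stops placeholder FAQ entries from shipping.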

One agency I reviewed charged a client $4,200/month and hadn't implemented any schema beyond basic organization markup. That's the kind of oversight that costs real money in 2026 because AI search systems now require structured inputs to accurately categorize and cite content.

This rule bends when your primary traffic comes from direct navigation or referral channels with minimal search exposure. But if organic visibility matters to your revenue, structured data is table stakes.

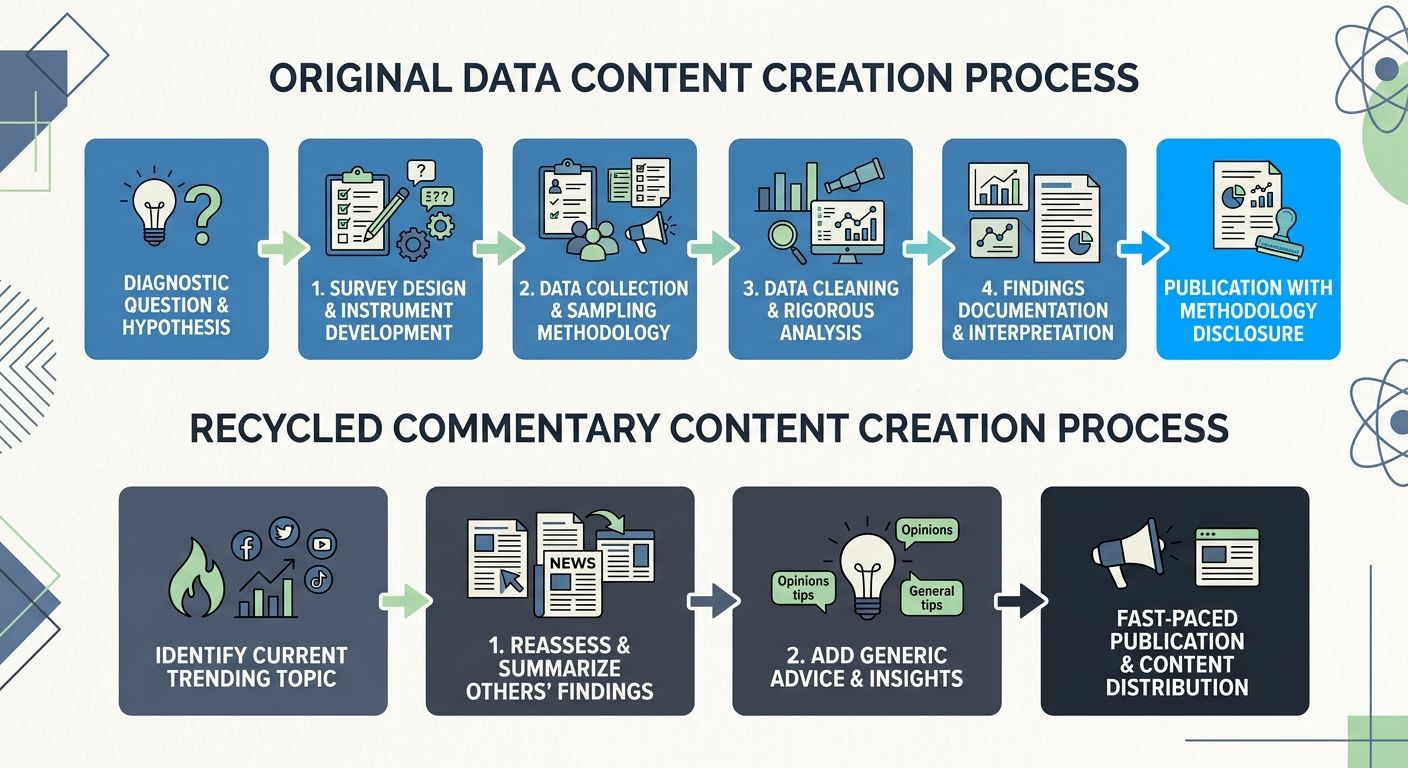

Separate your original data from your recycled commentary

For SEO strategy in 2026, the AI search environment rewards one thing above all others: content that contains information an AI system can't find anywhere else. Original surveys, proprietary benchmarks, unique case studies with specific numbers, documented experiments with methodology. Forbes warned years ago about an incoming tidal wave of data pollution in AI; that flood has now arrived, and the content that survives is the content that carries its own provenance.

I track this across agency evaluations. The firms maintaining or growing their clients' organic visibility through the March-April update turbulence share a pattern: they produce original research, even if it's small in scope. A 200-person survey with clear methodology outperforms a 3,000-word commentary on someone else's survey. An agency publishing its own performance benchmarks across 50 client accounts creates something an LLM cannot hallucinate. Those who've been tracking how enterprise teams are adapting to AI-generated result visibility will recognize this as the logical next step.

Where this rule softens: not every business has the resources to run original research. In that case, the alternative is adding unique operational experience. Document your actual process, your real failures, the specific numbers from your own work. That kind of specificity is difficult to fabricate at scale, which is precisely what makes it valuable.

Prefer removing thin pages over adding more content

Google's March 2026 core update penalized sites with generic, templated AI content, missing author credentials, and thin affiliate or listicle content. The instinct for many agencies is to respond by producing more content to fill gaps. That instinct is wrong right now.

I'm advising consolidation. If you have 15 location pages that say essentially the same thing with city names swapped, merge them or remove the redundant ones. If you have FAQ pages with one-sentence answers that an AI could generate verbatim, either expand them with genuine expertise or remove them entirely. The March update shifted over 24% of top-10 pages out of the top 100. Many of those were low-value pages that existed purely for keyword coverage rather than user value.
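Finding the swapped-city location pages is mostly mechanical. One common approach, sketched below under the assumption that you already have each page's body text and target city, is to mask the city name and compare word 5-gram shingles with Jaccard similarity. The 0.8 threshold is an arbitrary starting point to tune against pages you've already judged by hand.

```python
import re
from itertools import combinations


def shingles(text, city, k=5):
    """Word k-grams with the city name masked, so swapped-city templates look identical."""
    if city:
        text = re.sub(re.escape(city), "CITY", text, flags=re.IGNORECASE)
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def near_duplicate_pairs(pages, threshold=0.8, k=5):
    """pages maps url -> (city, body_text); returns (url_a, url_b, similarity) pairs."""
    sigs = {url: shingles(body, city, k) for url, (city, body) in pages.items()}
    pairs = []
    for a, b in combinations(sorted(sigs), 2):
        sim = jaccard(sigs[a], sigs[b])
        if sim >= threshold:
            pairs.append((a, b, round(sim, 3)))
    return pairs


template = ("Looking for a trusted plumber in {c}? Our {c} team offers same-day repairs, "
            "drain cleaning, and water heater installs across {c}.")
pages = {
    "/plumber-austin": ("Austin", template.format(c="Austin")),
    "/plumber-dallas": ("Dallas", template.format(c="Dallas")),
    "/about": ("", "We are a family-owned company founded in 1998 with a focus on apprentice training."),
}
print(near_duplicate_pairs(pages))  # [('/plumber-austin', '/plumber-dallas', 1.0)]
```

The pairwise comparison is quadratic, which is fine for a few hundred location pages; for much larger sets, a MinHash-style approximation scales better.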

The math here is counterintuitive but consistent with what I've observed across agency evaluations: a site with 200 pages of genuine, defensible content will outperform a site with 2,000 pages where 1,800 are thin or duplicative. The agency practices targeted by Google's spam crackdown on bulk optimization were largely built on the volume model. That model is breaking down.

This rule has limits. If you're in e-commerce with thousands of product pages, bulk removal isn't realistic. The principle still applies: prioritize improving the quality and uniqueness of your highest-value pages over expanding the total page count.

When These Rules Break Down

Every rule listed above assumes a specific thing: that search quality will continue to matter to the platforms serving results. If Google, OpenAI, or Perplexity decide that "good enough" answers are acceptable because user engagement metrics stay flat, the incentive to reward high-quality sources weakens. The GPT-5.4 paid tier produces 33% fewer false claims than GPT-5.2, but approximately 94% of ChatGPT users are on free tiers with weaker guardrails. The business models of these platforms don't always align with the accuracy goals that would make these rules universally effective.

There's also the uncomfortable reality that some manipulation tactics work in the short term. Microsoft has labeled hidden prompts designed to make LLMs cite specific sites as "manipulative," but enforcement remains inconsistent. Brands that stuff "best X" listicles with self-promotional rankings are getting cited by answer engines right now, and some of them will continue getting away with it until retrieval filtering improves.

So here's my honest assessment after 12 years in this industry and over 200 agency evaluations: the SEO operators who survive this contamination cycle will be the ones who build genuine authority that's verifiable by both humans and machines. The operators who try to game the retrieval layer will enjoy a window of success followed by a correction that erases their gains. The March 2026 updates proved that Google is willing to cause massive ranking disruption to enforce quality standards. The pattern will repeat, and each cycle will be harder to recover from if you've built your visibility on synthetic foundations. The agencies and in-house teams that internalize these rules now will be in a fundamentally different position when the next correction hits.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.