The Technical SEO Debugging Pyramid: A 5-Layer Framework for Diagnosing Invisible Ranking Losses

A client came to me after firing their third SEO agency in 18 months. Their organic traffic had dropped 62% over that period, and every agency had focused on content, backlinks, and keyword optimization. Reasonable enough. But the actual problem? A staging environment's noindex directive had leaked into production eight months earlier, silently de-indexing 340 product pages. Not a single agency had checked. They'd spent roughly $85,000 on content and link building for pages Google couldn't even see.

That experience crystallized something I'd been thinking about for years: most SEO troubleshooting fails because people start in the wrong place. They jump straight to ranking signals, content quality, or backlink profiles without confirming the foundational layers are even functioning. It's like diagnosing why a car won't accelerate when the engine isn't turning over.

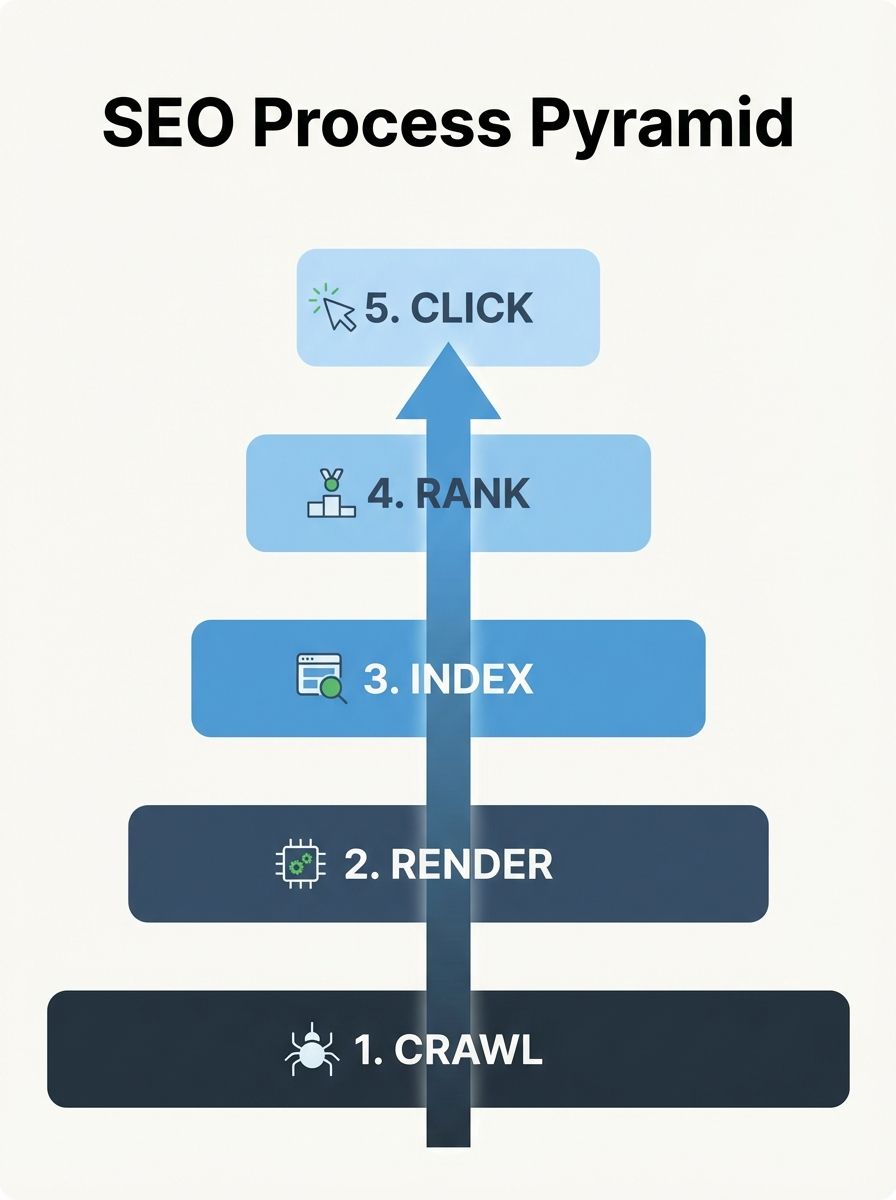

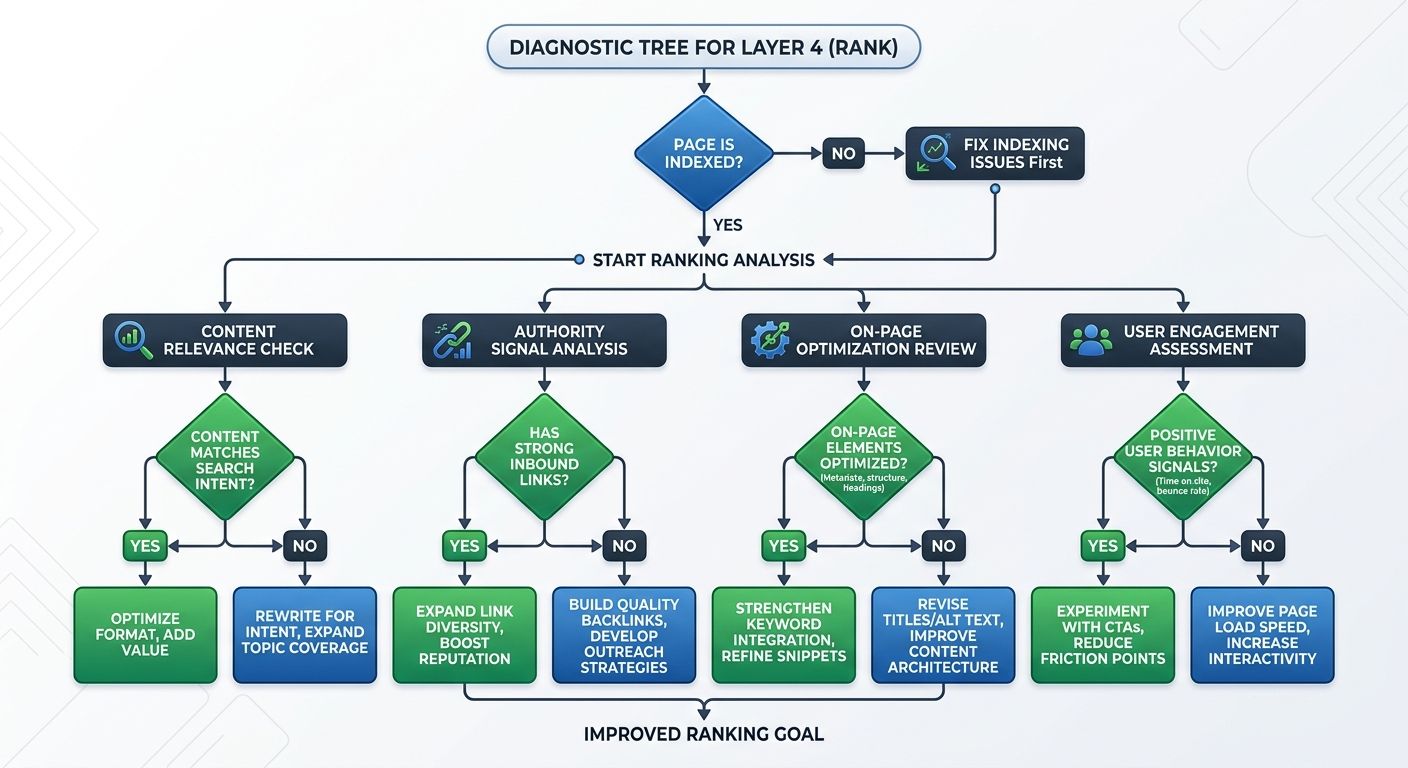

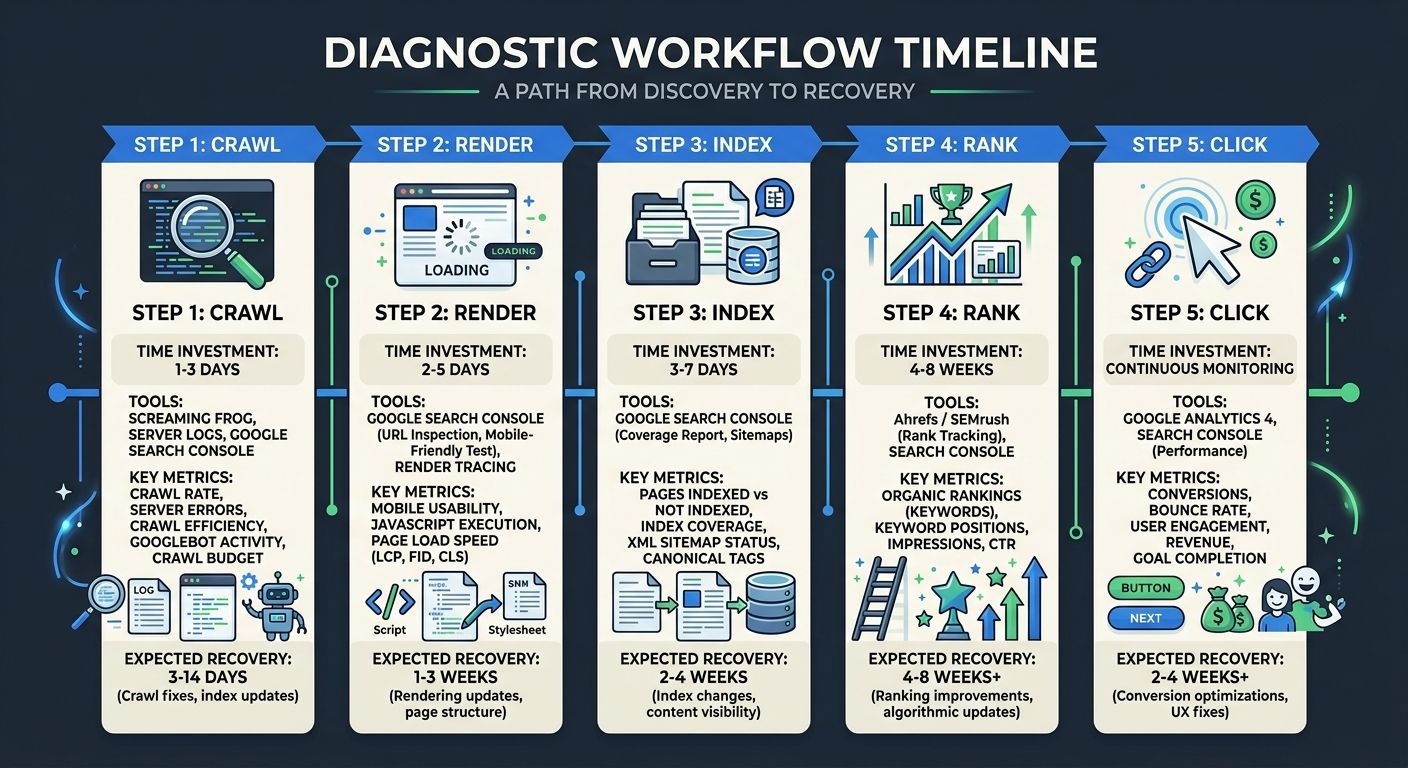

This is why I use the crawl, render, index, rank, click pyramid for every site visibility diagnosis I perform. It's a structured SEO troubleshooting methodology that forces you to work from the bottom up, confirming each layer works before moving to the next. And after applying it across 200+ agency evaluations and my own consulting work, I can tell you: roughly 70% of "invisible" ranking losses live in the bottom two layers.

Why Most SEO Debugging Fails

The typical SEO professional sees a traffic drop, opens their rank tracker, and starts analyzing keyword movements. That's layer four of five. They've skipped three entire diagnostic layers where the root cause almost certainly lives.

As Search Engine Land's debugging guide puts it, the SEO debugging pyramid is "a diagnostic framework that prioritizes technical issues in order of dependency." Each layer depends on the one below it. If crawling is broken, rendering doesn't matter. If rendering fails, indexing is irrelevant. And if pages aren't indexed, no amount of content optimization will bring them back.

This dependency chain is what makes the pyramid effective as a technical SEO debugging framework: it eliminates wasted effort by confirming each prerequisite before you investigate more complex problems.
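The dependency order can be sketched in a few lines of Python. This is only an illustration of the idea, not a tool: the `checks` dictionary and its lambda results are hypothetical stand-ins for whatever real checks you run at each layer.

```python
from typing import Callable, Optional

# Layer names in dependency order, bottom of the pyramid first.
LAYERS = ["crawl", "render", "index", "rank", "click"]


def first_failing_layer(checks: dict[str, Callable[[], bool]]) -> Optional[str]:
    """Run checks bottom-up and return the first layer that fails.

    Layers above a failure are never evaluated, because their results
    mean little until the lower layer is fixed.
    """
    for layer in LAYERS:
        if not checks[layer]():
            return layer
    return None


# Hypothetical results for a site with a leaked noindex directive.
checks = {
    "crawl": lambda: True,
    "render": lambda: True,
    "index": lambda: False,  # e.g. noindex found on product templates
    "rank": lambda: True,
    "click": lambda: True,
}
print(first_failing_layer(checks))
```

The early return is the whole point: a failing "index" check stops the run before any time is spent on rank or click analysis.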

Layer 1, Crawl: Can Search Engines Find Your Pages?

This is the absolute foundation. If Googlebot can't discover and access your URLs, nothing else matters. Crawlability and indexability are the entry ticket to rankings, and when important URLs become undiscoverable, the impact is immediate: no impressions, no clicks, no revenue.

What to check at the Crawl layer:

robots.txt directives: Are you accidentally blocking critical directories? I've seen WordPress sites block /wp-content/uploads/, killing image indexing across the entire domain.

XML sitemaps: Are they submitted, valid, and actually reflecting your live URL structure? Sitemaps with 404s or redirects waste crawl budget.

Internal link structure: Can Googlebot reach every important page within 3 clicks of your homepage? Orphaned pages are effectively invisible.

Server response codes: Are your servers returning 200s for live pages, or are intermittent 5xx errors causing Googlebot to back off?

Crawl budget allocation: For larger sites (10,000+ pages), is Google spending its crawl budget on parameter URLs, faceted navigation, or other low-value pages instead of your money pages?
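For the robots.txt item in the checklist above, a minimal sketch using Python's standard `urllib.robotparser` might look like this. The robots.txt content and the list of critical paths are made-up examples, and the standard-library parser does simple prefix matching, so it may not mirror Googlebot's handling of wildcard rules exactly.

```python
from urllib.robotparser import RobotFileParser


def blocked_paths(robots_txt: str, paths: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the paths that the given robots.txt disallows for the agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in paths if not parser.can_fetch(agent, path)]


# Example robots.txt resembling the WordPress misconfiguration described above.
ROBOTS = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-content/uploads/
"""

# Hypothetical list of paths you consider critical.
critical = ["/products/blue-widget", "/wp-content/uploads/hero.jpg", "/blog/"]
print(blocked_paths(ROBOTS, critical))
```

Treat any hit as a prompt to confirm the result in Search Console rather than as a final verdict.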

The diagnostic process:

Pull your server logs. Seriously. Google Search Console gives you a filtered view, but raw server logs tell you exactly which URLs Googlebot requested, how often, and what response it got. If your highest-value pages aren't being crawled at least weekly, you have a Layer 1 problem.

I wrote about this kind of systematic investigation in more detail when covering how to diagnose visibility drops quickly, but the short version is: start with logs, cross-reference against your sitemap, and identify the gaps.
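Here is a rough sketch of that log-versus-sitemap cross-reference. It assumes your server writes the common "combined" access log format and that your sitemap is a plain `urlset` file; the sample log lines, URLs, and function names are all invented for illustration. It also trusts the user-agent string, which can be spoofed, so a real audit would verify Googlebot by reverse DNS as well.

```python
import re
import xml.etree.ElementTree as ET
from collections import Counter
from urllib.parse import urlparse

# Pulls the request path and the final quoted field (user agent) from a combined-format line.
LOG_LINE = re.compile(r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} .*"(?P<agent>[^"]*)"$')
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def googlebot_hits(log_lines):
    """Count requests per path where the user agent claims to be Googlebot."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


def sitemap_paths(sitemap_xml):
    """Return the set of URL paths listed in a urlset sitemap."""
    root = ET.fromstring(sitemap_xml)
    return {urlparse(loc.text.strip()).path for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def uncrawled(sitemap_xml, log_lines):
    """Sitemap paths with no Googlebot request in the supplied logs."""
    return sorted(sitemap_paths(sitemap_xml) - set(googlebot_hits(log_lines)))


# Invented sample data.
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/products/a</loc></url>
  <url><loc>https://example.com/products/b</loc></url>
</urlset>"""

LOGS = [
    '66.249.66.1 - - [10/Mar/2025:06:25:14 +0000] "GET /products/a HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Mar/2025:06:26:02 +0000] "GET /products/b HTTP/1.1" 200 4980 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

print(uncrawled(SITEMAP, LOGS))
```

The gap list is your starting point: anything in the sitemap that Googlebot never requested over the log window deserves a closer look.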

Layer 2, Render: Can Search Engines See What Users See?

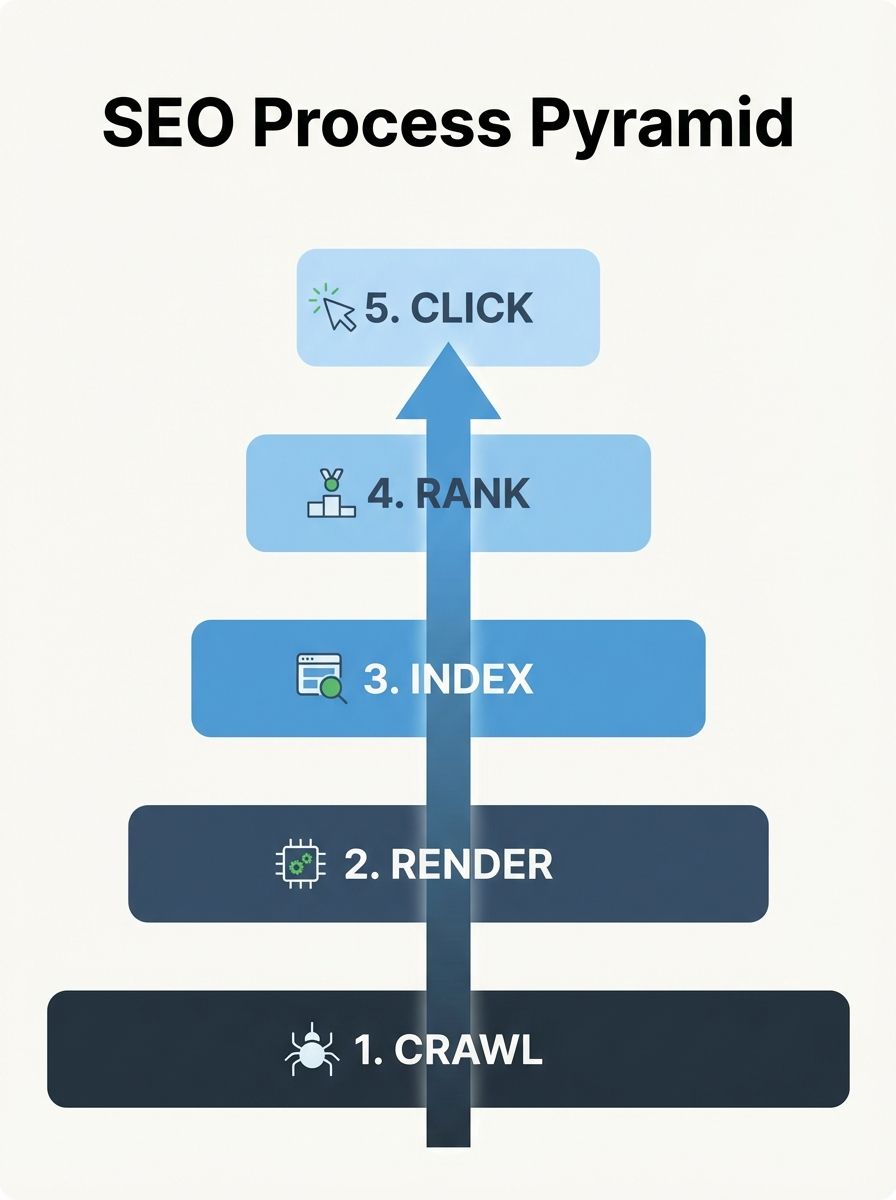

This is the layer most SEO professionals skip entirely, and it's where I find some of the most damaging invisible ranking factors.

Google renders JavaScript. But not always completely, not always quickly, and not always the way your browser does. If your critical content, internal links, or structured data are injected via JavaScript after page load, there's a real chance Google is seeing a different page than your users.

Common Render failures:

JavaScript-dependent navigation: If your primary nav menu only appears after JS execution, Googlebot may miss those internal links entirely during its initial HTML crawl.

Lazy-loaded content above the fold: Content that requires scroll or interaction triggers to load may never get rendered by Google.

Client-side routing issues: Single-page applications that don't implement server-side rendering or pre-rendering properly can appear as blank pages to crawlers.

Third-party script blocking: If a third-party script fails to load (analytics, chat widgets, consent managers), it can block the rendering of your actual content.

How to verify rendering:

Use the Google Search Console URL Inspection Tool and click "view crawled page" to see the HTML that Google actually rendered. Compare it against what you see in your browser. If content is missing from the rendered version, you've found your problem.

I audit rendering issues by taking a screenshot of the rendered page in Search Console and placing it side-by-side with a browser screenshot. Any differences are potential ranking killers.
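Screenshots catch visual gaps, but missing internal links are easier to spot with a diff. The sketch below assumes you have saved two HTML strings yourself: the HTML copied from "view crawled page" and the DOM from your own browser. The sample markup and helper names are hypothetical.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values from anchor tags."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)


def extract_links(html):
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


def links_only_in_browser(crawled_html, browser_html):
    """Links present in the browser DOM but absent from the crawled HTML."""
    return sorted(extract_links(browser_html) - extract_links(crawled_html))


# Invented example: the nav links only exist after JavaScript runs.
crawled = '<nav></nav><main><a href="/about">About</a></main>'
browser = (
    '<nav><a href="/products">Products</a><a href="/pricing">Pricing</a></nav>'
    '<main><a href="/about">About</a></main>'
)
print(links_only_in_browser(crawled, browser))
```

The same approach works for headings or structured data blocks if you swap the tag being collected.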

Layer 3, Index: Is Google Choosing to Include Your Pages?

Here's where it gets subtle. Your pages might be crawled and rendered perfectly, but Google can still choose not to index them. This distinction trips up even experienced SEOs.

Google's index is selective. With the helpful content system and quality raters evaluating page-level and site-level signals, Google actively excludes pages it considers low-value, duplicative, or thin. The "Crawled - currently not indexed" status in Search Console is Google essentially saying: "I saw your page. I just don't think it's worth including." The "Discovered - currently not indexed" status is one step earlier: Google knows the URL exists but hasn't crawled it yet, which can point back to a Layer 1 problem.

Index-layer problems to investigate:

Canonical tag conflicts: Is your self-referencing canonical correct? Are pages being consolidated under the wrong canonical URL?

Duplicate content: Do you have near-identical pages competing with each other? Parameter variations, print versions, and AMP pages are common culprits.

Thin content thresholds: Pages with minimal unique content may be crawled but never indexed. Google's quality bar has risen significantly since the March 2026 core update.

Noindex directives: Check both meta robots tags and HTTP X-Robots-Tag headers. I've seen CDN configurations inject noindex headers without anyone on the marketing team knowing.

Manual actions: Check Search Console's Security & Manual Actions panel. A stark example from Seomator's audit research: an e-commerce company called Healthspan incurred a Google penalty for low-quality backlinks and keyword stuffing, causing a sharp loss in search visibility that went unnoticed until revenue had already cratered.
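The noindex item in the list above is the easiest one to automate. This sketch assumes you have already fetched a page and hold its response headers as a dict and its body as a string; the function and class names are my own. It only looks for the literal `noindex` directive, so the equivalent `none` value is not handled here.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        if tag == "meta" and name in ("robots", "googlebot"):
            content = attrs.get("content") or ""
            self.directives += [d.strip().lower() for d in content.split(",")]


def noindex_sources(headers, html):
    """Return where a noindex directive was found: 'header', 'meta', or both."""
    sources = []
    # Header names are case-insensitive, so normalise before comparing.
    header_value = ""
    for key, value in headers.items():
        if key.lower() == "x-robots-tag":
            header_value = value.lower()
    if "noindex" in header_value:
        sources.append("header")

    parser = RobotsMetaParser()
    parser.feed(html)
    if "noindex" in parser.directives:
        sources.append("meta")
    return sources


# Invented example: the HTML looks fine, but a header injects noindex.
headers = {"X-Robots-Tag": "noindex, nofollow"}
html = '<head><meta name="robots" content="index, follow"></head>'
print(noindex_sources(headers, html))
```

Checking both places matters because a header-level noindex never shows up when someone views the page source.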

The fix at this layer requires judgment, not just technical knowledge. You need to evaluate whether Google's decision not to index a page is a technical error (wrong canonical, accidental noindex) or a quality signal (the page genuinely doesn't deserve to rank). The response is completely different for each scenario, and understanding the nuance matters when you're choosing between enterprise-grade audits and DIY tools.

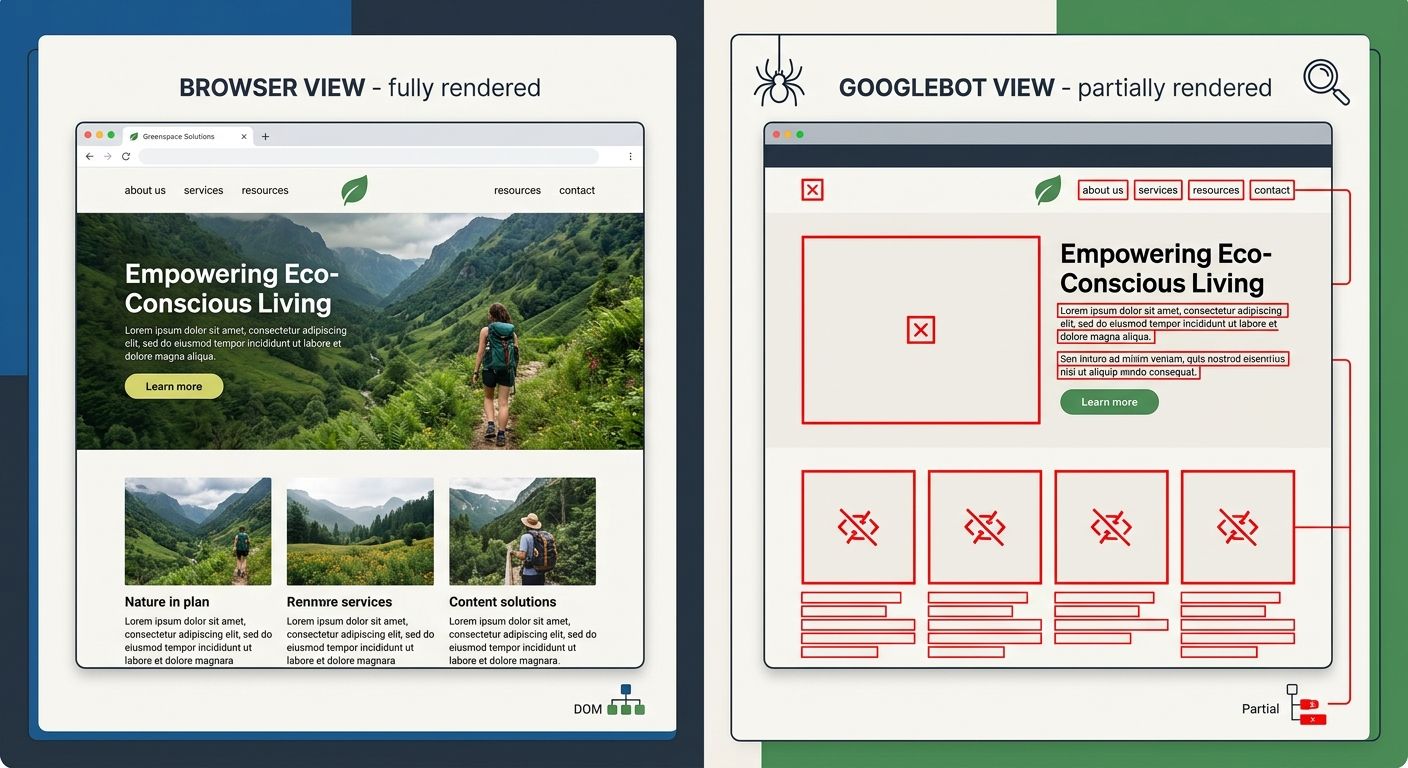

Layer 4, Rank: Are Your Pages Competitive for Target Queries?

Only after confirming layers 1-3 should you investigate ranking signals. This is where most SEO work traditionally focuses, and where most agencies start their analysis. That's backwards.

But assuming your pages are being crawled, rendered, and indexed correctly, ranking problems come down to a few categories:

Content relevance and quality

Does your page actually answer the query better than what's currently ranking? Not "is your content longer" or "does it have more keywords" but genuinely: would a human searching this query prefer your page? Google's systems have gotten remarkably good at evaluating this, especially after the March 2026 core update changes.

Authority signals

Backlink profiles still matter, but the weighting has shifted. A handful of topically relevant links from authoritative sites in your niche outperform hundreds of generic directory links. Check your referring domain growth rate against competitors, not just raw numbers.

On-page optimization

Title tags, heading structure, internal link anchor text, and content structure all play roles. But these are refinements, not foundations. Optimizing a title tag on a page that isn't being indexed is like polishing a car that has no engine.

User engagement signals

Click-through rates, bounce rates, and engagement metrics feed back into ranking calculations. If your pages rank but nobody clicks, or they click and immediately return to search results, Google notices. This connects directly to Layer 5.

Layer 5, Click: Are Users Choosing Your Result?

The top of the pyramid. Your pages are crawled, rendered, indexed, and ranking. But are users actually clicking through?

This layer deals with your SERP presentation: title tags, meta descriptions, rich snippets, and how your result appears relative to competitors. It also accounts for SERP feature displacement, where AI overviews, featured snippets, knowledge panels, and "People Also Ask" boxes push organic results below the fold.

Click-layer diagnostics:

CTR analysis by position: In Search Console, filter by pages and compare your CTR against expected benchmarks for each position. Position 1 should get roughly 25-35% CTR for non-branded queries. If you're significantly below that, your SERP presentation needs work.

Title tag and meta description audit: Are your titles compelling and accurate? Do your descriptions include a clear value proposition? Generic descriptions get generic click rates.

Rich result eligibility: Proper schema markup can dramatically improve CTR through star ratings, FAQ dropdowns, pricing information, and other rich snippets. Test your pages with Google's Rich Results Test.

SERP feature competition: If an AI Overview or featured snippet dominates the top of the results page, even Position 1 organic results may get suppressed CTR. This is the new reality of site visibility diagnosis.
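The CTR-by-position check from the list above can be roughed out in a few lines. The row format is an assumption about how you export Search Console performance data, and only the position 1 floor is taken from the 25-35% range mentioned earlier; the other floors are placeholders you should replace with benchmarks you trust.

```python
# Assumed CTR floors by rounded position. Only position 1 reflects the
# 25-35% range discussed above; positions 2 and 3 are placeholder values.
CTR_FLOOR = {1: 0.25, 2: 0.12, 3: 0.08}


def underperforming_queries(rows, floors=CTR_FLOOR, min_impressions=100):
    """Flag queries whose CTR sits below the floor for their average position."""
    flagged = []
    for row in rows:
        position = round(row["position"])
        floor = floors.get(position)
        # Skip positions without a benchmark and queries with too little data.
        if floor is None or row["impressions"] < min_impressions:
            continue
        ctr = row["clicks"] / row["impressions"]
        if ctr < floor:
            flagged.append((row["query"], position, round(ctr, 3)))
    return flagged


# Invented sample rows.
rows = [
    {"query": "blue widgets", "position": 1.2, "clicks": 90, "impressions": 1000},
    {"query": "widget repair", "position": 1.0, "clicks": 310, "impressions": 1000},
    {"query": "rare widget", "position": 1.1, "clicks": 0, "impressions": 20},
]
print(underperforming_queries(rows))
```

A flagged query is not automatically a title or description problem. Check the live results page first, since an AI Overview or featured snippet can depress CTR even for a well-presented result.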

As Brandignity's research on invisible ranking factors notes, content professionals focus on the visible SEO factors like links and keywords, but "it's the ones you don't see that can do the most damage."

Putting the Pyramid Into Practice

I recommend running through this framework in order every time you encounter a traffic decline. The entire Layer 1-3 diagnostic can be completed in under four hours for most sites, and it eliminates the most common causes of invisible ranking losses before you invest time in the more nuanced Layer 4-5 analysis.

Here's my actual workflow:

Layer 1 (30 minutes): Check robots.txt, pull sitemap vs. indexed page counts from Search Console, review crawl stats report, scan for server errors.

Layer 2 (45 minutes): URL Inspection Tool on 10-15 key pages, compare rendered HTML to source, check for JS-dependent content.

Layer 3 (60 minutes): Review Page Indexing report, identify patterns in "not indexed" URLs, check canonical tags and noindex directives across templates.

Layer 4 (90 minutes): Analyze ranking movements by page template, compare content depth against ranking competitors, review backlink profile changes.

Layer 5 (45 minutes): CTR analysis by query and position, SERP feature audit, title/description optimization review.

If you're working with an agency and they can't articulate a similar structured approach, that's a red flag. The criteria you use to evaluate agencies should include their diagnostic methodology, not just their portfolio of results.

What This Framework Won't Tell You

I want to be honest about the limitations. The pyramid is a diagnostic tool, not a strategy. It tells you where the problem lives, not necessarily how to fix it. A Layer 3 problem caused by thin content requires a fundamentally different solution than a Layer 3 problem caused by canonical misconfiguration, even though both show up as indexing failures.

The framework also assumes you have access to the right data. If your client or employer hasn't given you Search Console access, server logs, and crawl tool data, you're diagnosing blind. I've walked away from consulting engagements where the client wouldn't provide log access, because any diagnosis without it is guesswork dressed up as expertise.

And finally, the pyramid addresses technical and on-page factors. It doesn't directly cover off-site reputation, brand searches, or competitive market shifts. Those require separate analysis frameworks. The pyramid handles the "is my house structurally sound?" question before you start debating paint colors.

The Practical Takeaway

Stop debugging SEO from the top down. The next time organic visibility drops on a page or section of your site, resist the urge to immediately check rankings or analyze content quality. Work the pyramid from the bottom: Crawl, Render, Index, Rank, Click. Confirm each layer is functioning before moving up.

The most expensive SEO mistake isn't choosing the wrong keywords or building the wrong links. It's spending months optimizing pages that search engines can't properly access, render, or choose to index. The pyramid prevents that waste by giving you a repeatable, dependency-aware diagnostic process.

Print it. Pin it to your monitor. Make your agency explain which layer they're investigating and why. Your traffic recovery will be faster, your spend will be more efficient, and you'll stop throwing money at problems that live three layers below where everyone's looking.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.