How Enterprise SEO Agencies Evaluate Candidates: The Vetting Methodology That Actually Predicts Performance

A Fortune 500 retail brand I consulted for spent $340,000 on an enterprise SEO engagement that produced exactly zero measurable revenue impact over nine months. The agency had a gorgeous pitch deck, an impressive client logo wall, and a team of smooth talkers who knew every buzzword in the book. What they didn't have was a repeatable process, technical depth beyond surface-level audits, or any mechanism to connect their work to actual business outcomes. The CMO who signed that contract? Gone within a year. The SEO program? Set back by nearly two years because the next vendor had to undo damage before they could start building.

I've seen some version of this story play out dozens of times across the 200+ agencies I've evaluated in my career. And every single time, the root cause is the same: the enterprise SEO agency evaluation process was broken from the start. Companies relied on case studies they couldn't verify, references the agency hand-picked, and "proprietary frameworks" that turned out to be repackaged best practices from 2019.

So let me walk you through the vetting methodology I actually use when helping enterprise teams select SEO partners. Not the stuff agencies want you to ask about. The stuff that predicts whether they'll perform.

Why the Standard RFP Process Fails for Enterprise SEO

Enterprise procurement teams treat SEO vendor selection the same way they'd buy office furniture. They issue an RFP, collect responses, score them on a weighted matrix, and pick whoever checks the most boxes at the best price.

This approach misses the point entirely. As Previsible's 2026 enterprise agency analysis frames it, effective evaluation needs to address four key areas: understanding your business needs and internal teams, evaluating agency capabilities and track record, assessing operational fit, and focusing on the right people. Standard RFPs only touch the second area, and barely at that.

The problem is that SEO isn't a commodity. Two agencies can both "do technical SEO audits" and deliver wildly different results. One might flag 47 issues sorted by crawl priority. The other might identify the three issues actually suppressing revenue and build an implementation roadmap your dev team will follow. Same service line item. Completely different value.

Here's what I tell every enterprise team I work with: your agency selection methodology needs to test for three things that standard RFPs ignore completely.

Can they diagnose, not just audit? Any agency can run Screaming Frog and hand you a spreadsheet. You need to know if they can interpret what the data means for your specific business.

Can they operate inside your organization? Enterprise SEO fails more often from internal friction than from bad strategy. Your agency needs to navigate stakeholders, dev queues, and legal reviews.

Can they connect SEO to revenue? If their reporting stops at rankings and traffic, they're not an enterprise partner. They're a reporting service.

The Technical SEO Vetting Criteria That Actually Matter

I split technical SEO vetting criteria into two categories: what agencies show you, and what you need to test for yourself.

What Agencies Show You (And Why It's Insufficient)

Every agency pitching for enterprise work will present case studies, tool certifications, and team bios. Some of this is useful context. None of it is sufficient evidence.

Case studies are curated. I've literally seen agencies present results from projects where the client's dev team did 90% of the implementation work. Tool certifications mean someone passed an exam, not that they can apply the knowledge under pressure. And team bios? The senior strategist in the pitch deck often isn't the person doing your day-to-day work.

What You Should Actually Test

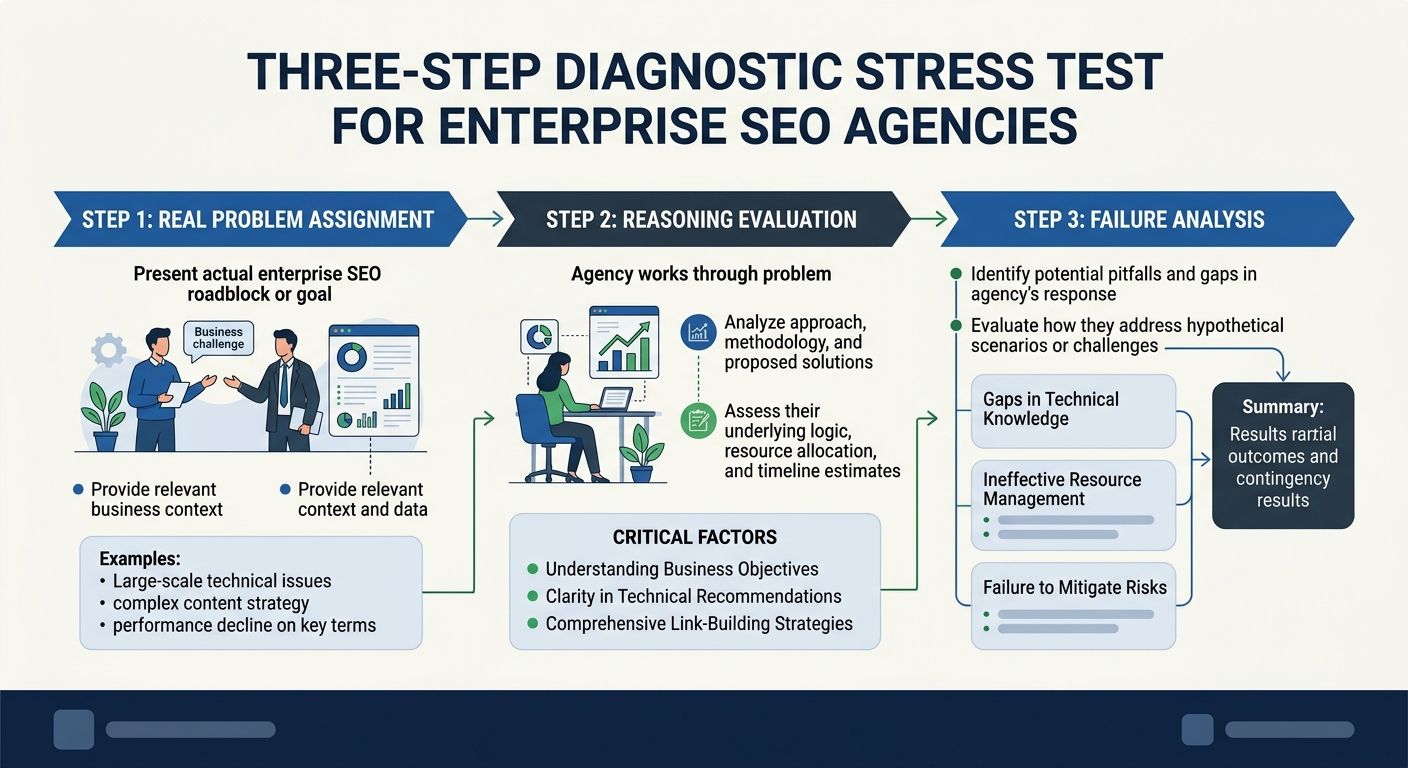

Here's my framework. I call it the Diagnostic Stress Test, and it's the single most predictive element of my enterprise vendor evaluation process.

Step 1: Give them a real problem. Take a section of your site that's underperforming. Don't tell them why you think it's underperforming. Give them access to Search Console data, crawl data, and 48 hours. Ask them to come back with a prioritized diagnosis.

Step 2: Evaluate their reasoning, not their recommendations. Anyone can say "fix your canonical tags." What you want to hear is why those canonical issues matter more than the thin content problem they also found, how they'd sequence the fixes given your CMS constraints, and what revenue impact they'd project from the changes.

Step 3: Ask about failures. Every agency that's worked at enterprise scale has had projects go sideways. If they claim a perfect track record, they're either lying or they haven't done enough enterprise work. The good ones will tell you what went wrong and what they changed in their process afterward.

Platforms like Vervoe offer structured SEO skills assessments that test for keyword research, site architecture, crawl budget optimization, and algorithm change response. Similarly, TestGorilla's technical SEO assessment evaluates candidates on their ability to analyze websites and improve rankings through structured scenarios. These tools can supplement your evaluation when you need to assess individual team members, not just the agency's pitch team.

If you want to understand how technical depth actually plays out in practice, the breakdown of five-layer diagnostic frameworks for ranking losses gives you a good sense of the rigor you should expect from any agency claiming enterprise capabilities.

The Performance Metrics That Separate Partners from Vendors

This is where enterprise teams get it wrong, and it's where I spend the most time coaching procurement and marketing leaders.

Stop Measuring Activity. Start Measuring Impact.

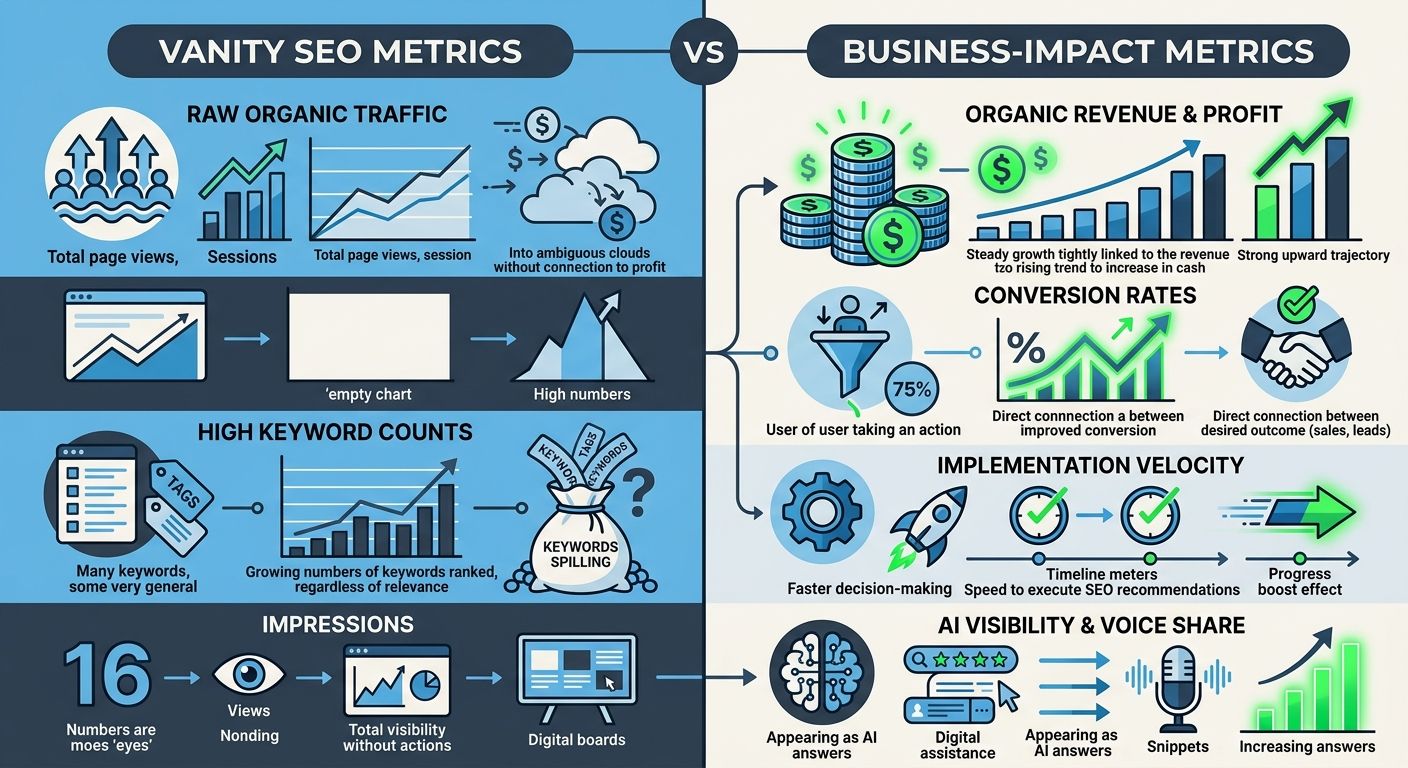

I've reviewed agency contracts where the deliverables section reads like a task list: "4 blog posts per month, 1 technical audit per quarter, monthly reporting call." That's activity, not performance. And activity-based contracts create perverse incentives. The agency hits their deliverable targets regardless of whether those deliverables moved the needle.

The SEO agency performance metrics that actually predict long-term success look different:

Organic revenue attribution: Not just traffic. Revenue. Can the agency connect their work to pipeline or sales figures?

Conversion rate from organic: High traffic with low conversions often signals misaligned keyword intent or poor landing page experience.

Visibility in AI-generated results: With zero-click searches continuing to climb, your agency needs to track and optimize for presence in AI Overviews and generative search features.

Implementation velocity: How quickly do their recommendations actually get deployed? This measures their ability to work with your internal teams, which matters enormously.
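To make the difference between traffic and impact concrete, here is a minimal Python sketch of how three of these metrics could be computed from exported data. The `OrganicSnapshot` fields, the function names, and the sample figures are all hypothetical; real attribution depends on your analytics setup and attribution model.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class OrganicSnapshot:
    """One reporting period of organic-channel data (hypothetical fields)."""
    sessions: int      # organic sessions in the period
    conversions: int   # conversions attributed to organic
    revenue: float     # revenue attributed to organic, in dollars


def conversion_rate(s: OrganicSnapshot) -> float:
    """Share of organic sessions that converted."""
    return s.conversions / s.sessions if s.sessions else 0.0


def revenue_per_session(s: OrganicSnapshot) -> float:
    """Attributed organic revenue divided by organic sessions."""
    return s.revenue / s.sessions if s.sessions else 0.0


def implementation_velocity(recs: list[tuple[date, Optional[date]]]) -> dict:
    """Summarize how quickly recommendations get deployed.

    Each tuple is (date recommended, date deployed or None if still open).
    """
    days = [(deployed - made).days for made, deployed in recs if deployed is not None]
    return {
        "deployed_share": len(days) / len(recs) if recs else 0.0,
        "avg_days_to_deploy": sum(days) / len(days) if days else None,
    }


# Illustrative numbers only: traffic grows 50%, but conversion rate drops.
before = OrganicSnapshot(sessions=100_000, conversions=1_000, revenue=50_000.0)
after = OrganicSnapshot(sessions=150_000, conversions=1_050, revenue=52_500.0)
```

In this made-up example, sessions rise by half while the conversion rate falls from 1% to 0.7%. A rankings-and-traffic report would present that as a win; a revenue view would flag it as a keyword intent or landing page problem.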

As DashThis's tracking guide puts it, SEO tracking should encompass keyword rankings, organic traffic, backlinks, technical performance, and conversions. But tracking and measuring aren't the same thing. Tracking tells you what happened. Measuring tells you what it was worth.

One Reddit thread in r/agency captures this distinction well: good agencies understand the difference between traffic and convertible traffic. One is the result of tactics, the other the result of a well-planned strategy.

If your team is still building reporting around rankings alone, the approach outlined in building custom performance dashboards is a good place to start rethinking what you measure and why.

Operational Fit: The Evaluation Layer Everyone Skips

I'm going to say something that might sound obvious but gets ignored in nearly every enterprise selection process I've observed: the best SEO strategy in the world is worthless if it can't survive contact with your organization.

Enterprise SEO doesn't fail because agencies lack knowledge. It fails because recommendations die in dev backlogs, get watered down by legal review, or stall because no one has analytics access. The dependency trap in SEO projects is real, and the agency you select needs to demonstrate they've navigated it before.

Questions That Reveal Operational Readiness

During your evaluation process, ask these:

"How do you handle a situation where your top recommendation gets deprioritized by the client's dev team for three consecutive sprints?" Listen for specifics. Do they escalate? Repackage the business case? Find workarounds? Or do they just document it and move on?

"Walk me through your onboarding process for the first 90 days." Enterprise onboarding is where relationships are made or broken. You want to hear about stakeholder mapping, access provisioning timelines, and baseline measurement. Not vague promises about "hitting the ground running."

"What does your team structure look like for an account our size, and what's your retention rate for senior strategists?" Staff turnover is the silent killer of enterprise SEO engagements. If the strategist who built your roadmap leaves six months in and gets replaced by someone junior, you're essentially starting over.

"How do you handle algorithm updates that affect our site?" UFO's enterprise SEO guide emphasizes that an enterprise SEO agency is not a simple vendor you hire to complete a task list. They're an extension of your marketing department. Their response to this question tells you whether they operate that way.

Pricing Transparency and Contract Structure

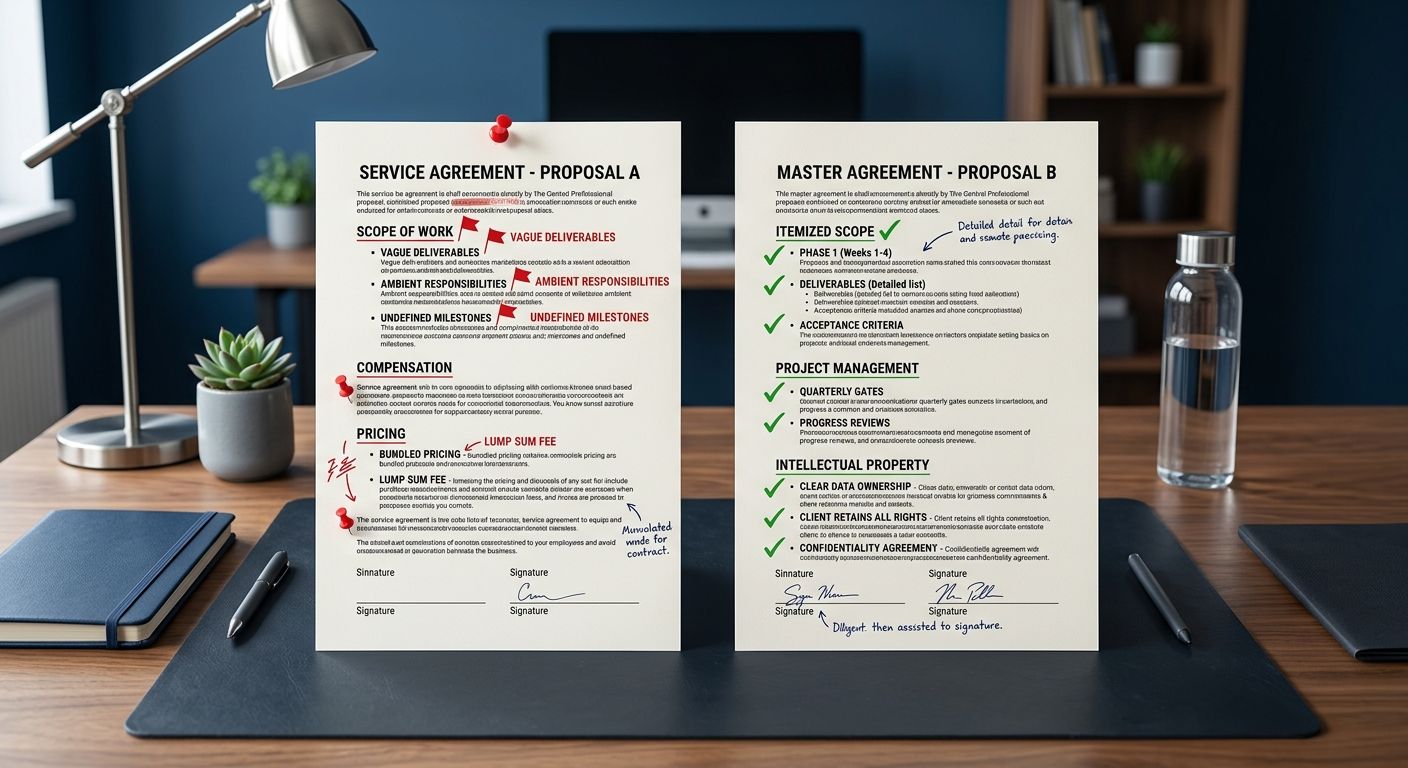

I've seen enterprise SEO contracts ranging from $8,000/month to $75,000/month. The price itself isn't what matters. What matters is whether you can trace every dollar to a clear scope of work and measurable outcome.

Here's what to look for:

Itemized scope, not bundled packages. If you can't see exactly what you're paying for technical audits versus content strategy versus link acquisition, you can't evaluate whether the allocation makes sense.

Quarterly review gates. The best contracts I've seen include 90-day performance checkpoints with defined criteria for continuation, adjustment, or termination. This protects both sides.

Clear IP and data ownership. When the engagement ends, who owns the keyword research, the content frameworks, the technical documentation? Get this in writing before you sign.

Defined escalation paths. The contract should name who you escalate to, on both sides, when work stalls or scope is disputed. As Zyxware's vendor selection checklist emphasizes, be sure to understand the vendor's approach and whether it aligns with your business goals. Pricing and contract terms can vary wildly, and you need absolute clarity on what's included.
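A quarterly review gate is easier to enforce when the decision rule is written down before the engagement starts. The Python sketch below shows one way such a rule might look. The thresholds (80% of criteria met to continue, 50% to adjust) and the names `GateDecision` and `review_gate` are assumptions for illustration, not figures from any real contract.

```python
from enum import Enum


class GateDecision(Enum):
    CONTINUE = "continue"
    ADJUST = "adjust"
    TERMINATE = "terminate"


def review_gate(
    criteria_met: int,
    criteria_total: int,
    continue_at: float = 0.8,  # hypothetical threshold
    adjust_at: float = 0.5,    # hypothetical threshold
) -> GateDecision:
    """Map a 90-day checkpoint result onto continue / adjust / terminate."""
    if criteria_total <= 0:
        raise ValueError("define at least one criterion before the review")
    if not 0 <= criteria_met <= criteria_total:
        raise ValueError("criteria_met must be between 0 and criteria_total")

    share = criteria_met / criteria_total
    if share >= continue_at:
        return GateDecision.CONTINUE
    if share >= adjust_at:
        return GateDecision.ADJUST
    return GateDecision.TERMINATE
```

The specific numbers matter less than agreeing on them up front, so neither side is negotiating the definition of success at the checkpoint itself.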

If you're evaluating whether to go full-service or supplement with tools, the true cost comparison between tool stacks and agencies is worth reviewing before you finalize budget allocations.

The Pilot Project: Your Best Prediction Tool

After all the interviews, assessments, and reference checks, the single most reliable predictor of long-term agency performance is a paid pilot project. Not a free trial. Not a "discovery phase" where the agency works for free hoping to win the full engagement. A scoped, paid pilot with defined success criteria.

Here's how I structure pilot projects for enterprise evaluations:

Duration: 8-12 weeks

Scope: One business unit or product line, not the entire organization

Budget: Typically 30-40% of what the full monthly engagement would cost

Success criteria: Defined before kickoff, tied to both process metrics (communication quality, implementation support, reporting depth) and outcome metrics (traffic to target pages, conversion improvements, technical health score changes)
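As a rough sketch, the pilot structure above can be captured in a small Python object that checks a plan before kickoff. The `PilotPlan` class and its field names are hypothetical, and the budget calculation assumes the 30-40% figure applies to the pilot's monthly fee.

```python
from dataclasses import dataclass, field


@dataclass
class PilotPlan:
    """A scoped, paid pilot (hypothetical structure for illustration)."""
    scope: str                  # one business unit or product line
    duration_weeks: int
    full_monthly_fee: float     # what the full engagement would cost per month
    process_criteria: list[str] = field(default_factory=list)
    outcome_criteria: list[str] = field(default_factory=list)

    def budget_range(self, low_pct: int = 30, high_pct: int = 40) -> tuple[float, float]:
        """Pilot fee range as a percentage of the full monthly fee."""
        return (
            self.full_monthly_fee * low_pct / 100,
            self.full_monthly_fee * high_pct / 100,
        )

    def problems(self) -> list[str]:
        """List anything that should be fixed before kickoff."""
        issues = []
        if not 8 <= self.duration_weeks <= 12:
            issues.append("duration outside 8-12 weeks")
        if not self.process_criteria:
            issues.append("no process criteria defined")
        if not self.outcome_criteria:
            issues.append("no outcome criteria defined")
        return issues


plan = PilotPlan(
    scope="one product line",
    duration_weeks=10,
    full_monthly_fee=75_000,
    process_criteria=["reporting depth"],
    outcome_criteria=["conversion improvement on target pages"],
)
```

For a full engagement quoted at $75,000 per month, `plan.budget_range()` gives a pilot fee between $22,500 and $30,000, and `plan.problems()` returns an empty list because the duration and both kinds of criteria are in place.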

The pilot reveals everything a pitch process can't. How does the agency handle ambiguity? Do they push back when your internal team gives them bad direction? Are their "senior strategists" actually doing the work, or did the B-team show up after the contract was signed?

My Evaluation Scorecard

I score agencies across five dimensions, weighted by what I've found matters most for enterprise outcomes:

Diagnostic Ability (25%): Can they identify real problems and prioritize by business impact?

Operational Maturity (25%): Can they navigate enterprise bureaucracy and still move work forward?

Measurement Rigor (20%): Do they connect SEO to revenue, not just rankings?

Technical Depth (15%): Do they have genuine expertise across crawling, rendering, indexing, and structured data?

Cultural Alignment (15%): Will their communication style and work cadence mesh with your team?

Notice that technical depth is only 15%. That's deliberate. I've seen technically brilliant agencies fail spectacularly at enterprise scale because they couldn't get a dev ticket prioritized or communicate value to a CFO. And I've seen technically competent (not exceptional) agencies deliver extraordinary results because they knew how to operate inside complex organizations.
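The weighting can be sketched in a few lines of Python. The weights come from the scorecard above; the 1-5 rating scale, the dimension keys, and the two sample agencies are assumptions made up for illustration.

```python
# Weights in percent, taken from the scorecard above.
WEIGHTS = {
    "diagnostic_ability": 25,
    "operational_maturity": 25,
    "measurement_rigor": 20,
    "technical_depth": 15,
    "cultural_alignment": 15,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-dimension ratings (assumed 1-5 scale) into one score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[dim] * ratings[dim] for dim in WEIGHTS) / 100


# Hypothetical agencies: one technically brilliant, one operationally strong.
technically_brilliant = {
    "diagnostic_ability": 3,
    "operational_maturity": 2,
    "measurement_rigor": 3,
    "technical_depth": 5,
    "cultural_alignment": 3,
}
operationally_strong = {
    "diagnostic_ability": 4,
    "operational_maturity": 5,
    "measurement_rigor": 4,
    "technical_depth": 3,
    "cultural_alignment": 4,
}

print(weighted_score(technically_brilliant))  # 3.05
print(weighted_score(operationally_strong))   # 4.1
```

With these made-up ratings, the agency with top marks on technical depth still scores lower overall (3.05 versus 4.1), which is the effect the 15% weight is meant to produce.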

The takeaway here is straightforward: most enterprise SEO agency evaluations optimize for the wrong signals. They reward polished presentations over diagnostic skill, logo walls over operational maturity, and guaranteed rankings (which no legitimate agency can promise) over honest assessment of what's achievable. If you're running an evaluation right now, throw out your standard RFP matrix and build one around the framework above. The agency that scores highest might not be the one with the flashiest pitch. But they'll be the one still delivering results in year two, which is where the real ROI of enterprise SEO lives.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
