The Review Velocity Problem: Why Volume Matters Less Than Recency for Local SEO Rankings

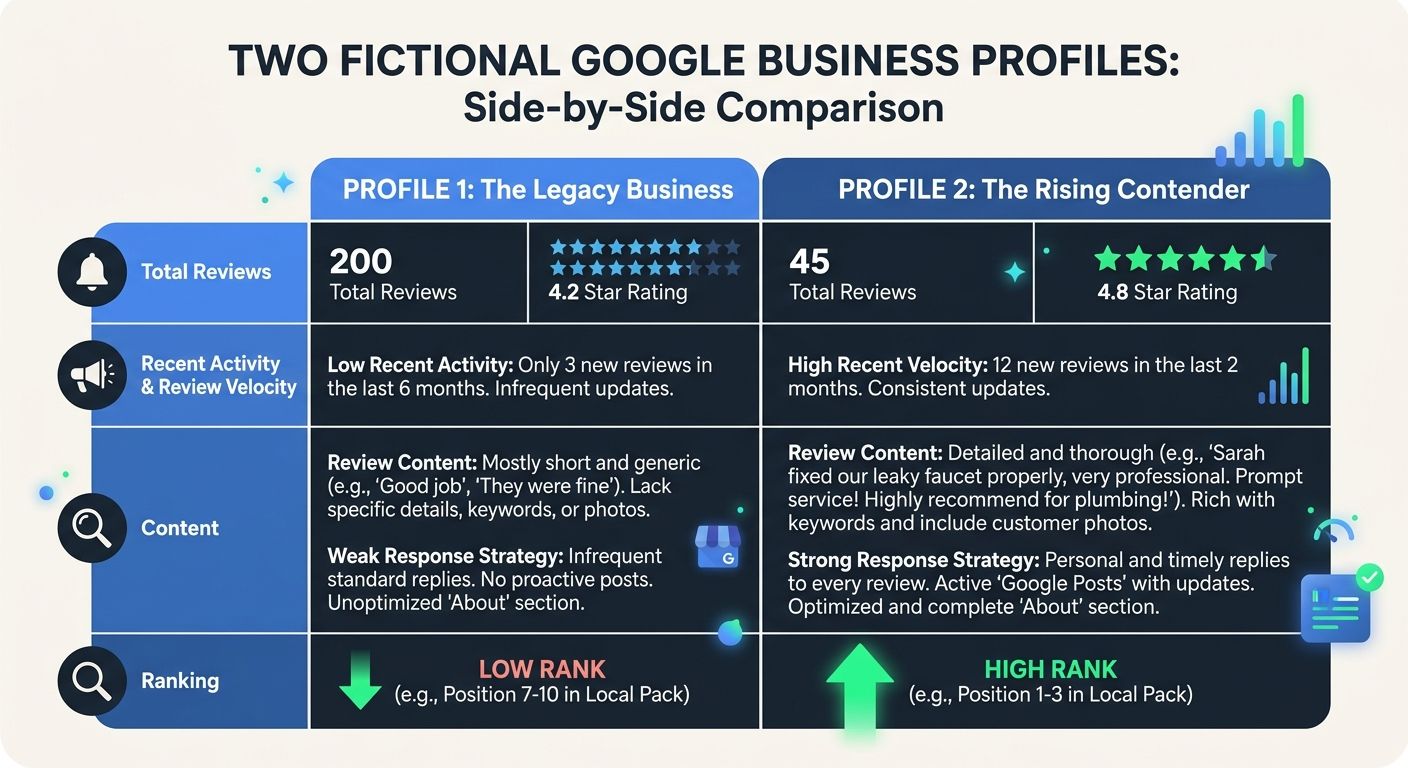

Google's local pack algorithm treats a profile with 20 reviews from the past three months as a stronger signal than a profile with 50 reviews and nothing posted in six months. That single fact invalidates the strategy most local businesses are running.

The mechanism at work is review velocity, the rate at which new reviews accumulate over time, and it explains why businesses with smaller review portfolios routinely outrank competitors who spent years building up massive totals. If you've been treating review acquisition as a one-time campaign rather than a continuous operation, the algorithm has already moved past you.

I've audited over 200 agencies in my career, and the ones managing local SEO campaigns still tend to report on cumulative review count as a headline metric. They'll proudly show a client they went from 85 to 140 reviews during the engagement. What they don't report is when those reviews landed, whether the pace was steady, or how many arrived in the most recent 90-day window. Understanding review velocity at the mechanical level changes how you allocate budget, how you evaluate agency performance, and how you diagnose ranking drops that seem to come from nowhere.

How Google's Review Recency Algorithm Weighs Incoming Signals

Google has never published the exact weights of its local ranking factors, but the company has confirmed that reviews influence local rankings and that high ratings alone aren't sufficient. The working model, built from practitioner testing and confirmed patterns, breaks review signals into at least four distinct inputs: overall rating, total volume, recency of individual reviews, and velocity (the rate of new reviews over a defined period).

What practitioners have observed is that these inputs aren't weighted equally — and the hierarchy has shifted over time. Total volume used to carry significant weight. A business crossing certain thresholds (10 reviews, 50 reviews, 100 reviews) could see measurable bumps in local pack visibility. Sterling Sky's case study data still shows that going from 9 reviews to 10 reviews produced a noticeable ranking bump, but incremental gains beyond that threshold flattened quickly when the reviews were old.

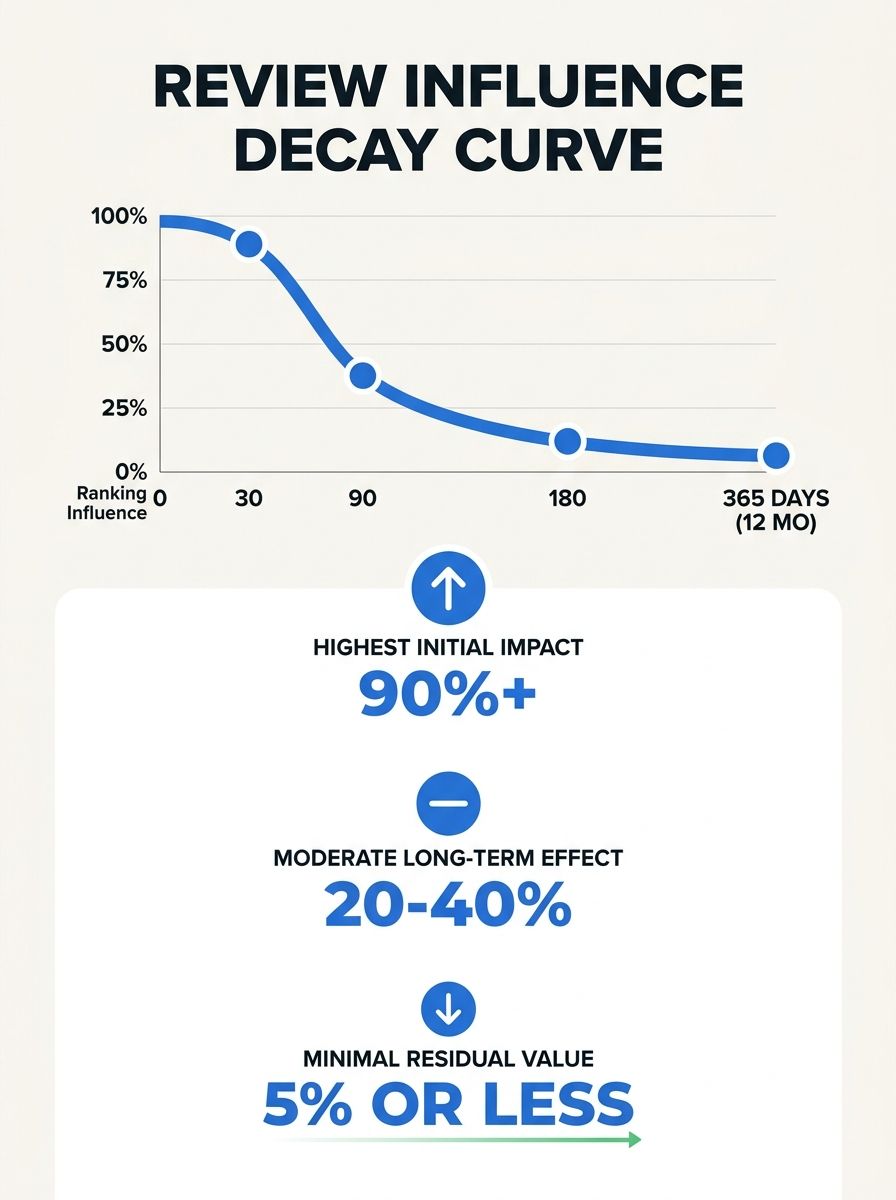

The review recency algorithm now functions more like a decay model than a ledger. Each review has a diminishing influence curve: high impact in the first 30 days, tapering through 90 days, and dropping sharply after six months. Data across multiple practitioner communities suggests that reviews aged 30-90 days retain roughly 70-80% of their ranking influence. At 90-180 days, that drops to 40-50%. Beyond six months, the influence shrinks to roughly 10-20%.

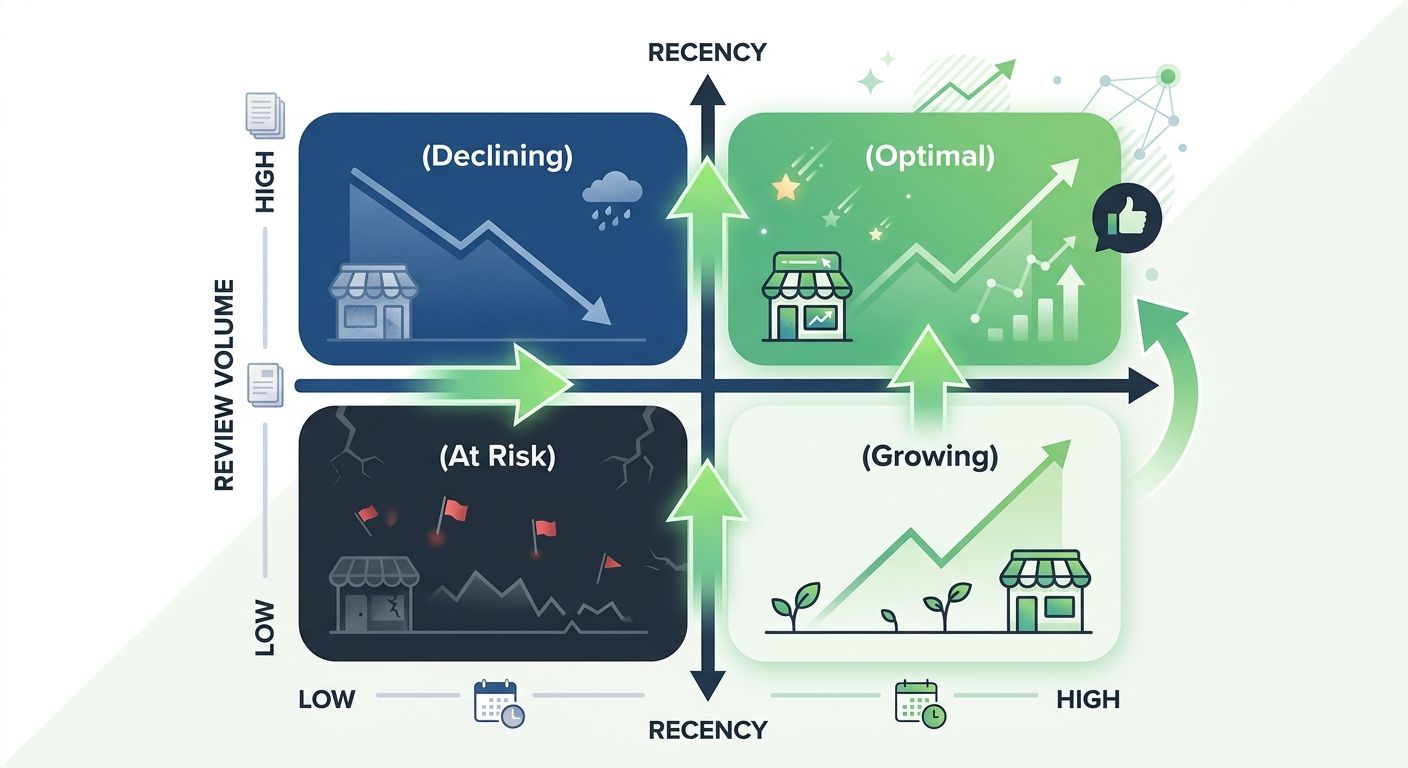

This decay model means that a business generating 4 reviews per month creates a continuously replenishing pool of high-influence signals, while a business that collected 200 reviews two years ago is operating on a dwindling reserve.
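The decay tiers above can be turned into a rough scoring sketch. The weights below are midpoints of the practitioner estimates quoted earlier, with the first 30 days assumed to count at full weight. They are illustrative assumptions, not values Google has published, and `review_weight` and `weighted_review_score` are hypothetical helper names.

```python
from datetime import date, timedelta

# Assumed tier weights (midpoints of the practitioner estimates above).
# Each entry is (maximum age in days, weight). Not published by Google.
DECAY_TIERS = [
    (30, 1.00),   # first 30 days: assumed full influence
    (90, 0.75),   # 30-90 days: roughly 70-80%
    (180, 0.45),  # 90-180 days: roughly 40-50%
]
OLD_REVIEW_WEIGHT = 0.15  # beyond six months: roughly 10-20%


def review_weight(age_days: int) -> float:
    """Return the assumed influence weight for a review of the given age."""
    for max_age, weight in DECAY_TIERS:
        if age_days <= max_age:
            return weight
    return OLD_REVIEW_WEIGHT


def weighted_review_score(review_dates, today):
    """Sum the assumed weights of every review on a profile."""
    return sum(review_weight((today - d).days) for d in review_dates)


today = date(2026, 1, 1)

# 20 reviews, one every 4 days, all inside the last three months.
recent_profile = [today - timedelta(days=4 * i) for i in range(1, 21)]

# 50 reviews, all posted more than six months ago.
stale_profile = [today - timedelta(days=200 + i) for i in range(50)]

recent_score = weighted_review_score(recent_profile, today)
stale_score = weighted_review_score(stale_profile, today)
print(f"recent profile: {recent_score:.2f}")
print(f"stale profile:  {stale_score:.2f}")
```

Under these assumed weights, the 20-review profile scores 16.75 and the 50-review profile scores about 7.5, which matches the direction of the opening comparison. Different weights would move the numbers, so treat the output as a way to reason about the model rather than a prediction of rankings.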

The Velocity Signal: What Google Is Actually Measuring

Review velocity measures the consistency and rate of new review acquisition. This is distinct from both total count and recency of the most recent review. You could have a review from yesterday but zero reviews in the preceding three months — that single fresh review doesn't constitute healthy velocity.
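One way to make this distinction concrete is to track two numbers together: the review rate over a window and the longest silent stretch inside it. The sketch below is a minimal illustration for auditing your own profile; `velocity_summary` and its 90-day default window are assumptions for this example, not a metric Google exposes.

```python
from datetime import date, timedelta


def velocity_summary(review_dates, today, window_days=90):
    """Return (reviews per 30 days, longest gap in days) for the window."""
    start = today - timedelta(days=window_days)
    in_window = sorted(d for d in review_dates if start <= d <= today)
    per_30_days = len(in_window) * 30 / window_days
    # Include the window edges so silence at the start or end counts as a gap.
    points = [start] + in_window + [today]
    longest_gap = max((b - a).days for a, b in zip(points, points[1:]))
    return per_30_days, longest_gap


today = date(2026, 1, 1)

# A single review from yesterday and nothing else in three months.
one_fresh = [today - timedelta(days=1)]

# One review every 10 days across the same window.
steady = [today - timedelta(days=10 * i) for i in range(9)]

print(velocity_summary(one_fresh, today))
print(velocity_summary(steady, today))
```

The single fresh review produces a rate of about 0.33 per 30 days with an 89-day gap, while the steady profile produces 3.0 per 30 days with a longest gap of 10 days. Recency of the latest review is the same in both cases; only the second profile shows velocity.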

Velocity is one of the most misunderstood ranking factors in local search, and it is best read as Google's proxy for business activity. A business receiving steady reviews signals ongoing customer transactions, continued service delivery, and active market participation. A business with a dead review profile, regardless of historical volume, signals potential dormancy.

Among local ranking signals in 2026, review velocity has climbed into the top tier alongside proximity, category relevance, and on-page optimization. Estimates from multiple ranking factor studies place the review cluster (velocity, recency, content, and response) at roughly 9-15% of local pack ranking weight. That's meaningful enough to swing competitive rankings, especially in categories where proximity and category match are similar across competitors.

The velocity signal also interacts with Google's behavioral engagement data. As SBGeeks documented, Google measures how users interact with your GBP listing after finding it in search results — clicks, calls, direction requests, photo views. Fresh reviews improve click-through rates on the listing itself, which feeds a reinforcing loop: better velocity leads to better engagement metrics, which leads to better ranking, which leads to more visibility and more organic reviews.

Why Spikes Fail and Consistency Wins

Here's where agencies and business owners make the most expensive mistakes. The instinct, once someone understands that velocity matters, is to run a burst campaign — blast out review requests to every customer in the database, collect 50 reviews in a week, and call it done. This approach backfires for two reasons.

First, sudden spikes in review activity can trigger Google's spam detection. If your profile has averaged 1-2 reviews per month for two years and suddenly receives 40 in a week, the pattern looks artificial. Google's systems are tuned to distinguish organic review accumulation from manufactured bursts, and getting flagged can result in review removal or, in severe cases, profile suspension. I've written about how Google's spam crackdown has already disrupted bulk optimization tactics, and the review velocity pattern is a direct extension of that enforcement posture.

Second, even if all 50 reviews survive, you've front-loaded your entire velocity signal into a single window. Within 90 days, those reviews start decaying. Within six months, they're contributing minimal ranking value. And your profile goes back to generating 1-2 reviews per month, which is exactly the low-velocity signal you were trying to escape.
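For the first failure mode, a simple self-audit can flag a planned campaign that would look out of pattern against your own history. The sketch below is only a sanity check for planning purposes. It is not Google's spam detection, whose logic is not public, and the `max_multiple` tolerance of 3x is a hypothetical value chosen for illustration.

```python
from statistics import mean


def looks_like_spike(history, latest, max_multiple=3.0):
    """Flag a month whose review count far exceeds the trailing average.

    history: review counts for previous months.
    latest: review count for the month being checked.
    max_multiple: hypothetical tolerance, not a known Google threshold.
    """
    # Floor the baseline at 1 so a profile with little or no history
    # is not flagged for its first handful of reviews.
    baseline = max(mean(history), 1.0) if history else 1.0
    return latest > max_multiple * baseline


# Two years at 1-2 reviews per month, as in the example above.
history = [1, 2] * 12

print(looks_like_spike(history, 40))  # burst campaign -> True
print(looks_like_spike(history, 4))   # gradual ramp -> False
```

A gradual ramp that stays within a small multiple of the trailing average avoids both problems described above: it is less likely to look manufactured, and it raises the baseline that later months are compared against.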

The businesses that win the velocity game are generating 3-8 reviews per month, every month, with no gaps longer than two weeks. The actual number depends on your industry and competitive set. Hashmeta's analysis indicates that review quality shapes ultimate impact alongside rate, so five detailed, keyword-rich reviews per month will outperform fifteen generic "Great service!" reviews.

The Google Review Freshness Factor and Content Depth

The Google review freshness factor goes beyond timestamp alone. Google's natural language processing evaluates the content of each review, and specific, detailed reviews carry more weight in the ranking model than brief or generic ones.

What matters inside the review text:

Service or product mentions: Reviews that name specific services ("replaced our water heater," "handled the estate planning paperwork") help Google associate the business with relevant queries.

Location references: Mentioning neighborhoods, cities, or landmarks strengthens geographic relevance signals.

Outcome descriptions: Reviews describing results ("our energy bill dropped 30%," "got us through closing in 22 days") signal authenticity and quality.

Photo attachments: Reviews with images receive additional weight in display algorithms and tend to stay more prominent in the review feed.

This is where review generation strategy intersects with the broader work of extracting SEO value from your Google reviews. The reviews themselves function as user-generated content, and Google reads them for entity recognition, sentiment analysis, and topical relevance. A review that says "Best dentist in Clearwater, fixed my crown same day" does more ranking work than "5 stars, highly recommend" — even though both contribute to velocity.

Reports from Reddit's local SEO community are consistent with this. One practitioner reported that newer listings outrank older ones when their recent reviews are frequent and detailed, even with far fewer total reviews. But the ranking advantage holds only if momentum continues. A burst of great reviews followed by silence produces the same decay problem as a burst of mediocre ones.

The Competitive Dynamics of Velocity

Review velocity doesn't exist in a vacuum. Your velocity is always measured relative to your competitors in the same geographic and categorical space. This is why rankings shift when competitors push their review velocity — an observation confirmed repeatedly in Reddit's r/localseo community.

If the top three businesses in your local pack are each generating 6-8 reviews per month and you're generating 2, your relative velocity deficit grows every month. The total review gap might seem manageable, but the velocity gap compounds. After six months, they have each added 36-48 reviews inside that window while you have added 12. The algorithm sees three businesses with strong activity signals and one that's falling behind.
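The arithmetic behind that widening gap is simple enough to tabulate month by month. The sketch below uses the rates from the paragraph above (2 per month for you, 6 and 8 per month as the competitor range); `velocity_gap` is a hypothetical helper name.

```python
def velocity_gap(your_rate, competitor_rates, months=6):
    """Return (month, your total, smallest gap, largest gap) per month."""
    rows = []
    for month in range(1, months + 1):
        yours = your_rate * month
        gaps = [rate * month - yours for rate in competitor_rates]
        rows.append((month, yours, min(gaps), max(gaps)))
    return rows


rows = velocity_gap(your_rate=2, competitor_rates=[6, 8])
for month, yours, low, high in rows:
    print(f"month {month}: you {yours}, behind by {low}-{high}")
```

By month six you have added 12 reviews and trail each competitor by 24 to 36 reviews collected in the same window, which is where the 36-48 versus 12 comparison comes from.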

This competitive dimension is what makes review velocity an ongoing operational concern rather than a project you complete. It's similar to content production or link building in that stopping means losing ground, because your competitors don't stop. Any agency managing your local SEO should be tracking competitor review velocity monthly, and if yours isn't, that's a gap worth examining through the lens of whether your agency actually drives measurable results.

Industry benchmarks for healthy monthly review velocity look different depending on your category:

Restaurants and hospitality: 10-15+ reviews per month

Retail: 8-12 reviews per month

Professional services (law, accounting, consulting): 4-8 reviews per month

Healthcare: 3-6 reviews per month

Home services (plumbing, HVAC, electrical): 4-8 reviews per month

These numbers represent the floor for competitive visibility, not aspirational targets. In dense metro markets, the thresholds run higher.
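The benchmarks above can be kept as a small lookup table for monthly reporting. The sketch below is a minimal illustration that copies the ranges listed in this section; the category keys and the `velocity_status` helper are naming assumptions, and the open-ended "15+" for restaurants is stored as an upper bound of 15.

```python
# Monthly review ranges copied from the benchmarks listed above.
BENCHMARKS = {
    "restaurants and hospitality": (10, 15),
    "retail": (8, 12),
    "professional services": (4, 8),
    "healthcare": (3, 6),
    "home services": (4, 8),
}


def velocity_status(category, reviews_per_month):
    """Compare a monthly review rate against the category's benchmark range."""
    low, high = BENCHMARKS[category]
    if reviews_per_month < low:
        return "below range"
    if reviews_per_month > high:
        return "above range"
    return "within range"


print(velocity_status("home services", 2))   # below range
print(velocity_status("healthcare", 4))      # within range
print(velocity_status("retail", 14))         # above range
```

Because dense metro markets run higher than these ranges, a "within range" result is best read as meeting the minimum rather than as a sign that you are ahead of local competitors.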

The Response Layer Most Businesses Ignore

Owner responses to reviews act as a secondary velocity signal. Google's systems register response activity as a sign of active business management, and profiles with consistent response patterns tend to rank better than profiles that leave reviews unanswered.

The data from RevuKit's analysis supports this: a 4.3 rating with 300 reviews and consistent responses will typically outrank a 4.9 rating with 15 reviews and no responses. The response itself creates additional indexable text on the profile, gives Google more entity and keyword signals, and demonstrates the kind of active engagement that correlates with legitimate, high-quality businesses.

Response timing matters too. Responding within 24-48 hours of a review's posting adds to the freshness signal. Responding to a review six months after it was posted does far less. If you're going to build a review response habit, the mechanism rewards speed and consistency, the same way it rewards those qualities in review acquisition itself.
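Response speed and consistency are easy to measure from an export of review and reply timestamps. The sketch below assumes a list of `(posted_at, responded_at)` pairs, with `None` for unanswered reviews; that data shape and the `response_stats` helper are assumptions for illustration, and the 48-hour window comes from the range mentioned above.

```python
from datetime import datetime, timedelta


def response_stats(reviews, fast_window=timedelta(hours=48)):
    """Return (share of reviews answered, share answered within fast_window)."""
    total = len(reviews)
    if total == 0:
        return 0.0, 0.0
    answered = [(posted, replied) for posted, replied in reviews
                if replied is not None]
    fast = [1 for posted, replied in answered
            if replied - posted <= fast_window]
    return len(answered) / total, len(fast) / total


posted = datetime(2026, 1, 5, 9, 0)
sample = [
    (posted, posted + timedelta(hours=5)),    # same-day reply
    (posted, posted + timedelta(hours=40)),   # inside 48 hours
    (posted, posted + timedelta(days=200)),   # answered months later
    (posted, None),                           # never answered
]

print(response_stats(sample))  # (0.75, 0.5)
```

Tracking both shares separately shows whether a profile is merely catching up on old reviews or actually responding while the review is still fresh.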

The Volume Threshold That Still Matters

None of this means total review count is irrelevant. Volume establishes baseline credibility — both for Google's algorithm and for the humans reading your profile. A business with 3 reviews, even if they're all from this week, lacks the social proof to convert clicks into customers.

Practitioner consensus places the credibility threshold at roughly 50-75 reviews. Below that number, each incremental review does meaningful work for both trust and ranking. Sterling Sky's testing showed that the jump from 9 to 10 reviews produced a visible ranking change, suggesting that early volume milestones still carry algorithmic weight.

Beyond 75-100 reviews, though, the marginal ranking value of each additional review drops dramatically unless those reviews are recent. This is the core of the velocity problem: businesses that crossed the 100-review mark years ago often assume they've "won" the review game, when in reality their review profile is depreciating month over month. You can see how this connects to the broader challenge of building a review-to-rankings pipeline that treats reviews as an ongoing signal rather than a completed project.

Where This Model Breaks

The velocity-over-volume model has real limitations, and pretending otherwise would be dishonest.

In low-competition markets, total volume can still dominate. If you're the only plumber within 30 miles with more than 20 reviews, velocity becomes secondary. The algorithm has limited options to rank, and your historical volume plus basic relevance signals will keep you visible regardless of recency patterns. The velocity mechanism shows its teeth primarily in competitive markets with multiple businesses vying for the same local pack positions.

For regulated industries, review generation at the rates described above can be genuinely difficult. Healthcare providers face HIPAA considerations around soliciting patient reviews. Financial advisors operate under compliance frameworks that restrict how they request testimonials. In these sectors, 2-3 reviews per month might represent maximum realistic velocity, and the competitive dynamics adjust accordingly because everyone in the category faces the same constraints.

When fake reviews enter the picture, the model gets distorted. A competitor generating fake reviews at a high velocity will temporarily gain ranking advantages. Google's spam detection catches many of these, but the lag time can be weeks or months. If you see a competitor's ranking spike alongside a suspicious review pattern, Google's spam enforcement mechanisms are the appropriate response channel, but expect delays.

Platform-dependent review ecosystems add noise. For businesses where Yelp, Healthgrades, Avvo, or industry-specific platforms carry significant weight, Google review velocity tells an incomplete story. The algorithm does pull signals from third-party review sites, and a business with strong Yelp velocity but weak Google velocity may still rank well if Google indexes those external reviews as supporting evidence.

The honest takeaway is that review velocity is a powerful and well-documented mechanism within Google's local ranking system, but it operates inside a broader context of proximity, relevance, link signals, and behavioral data. Treating velocity as the only lever to pull will leave you frustrated when a competitor two blocks closer to the searcher outranks you despite weaker review metrics. The mechanism works — and works consistently — but it works within boundaries set by every other signal in the local ranking model.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
