The Review-to-Rankings Pipeline: Building an SEO Strategy Around Customer Feedback Signals

Google's review algorithm evaluates four distinct signal categories when it processes customer feedback: relevance, recency, volume, and sentiment. According to Consumer Fusion's breakdown of the algorithm, reviews that mention specific services or products help Google understand what a business offers and can influence where it appears in search results. The widespread misunderstanding is that reviews are a reputation management problem, something the marketing team handles on its own while SEO works in a separate silo. That's backwards. Customer feedback signals feed directly into local pack placement, rich snippet eligibility, and the AI systems that now generate search overviews. Treating reviews as anything other than a core SEO input means you're ignoring one of the few ranking levers your customers build for you, for free, in their own words.

I've evaluated agencies that spend $8,000 a month on link-building campaigns while their Google Business Profiles sit at 12 reviews with no owner responses since 2023. The ROI math on that prioritization never works out. The review-to-rankings pipeline, when you understand each component, explains why.

How Google Decomposes Review Signals

The pipeline starts at the algorithm level. Google doesn't treat a five-star review and a one-star review as simple positive/negative data points. The system analyzes the text content of each review for keyword relevance, evaluates whether the reviewer has a history of credible activity, checks how recently the review was posted, and weighs it against the velocity of other reviews for the same business.

Whitespark's Local Search Ranking Factors report has consistently shown review signals contributing roughly 17% of local pack rankings. Moz's research estimates that review quantity, diversity, and velocity account for approximately 15% of Google's local pack ranking factors, placing them third behind link signals and proximity. Both figures come from surveys of local search practitioners rather than from Google, so read them as informed estimates. Even so, these aren't soft signals. They're quantified.

What makes this mechanism interesting is the freshness component. A business with 400 reviews but nothing new in eight months will often lose ground to a competitor with 80 reviews that picks up three or four per week. Google interprets review velocity as a proxy for business activity. Stagnation looks like decline.
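To make the velocity comparison concrete, here is a minimal Python sketch that measures reviews per week over a trailing window and the days since the last review. The 90-day window, the function names, and the two sample profiles are my own assumptions for illustration; Google does not publish how it weighs freshness, so treat this as a monitoring metric, not a reconstruction of the algorithm.

```python
from datetime import date, timedelta

def reviews_per_week(review_dates, today, window_days=90):
    """Average number of reviews per week within the trailing window."""
    cutoff = today - timedelta(days=window_days)
    recent = [d for d in review_dates if cutoff < d <= today]
    return len(recent) / (window_days / 7)

def days_since_last_review(review_dates, today):
    """Days elapsed since the newest review, or None if there are no reviews."""
    if not review_dates:
        return None
    return (today - max(review_dates)).days

today = date(2025, 6, 30)
# Hypothetical profiles: one large but stale, one small but active.
stale = [date(2024, 10, 1) - timedelta(days=i) for i in range(400)]
active = [today - timedelta(days=2 * i) for i in range(80)]

for label, dates in (("stale", stale), ("active", active)):
    print(label, len(dates),
          round(reviews_per_week(dates, today), 2),
          days_since_last_review(dates, today))
```

Tracked weekly, these two numbers show stagnation long before the total review count does.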

The practical implication: any SEO strategy that treats review acquisition as a one-time project rather than an ongoing pipeline is structurally flawed. You need systems that produce steady, authentic feedback on a weekly or monthly cadence. This is where the "pipeline" framing becomes useful, because each downstream component depends on the one before it.

The Translation Layer Between Reviews and Rich Results

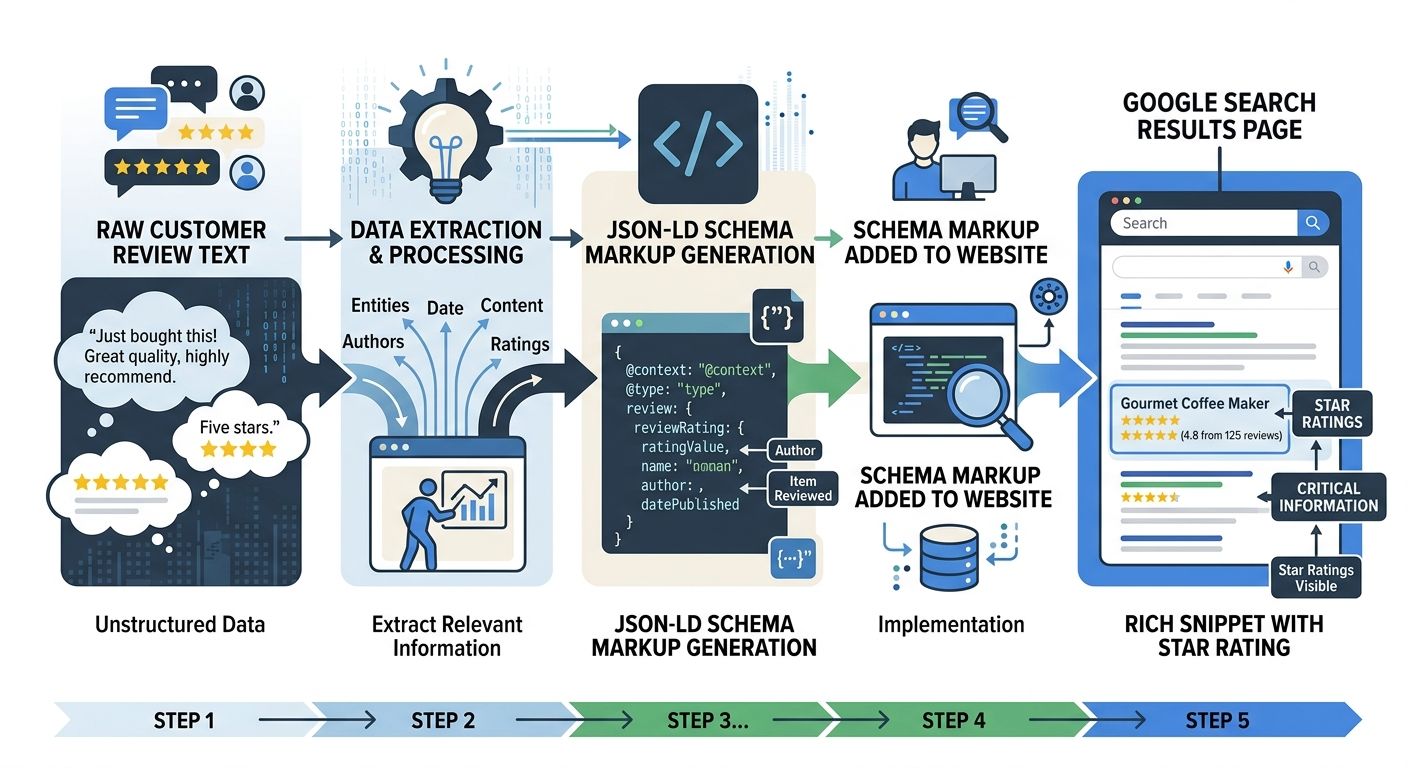

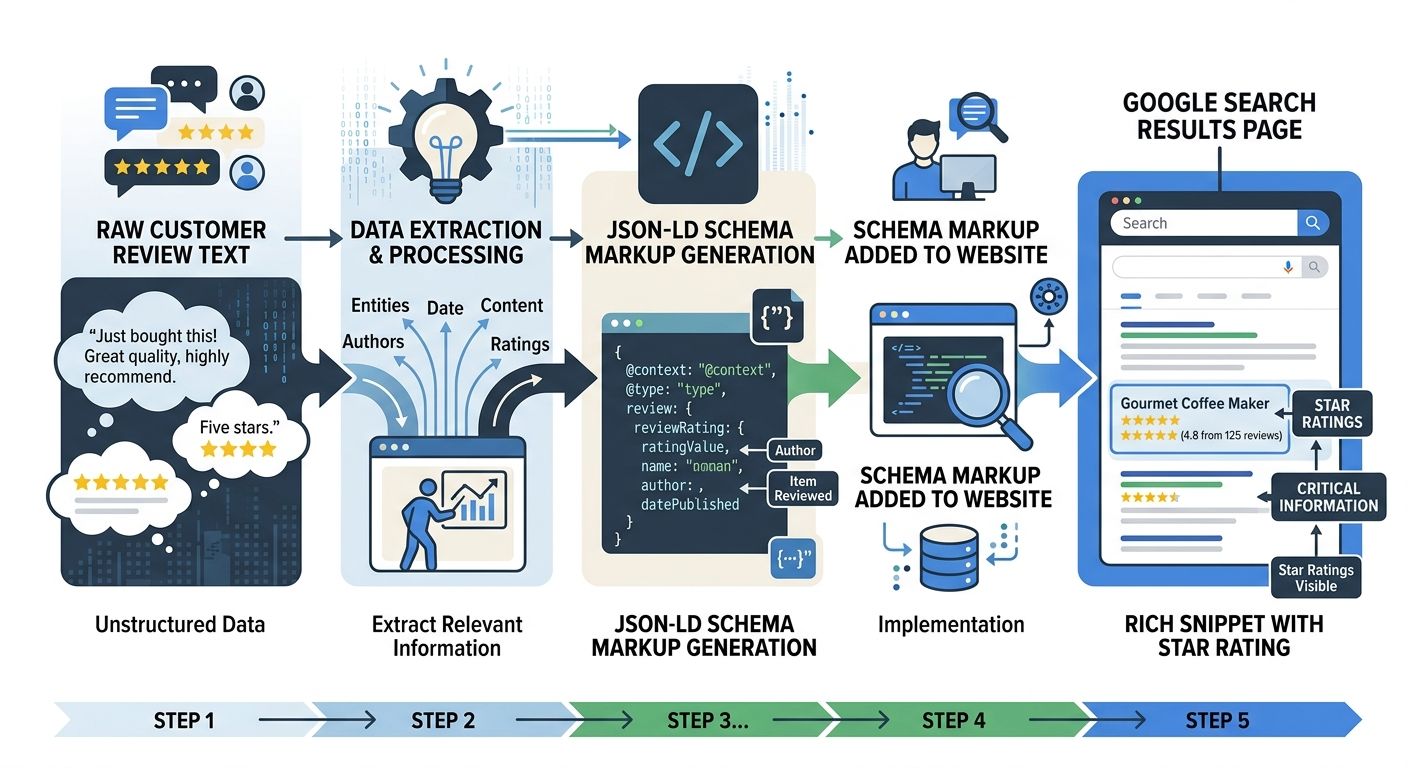

Here's where review schema markup enters the picture. Raw reviews sitting on your Google Business Profile or product pages do contribute to rankings, but structured data is what makes those reviews visible in search results as rich snippets with star ratings, review counts, and reviewer names.

A Digital Strike analysis of schema's impact on SEO explains that rich results pull their visual elements directly from the structured data behind a webpage. When you implement JSON-LD markup that defines your aggregate rating, review count, and individual review objects, you're giving Google a machine-readable translation of what your customers said.
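As a rough illustration of that machine-readable translation, the Python sketch below builds a schema.org object with an aggregate rating and individual review objects, then wraps it in a JSON-LD script tag. The product name, the review data, and the helper names are hypothetical. I've used the `Product` type because, as far as I know, Google does not show star snippets for reviews a business publishes about itself under `LocalBusiness` or `Organization`; validate any real markup with Google's Rich Results Test before relying on it.

```python
import json

def build_review_jsonld(name, reviews):
    """Build a schema.org Product with AggregateRating and Review items.

    `reviews` is a list of dicts with hypothetical keys:
    author, rating, body, date (ISO 8601 string).
    """
    ratings = [r["rating"] for r in reviews]
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": round(sum(ratings) / len(ratings), 1),
            "reviewCount": len(ratings),
        },
        "review": [
            {
                "@type": "Review",
                "author": {"@type": "Person", "name": r["author"]},
                "datePublished": r["date"],
                "reviewRating": {"@type": "Rating", "ratingValue": r["rating"]},
                "reviewBody": r["body"],
            }
            for r in reviews
        ],
    }

def to_script_tag(data):
    """Serialize the object into a JSON-LD script element."""
    # Escape "<" so review text cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'

# Hypothetical sample data for illustration only.
reviews = [
    {"author": "Dana R.", "rating": 5, "date": "2025-05-10",
     "body": "Pump has kept the basement dry through two storms."},
    {"author": "Luis M.", "rating": 4, "date": "2025-06-02",
     "body": "Easy install, a little louder than expected."},
]
data = build_review_jsonld("Example Sump Pump", reviews)
tag = to_script_tag(data)
print(tag)
```

The markup should only describe reviews that are actually visible on the same page; marking up ratings a visitor cannot see is a common reason rich results get withheld.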

The CTR impact is significant. Studies consistently show that listings with star ratings in SERPs see click-through rate improvements of 20-35% compared to plain blue links. And here's the nuance that matters: structured data has no confirmed direct impact on ranking position. But the CTR lift from rich results sends behavioral signals back to Google, and those behavioral signals do influence rankings over time. Webstix's research suggests that click-through rate improvements from schema may take one to three months to materialize as search engines gather interaction data.

This means the translation layer works on a delay. You implement schema, Google validates it, rich snippets appear, users click at higher rates, and eventually those engagement patterns feed back into ranking calculations. It's a feedback loop, and the businesses that set it up first in their vertical accumulate compounding advantages.

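Google's own Reviews System documentation confirms that the system assesses content at the page level, can evaluate entire sites with substantial review content, and uses product structured data to identify reviews. That system is aimed at first-party review content (articles and posts that evaluate something), not at third-party customer reviews left on a product or service page, so treat it as an adjacent signal rather than a description of how customer feedback is scored. Sites affected by it recover through content improvements over time, and the automated assessment is only one factor among many.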

Review Distribution Across the Local Citation Pipeline

The third component of the mechanism is distribution. Reviews concentrated entirely on a single platform create a fragile signal profile. The local citation pipeline works by spreading your business's review footprint across multiple authoritative directories and platforms, each of which reinforces your relevance to search engines.

Uberall's analysis of local SEO citations highlights that citations optimized with natural language and long-tail keywords improve voice search visibility. Customer reviews and ratings on citation sources directly influence both consumer decisions and search engine rankings. The connection between citations and reviews is tighter than most businesses realize.

Ciderhouse Media's local citation guide puts it plainly: a clean listing plus a steady flow of real reviews signals activity, relevance in local search results, and trust. They recommend asking satisfied customers to leave feedback on the same citation sources where you're listed, including your Google profile, Yelp, and industry-specific directories.

This is the part where I see agencies fail most often. They'll manage a client's Google Business Profile reviews while completely ignoring Yelp, industry-specific platforms, and the dozens of smaller citation sources that feed local search algorithms. I worked with a home services company whose agency was charging $2,500/month for "local SEO management" but hadn't touched their Yelp profile, Angi listing, or BBB page in over a year. Their local pack visibility had been declining for six months, and the agency's explanation was "algorithm changes." The actual problem was citation rot combined with review concentration.

For businesses in specialized industries, the citation sources that matter are often vertical-specific. A medical practice needs reviews on Healthgrades and Zocdoc. An architecture firm needs them on Houzz. If you're working with niche-specific SEO strategies for firms like architects, the review distribution map looks completely different from a generalist local business.

Why Review Aggregation Compounds Ranking Signals

Review aggregation helps rankings because it solves two problems simultaneously: it gives search engines a consolidated view of your reputation across platforms, and it gives your website fresh, keyword-rich user-generated content that would be impossible to produce through traditional content marketing.

Birdeye's analysis of review aggregators describes them as tools that provide fresh user-generated content to boost search engine rankings, particularly for local businesses. Elfsight's review of aggregation platforms positions them as local SEO tools for improving business visibility, especially for businesses prioritizing localized customer interactions.

The mechanism here is straightforward. When you aggregate reviews from Google, Yelp, Facebook, and industry directories onto your own website, you're creating pages that naturally contain the exact language your customers use to describe your services. Those phrases are almost always long-tail keywords that your content team would never think to target. A customer writing "they fixed my leaking basement drain on a Saturday" creates a piece of content that matches the search query "basement drain repair weekend service" in ways that professionally written copy rarely does.
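One way to see which customer phrases recur is to count short word sequences across your review text. The sketch below is a deliberately simple Python example: the stopword list, the function name, and the sample reviews are assumptions of mine, and because stopwords are removed before pairing, some phrases join words that were not strictly adjacent in the original sentence.

```python
import re
from collections import Counter

# A tiny, hypothetical stopword list; a real one would be much longer.
STOPWORDS = {"a", "an", "and", "the", "my", "on", "they", "to", "was",
             "in", "of", "our", "for", "at", "it", "is"}

def top_phrases(reviews, n=2, limit=5):
    """Count n-word phrases across review texts after dropping stopwords."""
    counts = Counter()
    for text in reviews:
        words = [w for w in re.findall(r"[a-z']+", text.lower())
                 if w not in STOPWORDS]
        counts.update(" ".join(words[i:i + n])
                      for i in range(len(words) - n + 1))
    return counts.most_common(limit)

# Hypothetical review snippets for illustration.
sample_reviews = [
    "They fixed my leaking basement drain on a Saturday",
    "Basement drain was clogged and they came on a Sunday",
    "Quick basement drain repair",
]
print(top_phrases(sample_reviews))
```

The phrases at the top of that list are candidates for service-page headings and FAQ copy, since they reflect how customers describe the work.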

The technical implementation matters here. Reviews displayed through JavaScript-heavy widgets that search engine crawlers can't parse won't contribute to your organic visibility. Reddit's SEO communities have discussed this extensively: crawlable, text-rich reviews displayed directly on product or service pages perform better than reviews hidden behind tabs, iframes, or dynamically loaded modules. If you're building a custom agency stack without vendor lock-in, the review aggregation component needs to prioritize server-side rendering or static HTML output.
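A minimal sketch of the static-output approach, in Python: render the aggregated reviews into plain HTML at build or request time so the review text is in the page source rather than injected by a client-side widget. The field names (`author`, `rating`, `source`, `body`) and the sample data are hypothetical, and how you fetch reviews from each platform is out of scope here.

```python
from html import escape

def render_reviews_html(reviews):
    """Return a static HTML fragment with review text in the markup itself."""
    items = []
    for r in reviews:
        items.append(
            "<li>"
            f"<blockquote>{escape(r['body'])}</blockquote>"
            f"<p>{escape(r['author'])}, {int(r['rating'])}/5 "
            f"via {escape(r['source'])}</p>"
            "</li>"
        )
    return '<ul class="reviews">\n' + "\n".join(items) + "\n</ul>"

# Hypothetical aggregated reviews for illustration.
aggregated = [
    {"author": "Dana R.", "rating": 5, "source": "Google",
     "body": "They fixed my leaking basement drain on a Saturday <3"},
    {"author": "Luis M.", "rating": 4, "source": "Yelp",
     "body": "Showed up on time and explained the repair."},
]
fragment = render_reviews_html(aggregated)
print(fragment)
```

Escaping the review text matters because it is user-generated; the fragment can then be dropped into a server-rendered template or a static site build.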

The pricing landscape for aggregation tools varies widely. Entry-level platforms like Elfsight start around $5-10/month for basic widget embedding. Mid-tier solutions like Birdeye or Podium run $300-500/month and include multi-platform monitoring, response management, and schema markup automation. Enterprise-grade platforms can exceed $1,000/month. The ROI calculation should factor in both the direct SEO value and the time savings from centralized reputation management.

The Response Loop That Algorithms Actually Measure

The fifth component is engagement, specifically whether and how you respond to reviews. Research from Mobal clarifies that review replies aren't a direct ranking factor in the way that backlinks or on-page optimization are. But they create measurable secondary effects: they encourage more customers to leave reviews (which does affect rankings), they signal to Google that your profile is active and worth ranking higher, and they build trust signals that influence user behavior.

BreakingAC's coverage of AI-generated search responses adds an important dimension: AI systems notice engagement patterns. Regular responses signal an active, customer-focused business. This matters because AI Overviews and generative search features are increasingly drawing from review content and business engagement patterns when they construct answers. If you're thinking about how AI-generated results affect your visibility strategy, the response loop is one of the inputs those systems evaluate.

The mechanical explanation for why responses matter is that they create additional text content associated with your business listing. When you respond to a review mentioning "emergency plumbing repair," your response that references your 24/7 availability and licensed technicians adds keyword-relevant content to your profile. Google processes both the review and the response as content signals.

I've seen agencies charge $500-800/month for review response management as a standalone service. The good ones use templates adapted to each review's sentiment and content, maintaining brand voice while incorporating relevant service keywords. The bad ones paste the same "Thank you for your kind words!" response on every five-star review, which adds no SEO value whatsoever and looks transparently automated to both customers and search engines.

Where The Model Breaks

The review-to-rankings pipeline has real limitations, and any honest analysis needs to acknowledge them.

First, you can't control what customers say. Unlike content marketing, where you choose every word, customer feedback signals are generated by people with their own vocabulary, priorities, and emotional states. A steady stream of reviews mentioning the wrong service category, or repeatedly flagging a problem you've already fixed, can create misleading signals that are difficult to correct without suppressing authentic feedback.

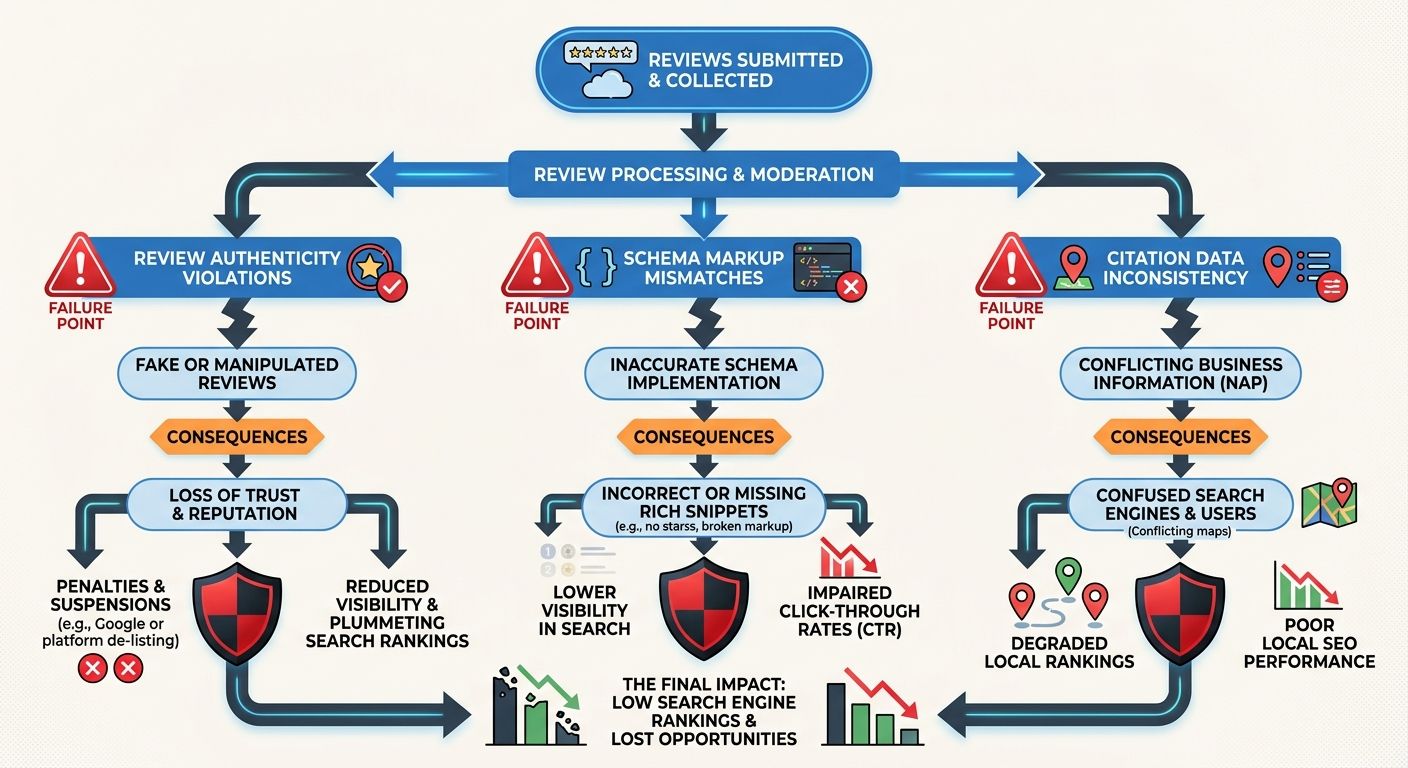

Second, review schema markup only works when your reviews are genuine. Google's guidelines explicitly prohibit incentivized reviews, and their detection systems have improved considerably. Businesses caught soliciting fake reviews face removal of all reviews from their listing, suspension of their Google Business Profile, and in some cases, manual penalties that affect organic search visibility across the board. I've seen businesses lose years of legitimate review accumulation because an overzealous marketing intern offered gift cards for five-star ratings.

Third, the local citation pipeline requires ongoing maintenance that many businesses underestimate. Business information changes (new phone numbers, updated hours, additional service areas) need to propagate across every citation source. Inconsistent NAP (name, address, phone) data across platforms doesn't just confuse customers; it dilutes the trust signals that search engines derive from citation consistency. We covered the ways algorithm changes have shifted ranking factors over time, and citation consistency has only become more important as Google cross-references more data sources.
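A basic NAP audit is easy to script. The Python sketch below normalizes each listing's name, address, and phone, then flags sources that disagree with a reference listing. The listings are made up, the phone handling assumes 10-digit US numbers, and the normalization is intentionally shallow: it ignores case, punctuation, and spacing, but it does not treat "St" and "Street" as equal.

```python
import re

def normalize_nap(name, address, phone):
    """Reduce a listing to a comparable (name, address, phone) tuple."""
    def clean(text):
        text = re.sub(r"[^\w\s]", "", text.lower())  # drop punctuation
        return re.sub(r"\s+", " ", text).strip()     # collapse whitespace
    digits = re.sub(r"\D", "", phone)[-10:]          # assumes 10-digit US numbers
    return clean(name), clean(address), digits

def find_inconsistent(listings, reference):
    """Return sources whose normalized NAP differs from the reference source."""
    expected = normalize_nap(*listings[reference])
    return sorted(
        source for source, nap in listings.items()
        if normalize_nap(*nap) != expected
    )

# Hypothetical listings for illustration.
listings = {
    "google": ("Acme Plumbing", "12 Main St., Springfield", "(555) 010-0199"),
    "yelp":   ("ACME Plumbing", "12 Main St Springfield", "555-010-0199"),
    "bbb":    ("Acme Plumbing", "12 Main Street, Springfield", "555 010 0142"),
}
print(find_inconsistent(listings, "google"))  # ['bbb']
```

Run against an export of your citation sources, a check like this turns "citation rot" from a vague worry into a list of specific listings to fix.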

Fourth, review aggregation creates a dependency on third-party platforms that can change their terms, APIs, or pricing at any time. Yelp has historically been aggressive about restricting how businesses display Yelp reviews on external sites. Google's Terms of Service for review display have their own constraints. Building your SEO strategy around aggregated reviews means accepting platform risk that you wouldn't face with owned content.

And fifth, the entire model assumes a business actually delivers good service. No amount of schema markup, citation optimization, or aggregation tooling will overcome a fundamentally poor customer experience. The pipeline amplifies reality. For businesses that deliver well, it creates a compounding SEO advantage. For businesses that don't, it accelerates the visibility of their problems.

The honest assessment is that this pipeline works best for established businesses with genuine customer satisfaction, the technical infrastructure to implement and maintain structured data, and the organizational discipline to respond to feedback consistently over months and years. It's a slow-build system. Anyone promising you dramatic ranking improvements from review optimization in 30 days is selling something that the underlying mechanism can't deliver.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
