When SEO Tools Fail to Scale: Diagnosing Platform Breakdowns Before Your Client Campaign Collapses

Every SEO platform I've evaluated in the past twelve years performs beautifully in the demo. The keyword tracking refreshes in real time, the crawl reports render without a hitch, and the dashboards look like they were designed by someone who actually respects your retinas. Then the agency signs its eleventh or twelfth client, assigns a junior strategist to manage three accounts simultaneously, and the entire apparatus begins to groan under the weight of what it was supposedly built to handle. I've watched this pattern unfold at agencies billing $8,000 per month per client and agencies billing $800. The breaking point is remarkably consistent, and it's almost never where the agency expects it to be.

The conversation about SEO tool scalability tends to focus on features: can this platform track 50,000 keywords, does it support multi-location rank tracking, will it integrate with our CRM? Those are reasonable questions, but they're the wrong ones to ask first. The tools themselves are rarely the root cause of failure. The failure lives in the gap between what a platform can technically do and how an agency actually uses it when managing seven, twelve, or twenty concurrent campaigns. That gap widens fastest when nobody is watching.

The Twelve-Client Ceiling and What It Reveals

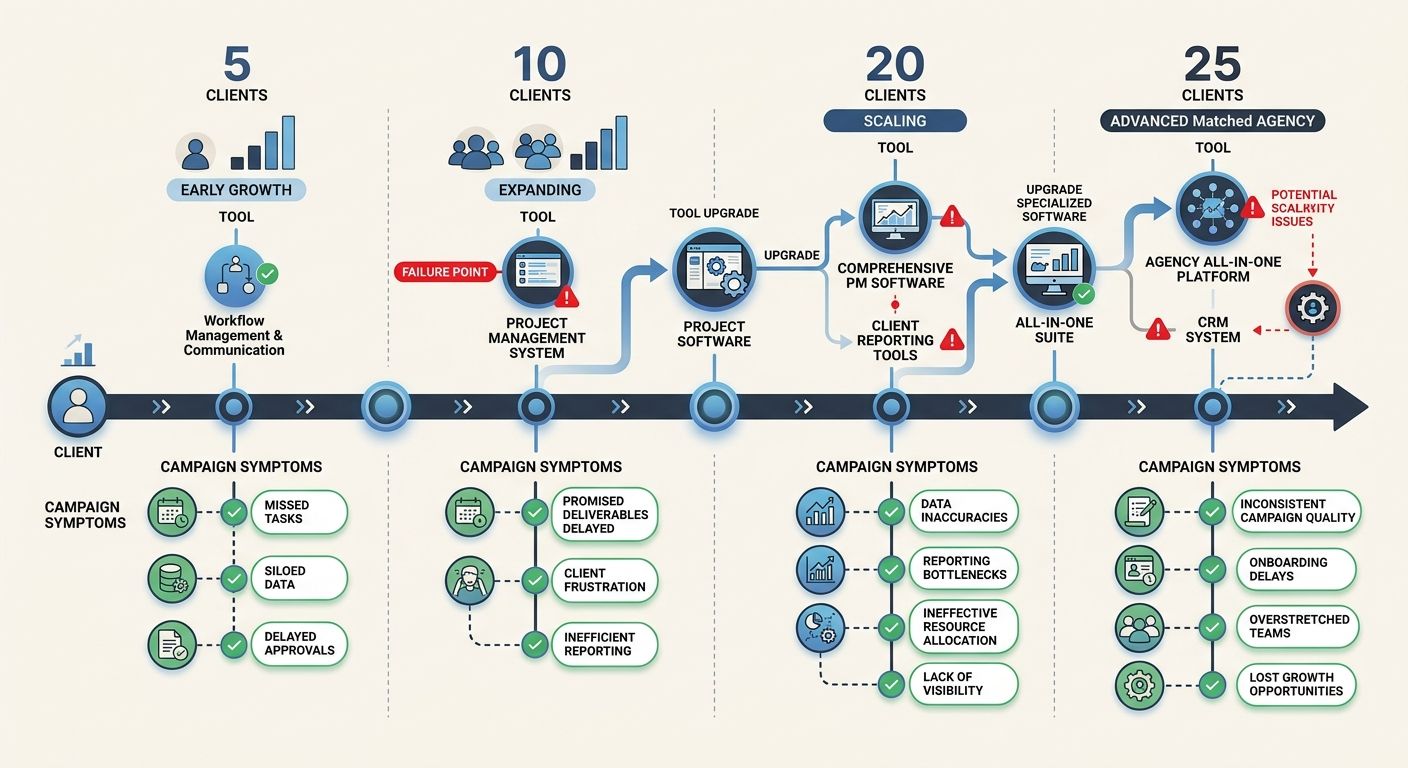

There's a thread on Reddit's r/agency forum where an SEO operator describes hitting a wall at twelve clients, charging $500 per audit and $1,000 per month for content and ongoing technical work. The specifics of that person's situation are less interesting than how precisely they mirror what I see during agency evaluations. Twelve clients is roughly the threshold where manual workarounds stop working. Before that number, a competent operator can export CSVs from Google Search Console, paste them into a spreadsheet, cross-reference rank tracking data from a separate tool, and assemble something resembling a coherent report. The work is tedious but survivable. Beyond that number, those manual processes compound into hours of non-billable labor each week, and accuracy starts to decay without anyone noticing.

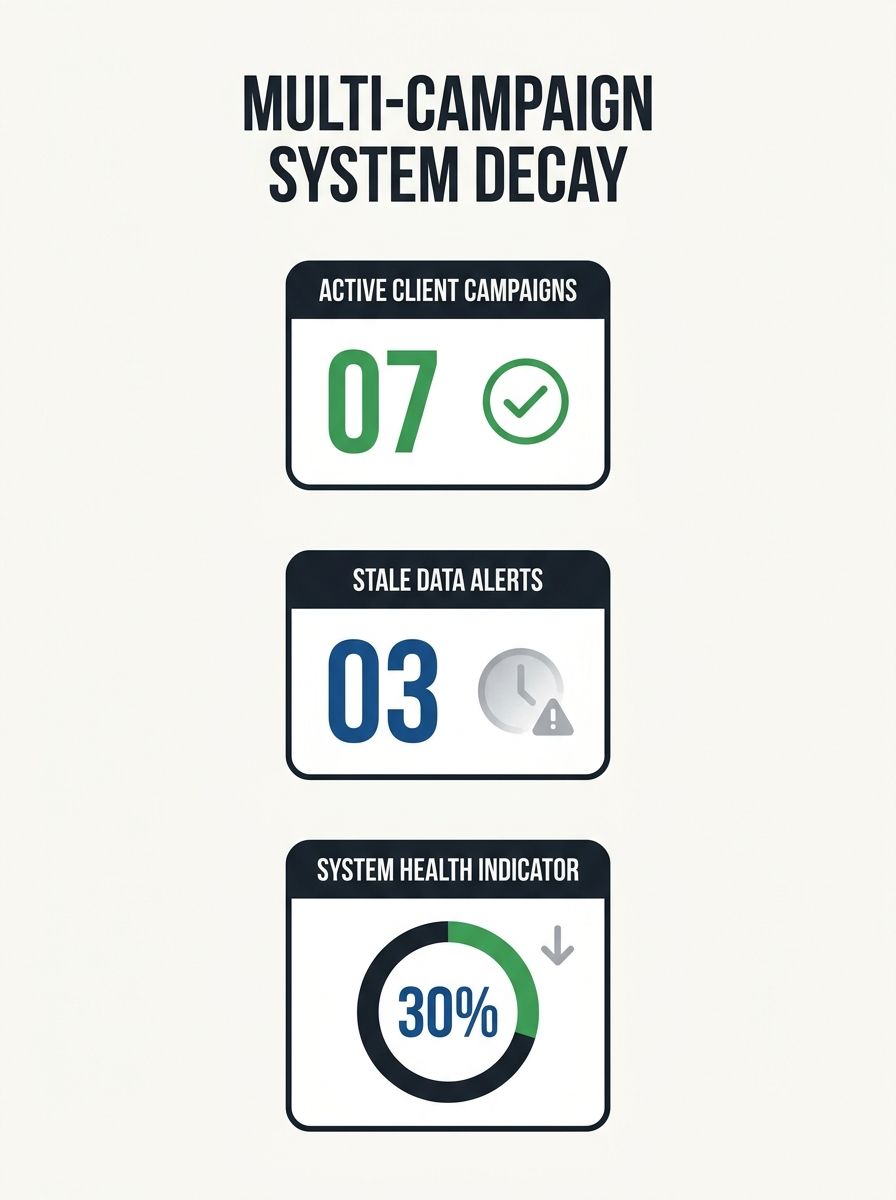

The decay is the dangerous part. When I audit agencies that have stalled at the 10-to-15 client range, I almost always find that their reporting has drifted. One client's keyword set hasn't been updated since onboarding. Another client's crawl schedule quietly lapsed because the tool's crawl budget was consumed by a larger account. A third client's content performance is being measured against the wrong baseline because someone duplicated a dashboard template and forgot to change the date filter. None of these errors produce a dramatic failure on any given Tuesday. They accumulate silently until a quarterly business review exposes a campaign that's been effectively unmanaged for weeks.

This is where the concept of agency platform limits becomes concrete. The limit is seldom a hard technical constraint like "this tool only supports X number of projects." The limit is operational: how many concurrent campaigns can this tool support before its users start making unforced errors? Terakeet's enterprise SEO research makes this point clearly, noting that sites with more than 6,000 pages require scalability built into the SEO program itself, not bolted on after problems emerge. The same principle applies to agencies managing multiple client sites. If your workflow depends on a person remembering to check a thing, your workflow will fail at scale. If your platform doesn't automate the remembering, your platform is the bottleneck.

Where the Stack Fractures Under Multi-Campaign Load

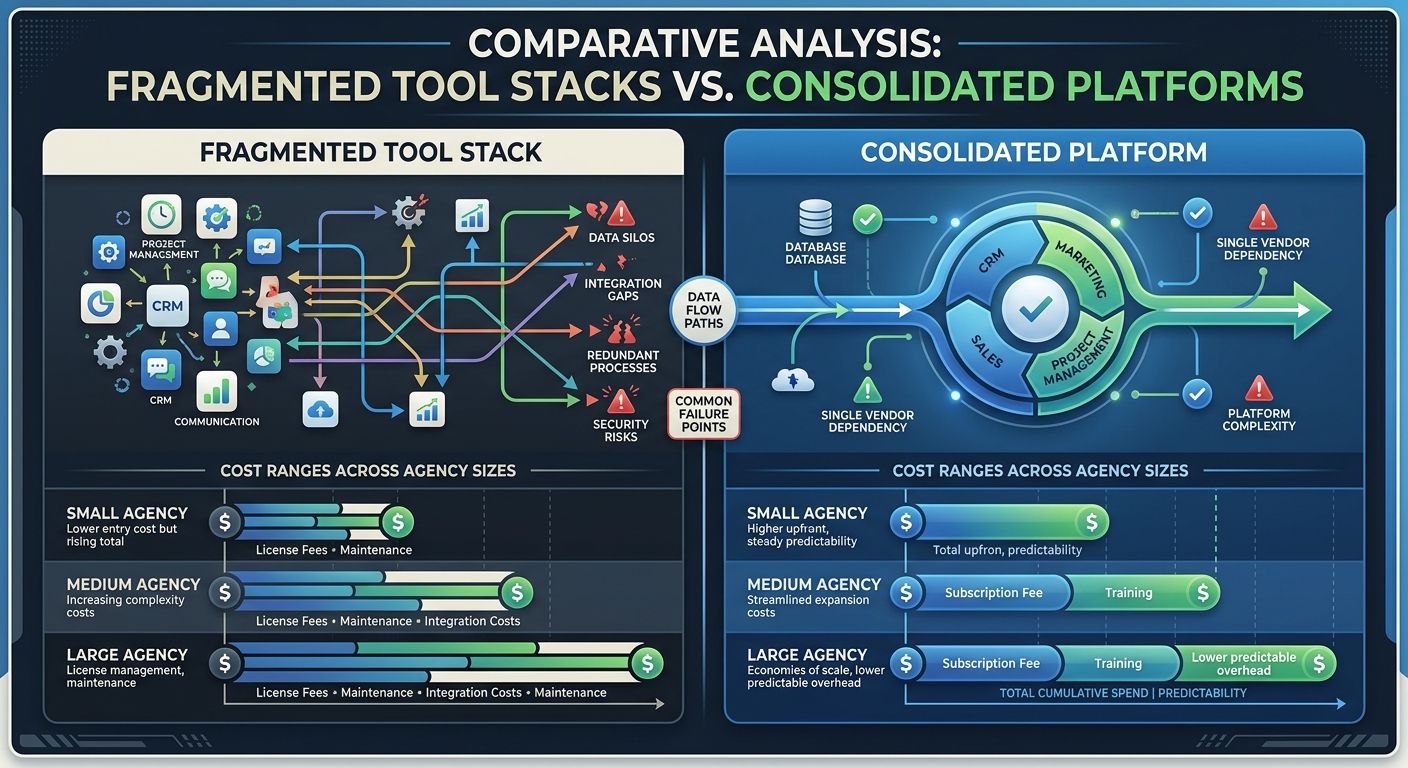

I've evaluated over 200 agencies, and the ones that struggle most with multi-campaign management share a structural flaw I think of as "tool archipelago." They've assembled a collection of best-in-class point solutions: Ahrefs for backlink analysis, Screaming Frog for crawling, Surfer for content optimization, Google Looker Studio for dashboards, Asana or Monday for task management. Each tool is excellent at its job. The problem is that no single system holds the authoritative version of truth about any given client. Growth Rocket's analysis of structural failure points at scaling agencies identifies this pattern precisely: channel-specific reporting with no unified view produces a fragmented picture for both account leads and clients.

The fragmentation matters because SEO decisions are rarely made within a single tool's domain. When you notice a ranking drop, you need crawl data, backlink data, content change history, Core Web Vitals metrics, and sometimes CRM data showing whether the traffic that did arrive actually converted. If those data sources live in six different platforms, the debugging process becomes an archaeological exercise. I've seen agencies spend three days diagnosing a traffic decline that a connected stack would have surfaced in thirty minutes. When you're billing $1,000 a month, three days of diagnostic work represents a devastating margin hit, and the client doesn't see the effort, only the delayed response. If you're curious about how implementation friction derails enterprise SEO strategies, the dynamics are strikingly similar at the agency level.
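To put rough numbers on that margin hit, here is a minimal sketch. The eight-hour working day and the $60 loaded hourly cost are assumptions chosen for illustration, not figures from any particular agency; substitute your own.

```python
def diagnostic_cost(hours_spent, loaded_hourly_cost=60.0):
    """Labor cost of a diagnosis. The default hourly cost is an assumed placeholder."""
    return hours_spent * loaded_hourly_cost


def share_of_retainer(cost, monthly_retainer):
    """Fraction of one month's retainer consumed by that cost."""
    return cost / monthly_retainer


RETAINER = 1000.0      # monthly retainer from the example above
HOURS_PER_DAY = 8.0    # assumption: one working day is eight hours

fragmented = diagnostic_cost(3 * HOURS_PER_DAY)  # three days in a fragmented stack
connected = diagnostic_cost(0.5)                 # thirty minutes in a connected stack

for label, cost in [("fragmented", fragmented), ("connected", connected)]:
    share = share_of_retainer(cost, RETAINER)
    print(f"{label}: ${cost:,.0f} of labor, {share:.0%} of the retainer")
```

Under those assumptions, the three-day diagnosis costs $1,440 in labor, more than the entire month's retainer, while the thirty-minute version costs $30.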

The alternative, of course, is a consolidated enterprise platform. Tools like BrightEdge, Conductor, seoClarity, and Oncrawl attempt to bring crawling, rank tracking, content analysis, and reporting into a single environment. According to Riff Analytics' 2026 enterprise SEO tools review, platforms like Oncrawl now allow teams to build custom SEO models and connect crawl data with other business metrics, with pricing tailored to crawl volume and data needs. But these platforms come with their own scaling risks. Enterprise SEO platforms typically price by crawl volume, tracked keywords, or user seats. An agency managing fifteen mid-market clients might find itself paying $2,500 to $5,000 per month for a platform tier that adequately covers all accounts, and that cost needs to be absorbed across the client base or passed through explicitly. The agencies I review that handle this well are transparent about platform costs in their proposals. The ones that handle it poorly try to run enterprise workloads on starter-tier plans and then wonder why their crawl data is three weeks stale.
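One way to make that cost explicit in a proposal is to allocate the platform bill per client. The sketch below shows an even split for the price range above, plus a split weighted by crawled pages, on the assumption that crawl volume is what drives your tier. The client names and page counts are hypothetical.

```python
def allocate_platform_cost(monthly_cost, crawled_pages_by_client):
    """Split a platform bill across clients in proportion to crawled pages."""
    total_pages = sum(crawled_pages_by_client.values())
    if total_pages <= 0:
        raise ValueError("at least one client must have crawled pages")
    return {
        client: monthly_cost * pages / total_pages
        for client, pages in crawled_pages_by_client.items()
    }


# Even split across fifteen clients for the price range quoted above.
for bill in (2500, 5000):
    print(f"${bill}/month over 15 clients: ${bill / 15:.2f} per client")

# Weighted split. These client names and page counts are made up.
shares = allocate_platform_cost(
    2500, {"client_a": 15000, "client_b": 2000, "client_c": 8000}
)
for client, cost in shares.items():
    print(f"{client}: ${cost:.2f}")
```

An even split works out to roughly $167 to $333 per client per month. The weighted version makes it visible when one large account is consuming most of the plan.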

There's a middle path that I've seen work at agencies in the 15-to-40 client range, which involves picking two or three core platforms and investing heavily in the integrations between them. The critical integration is almost always between the SEO platform and the project management system. When a crawl surfaces a batch of broken internal links, that finding needs to become a task assigned to a specific person with a deadline, without requiring someone to manually copy and paste the URLs into a separate system. Agencies that demand structured pilot tests before signing with a platform vendor are the ones most likely to discover integration gaps before they've committed to an annual contract.
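The shape of that integration is simple enough to sketch. The example below does not call any real SEO platform or project management API; `CrawlFinding`, `Task`, and `findings_to_tasks` are hypothetical names that stand in for whatever your crawler exports and whatever your task system accepts.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class CrawlFinding:
    client: str
    issue: str  # e.g. "broken_internal_link"
    url: str


@dataclass
class Task:
    title: str
    assignee: str
    due: date
    urls: list


def findings_to_tasks(findings, owner_by_client, today, days_to_fix=7):
    """Group findings by (client, issue) and emit one assigned, dated task per group."""
    grouped = defaultdict(list)
    for finding in findings:
        grouped[(finding.client, finding.issue)].append(finding.url)

    tasks = []
    for (client, issue), urls in sorted(grouped.items()):
        tasks.append(Task(
            title=f"[{client}] {issue}: {len(urls)} URL(s)",
            # Fall back to a visible placeholder so nothing is silently unowned.
            assignee=owner_by_client.get(client, "UNASSIGNED"),
            due=today + timedelta(days=days_to_fix),
            urls=sorted(urls),
        ))
    return tasks


findings = [
    CrawlFinding("acme", "broken_internal_link", "https://example.com/b"),
    CrawlFinding("acme", "broken_internal_link", "https://example.com/a"),
    CrawlFinding("globex", "missing_canonical", "https://example.org/x"),
]
tasks = findings_to_tasks(findings, {"acme": "dana"}, today=date(2026, 1, 5))
for task in tasks:
    print(task.title, "->", task.assignee, "due", task.due.isoformat())
```

Batching by client and issue type keeps a crawl with hundreds of broken links from producing hundreds of tasks, and the explicit `UNASSIGNED` fallback surfaces accounts that have no owner instead of dropping their findings.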

SEO Software Reliability Testing Before the Contract Signature

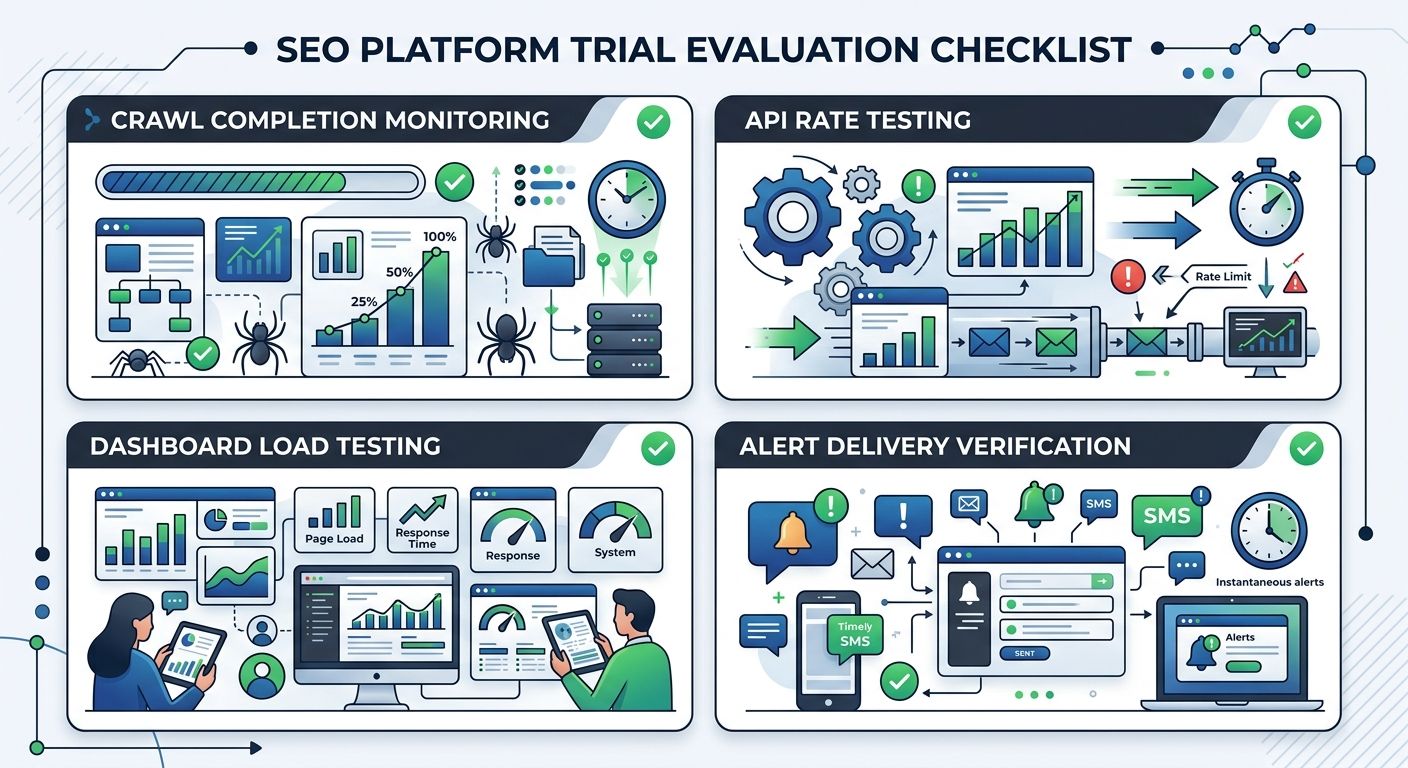

The phrase "SEO software reliability testing" sounds like something an enterprise procurement team would care about, and it should be something every agency cares about too. I recommend a specific evaluation protocol whenever I'm advising an agency on platform selection, and it's grounded in a principle that should be obvious but frequently gets ignored: test the tool at your projected scale, not your current scale.

Here's what that looks like in practice. If you're managing eight clients and evaluating a platform to support growth to twenty, don't run your trial with data from one client. Load the trial instance with data from all eight existing clients, configure the crawl schedules, keyword sets, and reporting dashboards to match your real workflows, and then evaluate the platform's performance under that load. Pay attention to crawl completion times, dashboard rendering speed, API rate limits if you're pulling data into external systems, and alert reliability. Most platform trials run for 14 to 30 days, which is enough time to observe whether automated crawls complete on schedule or silently fail when competing for resources with other accounts.

Growth Rocket's research on cross-functional alignment at scaling agencies makes a point I keep returning to in my evaluations: most agency dashboard failures are operational, not technical. The tools rarely crash in a dramatic way. They degrade quietly. A report that took four seconds to generate with three clients takes forty-five seconds with twelve. An API endpoint that comfortably handled 500 requests per day starts throttling at 1,200. A crawl that completed overnight for a 2,000-page site now takes three days for a 15,000-page site and overlaps with the next scheduled crawl. These degradations don't trigger error messages. They just make your team slower, less accurate, and more likely to skip steps they used to complete reliably.
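Because these degradations never raise an error, they have to be measured against a baseline. A minimal sketch follows, assuming you record timings yourself during a trial. The 2x slowdown threshold and the 24-hour crawl schedule are assumptions for illustration, not vendor guidance.

```python
def slowdown(baseline, current):
    """How many times slower the current measurement is than the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return current / baseline


def flag_degradations(metrics, max_slowdown=2.0):
    """Return the names of metrics that slowed down by more than max_slowdown."""
    return sorted(
        name
        for name, (baseline, current) in metrics.items()
        if slowdown(baseline, current) > max_slowdown
    )


def crawl_overlaps_next_run(crawl_duration_hours, schedule_interval_hours):
    """True when a crawl is still running at the time the next one is due."""
    return crawl_duration_hours >= schedule_interval_hours


# Baseline and current timings in seconds; the report figures are from the text above.
metrics = {
    "report_generation_s": (4, 45),
    "dashboard_load_s": (2.0, 2.5),  # hypothetical metric that stayed healthy
}
print(flag_degradations(metrics))

# A three-day crawl against an assumed daily schedule.
print(crawl_overlaps_next_run(crawl_duration_hours=72, schedule_interval_hours=24))
```

The report that went from four to forty-five seconds is more than eleven times slower, which any reasonable threshold would catch, provided someone wrote down the four seconds in the first place.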

The testing mindset should extend beyond the initial platform selection. I advise agencies to schedule quarterly tool audits, which don't need to be elaborate. Spend two hours checking whether crawl schedules are still running as configured, whether keyword tracking volumes match what was specified at setup, whether report templates still pull from the correct data sources, and whether any API integrations have broken due to platform updates. This kind of maintenance feels unglamorous compared to winning a new client or launching a content campaign, but it's the difference between an agency that scales to thirty clients and one that collapses at fifteen. The debugging decision frameworks that apply to client-side SEO problems work equally well when turned inward on your own tooling.
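The four checks in that quarterly audit can be written down as a small script so they don't depend on anyone's memory. This is a sketch under stated assumptions: `ClientSetup` is a hypothetical record you would fill in from your own tools' exports, and the one-day grace period on crawl schedules is an arbitrary default.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ClientSetup:
    name: str
    crawl_interval: timedelta       # how often a crawl should run
    last_crawl_finished: datetime   # when the last crawl actually completed
    keywords_specified: int         # volume agreed at setup
    keywords_tracked: int           # volume the tool reports today
    report_source: str              # data source the report template pulls from
    expected_source: str            # data source it should pull from
    integrations_ok: bool           # result of a manual or scripted API check


def audit(client, now, grace=timedelta(days=1)):
    """Return a list of human-readable problems for one client."""
    problems = []
    if now - client.last_crawl_finished > client.crawl_interval + grace:
        problems.append("crawl schedule has lapsed")
    if client.keywords_tracked != client.keywords_specified:
        problems.append(
            f"tracking {client.keywords_tracked} keywords, "
            f"expected {client.keywords_specified}"
        )
    if client.report_source != client.expected_source:
        problems.append("report template pulls from the wrong data source")
    if not client.integrations_ok:
        problems.append("an API integration is failing")
    return problems


now = datetime(2026, 1, 5, 9, 0)
drifted = ClientSetup(
    name="acme",
    crawl_interval=timedelta(days=7),
    last_crawl_finished=now - timedelta(days=21),
    keywords_specified=500,
    keywords_tracked=320,
    report_source="template_copy",
    expected_source="acme_gsc",
    integrations_ok=True,
)
for problem in audit(drifted, now):
    print(f"{drifted.name}: {problem}")
```

Run against every client, a script like this turns the two-hour audit into a review of exceptions. It only helps if the expected values were recorded at setup.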

The testing dimension also intersects with how you evaluate your agency's credentials and promises. An agency that claims Google Partner status or similar certifications but can't demonstrate a stable, scalable technology infrastructure is selling a credential, not a capability. I look for evidence of operational maturity in every agency review I conduct, and the tool stack is one of the most revealing indicators. An agency that can show me documented crawl schedules, automated alert configurations, and integrated reporting pipelines is telling me something real about how they'll perform at scale. An agency that shows me a list of logos for tools they subscribe to is telling me nothing.

The Question Nobody Wants to Answer Honestly

The uncomfortable truth about SEO tool scalability is that it forces agencies to confront a question they'd rather avoid: are you a services business or a technology business? Agencies that treat their tool stack as an incidental expense, something to minimize rather than invest in, will reliably hit a growth ceiling. The tools won't crash spectacularly. They'll just slowly erode the quality of work in ways that are difficult to trace back to a single cause. A client churns, and the agency attributes it to "unrealistic expectations" or "market conditions." But when I pull the thread during a post-mortem review, the root cause is often that the campaign went effectively unmonitored for six weeks because the tool stack couldn't support the workload and nobody noticed the gap.

Agencies that treat their platforms as core infrastructure, budgeting 8-12% of revenue for tooling and allocating real hours for configuration, testing, and maintenance, tend to scale more predictably. They also tend to be more honest with prospective clients about what they can deliver, because their systems provide a clear picture of current capacity. When your tools show you that your team is already running crawls at 90% of your plan's capacity, you know that signing the next client requires a platform upgrade, and you can price accordingly.
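That capacity check is simple arithmetic, and it's worth running before every new contract. In the sketch below, the plan size, page counts, and revenue figure are hypothetical; only the 90% utilization and the 8-12% budget range come from the paragraph above.

```python
def utilization(used, capacity):
    """Fraction of the plan's capacity currently in use."""
    return used / capacity


def needs_upgrade(used, capacity, new_client_usage):
    """True if onboarding the new client would exceed the plan's capacity."""
    return used + new_client_usage > capacity


def tooling_budget_range(monthly_revenue, low=0.08, high=0.12):
    """Monthly tooling budget at the low and high ends of the range."""
    return monthly_revenue * low, monthly_revenue * high


PLAN_PAGES = 500_000   # hypothetical monthly crawl allowance
USED_PAGES = 450_000   # 90% of the plan already in use
NEW_CLIENT = 60_000    # hypothetical prospect's estimated crawl volume

print(f"utilization: {utilization(USED_PAGES, PLAN_PAGES):.0%}")
print("upgrade needed:", needs_upgrade(USED_PAGES, PLAN_PAGES, NEW_CLIENT))

low, high = tooling_budget_range(30_000)  # hypothetical monthly revenue
print(f"tooling budget: ${low:,.0f} to ${high:,.0f} per month")
```

If the upgraded tier costs more than the top of that budget range, that is a pricing conversation to have before the contract is signed, not after the crawls start failing.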

What I remain genuinely unsure about is whether the current generation of enterprise SEO platforms will keep pace with the complexity agencies now face. The shift toward AI-driven search, the fragmentation of ranking signals across traditional organic results and generative outputs, and the increasing demand for revenue-tied reporting all pressure these tools in directions they weren't originally designed to handle. Agencies are being asked to prove that SEO drives pipeline, not just traffic, and the platforms haven't fully caught up to that expectation. The agencies that survive the next consolidation cycle will be the ones that diagnosed their platform's limits before those limits diagnosed them, by way of a client walking out the door.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
