The SEO Debugging Decision Tree: When to DIY vs. When to Call an Agency

Every SEO debugging framework I've reviewed in the past decade puts the DIY-vs-agency threshold in the wrong place. The conventional wisdom says simple problems stay in-house and complex problems go to agencies. After evaluating over 200 agencies and auditing the technical SEO troubleshooting workflows of dozens of companies, I've found the opposite pattern playing out. Companies consistently outsource the cheap, straightforward fixes they could handle in an afternoon and then attempt to DIY the deep architectural problems that genuinely require outside expertise. The result is wasted retainer spend on one end and slow-burning traffic disasters on the other.

This article defends that claim with three specific bodies of evidence, and it ends with a revised framework for when to hire an SEO agency based on diagnostic capacity rather than perceived problem severity.

Companies Outsource the $200 Problems and DIY the $50,000 Ones

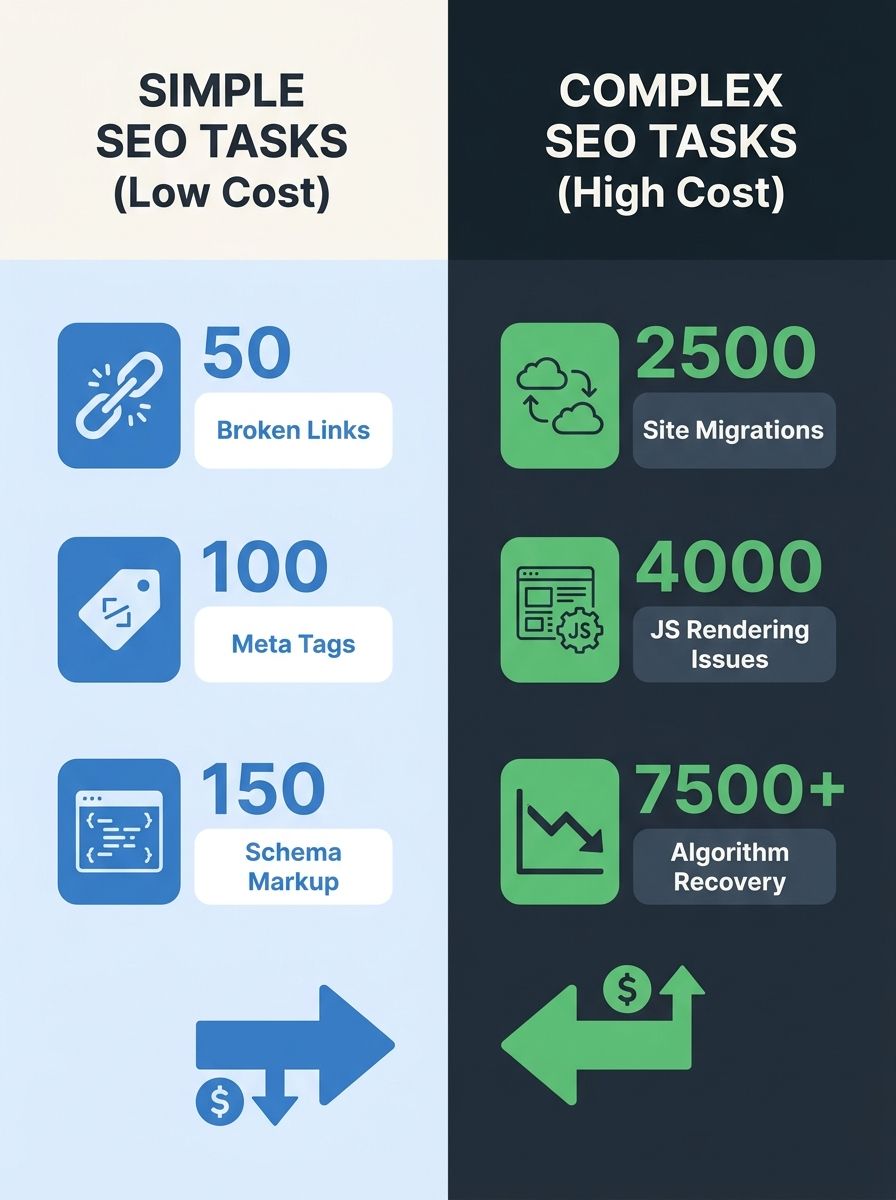

Here's a scenario I've seen at least thirty times: a mid-market company paying an agency $3,500/month to run monthly site audits, fix broken links, update title tags, and refresh meta descriptions. These are tasks that any marketing coordinator with access to Google Search Console and a crawling tool can handle. Google's own Search Essentials documentation walks through these fundamentals clearly enough that a non-technical person can follow along. The tooling for surface-level audits has gotten good enough that you don't need a specialist to spot a missing H1 tag or a batch of 404 errors.

Meanwhile, the same company's internal team is trying to figure out why 30% of their product pages dropped out of the index after a CMS migration. They're reading forums, guessing at robots.txt configurations, and waiting weeks to see if changes take effect. The migration was the $50,000 problem. The meta tag cleanup was the $200 problem. They got the allocation exactly wrong.
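
Much of that robots.txt guesswork can be replaced with a direct test. The sketch below uses Python's standard-library `urllib.robotparser` with a hypothetical post-migration robots.txt on `example.com`, and lists which URLs the rules block for Googlebot. Treat it as a first pass only. This parser applies rules in file order (first match wins) and does not implement Google's `*` and `$` path wildcards. On complex files, its verdict can therefore differ from Googlebot's.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt content disallows for user_agent."""
    parser = RobotFileParser()
    # parse() takes the file's lines directly, so no network fetch is needed.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical post-migration robots.txt that accidentally blocks the product catalog.
ROBOTS_TXT = """\
User-agent: *
Disallow: /products/
Disallow: /cart
"""

if __name__ == "__main__":
    sample = [
        "https://example.com/products/blue-widget",
        "https://example.com/about",
    ]
    for url in blocked_urls(ROBOTS_TXT, sample):
        print("blocked for Googlebot:", url)
```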

The pricing mismatch is real. Agencies typically charge between $1,500 and $10,000 per month for ongoing retainers, and most mid-sized businesses pay around $3,000-$5,000. When you're paying that much for someone to update your schema markup and check your page speed scores, you're overpaying dramatically. Tools like DebugBear for monitoring Core Web Vitals or SE Ranking for keyword tracking and site audits deliver the same surface-level audit functionality at a fraction of that cost.

The tasks that belong in-house include:

Fixing broken internal links and 404 errors

Updating meta titles and descriptions

Adding or correcting schema markup on existing page templates

Content refreshes on underperforming pages

Monitoring crawl errors in Google Search Console

Basic page speed optimization (image compression, lazy loading)

These are well-documented, repeatable, and low-risk. If you can follow a checklist, you can do them. And there's a solid argument, backed by recurring threads in SEO communities, that in-house execution of these tasks keeps your team closer to the site's actual performance data than any external partner could be. The people updating the content should be the people reading the search console reports. When you hand routine SEO tasks to automation tools, you free up the bandwidth that makes in-house ownership practical.
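
Checking for broken internal links shows how mechanical this work is. The sketch below is minimal and uses only Python's standard library. It collects the `<a href>` values from one page's HTML, keeps same-host links, and flags any that return a 4xx or 5xx status. The status checker is a parameter, so you can swap it for a crawler's output or a stub. The default checker sends HEAD requests, which some servers handle poorly. A crawling tool does the same thing across the whole site, with rate limiting and reporting built in.

```python
from html.parser import HTMLParser
from typing import Callable
from urllib.error import HTTPError
from urllib.parse import urljoin, urlparse
from urllib.request import Request, urlopen


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self.hrefs.extend(value for name, value in attrs if name == "href" and value)


def internal_links(page_url: str, html: str) -> set[str]:
    """Resolve relative hrefs and keep only same-host links, with #fragments removed."""
    collector = LinkCollector()
    collector.feed(html)
    host = urlparse(page_url).netloc
    links = set()
    for href in collector.hrefs:
        absolute = urljoin(page_url, href).split("#")[0]
        if urlparse(absolute).netloc == host:  # drops external, mailto:, tel: links
            links.add(absolute)
    return links


def http_status(url: str) -> int:
    """Default checker: send a HEAD request and return the final status code.

    urlopen follows redirects; network errors other than HTTP errors propagate.
    """
    try:
        with urlopen(Request(url, method="HEAD"), timeout=10) as response:
            return response.status
    except HTTPError as err:
        return err.code


def broken_links(page_url: str, html: str,
                 status_of: Callable[[str], int] = http_status) -> list[str]:
    """Return the page's internal links that answer with a 4xx or 5xx status."""
    return sorted(link for link in internal_links(page_url, html) if status_of(link) >= 400)
```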

So why do companies still outsource this work? Usually because someone bought a retainer without defining what the retainer should actually cover. The agency fills the hours with whatever work is easiest to show progress on. Monthly reports full of green checkmarks for fixing 404s feel productive. They aren't expensive problems to solve, but they're satisfying to report.

The Tool Gap Closed, But the Diagnostic Gap Didn't

Here's where the in-house vs agency SEO question gets genuinely complicated. The tools available in 2026 are dramatically better than what existed even three years ago. SE Ranking offers rank tracking, keyword research, website audits, competitor analysis, and backlink monitoring at price points well below Ahrefs or SEMrush. Google Search Console is free and increasingly powerful. DebugBear tracks field and lab performance data continuously at the page level.

The surface-level audit is a solved problem. Any reasonably technical person can run a crawl, get a list of issues, and work through them systematically. The common technical SEO issues cataloged by SEOClarity are well-understood and well-documented.

But identifying that a problem exists and correctly diagnosing why it exists are fundamentally different skills.

Consider a practical example. Your crawl report shows that Googlebot is hitting your product pages, but they're not appearing in the index. A surface-level reading says "indexation problem, submit an indexing request." A deeper reading might reveal that your React-based product pages render critical content via client-side JavaScript, which Googlebot's renderer handles inconsistently. The crawl looks fine; the rendered output is the problem. Diagnosing that requires understanding how Google's rendering pipeline actually works. As anyone who's dealt with technical SEO during a site launch knows, the gap between "crawled" and "properly rendered" catches even experienced developers off guard.
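
A quick way to test the client-side rendering hypothesis is to check whether key content exists in the raw HTML the server returns before any JavaScript runs. The sketch below is a rough heuristic, not a model of Google's rendering pipeline. It assumes you already have that raw HTML (from curl, for example). It ignores text inside `<script>` and `<style>` elements, so product data embedded in a JSON blob doesn't count, and it reports which key phrases are missing. A missing phrase tells you the content depends on JavaScript. It does not tell you how Googlebot's renderer handled it.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text that sits outside <script> and <style> elements."""

    SKIPPED = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self.skip_depth = 0  # > 0 while inside a skipped element
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            self.chunks.append(data)


def missing_from_raw_html(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that do not appear in the raw HTML's visible text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace and lowercase so formatting differences don't cause false alarms.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in key_phrases if " ".join(phrase.split()).lower() not in text]
```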

Search Engine Land's structured diagnostic framework for SEO debugging breaks the process into layers: crawl errors, rendering issues, indexing blockers, ranking drops, and SERP changes. The first two layers are where in-house teams can usually operate effectively, although, as the rendering example above shows, even the second layer has its traps. The deeper layers require pattern recognition that comes from seeing hundreds of sites with similar symptoms but different root causes.
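
The layered structure matters because a failure in a lower layer masks everything above it. There is little point analyzing a ranking drop on pages that aren't being indexed. The minimal sketch below walks the layers in order. It assumes you supply each layer's check as a function that returns True when the layer looks healthy; the checks shown are hypothetical placeholders.

```python
from typing import Callable, Optional

# The five layers of the framework, checked from the bottom of the stack upward.
LAYERS = ["crawl errors", "rendering issues", "indexing blockers", "ranking drops", "SERP changes"]
# Per the article, the first two layers are where in-house teams usually operate effectively.
IN_HOUSE_LAYERS = set(LAYERS[:2])


def first_failing_layer(checks: dict[str, Callable[[], bool]]) -> Optional[str]:
    """Run each layer's check in order and return the first layer that fails.

    A check returns True when the layer looks healthy. Layers without a check are skipped.
    """
    for layer in LAYERS:
        check = checks.get(layer)
        if check is not None and not check():
            return layer
    return None


if __name__ == "__main__":
    # Hypothetical results: crawling and rendering look fine, indexing does not.
    layer = first_failing_layer({
        "crawl errors": lambda: True,
        "rendering issues": lambda: True,
        "indexing blockers": lambda: False,
    })
    print(layer, "(in-house)" if layer in IN_HOUSE_LAYERS else "(consider outside help)")
```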

This is the diagnostic gap. Your tools can tell you what is happening. Understanding why it's happening, and which of seven possible causes is the actual culprit for your specific configuration, requires experience that most in-house teams don't accumulate because they only manage one site. An agency that handles 20+ client sites develops that pattern recognition naturally. They've seen the same symptom caused by five different underlying issues across different tech stacks.

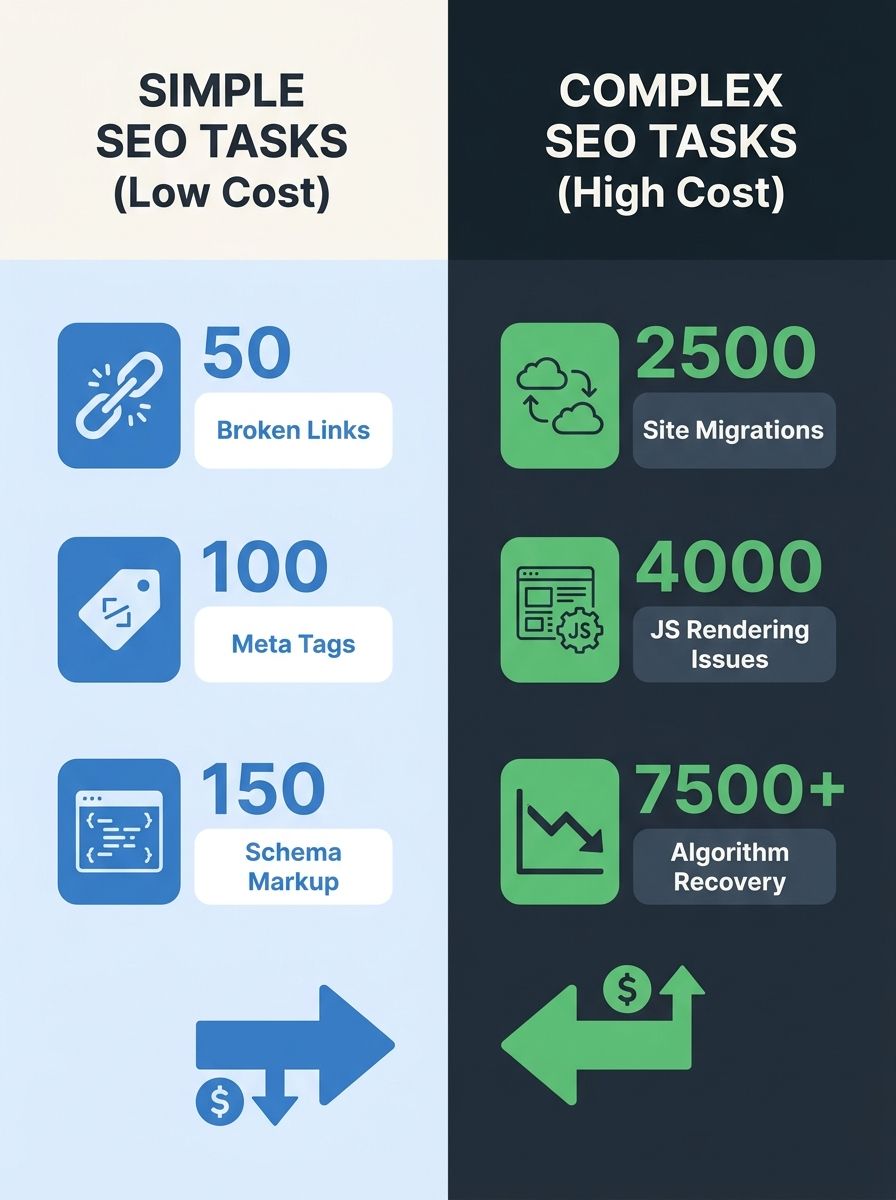

The question to ask yourself isn't "Is this problem simple or complex?" The question is: "Do I know, with confidence, what's causing this specific symptom?" If the answer is yes, you can probably fix it yourself regardless of complexity. If the answer is no, the cheapest path forward is usually a focused agency engagement.

Severity Isn't the Right Sorting Mechanism

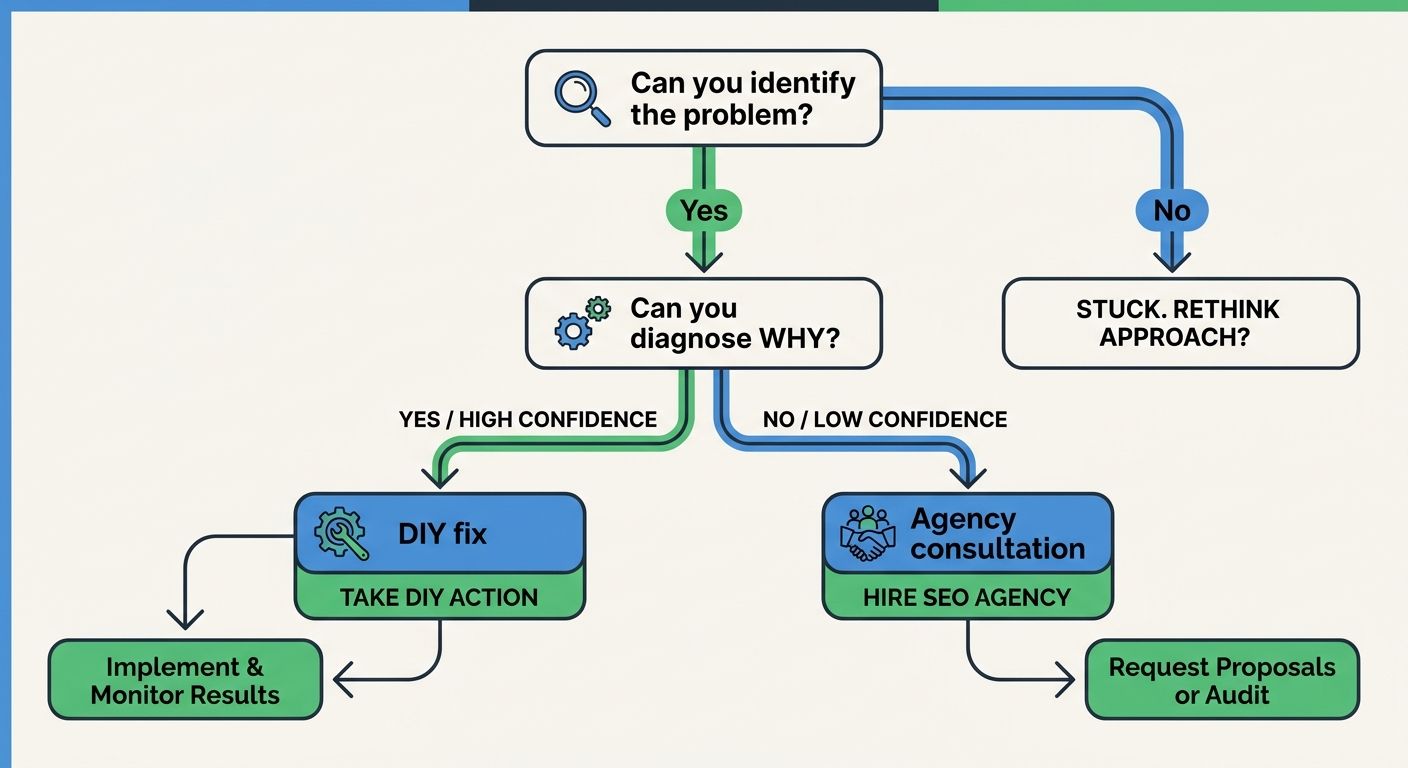

The standard SEO debugging framework tells you to escalate based on severity. Traffic dropped 40%? Call an agency. Traffic dropped 3%? Handle it internally. This sorting mechanism feels intuitive but produces bad outcomes.

A 40% traffic drop after a Google core update might have a clear, identifiable cause. If you published 200 low-quality AI-generated articles in the months before the update and your traffic to those pages specifically cratered, the diagnosis is obvious even if the scale is large. You don't need an agency to tell you what happened. You need a content strategy overhaul, and that's work your team can own.

Conversely, a slow 5% monthly decline over six months is far more insidious. Each month it's easy to dismiss as noise, yet six consecutive months at that rate compound to a loss of roughly a quarter of your traffic (0.95^6 ≈ 0.74). That trajectory might indicate a crawl budget problem, where new pages absorb crawl activity that older pages depend on. It might also point to a gradual erosion of backlink equity that has degraded your domain's authority on specific topic clusters. Diagnosing the cause requires comparing crawl logs against index coverage reports, analyzing link velocity by page group, and potentially correlating with algorithm timeline data from tools like DemandSphere's tracker. This is agency-grade diagnostic work, even though the month-over-month number looked small enough to ignore.
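
The crawl-log-versus-index comparison can start small. The minimal sketch below makes three assumptions. The access logs use the common combined log format. The indexed URLs come from a Search Console export as a plain list (the export handling is left out). Grouping by first path segment is an illustrative choice. For each page group, the sketch counts the URLs Googlebot requested, the URLs indexed, and those crawled but not indexed. Tracking that last number month over month is one way to make a slow decline visible. It doesn't cover the link-velocity or algorithm-timeline analysis.

```python
import re
from collections.abc import Iterable
from urllib.parse import urlparse

# Request path and user agent from a combined-log-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def page_group(path: str) -> str:
    """Group URL paths by first segment, e.g. /products/blue-widget -> /products/."""
    segment = path.strip("/").split("/")[0]
    return f"/{segment}/" if segment else "/"


def crawl_vs_index(log_lines: Iterable[str], indexed_urls: Iterable[str]) -> dict[str, dict[str, int]]:
    """Per page group, count crawled, indexed, and crawled-but-not-indexed URL paths."""
    crawled = set()
    for line in log_lines:
        match = LOG_LINE.search(line)
        # User-agent strings can be spoofed; confirm real Googlebot hits via reverse DNS in practice.
        if match and "Googlebot" in match["agent"]:
            crawled.add(urlparse(match["path"]).path)
    indexed = {urlparse(url).path for url in indexed_urls}

    report: dict[str, dict[str, int]] = {}
    for path in crawled | indexed:
        row = report.setdefault(page_group(path), {"crawled": 0, "indexed": 0, "crawled_not_indexed": 0})
        row["crawled"] += path in crawled
        row["indexed"] += path in indexed
        row["crawled_not_indexed"] += path in crawled and path not in indexed
    return dict(sorted(report.items()))
```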

WebFX's analysis of the in-house vs agency SEO question recommends in-house SEO when you have a large or complex website, publish content regularly, need SEO tied to other internal teams, and have the budget to hire and support the role properly. That's sound advice for ongoing strategy. But for debugging specifically, the frame should be different. The trigger for agency involvement should be diagnostic uncertainty, regardless of whether the problem looks big or small.

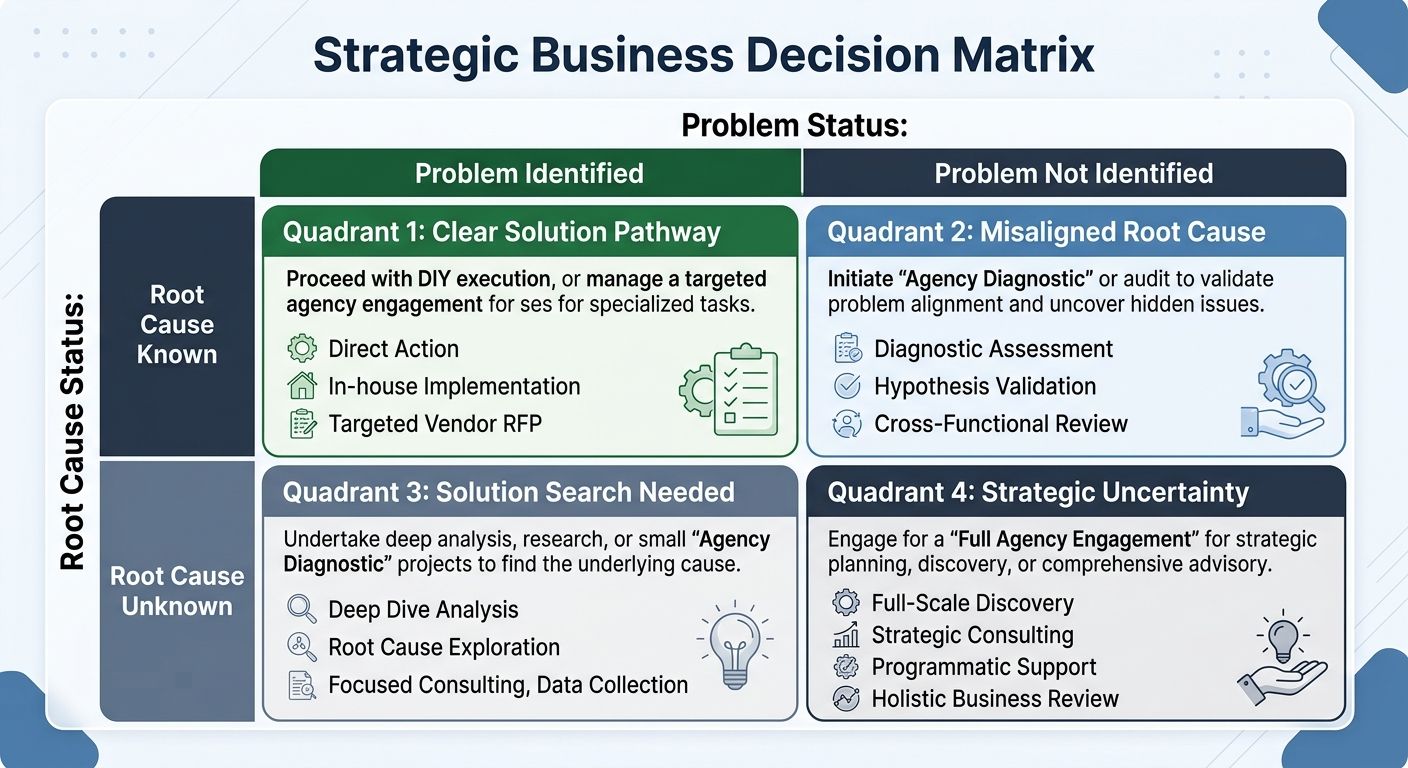

A revised decision framework looks like this:

You can identify the problem AND you know why it's happening: DIY. Fix it, monitor for 2-4 weeks, confirm resolution.

You can identify the problem BUT you're unsure of the root cause: Get a one-time agency diagnostic. Expect to pay $1,500-$5,000 for a focused audit rather than committing to a monthly retainer. Many agencies offer project-based engagements specifically for this.

You can't identify the problem AND traffic is declining: This is full-engagement territory. An agency at the growth or scale stage brings the full team of specialists needed to untangle systemic issues.

The problem involves a site migration, platform change, or major structural shift: Agency, period. The stakes are too high and the window for catching errors is too narrow. I've documented cases where botched migration redirects cost companies years of compounding growth, and those situations share a common thread: someone confident but under-experienced decided to handle it internally.
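
The four branches above can be written as a small routing function, which also makes the gap in them easier to see. This is one reading of the framework under two stated assumptions. First, a migration, platform change, or structural shift overrides every other input ("Agency, period"). Second, the case the framework doesn't address (a problem you can't identify while traffic holds steady) is returned explicitly as not covered rather than guessed at. The names are hypothetical.

```python
from enum import Enum


class Route(Enum):
    DIY = "DIY: fix it, monitor for 2-4 weeks, confirm resolution"
    ONE_TIME_DIAGNOSTIC = "One-time agency diagnostic (roughly $1,500-$5,000)"
    FULL_ENGAGEMENT = "Full agency engagement"
    AGENCY_PERIOD = "Agency, period"
    NOT_COVERED = "Not covered by the framework"


def route(problem_identified: bool, root_cause_known: bool,
          traffic_declining: bool, structural_change: bool) -> Route:
    # Migrations, platform changes, and major structural shifts override everything else.
    if structural_change:
        return Route.AGENCY_PERIOD
    # Diagnostic confidence, not severity, decides between DIY and a focused diagnostic.
    if problem_identified:
        return Route.DIY if root_cause_known else Route.ONE_TIME_DIAGNOSTIC
    if traffic_declining:
        return Route.FULL_ENGAGEMENT
    # Unidentified problem with stable traffic: the framework gives no branch for this.
    return Route.NOT_COVERED


if __name__ == "__main__":
    print(route(problem_identified=True, root_cause_known=False,
                traffic_declining=True, structural_change=False).value)
```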

The organizations that handle this best tend to have what I'd call a "diagnostic checkpoint" built into their workflow. When an SEO issue surfaces, the first question isn't "How bad is this?" but "Do we understand why this is happening?" If the team can articulate a clear causal chain from symptom to root cause, they execute internally. If they can't, they bring in outside expertise before the problem compounds. This is especially critical when organizational friction slows internal SEO execution, because delays in diagnosis compound into delays in recovery.

The Claim, Reconsidered

The conventional SEO debugging decision tree sorts by problem difficulty. Easy problems stay home; hard problems get outsourced. I've argued that this produces the worst possible allocation of budget and attention, and the evidence supports that argument across hundreds of agency engagements I've evaluated.

The revised framework sorts by diagnostic confidence. If you understand the causal mechanism behind the problem, you can fix it yourself with the excellent tooling available today, even when the problem is technically complex. If you don't understand the causal mechanism, you need outside pattern recognition, even when the symptom looks minor. The in-house vs agency SEO decision should hinge on that single variable.

The practical implication is that most companies should spend less on ongoing agency retainers for routine maintenance and more on focused, project-based agency engagements when they hit diagnostic walls. A $3,000 retainer month after month for link audits and meta tag updates is almost always worse value than a $5,000 one-time diagnostic engagement when something actually breaks. Budget for the uncertainty, not the routine. And if your team invests in building genuine technical SEO troubleshooting skills over time, the threshold for when outside help becomes necessary will keep shifting in your favor, which is exactly where you want it.
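
Here is the arithmetic behind that comparison. It uses the figures above and assumes a 12-month year of retainer payments, with no other cost differences between the two options:

```python
def annual_retainer_cost(monthly_fee: int, months: int = 12) -> int:
    """Total spend on a monthly retainer over the given number of months."""
    return monthly_fee * months


def diagnostics_covered(monthly_fee: int, diagnostic_fee: int, months: int = 12) -> int:
    """How many one-time diagnostics the same annual budget would pay for in full."""
    return annual_retainer_cost(monthly_fee, months) // diagnostic_fee


print(annual_retainer_cost(3_000))        # 36000
print(diagnostics_covered(3_000, 5_000))  # 7
```

At those prices, one year of the retainer would pay for seven focused diagnostics (36,000 / 5,000 = 7.2).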

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
