AI Crawlers and Structured Data: Why Your 2025 Technical SEO Audit Misses 2026 Visibility Issues

The technical SEO audit your agency delivered nine months ago checked for problems that no longer determine whether AI systems can find, read, or cite your pages. That's the claim, and the evidence that's surfaced in the past two weeks makes it difficult to argue otherwise.

Search Engine Journal published a framework on April 27, 2026 arguing that the technical SEO audit needs an entirely new layer covering crawler access for AI bots, JavaScript rendering behavior, structured data validation, and accessibility tree parsing. The piece identifies five distinct audit layers, three of which didn't exist in any standard agency deliverable twelve months ago. If your agency hasn't rebuilt its audit methodology since mid-2025, you're paying for a document that addresses the wrong surface area.

I've reviewed audit deliverables from over 40 agencies in the past six months. Exactly three included any mention of AI crawler governance. Two addressed structured data accessibility beyond basic rich snippet validation. The rest delivered the same crawl-error-and-sitemap report they've been selling since 2019, repackaged with a fresh cover page.

This matters because the way AI crawlers consume your site is fundamentally different from how Googlebot processes it. And the gap between what a 2025 audit covered and what a modern SEO audit framework requires for 2026 visibility is wide enough that agencies are going to need to rebuild their service offerings or watch clients disappear from AI-generated answers entirely.

AI Crawlers Throw Away Your Metadata Before Reading Anything

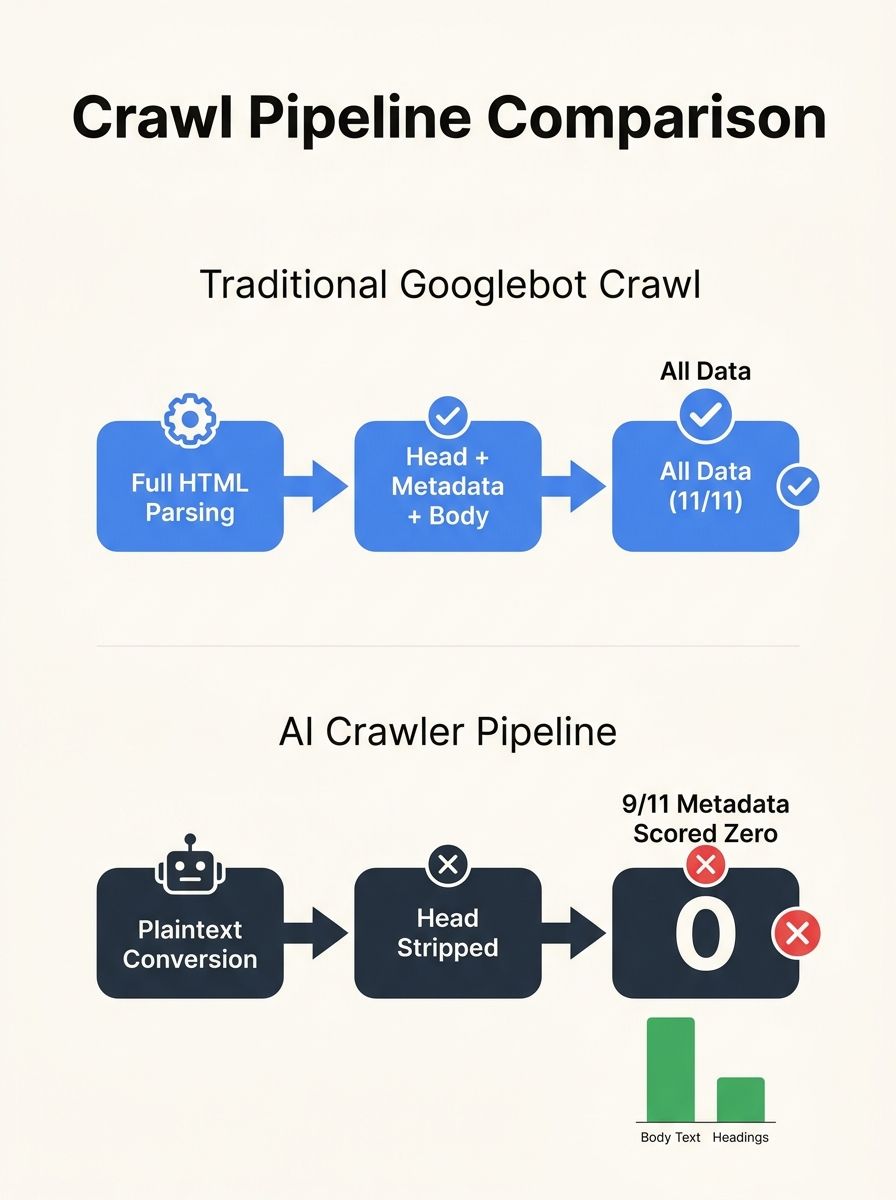

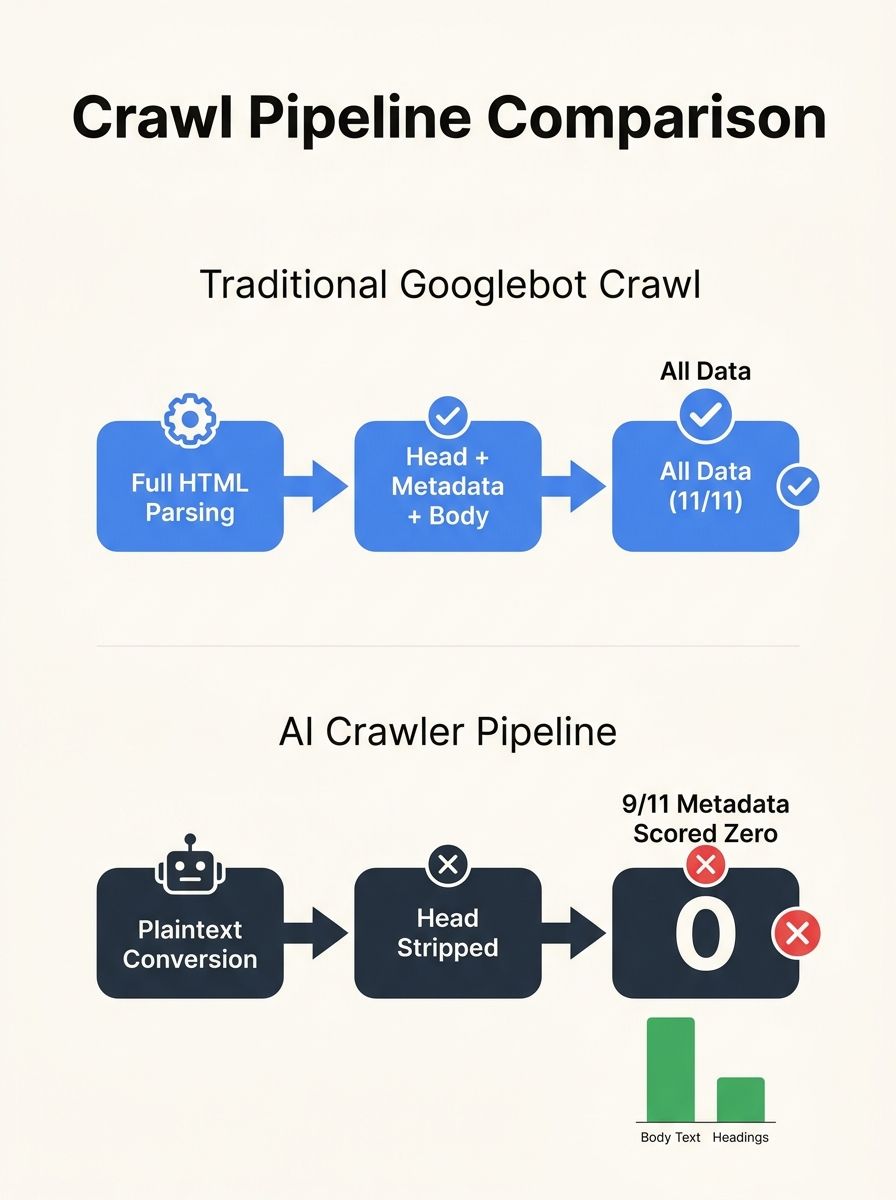

Here's the finding that should alarm every site owner who's invested in traditional on-page SEO: a test published on the r/TechSEO subreddit found that AI crawlers scored zero on 9 out of 11 metadata types. The reason is architectural. The crawlers in that test convert each page to plain text before the language model processes it, and that conversion strips the entire head section of your HTML. Your title tags, meta descriptions, canonical tags, and Open Graph data all get discarded before the AI starts reading.

Think about what that means for your audit. Your agency probably spent hours analyzing title tag length, meta description optimization, and duplicate canonical issues. Those findings remain relevant for traditional Google search. But for ChatGPT, Perplexity, Gemini's answer features, and any other AI-powered search interface, that metadata work is invisible.

The AI sees your body content. It sees your heading structure. It sees text within your semantic markup. Everything else vanishes during the scraping-to-plaintext conversion.

Tools like Firecrawl demonstrate the pipeline clearly: the scrape step turns websites into clean, structured, AI-usable data, and "clean" in this context means stripped of the HTML overhead that traditional SEO has spent two decades optimizing. If your 2026 technical SEO audit methodology doesn't account for how pages look after this conversion, you're auditing a version of your site that AI systems never encounter.
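To see roughly what survives that conversion, here is a minimal sketch using only Python's standard library. It is not how Firecrawl or any specific AI crawler works internally; the `BodyTextExtractor` class, the `to_plaintext` helper, and the sample page are all hypothetical, and the sketch simply assumes that everything inside the head, plus scripts and styles, gets dropped.

```python
from html.parser import HTMLParser


class BodyTextExtractor(HTMLParser):
    """Collects visible text; ignores <head>, <script>, <style>, <noscript>."""

    SKIP = {"head", "script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside any skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def to_plaintext(html: str) -> str:
    """Return the text a plaintext-only consumer would see (assumed behavior)."""
    parser = BodyTextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


# Hypothetical page: all the classic on-page SEO signals live in <head>.
SAMPLE_HTML = """<html><head>
<title>Blue Widget | Example Store</title>
<meta name="description" content="The best blue widget.">
<link rel="canonical" href="https://example.com/blue-widget">
</head><body>
<h1>Blue Widget</h1>
<p>Ships in two days.</p>
</body></html>"""

plain = to_plaintext(SAMPLE_HTML)
print(plain)
```

Running this against your own templates is a quick way to check whether the claims you care about (product name, price, shipping terms) appear in the body text at all, or only in tags that this kind of pipeline throws away.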

I've been telling agency clients for months: ask your SEO provider what, specifically, their audit checks about AI crawler behavior. If the answer involves meta tags and traditional on-page signals alone, that agency hasn't updated its playbook. The agencies worth their retainer fees are running parallel audits, one for Google's traditional rendering pipeline and one for the plaintext version that AI crawlers actually consume.

The Bot Governance Gap No 2025 Audit Addressed

The robots.txt file on your site probably mentions Googlebot. It might mention Bingbot. If your agency was thorough, it addresses a few other known crawlers. But does it distinguish between GPTBot and OAI-SearchBot? Does it handle Google-Extended separately from standard Google crawlers?

This distinction matters enormously, and almost no audit delivered before late 2025 addressed it. GPTBot is OpenAI's training scraper, collecting data to train future language models. OAI-SearchBot is OpenAI's retrieval agent, the bot that fetches real-time information for ChatGPT's search functionality. Blocking GPTBot prevents your content from training future AI models. Blocking OAI-SearchBot makes your site invisible in ChatGPT's search results.

Major publishers like The New York Times and The Guardian have blocked GPTBot while keeping OAI-SearchBot allowed. That's a deliberate, informed decision. But most businesses don't have that level of bot governance because their agencies never raised the issue.

I reviewed one mid-size e-commerce client's robots.txt that their SEO agency had "optimized" in early 2025. It blocked GPTBot entirely with a single disallow directive. No mention of OAI-SearchBot. No mention of Google-Extended, the robots.txt token that controls whether Google may use your content for Gemini. The client had been invisible in ChatGPT search results for months without knowing it, because their audit treated robots.txt as a binary crawl-access check rather than a set of per-bot governance decisions.
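A per-bot policy can be checked mechanically. The sketch below uses Python's standard `urllib.robotparser` to report which user agents a given robots.txt allows. The policy shown (block training, allow retrieval) is only an illustration of one possible decision, not a recommendation, and the `access_report` helper and the example.com URL are hypothetical. Note that Google-Extended is a robots.txt control token rather than a separate crawler, so it only ever shows up in rules like these, not in your server logs.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy: opt out of model training, stay visible to retrieval.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def access_report(robots_txt, agents, url="https://example.com/products/blue-widget"):
    """Map each user agent to True/False for whether it may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


report = access_report(
    ROBOTS_TXT, ["GPTBot", "OAI-SearchBot", "Google-Extended", "Googlebot"]
)
for agent, allowed in report.items():
    print(f"{agent:16} {'allowed' if allowed else 'blocked'}")
```

An audit can run the same report against the live robots.txt and flag any AI user agent whose status was never decided explicitly and is only inherited from the wildcard rule.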

The Search Engine Journal framework puts crawler access as the first of five audit layers for AI readiness. The remaining layers cover JavaScript rendering, structured data, page readability for AI agents, and content discovery signals. A 2025 audit might have addressed the first two in passing. The last three represent entirely new territory that agencies need to build competency around, which brings us back to the agency selection question: does your provider even have staff who understand how retrieval-augmented generation works?

And this connects to a broader pattern. When you examine how Google's search platform is shifting toward task execution, the implication is clear: search engines themselves are becoming AI agents that need machine-readable inputs, not human-readable marketing copy. Will Scott, CEO of Search Influence, published a thought leadership article this week arguing that non-human web traffic now represents a strategic priority, not a footnote in analytics reporting. He's right. And the agencies that treat bot governance as a footnote are the ones delivering audits that miss the problem entirely.

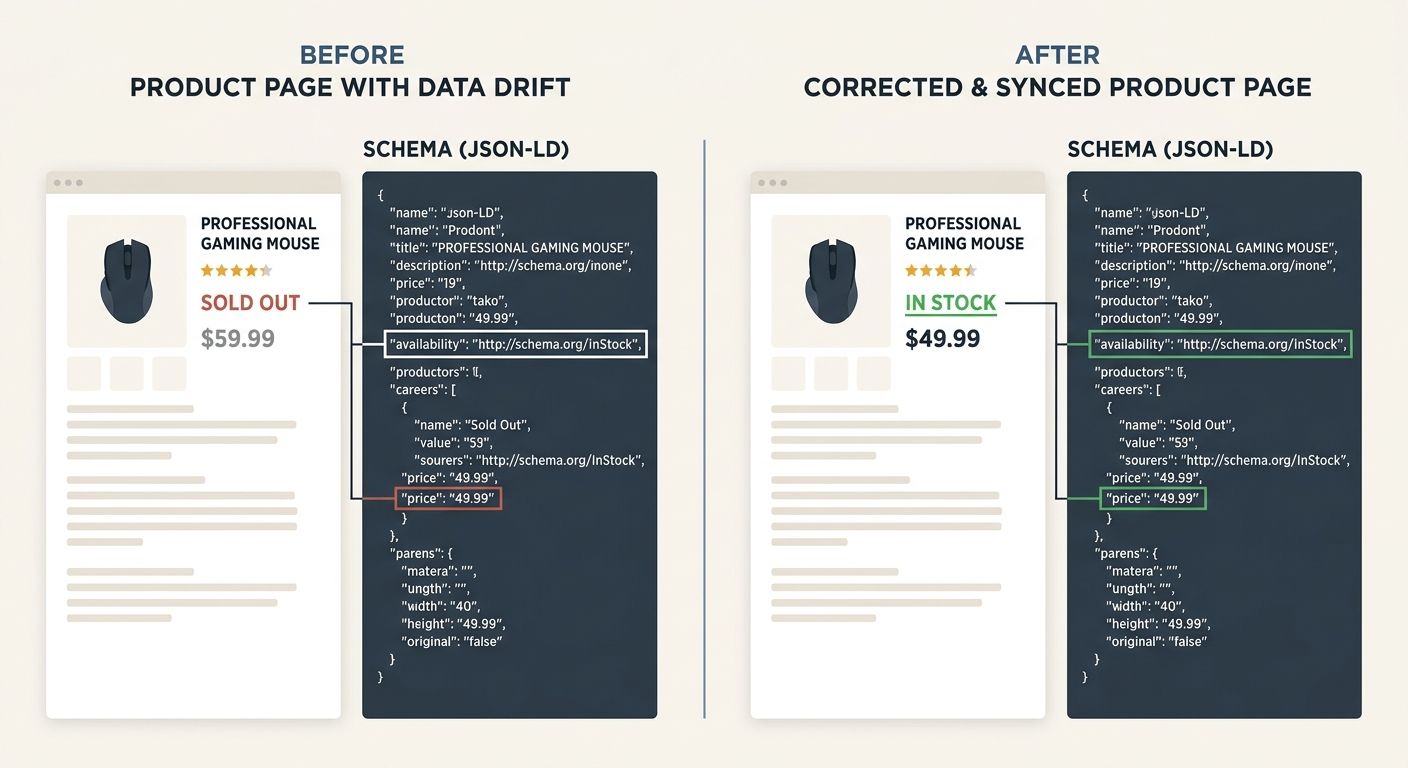

Structured Data Went from Optional Enhancement to Core Visibility Layer

Schema markup used to be the cherry on top of a solid SEO strategy. You'd add FAQ schema to get expanded snippets, product schema for star ratings in search results, maybe some organization schema for your knowledge panel. A nice addition that some agencies upsold and others ignored entirely.

That era is over. As Search Engine Land's analysis of how schema markup fits into AI search puts it, schema markup is one of the few tools SEOs have to make entities and relationships explicit and understandable for an AI system. When an AI crawler strips your page to plaintext, the structured data in your JSON-LD (which Google has long recommended as the preferred format) becomes the primary machine-readable signal about what your content actually represents.
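As a concrete picture of what "explicit entities and relationships" means, here is a small sketch that builds Product markup in JSON-LD from plain values. The `product_jsonld` function and the sample product are hypothetical; the property names (`brand`, `offers`, `price`, `priceCurrency`, `availability`) come from the schema.org vocabulary.

```python
import json


def product_jsonld(name, brand, price, currency, in_stock):
    """Build a schema.org Product description as a JSON-LD string."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        # The nested objects are what make relationships explicit:
        # this product HAS a brand and HAS an offer at a given price.
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }
    return json.dumps(data, indent=2)


snippet = product_jsonld("Blue Widget", "Example Co", 49.99, "USD", in_stock=True)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

Generating the markup from the same values that render the page, rather than hand-editing it, is one way to keep the two from diverging later.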

Review schema ensures your customer feedback is accessible to AI systems generating recommendations and comparisons, according to analysis from EEDigital. Event markup helps AI agents surface your events in conversational responses. Article schema determines whether your content gets correctly categorized in AI-driven features. And Google has been tightening requirements: FAQ schema now only displays for authoritative government and health websites following a September 2025 update, and the returnPolicyCountry property became required for MerchantReturnPolicy markup as of April 2026.

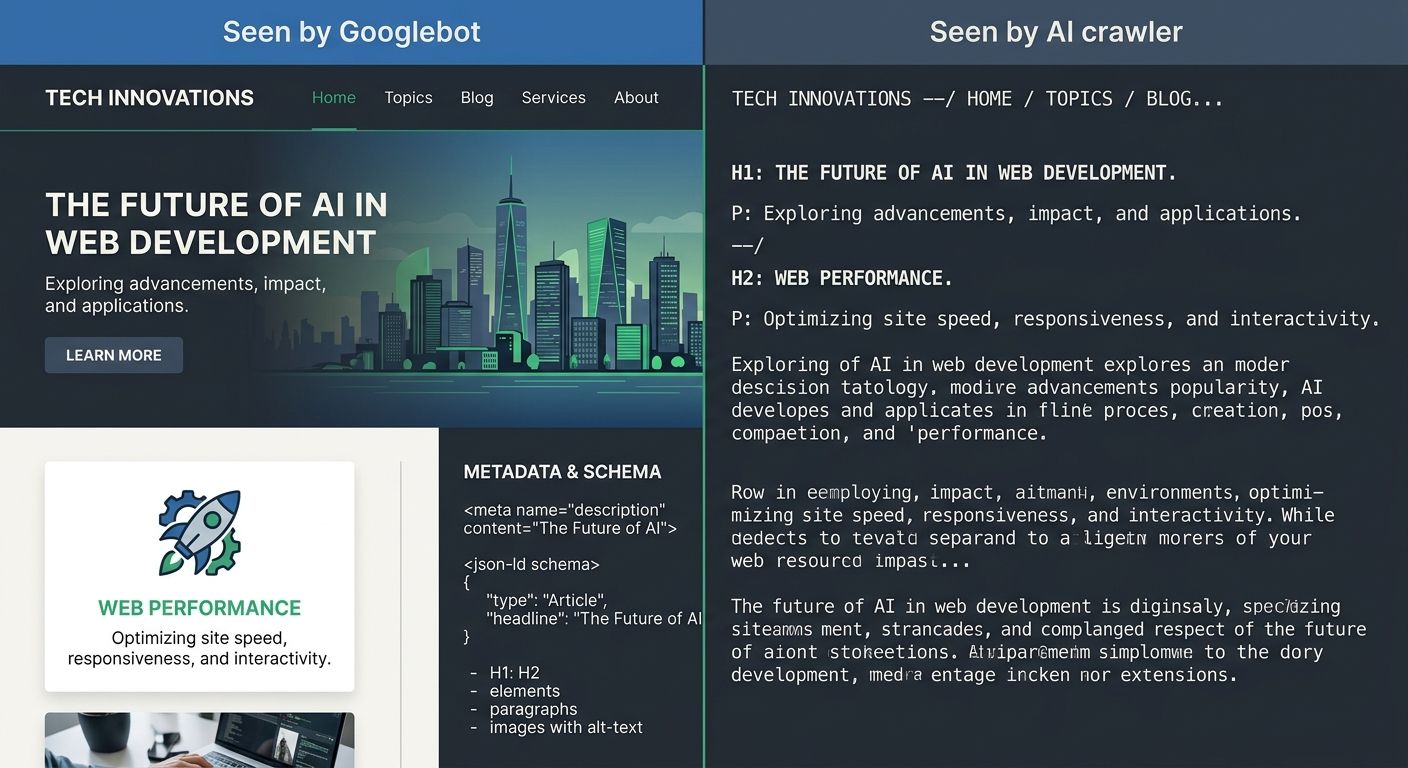

But structured data accessibility requires more than pasting schema onto your pages. Google now enforces what the industry is calling "schema drift" penalties, where your JSON-LD says one thing (product in stock, price at $49.99) but your visible page content says another (sold out, price at $59.99). This inconsistency triggers trust penalties that can suppress your content in both traditional and AI-generated search results.

The practical implication for agency evaluation is significant. When I audit agency work, I now check whether their structured data implementation includes automated validation, a system that flags when schema data and page content fall out of sync. Most agencies don't have this. They implement schema once during a site build or redesign, then never touch it again. Twelve months later, the schema is stale, the page content has changed through CMS updates and inventory shifts, and the structured data is actively working against the client's visibility.
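The kind of automated validation described above can start very small. The following sketch compares the price and availability declared in a page's JSON-LD against the visible text. It is a simplified illustration under several assumptions: the `PageParser` class, the `find_drift` function, and the sample pages are hypothetical, only single-object Product markup with one offer is handled, and the text matching is deliberately naive.

```python
import json
import re
from html.parser import HTMLParser


class PageParser(HTMLParser):
    """Separates JSON-LD script blocks from visible body text."""

    SKIP = {"head", "script", "style"}

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self._skip_depth = 0
        self.jsonld_blocks = []
        self.visible_text = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []
        elif tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.jsonld_blocks.append("".join(self._buffer))
        elif tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)
        elif self._skip_depth == 0 and data.strip():
            self.visible_text.append(data.strip())


def find_drift(html):
    """Return a list of mismatches between JSON-LD offers and visible text."""
    parser = PageParser()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.visible_text)
    issues = []
    for raw in parser.jsonld_blocks:
        data = json.loads(raw)
        if not isinstance(data, dict):
            continue  # arrays and @graph layouts are out of scope for this sketch
        offer = data.get("offers", {})
        price = str(offer.get("price", ""))
        if price and price not in text:
            issues.append(f"schema price {price} not found in visible text")
        in_stock = offer.get("availability", "").endswith("InStock")
        if in_stock and re.search(r"sold out|out of stock", text, re.IGNORECASE):
            issues.append("schema says InStock but page text says sold out")
    return issues


# Hypothetical page mirroring the mismatch described above.
DRIFTED_PAGE = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Blue Widget",
 "offers": {"@type": "Offer", "price": "49.99", "priceCurrency": "USD",
            "availability": "https://schema.org/InStock"}}
</script>
</head><body>
<h1>Blue Widget</h1>
<p>$59.99</p>
<p>Sold out</p>
</body></html>"""

CLEAN_PAGE = DRIFTED_PAGE.replace("$59.99", "$49.99").replace("Sold out", "In stock")

drift_issues = find_drift(DRIFTED_PAGE)
clean_issues = find_drift(CLEAN_PAGE)
for issue in drift_issues:
    print(issue)
```

Scheduled against a sample of product URLs, a check like this turns schema maintenance from a one-time implementation into something that gets flagged whenever a CMS or inventory change pulls the page and its markup apart.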

If your agency handles technical handoffs to developers without including structured data validation in the ongoing monitoring plan, that's a gap you need to address this month. And if they're still treating schema as an optional line item on their service agreement rather than a core deliverable, you should be questioning whether they understand the current landscape at all. The HOTH documented a case where a site's average monthly visitors dropped from 655 to 32 due to crawlability barriers, index bloat, and broken sitemaps. That was a traditional technical SEO failure. Now imagine the same severity of traffic collapse, but caused by invisible AI crawler issues that your current audit methodology doesn't even measure.

Where This Leaves the Agency-Client Relationship

The claim at the top of this piece holds up under the accumulated evidence. AI crawlers discard your metadata. Bot governance decisions that didn't exist eighteen months ago now determine whether you appear in ChatGPT, Perplexity, and Gemini-powered search. Structured data has shifted from a search enhancement to the primary way AI systems understand what your pages contain. A 2025 audit was built for a world where these factors didn't matter. They matter now.

The agencies that will thrive through this transition are the ones rebuilding their audit frameworks right now. I've started asking every agency I evaluate a simple set of questions:

Do you audit for AI crawler behavior separately from Googlebot?

Do you test how client pages render after plaintext conversion?

Do you monitor structured data consistency on an ongoing basis, or do you implement it once and move on?

Do you manage robots.txt directives for GPTBot, OAI-SearchBot, and Google-Extended individually?

So far, the hit rate on those questions is depressing. Roughly one in eight agencies I've spoken with this quarter can answer yes to all four. The rest are selling a 2023 service at 2026 prices.

The pattern mirrors what I've seen in enterprise SEO implementations that fail at the organizational level: the strategy document looks impressive, but the actual execution misses the factors that drive outcomes. In this case, the factors are new. The AI search ecosystem emerged faster than most agencies could retool, and the gap between their deliverables and reality grows wider every quarter.

Your agency might be excellent at traditional technical SEO. They might have helped you recover from a migration, fix Core Web Vitals issues, or scale content production without degrading site performance. That work still matters for Google's traditional pipeline. But the modern SEO audit framework now requires an additional layer that most providers haven't built, and the cost of that gap compounds every month as AI-powered search captures more user attention. The agencies that adapt will earn their retainers. The ones that don't will keep delivering clean PDFs that measure an increasingly irrelevant version of your site's visibility.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
