Technical SEO for Content Producers: Scaling Output Without Breaking Your Site's Core Web Vitals

Optimizely injected artificial latency into The Telegraph's website and measured the destruction. At four seconds of added delay, page views dropped 11%. At twenty seconds, the number hit 44%. The experiment, documented by performance engineer Simon Hearne, confirmed what developers had suspected but couldn't always prove: speed degradation doesn't produce a gentle downward slope. It produces a cliff.

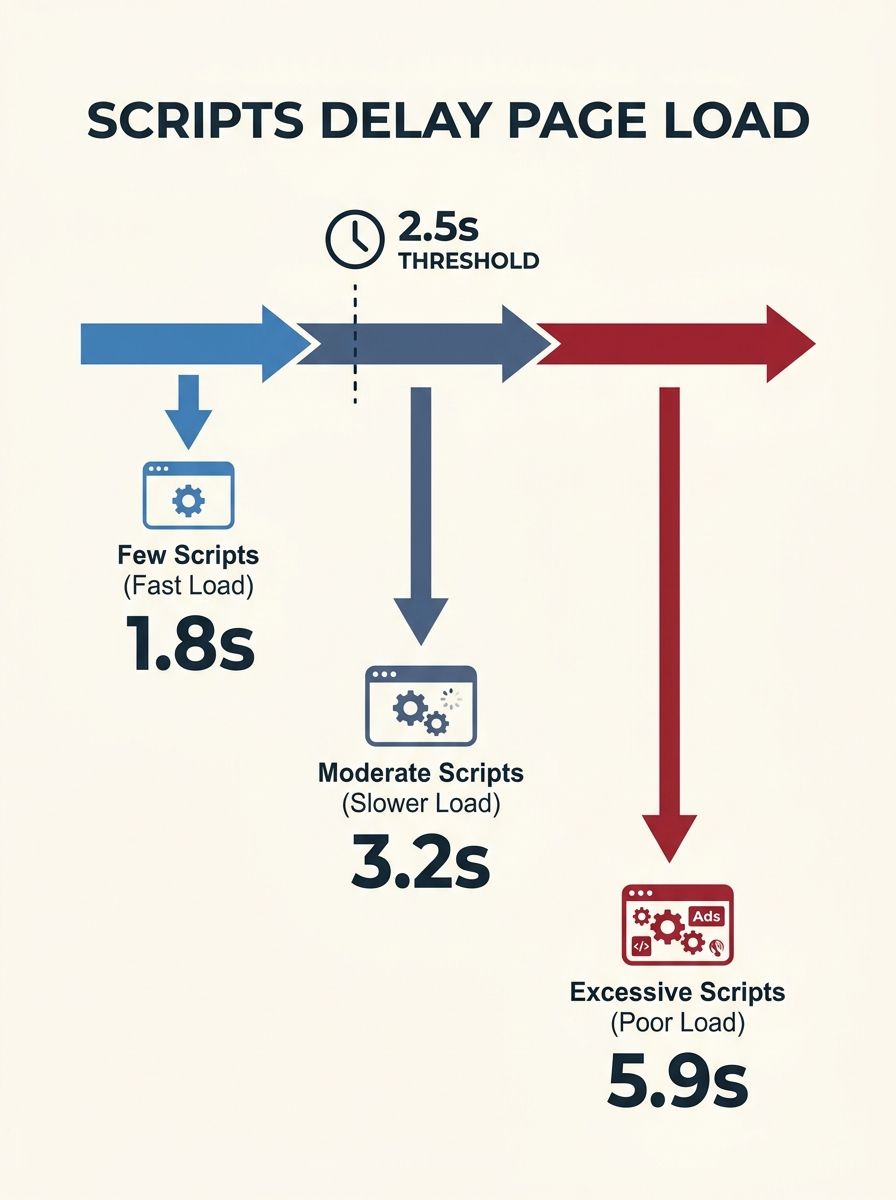

I keep returning to those Telegraph numbers every time an agency pitches "content velocity" as a growth strategy without addressing the infrastructure underneath. Over twelve years and 200+ agency evaluations, I've watched the same pattern repeat: a publisher or brand hires an agency to scale content production, traffic grows for a few months, and then something breaks. Pages slow down. Cumulative Layout Shift spikes. The Interaction to Next Paint metric creeps past Google's threshold. And the agency points at the CMS while the client points at the agency.

This article dissects how that breakdown actually happens, using the Telegraph's documented case as the anchor and drawing on what I've seen go wrong when agencies treat content scaling and technical SEO as separate workstreams.

The Conflict Between Ad Revenue and Load Time

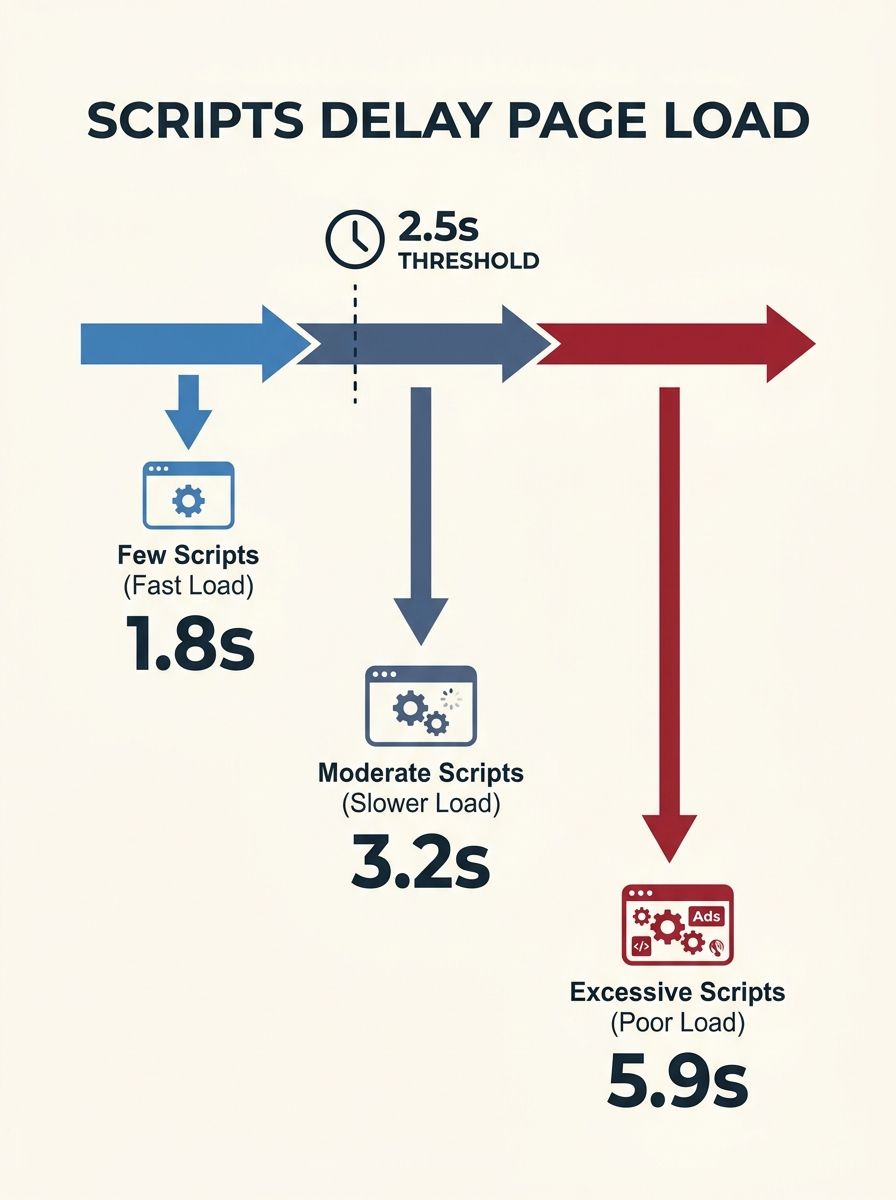

The Telegraph case is instructive because it exposed a tension that exists on virtually every ad-supported publisher site: AdOps teams stack third-party tags because each one promises incremental revenue, while the development team fights to keep editorial content loading fast. Simon Hearne's analysis documented this conflict explicitly. Tens or hundreds of third-party scripts compete for the main thread, and each one risks pushing your Largest Contentful Paint closer to, or past, Google's 2.5-second threshold.

The same dynamic plays out on non-publisher sites when agencies recommend scaling content output. Each new piece of content often brings additional embedded elements: video players, social widgets, newsletter signup overlays, retargeting pixels that fire on specific post categories. When you publish 10 articles a month, the cumulative weight is manageable. When an agency ramps you to 40 or 60 pieces per month, those scripts multiply and interact in ways nobody tested.

The impact of content production on Core Web Vitals becomes visible at scale because the performance budget that accommodated a smaller content library gets overwhelmed. An agency that doesn't audit your existing third-party script load before recommending a content scaling plan is skipping the most important diagnostic step.

Google's own documentation establishes the thresholds clearly: LCP under 2.5 seconds, INP under 200 milliseconds, and CLS under 0.1. Those numbers look generous on a staging site with clean content. They look very different on a production site running 47 third-party scripts with a content library that doubled over six months.
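To make those thresholds concrete, here is a minimal sketch of a classifier for a single measurement. The "good" boundaries are the ones quoted above; the "poor" cutoffs (4.0 seconds, 500 milliseconds, 0.25) come from Google's published guidance rather than from this article, and the function names are purely illustrative.

```python
# (good_max, poor_min) per metric. LCP and INP are in milliseconds; CLS is unitless.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def rate(metric: str, value: float) -> str:
    """Bucket one measurement as good / needs improvement / poor."""
    good_max, poor_min = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= poor_min:
        return "needs improvement"
    return "poor"


def rate_page(sample: dict[str, float]) -> dict[str, str]:
    """Rate every metric in a sample such as {"LCP": 4800, "INP": 180, "CLS": 0.12}."""
    return {metric: rate(metric, value) for metric, value in sample.items()}
```

A page with a 4.8-second LCP, 180 ms INP, and 0.12 CLS would come back as poor, good, and needs improvement respectively, which is the kind of mixed result a script-heavy production page tends to produce.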

The Template Degradation Nobody Catches Early

Here's the phase of the breakdown that I see agencies miss most consistently. When content production scales, it usually means more content types, more embedded media formats, and more template variations. A site that started with a standard blog post template now needs templates for comparison posts, data-driven articles with charts, video-first content, and interactive tools.

Each new template introduces layout complexity. And layout complexity is where Cumulative Layout Shift lives. CLS measures visual instability during page load. If an ad unit loads and pushes your headline down by 50 pixels, that's a layout shift. If a lazy-loaded image above the fold doesn't have reserved dimensions, the text below it jumps when the image appears. When you're running one or two templates, you can catch and fix these issues manually. When you're running eight templates across hundreds of pages, the shifts accumulate faster than anyone reviews them.

I evaluated an agency earlier this year that had scaled a B2B SaaS client from 8 to 35 blog posts per month. The organic traffic numbers looked stellar through month four. By month six, their CLS scores had degraded to "Poor" on 40% of content pages because nobody had set explicit height and width attributes on the hero images of three newer template types. The SEO handoff between the agency's content team and the client's development team had no checkpoint for performance validation.
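A missing-dimensions problem like that one is cheap to detect before publishing. The sketch below, using only Python's standard library, lists images in a rendered template that lack explicit width or height attributes. It is an illustration of the check, not the tooling that agency or client used, and it ignores dimensions reserved through CSS (for example `aspect-ratio`), which a fuller audit would also need to consider.

```python
from html.parser import HTMLParser


class ImageDimensionAudit(HTMLParser):
    """Collects the src of every <img> missing a width or height attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        if not attr.get("width") or not attr.get("height"):
            self.missing.append(attr.get("src") or "(no src)")


def images_missing_dimensions(html: str) -> list[str]:
    audit = ImageDimensionAudit()
    audit.feed(html)
    audit.close()
    return audit.missing
```

Run against the rendered HTML of each template on staging, an empty list means every image reserves its space before it loads.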

The technical SEO bottlenecks in content workflows typically hide in this gap between "content is published" and "content performs well in production." Site speed optimization for high-volume content requires template-level performance budgets, not page-level fixes applied retroactively.
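One way to express a template-level budget is as data that a staging check can enforce. The following is a rough sketch: the template names and ceilings are hypothetical, chosen here to sit below Google's thresholds so that ads and embeds have headroom, and a real program would set them from its own measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Budget:
    lcp_ms: float
    inp_ms: float
    cls: float


# Hypothetical templates and ceilings, deliberately tighter than
# Google's thresholds (2500 ms, 200 ms, 0.1).
BUDGETS = {
    "standard-post": Budget(lcp_ms=2000, inp_ms=150, cls=0.05),
    "comparison-post": Budget(lcp_ms=2200, inp_ms=150, cls=0.08),
    "video-first": Budget(lcp_ms=2400, inp_ms=180, cls=0.08),
}


def budget_violations(template: str, lcp_ms: float, inp_ms: float, cls: float) -> list[str]:
    """Return one message per metric that exceeds the template's budget."""
    budget = BUDGETS.get(template)
    if budget is None:
        # A template without a budget is treated as a failure, not a pass.
        return [f"{template}: no budget defined"]
    measured = {"lcp_ms": lcp_ms, "inp_ms": inp_ms, "cls": cls}
    return [
        f"{template}: {name}={value} exceeds budget {getattr(budget, name)}"
        for name, value in measured.items()
        if value > getattr(budget, name)
    ]
```

Treating an unknown template as a violation is a deliberate choice in this sketch: it forces someone to define a budget before a new template type ships.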

The Indexing Bottleneck That Compounds Everything

Scaling content has a second technical SEO failure mode that's less visible but equally damaging: indexing efficiency. When you publish at volume, you need search engines to discover and index new pages quickly. Higher content velocity tends to bring crawlers back more often, which sounds like a pure win. But there's a problem buried in the mechanics.

Google sets a site's crawl capacity partly from how quickly and reliably the server responds. Core Web Vitals are not a documented crawl input, but the template bloat and backend strain that degrade them often slow server responses as well. A site with degrading performance may find Googlebot adjusting its crawl rate downward precisely when you need it crawling more frequently because you're publishing more content. The agency celebrates hitting its 40-posts-per-month target. The client wonders why half those posts aren't indexed after three weeks.

CMS platforms have started addressing the publishing-side bottleneck. Webflow, for example, documented a 4.5x improvement in publishing speed, up to 10x for content-heavy sites. And tools supporting IndexNow can ping search engines immediately when new content goes live, reducing indexing delays. But these tools solve the notification problem, not the performance problem. If Googlebot arrives promptly because you pinged it, but the page takes 4.8 seconds to achieve LCP, you've won the battle for attention and lost the battle for rankings.
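For reference, an IndexNow submission is a single JSON POST. The sketch below builds that request with the standard library without sending it. The endpoint and field names follow the public IndexNow protocol; the host, key, and the assumption that the key file lives at the site root are placeholders for illustration.

```python
import json
from urllib.parse import urlparse
from urllib.request import Request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> Request:
    """Build (but do not send) an IndexNow submission for newly published URLs."""
    foreign = [u for u in urls if urlparse(u).hostname != host]
    if foreign:
        raise ValueError(f"URLs outside {host}: {foreign}")
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file is hosted at the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would perform the submission. Nothing in the request says anything about how fast the page loads, which is exactly the limitation described above.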

I've written before about how routine SEO tasks should be automated to free up time for higher-order work. Performance monitoring at the template level is exactly the kind of task that belongs in an automated workflow. The agencies doing content scaling well have automated CWV checks integrated into their publishing pipeline. The ones doing it poorly rely on quarterly site audits that discover problems months after they started compounding.
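As an illustration of what an automated template-level check can look like, the sketch below groups field LCP samples by template and flags any template whose 75th percentile exceeds the limit. Google assesses Core Web Vitals at the 75th percentile of page loads; the input format and function names here are assumptions, and a real pipeline would pull the samples from whatever RUM or CrUX source it already uses.

```python
import math
from collections import defaultdict


def p75(values: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def failing_templates(
    samples: list[tuple[str, float]], lcp_limit_ms: float = 2500
) -> dict[str, float]:
    """Map each template whose p75 LCP exceeds the limit to that p75 value."""
    by_template: dict[str, list[float]] = defaultdict(list)
    for template, lcp_ms in samples:
        by_template[template].append(lcp_ms)
    return {
        template: p75(values)
        for template, values in by_template.items()
        if p75(values) > lcp_limit_ms
    }
```

Scheduled daily, a check like this surfaces a regressing template within days of the change that caused it instead of at the next quarterly audit.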

The Agency Evaluation Gap

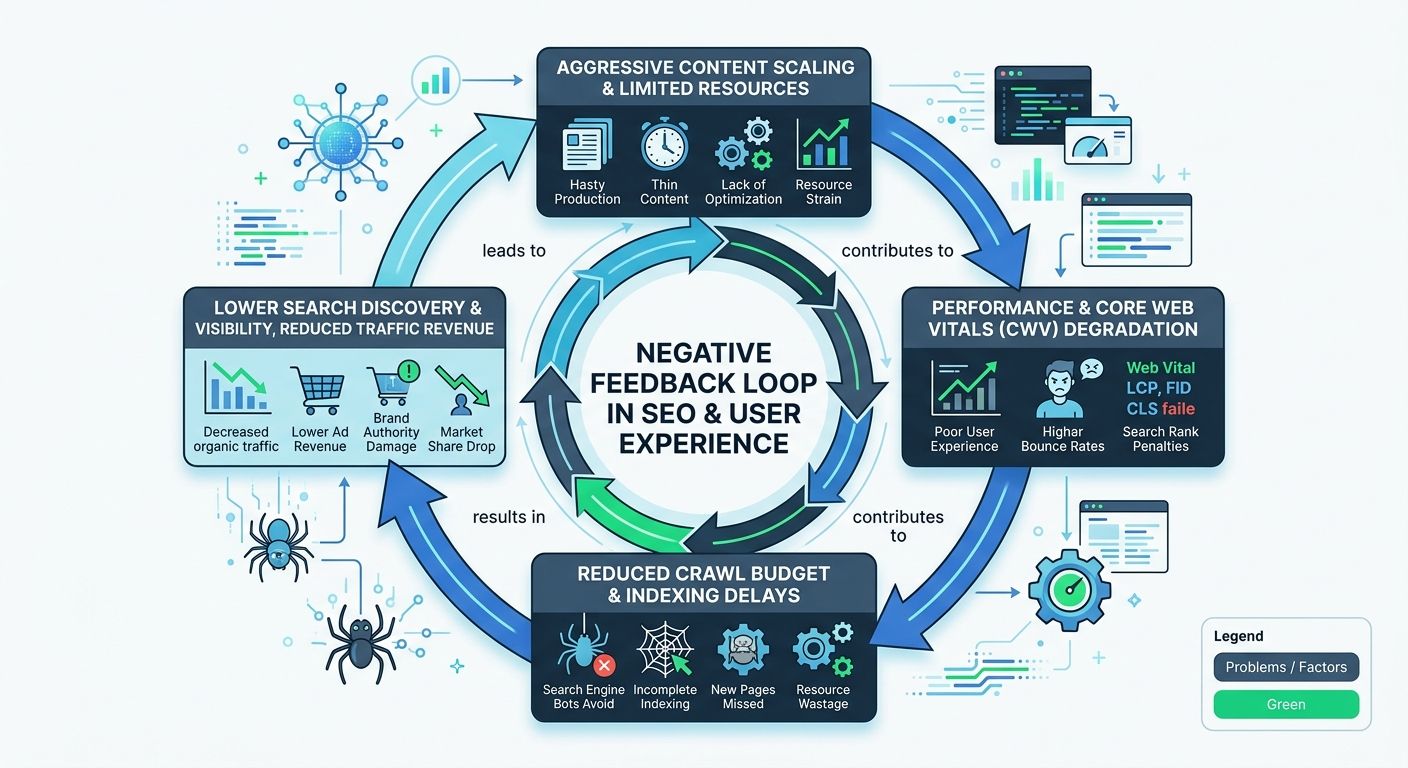

When I assess agencies for clients looking to scale content production, I ask a specific question: "Walk me through what happens between the moment a writer finishes a draft and the moment it's live on the production site." The answers reveal everything.

Agencies that treat content and technical SEO as unified disciplines describe a pipeline where drafts go through editorial review, then SEO optimization (meta tags, internal linking, structured data), then a staging deployment where performance is measured against per-template CWV budgets, and then production deployment with post-publish monitoring. That pipeline typically adds 24 to 48 hours between "draft done" and "live on site." And it costs more to operate because it requires tooling and engineering time.

Agencies that treat them as separate disciplines describe a pipeline where content goes through editorial review, gets optimized for keywords and metadata, and gets published directly to production. Performance is reviewed in a monthly technical SEO audit. By the time someone catches a problem, 30 or 40 articles have been published with the same issue.

The pricing difference between these two approaches is meaningful. Agencies with integrated technical-content pipelines typically charge $8,000 to $15,000 per month for content programs at the 30+ articles-per-month level. Agencies treating performance as a separate workstream often quote $4,000 to $8,000 for similar content volume, with technical SEO audits billed separately at $2,000 to $5,000 per quarter. The second option looks cheaper until you're paying emergency rates to fix six months of accumulated CWV damage.
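Annualizing those quotes shows how much remediation the cheaper option can absorb before it stops being cheaper. This is simple arithmetic on the ranges above, comparing low end to low end and high end to high end, which is itself a simplifying assumption.

```python
def annual_cost(monthly: int, quarterly_audit: int = 0) -> int:
    """Twelve monthly retainers plus four quarterly audits."""
    return monthly * 12 + quarterly_audit * 4


# (low end, high end) of each pricing range quoted in the article.
integrated = (annual_cost(8_000), annual_cost(15_000))
separate = (annual_cost(4_000, 2_000), annual_cost(8_000, 5_000))

# Annual difference at each end of the range.
gap = (integrated[0] - separate[0], integrated[1] - separate[1])

print(f"Integrated: ${integrated[0]:,} to ${integrated[1]:,} per year")
print(f"Separate:   ${separate[0]:,} to ${separate[1]:,} per year")
print(f"Gap:        ${gap[0]:,} to ${gap[1]:,} per year")
```

On these figures the integrated pipeline costs roughly $40,000 to $64,000 more per year, so the separate-workstream option only stays cheaper if the eventual cleanup costs less than that.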

As Adobe's CWV overview emphasizes, strong Core Web Vitals improve UX, lower bounce rates, and increase conversions because pages load faster and stay visually stable. Those outcomes disappear when an agency scales content without maintaining the performance floor. And if your agency guarantees rankings while ignoring the infrastructure required to support the content volume they're producing, the guarantee is hollow.

Here's what I look for in agency evaluations specifically around content scaling:

Pre-publish CWV validation: Does the agency measure LCP, INP, and CLS on staging before deploying content to production?

Template performance budgets: Has the agency defined per-template performance ceilings, and do they enforce them?

Third-party script governance: Does the content workflow include rules about which embeds, widgets, and tracking scripts are allowed on which page types?

Indexing monitoring: Does the agency track crawl rates and index coverage as part of their content performance reporting, or do they only report keyword rankings and traffic?

Rollback protocols: When a new content template or embed type degrades CWV, how quickly can the agency identify and revert the change?

Agencies that can answer all five of these questions with specific tools and timelines are the ones whose content scaling programs don't break sites.
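Of the five, third-party script governance is the easiest to turn into an enforceable rule. A minimal sketch, assuming a hypothetical allowlist of script hosts per page type (the page types and hostnames below are placeholders):

```python
from urllib.parse import urlparse

# Hypothetical allowlist: which third-party script hosts each page type may load.
ALLOWED_SCRIPT_HOSTS = {
    "standard-post": {"analytics.example.com"},
    "video-first": {"analytics.example.com", "player.example.net"},
}


def disallowed_scripts(page_type: str, script_srcs: list[str]) -> list[str]:
    """Return the script URLs whose host is not allowed on this page type."""
    allowed = ALLOWED_SCRIPT_HOSTS.get(page_type, set())
    blocked = []
    for src in script_srcs:
        host = urlparse(src).hostname
        if host is None:
            # Relative URL: treated as a first-party script and allowed.
            continue
        if host not in allowed:
            blocked.append(src)
    return blocked
```

A page type with no entry gets an empty allowlist, so every third-party script on it is reported until someone makes an explicit decision about it.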

The Telegraph Principle Applied to Agency Selection

The Telegraph experiment didn't reveal anything technically surprising. Every web developer knows that latency kills engagement. What it did was quantify the relationship with enough precision to make business decisions: 4 seconds of delay costs you 11% of page views, and the curve steepens from there.

The equivalent principle for content scaling is this: every piece of content you publish without validating its performance impact is adding latency you can't see in your analytics until the aggregate effect crosses a threshold. By the time your CWV scores turn red in Search Console, you're looking at a remediation project, not a quick fix.

When you're evaluating agencies for content scaling programs, the conversation shouldn't start with "how many pieces can you produce per month" or "what's your cost per article." It should start with "show me your performance monitoring pipeline and tell me what happens when a new content template fails a Core Web Vitals check." Agencies that respond with a specific workflow and toolchain understand content scaling and technical SEO as a single discipline. Agencies that respond with "we run Lighthouse reports monthly" are the ones whose clients end up in my inbox six months later, asking me to evaluate what went wrong.

The Telegraph proved that speed degradation has a non-linear cost curve. Content producers scaling output are running that same experiment on their own sites every month, whether they realize it or not. The difference between a good agency and a mediocre one comes down to whether anyone's actually watching the numbers while the content machine runs.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
