Beyond Rankings: How Google Reviews Drive Hidden SEO Value Your Agency Isn't Auditing

Straight North's analysis of Google's hidden ranking signals surfaced a conclusion that should make every white-label SEO provider uncomfortable: the reason most agencies ignore reviews in their audits is the same reason Google trusts them. Reviews are hard to game. They carry experiential, real-world validation that Google's systems treat as fundamentally different from on-page optimization or link profiles. And because reviews can't be manufactured through technical optimization alone, they've quietly become one of the most reliable local SEO trust signals in Google's toolkit.

I pulled audit templates from 14 different white-label SEO providers over the past six months as part of a vendor evaluation project. Twelve of those templates covered crawl health, backlink profiles, schema markup, Core Web Vitals, and on-page keyword density. Exactly two included any mention of review velocity. Zero included review sentiment scoring or keyword extraction from review text.

That gap tells a specific story, and it's the one I want to dissect here.

The 10% That Never Makes It Into the Audit

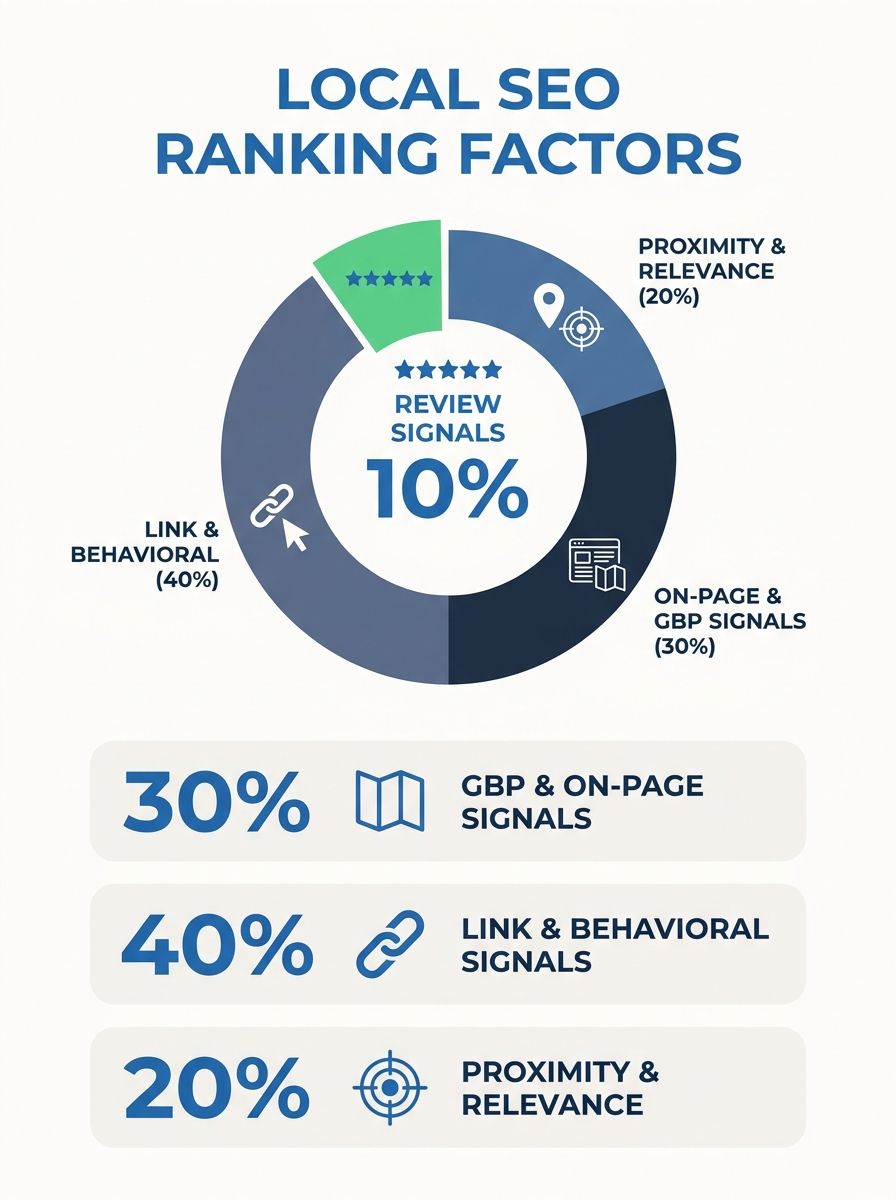

RevuKit's 2026 breakdown of local ranking factors estimates that Google reviews affect local pack visibility through rating, recency, volume, and response activity. The combined weight of these signals sits at approximately 10% of local ranking factors. That's a meaningful slice, roughly equivalent to the weight given to behavioral signals like click-through rate and dwell time.

But here's the operational problem for white-label providers: reviews don't fit neatly into the technical audit workflow. Crawl tools don't surface review sentiment. Rank trackers don't measure review velocity. The standard toolchain that white-label teams use to generate audit deliverables has no module for analyzing what customers are actually saying in a client's Google Business Profile (GBP) reviews.

So the 10% gets dropped. Not deliberately, but structurally. The template doesn't have a row for it, the automation doesn't flag it, and the fulfillment team moves on to the next client.

I've covered how to map authority flow before an agency touches a site, and the same principle applies here. If you don't audit the signal before you build the strategy, you're optimizing blind. The difference with reviews is that the data already exists. It's sitting in every client's GBP right now. Nobody's extracting it.

Volume Trumps Star Rating in the Local Pack

The most instructive finding I've encountered on the SEO value of Google reviews comes from Consumer Fusion's research into Google's review algorithm. Their analysis found that a high number of positive reviews signals to Google that a business is a trusted, recognized leader in its industry, which makes it more likely to appear in local search results.

The real-world implication is counterintuitive. A business with 100 reviews and a 4.1-star average frequently outranks a competitor with 20 reviews and a 4.5-star average. The volume of social proof carries more algorithmic weight than the marginal difference in star rating. For white-label teams reporting to agency partners, this creates an uncomfortable conversation: the client with the "better" rating may be losing visibility to a competitor who simply has more reviews.

This dynamic plays out in local packs across every vertical I've audited, from dental practices and HVAC companies to law firms and restaurants. Volume establishes baseline authority; recency keeps the listing competitive. Practitioners in Reddit's r/localseo community have discussed this extensively and describe the same split: volume builds trust, while the recency and frequency of new reviews keep a listing "alive."

The data on consumer behavior reinforces why Google weighs things this way. Seventy-three percent of consumers trust only reviews from the past 30 days. A listing that hasn't received a review in two months looks stale to users and, apparently, to Google's systems. For white-label operations, this means review velocity needs its own reporting metric alongside keyword rankings and traffic. If you're already thinking about how to structure that, I broke down the review velocity problem and why recency outweighs volume in a dedicated piece.
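To make that metric concrete, here is a minimal Python sketch of a recency check. It assumes review dates have already been exported from the client's GBP as a list of `datetime.date` values; the function name `recency_snapshot` and the 60-day staleness threshold (echoing the two-month example above) are illustrative assumptions, not a Google-defined cutoff.

```python
from datetime import date


def recency_snapshot(review_dates, today, stale_after_days=60):
    """Summarize how fresh a listing's reviews look as of `today`.

    review_dates: list of datetime.date values, one per review (assumed to be
    exported from the client's GBP already; that step is not shown).
    stale_after_days: hypothetical cutoff echoing the "two months without a
    review" example; it is not a documented Google threshold.
    """
    if not review_dates:
        return {"days_since_last": None, "share_last_30d": 0.0, "stale": True}
    days_since_last = (today - max(review_dates)).days
    recent = sum(1 for d in review_dates if (today - d).days <= 30)
    return {
        "days_since_last": days_since_last,
        "share_last_30d": recent / len(review_dates),
        "stale": days_since_last > stale_after_days,
    }


if __name__ == "__main__":
    sample = [date(2025, 6, 20), date(2025, 5, 2), date(2025, 3, 15), date(2024, 12, 1)]
    print(recency_snapshot(sample, today=date(2025, 6, 30)))
```

With the sample dates, the most recent review is 10 days old and only one of four reviews (25%) falls inside the 30-day window consumers say they trust, which is the gap a velocity metric would flag.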

When AI Overviews Started Reading Review Sentiment

Google's AI Overviews now pull live data directly from GBP profiles. Star ratings, review counts, and actual review text all feed into AI-generated shortlists for queries like "best options near me" or "top-rated [service] in [city]." This shift has turned review sentiment analysis from a nice-to-have customer experience metric into a direct input for search visibility.

Local Falcon's guide to brand sentiment analysis for AI visibility explains why this matters: AI models frequently favor user-generated content like reviews and social media comments over a brand's own content. When an AI Overview recommends a business warmly, that framing often reflects what the model absorbed from real customer sentiment signals online. The business's own website copy has less influence on how AI systems present it than the aggregate tone of its reviews.

This is where the white-label reporting gap becomes actively harmful. An agency partner delivering monthly reports that show keyword positions and organic traffic trends is missing the signal that determines whether their client appears in AI-generated recommendations at all. The client could rank well in traditional organic results while being entirely absent from AI Overviews because their review sentiment profile doesn't match what the model needs to confidently recommend them.

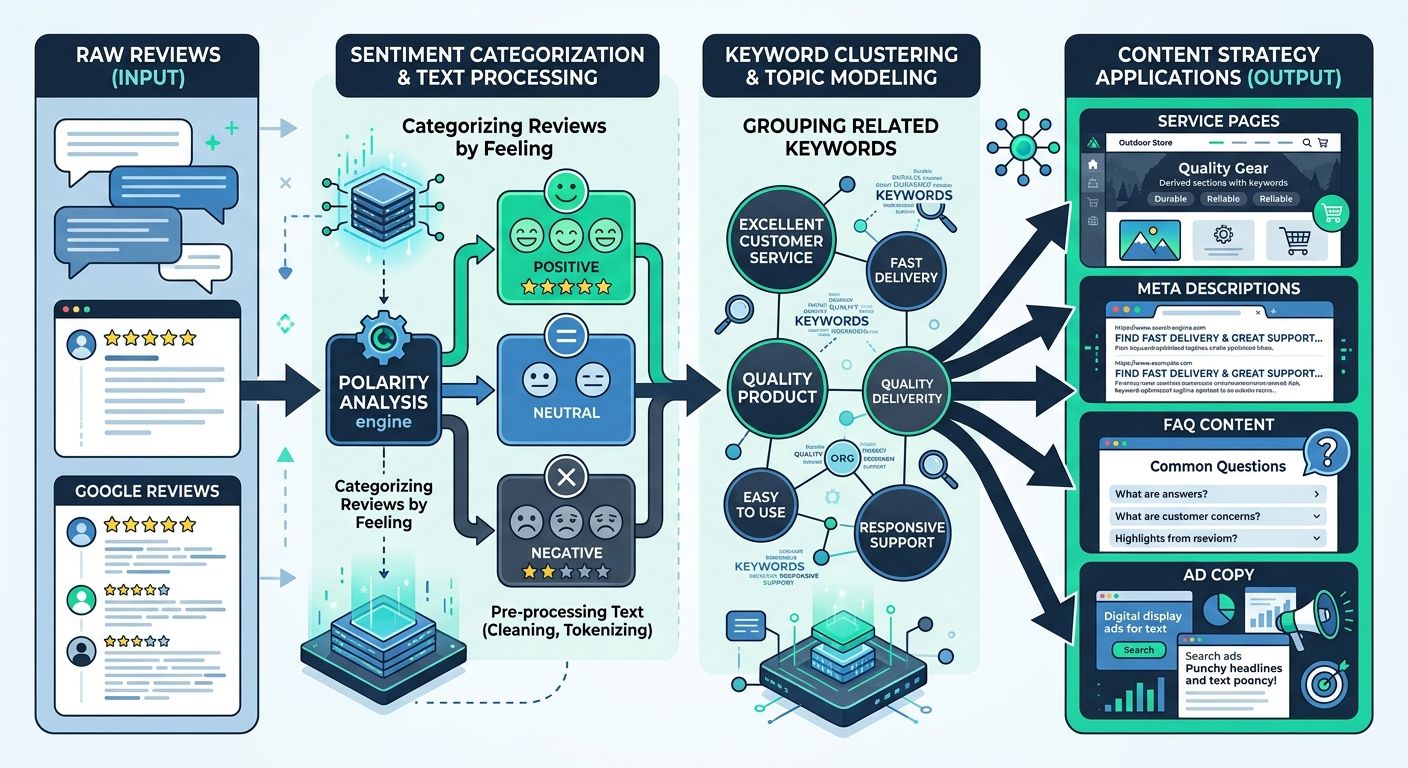

Sentiment analysis at scale requires categorizing reviews by topic (service quality, pricing, wait times, staff interactions) and scoring each category independently. A business might have overwhelmingly positive sentiment around product quality but consistently negative mentions of customer service response times. That granular breakdown tells you where the vulnerability is, and it tells you what content to create on the client's service pages to address the gap.
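A deliberately simple, lexicon-based Python sketch of per-topic scoring follows. The topic keywords and the positive/negative word lists are hypothetical placeholders; a production audit would more likely use a trained sentiment model, but the shape of the output (counts per topic rather than one aggregate score) is the point being illustrated.

```python
import re
from collections import defaultdict

# Hypothetical topic keywords; tune these per vertical in a real audit.
TOPICS = {
    "service_quality": {"repair", "quality", "work", "job", "fixed"},
    "pricing": {"price", "pricing", "cost", "expensive", "cheap", "fair"},
    "wait_times": {"wait", "waited", "late", "slow", "quick", "fast", "response"},
    "staff": {"staff", "technician", "receptionist", "friendly", "rude", "team"},
}
# Tiny illustrative sentiment lexicon (an assumption, not a trained model).
POSITIVE = {"great", "excellent", "friendly", "fair", "quick", "fast", "fixed"}
NEGATIVE = {"rude", "slow", "late", "expensive", "waited", "terrible"}


def score_by_topic(reviews):
    """Count positive/negative/neutral sentences per topic.

    Each review is split into sentences. A sentence is labeled by comparing
    its positive and negative lexicon hits, then counted toward every topic
    whose keywords it contains.
    """
    counts = defaultdict(lambda: {"positive": 0, "negative": 0, "neutral": 0})
    for review in reviews:
        for sentence in re.split(r"[.!?]+", review.lower()):
            words = set(re.findall(r"[a-z']+", sentence))
            if not words:
                continue
            pos, neg = len(words & POSITIVE), len(words & NEGATIVE)
            label = "positive" if pos > neg else "negative" if neg > pos else "neutral"
            for topic, keywords in TOPICS.items():
                if words & keywords:
                    counts[topic][label] += 1
    return dict(counts)


if __name__ == "__main__":
    sample = [
        "The repair was excellent. Front desk staff were rude.",
        "Fair price and quick response.",
        "We waited three hours for the technician.",
    ]
    for topic, tally in score_by_topic(sample).items():
        print(topic, tally)
```

On these three sample reviews, service quality and pricing come out positive while staff collects two negative mentions, the kind of split an aggregate star rating hides.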

The agencies that have pivoted toward recognition and authority as core strategy pillars are already closer to understanding this. Review sentiment is the most direct measure of how a business is perceived in the wild, and AI systems now consume that perception data as a primary input.

The Keyword Layer Clients Generate for Free

Here's the part that consistently surprises agency operators when I walk them through a review audit: customer reviews contain long-tail, conversational keywords that perfectly match how people search. And the clients are generating this keyword data for free, every single day, without anyone extracting it.

In 2024, 81% of Google reviews included written comments rather than star-only ratings. Those comments describe specific experiences using natural language. A plumbing customer writes "same-day emergency repair" and "fair pricing for weekend work." A restaurant patron mentions "gluten-free menu options" and "outdoor seating with dog-friendly patio." Each of these phrases maps directly to a real search query.

Insight7's research on generating keywords from customer reviews confirms that extracting keywords from reviews enables targeted marketing strategies. When businesses know what customers value or criticize, they can tailor their messaging to match their audience's actual vocabulary. RicketyRoo's guide to using reviews for local keyword research pushes this further, arguing that incorporating customer-centric keywords from reviews increases relevance and conversion rates in ways that traditional keyword tools miss.

Review keyword extraction works as a practical workflow in three stages:

Export and aggregate all review text from GBP (minimum 6 months of data, ideally 12 months)

Cluster by theme using sentiment analysis categories: service quality, pricing, speed, staff, specific products or services mentioned by name

Map clusters to content gaps on the client's website, identifying phrases that appear repeatedly in reviews but nowhere on the site itself
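The third stage can be sketched in Python as a phrase count plus a containment check. This assumes stage one has already produced a list of review strings and that the site's visible text has been scraped into a single string; the stopword list, the two- and three-word phrase lengths, the `min_count` threshold, and all function names are illustrative assumptions, and the stage-two theme clustering is left out for brevity.

```python
import re
from collections import Counter

# Small illustrative stopword list (an assumption; use a fuller list in practice).
STOPWORDS = {"the", "a", "an", "and", "or", "for", "to", "of", "was", "were",
             "is", "with", "on", "in", "at", "we", "our", "they", "it", "very"}


def tokenize(text):
    """Lowercase word tokens, keeping hyphenated terms like 'same-day'."""
    return re.findall(r"[a-z0-9'-]+", text.lower())


def review_phrases(reviews, lengths=(2, 3)):
    """Count in how many reviews each 2- or 3-word phrase appears,
    skipping phrases that start or end with a stopword."""
    counts = Counter()
    for review in reviews:
        words = tokenize(review)
        seen = set()
        for n in lengths:
            for i in range(len(words) - n + 1):
                gram = words[i:i + n]
                if gram[0] not in STOPWORDS and gram[-1] not in STOPWORDS:
                    seen.add(" ".join(gram))
        counts.update(seen)  # one count per review, not per mention
    return counts


def content_gaps(reviews, site_text, min_count=2, top=20):
    """Phrases found in at least `min_count` reviews but absent from the site text."""
    site = " " + " ".join(tokenize(site_text)) + " "
    ranked = sorted(review_phrases(reviews).items(), key=lambda kv: (-kv[1], kv[0]))
    gaps = [(p, c) for p, c in ranked if c >= min_count and f" {p} " not in site]
    return gaps[:top]


if __name__ == "__main__":
    reviews = [
        "Same-day emergency repair and fair pricing.",
        "Fair pricing for weekend work, same-day emergency repair too.",
        "Friendly technician, fair pricing.",
    ]
    site = "We offer emergency plumbing repair across the city."
    print(content_gaps(reviews, site))
```

On the sample data, "emergency repair" surfaces as a gap even though the site says "emergency plumbing repair", which is exactly the kind of wording mismatch this stage is meant to catch. Padding phrases with spaces keeps "fair pricing" from matching inside a longer word such as "unfair".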

That third step is where the value lives for white-label fulfillment teams. You can build an entire content calendar around the language customers use in reviews, and every piece of content you create will be pre-validated by real demand signals. We published a walkthrough on using ChatGPT to extract SEO value from Google reviews that covers the tactical execution in detail, including how to run the extraction in under 90 minutes per client.

For white-label operations delivering content alongside technical SEO, this feedback loop changes the economics of content production. Instead of relying solely on keyword research tools that surface the same terms your client's competitors are targeting, you're pulling differentiated language directly from the client's own customer base.

Why This Signal Keeps Falling Off the White-Label Template

The structural reason reviews don't make it into white-label audit deliverables is straightforward: the standard fulfillment stack wasn't built to handle them. Crawl tools analyze site architecture. Rank trackers monitor keyword positions. Backlink tools assess link profiles. GBP tools exist, but they're typically siloed from the main audit workflow, used by a separate team or not used at all.

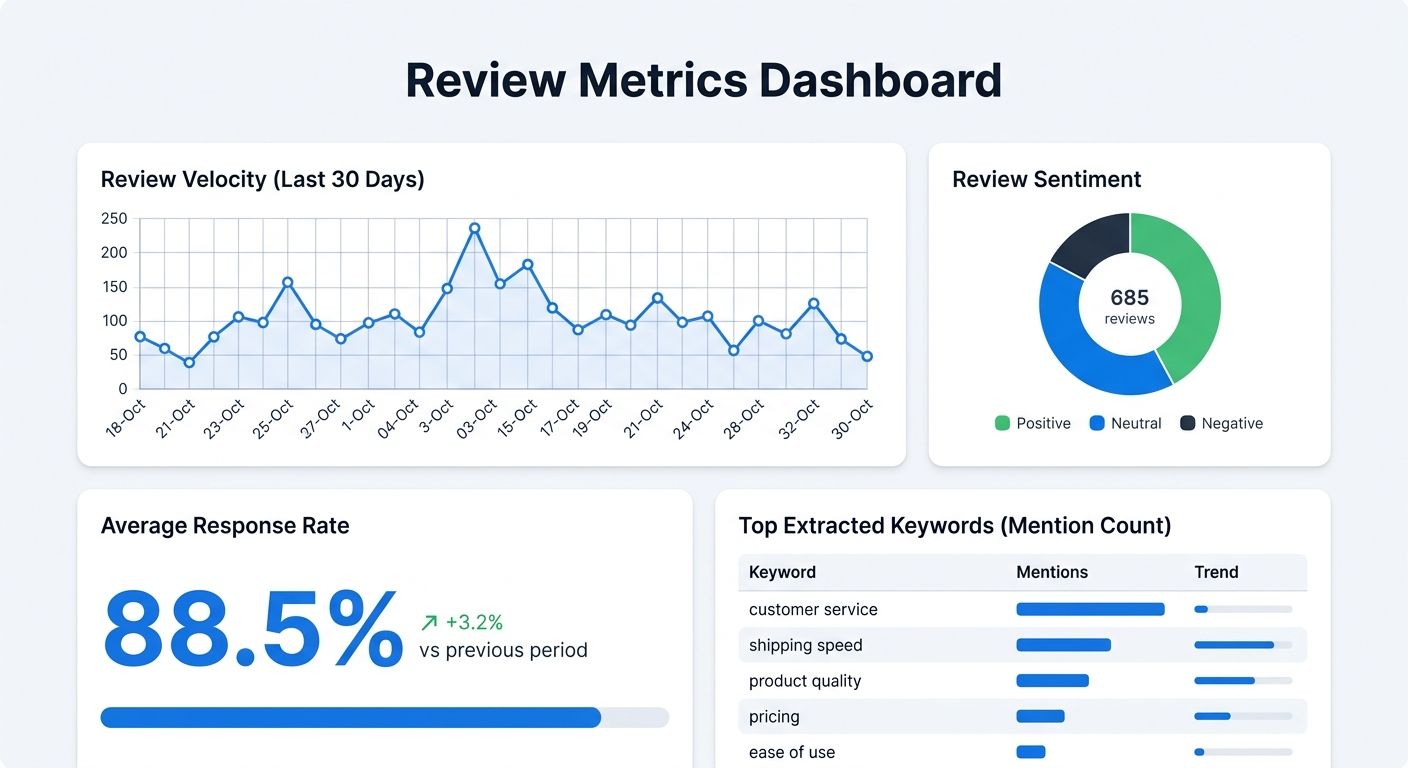

Adding review analysis to a white-label audit template requires four specific line items:

Review velocity tracking: Number of new reviews per week over a rolling 90-day window, with alerts when velocity drops below the competitive median for the client's local market

Sentiment distribution scoring: Percentage of reviews categorized as positive, neutral, and negative, broken down by service category (not aggregate star rating alone)

Response rate and response time: Percentage of reviews that received an owner response, and average time to response, since Google interprets response activity as a trust signal

Keyword frequency analysis: The top 20 phrases extracted from review text over the past 12 months, mapped against existing on-page content to identify gaps
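The two time-based line items, review velocity and response activity, reduce to a few lines of Python. The sketch below assumes review and reply timestamps are available as `datetime` objects and that weekly velocities for local competitors have been gathered separately; all function names and sample figures are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median


def weekly_velocity(review_times, now, window_days=90):
    """Average new reviews per week over the rolling window ending at `now`."""
    start = now - timedelta(days=window_days)
    in_window = [t for t in review_times if start < t <= now]
    return len(in_window) / (window_days / 7)


def below_market_median(client_velocity, competitor_velocities):
    """Alert condition: client velocity under the local competitive median."""
    return client_velocity < median(competitor_velocities)


def response_stats(reviews):
    """reviews: list of (created, replied) pairs; replied is None if unanswered.

    Returns (response_rate, average_hours_to_respond); the average is None
    when no review has a reply.
    """
    if not reviews:
        return 0.0, None
    answered = [(c, r) for c, r in reviews if r is not None]
    rate = len(answered) / len(reviews)
    if not answered:
        return rate, None
    total_seconds = sum((r - c).total_seconds() for c, r in answered)
    return rate, total_seconds / len(answered) / 3600


if __name__ == "__main__":
    now = datetime(2025, 6, 30, 12, 0)
    times = [now - timedelta(days=d) for d in (1, 10, 20, 45, 80, 120)]
    v = weekly_velocity(times, now)  # 5 reviews in 90 days, about 0.39/week
    print(round(v, 2), below_market_median(v, [0.5, 0.2, 0.8]))
    pairs = [
        (now - timedelta(hours=48), now - timedelta(hours=24)),
        (now - timedelta(hours=10), now - timedelta(hours=8)),
        (now - timedelta(hours=5), None),
    ]
    print(response_stats(pairs))  # roughly 67% answered, 13 hours on average
```

Using the median rather than the mean of competitor velocities keeps one unusually review-heavy competitor from setting the alert threshold for the whole market.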

These four metrics take a competent analyst about 45 minutes per client to compile using existing tools. For a white-label operation running 30 or 40 local SEO clients, that's roughly 22.5 to 30 hours of additional fulfillment work per month (30 × 45 minutes = 22.5 hours; 40 × 45 minutes = 30 hours). The cost is real, but so is the competitive advantage. Agencies that can present review-informed audit findings to their clients are delivering intelligence that most competitors aren't even looking at: of the 14 provider templates I reviewed, only two mentioned review velocity and none scored review sentiment.

The reporting angle matters for agency retention, too. Review data is inherently specific to each client, which means the deliverable feels customized in a way that generic rank tracking reports never do. When an agency partner shows a client that their customers mention "friendly front desk staff" 47 times in reviews but the website's about page never references staff or team culture, that's a concrete, actionable finding. It builds trust in the agency relationship because it demonstrates attention to the client's actual business, not just their domain metrics.

Birdeye's research reinforces this from the other direction: strong ratings and consistent feedback improve how a business appears in local search results, which means the white-label team that helps clients improve their review profile is directly contributing to the ranking outcomes the agency is being paid to deliver. The review benchmarking scorecard approach we published earlier this year gives you a framework for measuring that contribution against industry-specific baselines.

Google makes more than 3,200 changes to its search algorithm per year, and the trend line is clear: signals that reflect genuine user experience carry increasing weight. Reviews are the most abundant, most authentic, and most algorithmically accessible expression of user experience available to Google's systems. The white-label providers who build review analysis into their standard deliverable won't need to explain why they charge more per client. The audit findings will do that work for them.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
