The Review Benchmarking Scorecard: Measuring SEO Impact Against Industry Standards in 2026

Every agency pitch deck I've seen this year includes a slide about reviews. Star rating, total count, maybe a screenshot of a glowing testimonial. What none of them include is a benchmarking scorecard: a structured framework that compares review velocity, sentiment depth, response rate, and keyword density against vertical-specific standards. The absence is telling, because those granular review metrics, measured against industry standards, are the ones that actually move local rankings.

After evaluating over 200 agencies and auditing local profiles across dozens of verticals, I've distilled review benchmarking for SEO into six rules. Each one maps to a specific metric you should track, a threshold you should hit, and a common mistake that burns businesses that think their reviews are "fine." Together, these rules form the scorecard. If you're tracking local review performance without them, you're flying blind with a dashboard full of vanity numbers.

Benchmark velocity in 30-day windows, not lifetime totals

The single biggest misconception in review strategy is that total review count determines your ranking power. A Search Atlas study of 3,269 local businesses found that review count accounts for 19.2% of ranking performance across all positions, rising to 26% within the top 10. That's significant. But the study doesn't distinguish between a business that accumulated 200 reviews over five years and one that earned 200 reviews with consistent monthly additions. And that distinction matters enormously.

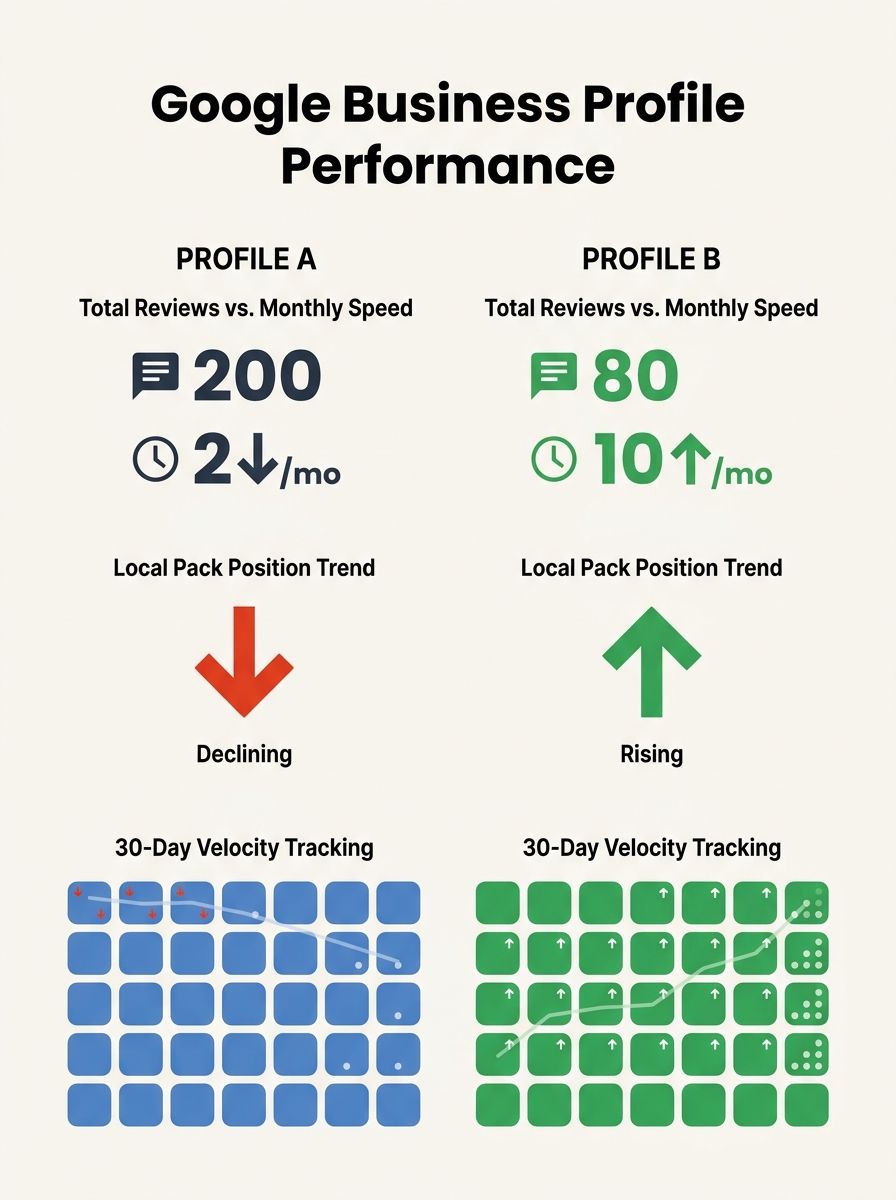

Modern algorithms prioritize the speed of new feedback over historical totals, as research on algorithmic momentum in retail search has documented. Google's local search algorithm treats review velocity as one of its most influential signals, and the practical implication is clear: a profile earning 8-12 reviews per month will outperform a profile with three times the total count but only 1-2 new reviews monthly.

Your scorecard metric: count reviews received in the trailing 30 days. Compare that number month-over-month and against your top three local competitors. If you're below the competitor median, your review generation process needs immediate attention regardless of your total count. We've covered the nuances of why velocity matters more than volume in depth before, and the data has only gotten more decisive since then.
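The trailing-window comparison is simple enough to automate. The following is a minimal sketch that assumes you can export a list of review dates for your profile and for each local competitor. The function names and sample dates are hypothetical, and "median of competitors" follows the rule in the paragraph above.

```python
from datetime import date, timedelta
from statistics import median

def trailing_30_day_count(review_dates, as_of):
    """Count reviews posted in the 30 days ending on `as_of` (inclusive)."""
    window_start = as_of - timedelta(days=30)
    return sum(1 for d in review_dates if window_start < d <= as_of)

def velocity_report(own_dates, competitor_dates, as_of):
    """Compare trailing-30-day velocity month over month and against competitors.

    own_dates: review dates for your own profile.
    competitor_dates: one list of review dates per local competitor (e.g. top three).
    """
    current = trailing_30_day_count(own_dates, as_of)
    previous = trailing_30_day_count(own_dates, as_of - timedelta(days=30))
    competitor_counts = [trailing_30_day_count(c, as_of) for c in competitor_dates]
    competitor_median = median(competitor_counts)
    return {
        "trailing_30": current,
        "prior_30": previous,
        "month_over_month": current - previous,
        "competitor_median": competitor_median,
        # Below the competitor median = generation process needs attention,
        # no matter how large the lifetime total is.
        "needs_attention": current < competitor_median,
    }

# Hypothetical sample data.
report = velocity_report(
    own_dates=[date(2026, 3, 5), date(2026, 3, 20), date(2026, 2, 15)],
    competitor_dates=[
        [date(2026, 3, 10), date(2026, 3, 11), date(2026, 3, 12)],
        [date(2026, 3, 2)],
        [date(2026, 3, 3), date(2026, 3, 4), date(2026, 3, 25), date(2026, 3, 26)],
    ],
    as_of=date(2026, 3, 31),
)
print(report)  # 2 reviews this window vs. a competitor median of 3 -> needs_attention True
```

Lifetime totals never enter the calculation. That omission is deliberate, because the metric exists to expose exactly what cumulative dashboards hide.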

The velocity-versus-volume debate is effectively settled for 2026. Track the 30-day window. It's the number that predicts ranking movement, and it's the one most businesses ignore because their reporting tools default to cumulative totals.

Respond to at least 30% of reviews or watch your leads evaporate

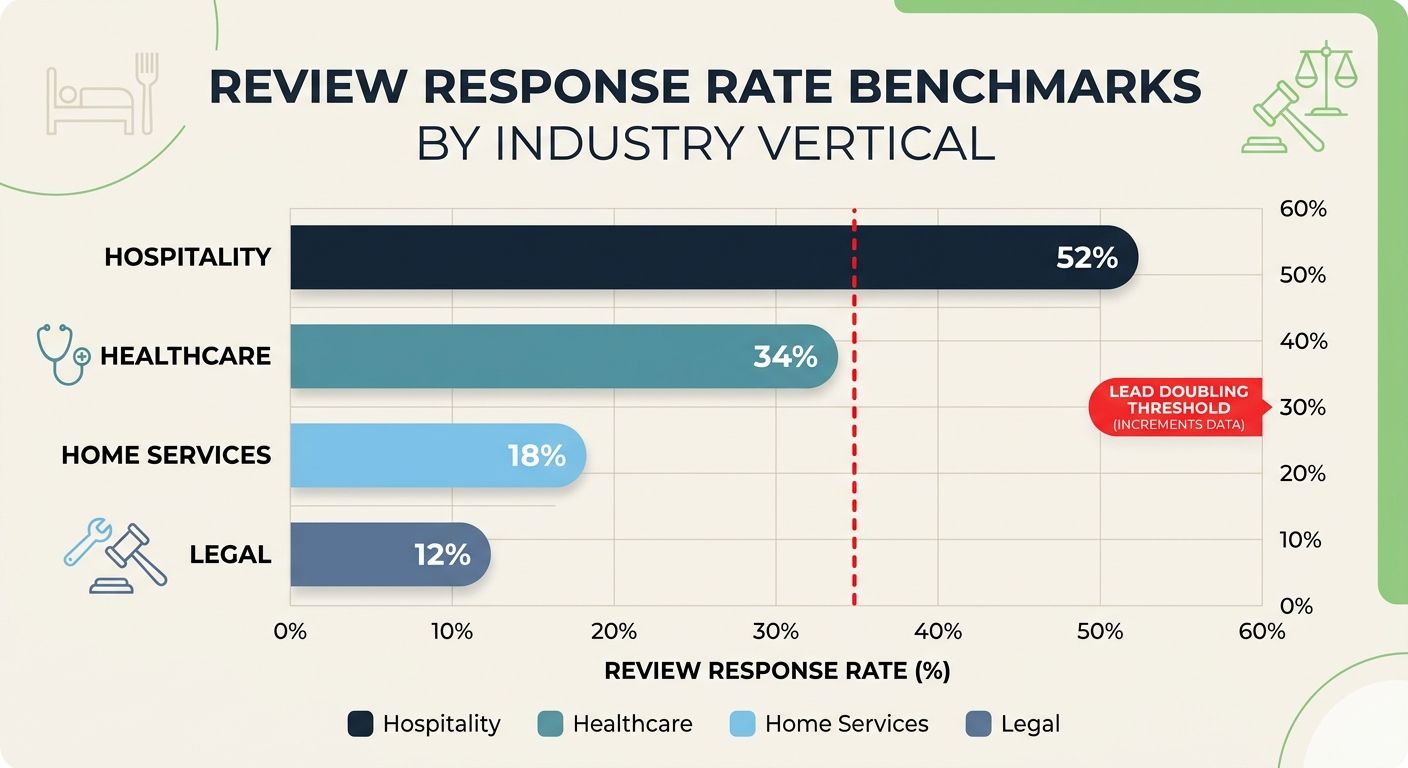

This rule has hard data behind it. According to Incremys' analysis of 2026 SEO statistics, responding to over 30% of reviews doubles the number of leads generated. Not "improves somewhat." Doubles.

And yet, the average local business responds to fewer than 15% of its Google reviews. That gap represents one of the easiest wins in local SEO, because response rate is entirely within your control. You don't need to wait for Google to crawl something. You don't need technical resources. You need someone spending 20 minutes a day writing thoughtful, specific replies.

The scorecard threshold is 30% as a floor, with 50%+ as the target for competitive verticals. Track your response rate monthly. If you run a multi-location business, track it per location, because franchise operators with inconsistent response habits will drag your aggregate numbers down. Hotel SEO agencies have been particularly aggressive about response rates because hospitality reviews carry enormous booking influence, and their playbooks are worth studying even if you're in a different vertical.
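Per-location tracking can be sketched in a few lines. This is an illustration that assumes you already have monthly counts of total and responded-to reviews for each location. The location names and numbers are hypothetical, and the 30% and 50% constants come from the thresholds above.

```python
RESPONSE_FLOOR = 0.30   # scorecard floor
RESPONSE_TARGET = 0.50  # target for competitive verticals

def classify_rate(rate):
    """Map a response rate (or None for no reviews) to a scorecard status."""
    if rate is None:
        return "no reviews"
    if rate < RESPONSE_FLOOR:
        return "below floor"
    if rate < RESPONSE_TARGET:
        return "meets floor"
    return "meets target"

def response_scorecard(locations):
    """locations maps a location name to (total_reviews, responded_reviews) for the month."""
    rows = {}
    for name, (total, responded) in locations.items():
        rate = responded / total if total else None
        rows[name] = (rate, classify_rate(rate))
    # The aggregate row shows how weak locations drag the overall number down.
    all_total = sum(t for t, _ in locations.values())
    all_responded = sum(r for _, r in locations.values())
    aggregate = all_responded / all_total if all_total else None
    rows["ALL LOCATIONS"] = (aggregate, classify_rate(aggregate))
    return rows

# Hypothetical month for a three-location operator.
monthly = {"Downtown": (100, 55), "Airport": (80, 12), "Suburb": (40, 14)}
for name, (rate, status) in response_scorecard(monthly).items():
    shown = "n/a" if rate is None else f"{rate:.0%}"
    print(f"{name:<14} {shown:>5}  {status}")
```

In this sample, the aggregate clears the 30% floor even though one location sits at 15%. That is why the per-location rows matter.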

Your responses should be substantive. A "Thanks for the review!" template doesn't count toward the kind of engagement that signals quality to both users and algorithms. Reference specific details from the customer's experience. Use natural keyword phrases where they fit. If someone mentions your "emergency plumbing repair" in their review and you echo that phrase in your response, you've added keyword-relevant content to your profile without any manipulation.
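A quick way to audit replies against this advice is to flag target keywords that a customer used but the reply never echoes, along with replies that are bare templates. Below is a rough sketch using plain case-insensitive substring matching. The `GENERIC_REPLIES` set and the function name are hypothetical, and a real audit would need a broader template list.

```python
# Hypothetical list of boilerplate replies that don't count as substantive.
GENERIC_REPLIES = {"thanks for the review!", "thank you!", "thanks!"}

def review_reply_check(review_text, reply_text, target_keywords):
    """Report which target keywords the reviewer used, which the reply failed
    to echo, and whether the reply is a bare template (substring matching only)."""
    review = review_text.lower()
    reply = reply_text.lower()
    mentioned = [kw for kw in target_keywords if kw.lower() in review]
    not_echoed = [kw for kw in mentioned if kw.lower() not in reply]
    return {
        "mentioned": mentioned,
        "not_echoed": not_echoed,
        "generic_template": reply.strip() in GENERIC_REPLIES,
    }

keywords = ["emergency plumbing repair", "water heater installation"]
review = "Fast emergency plumbing repair on a Sunday night."
print(review_reply_check(review, "Thanks for the review!", keywords))
print(review_reply_check(
    review,
    "Glad our emergency plumbing repair team got you sorted on a Sunday, Dana.",
    keywords,
))
```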

Weight keyword-rich review text over star averages

Star averages matter for consumer trust, but they're a blunt instrument for SEO benchmarking. The real ranking signal lives in the text of the review itself. Reviews that are recent, detailed, include photos, and contain natural keywords carry the most weight in local search algorithms.

Here's what that means for your scorecard: instead of tracking your average star rating (which should be above 4.0; if it isn't, you have a service quality problem, not an SEO problem), track the percentage of your reviews that contain at least one of your target service keywords. A dental practice should know what percentage of its reviews mention "teeth whitening," "emergency dentist," or "invisalign." A law firm should track mentions of "car accident lawyer" or "personal injury."

You can do this manually with a spreadsheet filter, or you can use AI-assisted tools to extract keyword patterns from your review corpus. We outlined a practical method for extracting SEO value from Google reviews with ChatGPT that works well as a quarterly audit.

The benchmark to aim for: at least 25-30% of your reviews should contain at least one service-relevant keyword phrase. If you're below that, your review solicitation process is probably asking the wrong questions. The phrasing of the ask matters more than most businesses realize, because customers will mirror whatever specificity you signal.
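The spreadsheet-filter version of this check translates directly into code. The sketch below computes the share of reviews containing at least one target phrase, using case-insensitive whole-word matching. The sample reviews are hypothetical, and 0.25 is the lower end of the 25-30% benchmark above.

```python
import re

KEYWORD_COVERAGE_BENCHMARK = 0.25  # lower end of the 25-30% target

def keyword_coverage(reviews, keywords):
    """Share of reviews that contain at least one target keyword phrase
    (case-insensitive, whole-word match)."""
    if not reviews:
        return 0.0
    patterns = [re.compile(r"\b" + re.escape(kw) + r"\b", re.IGNORECASE) for kw in keywords]
    hits = sum(1 for text in reviews if any(p.search(text) for p in patterns))
    return hits / len(reviews)

# Hypothetical dental-practice sample.
dental_reviews = [
    "Great teeth whitening results, very gentle.",
    "Friendly front desk.",
    "Saw the emergency dentist the same afternoon.",
    "Quick cleaning.",
    "My Invisalign consult was thorough.",
    "Five stars.",
    "Parking was easy.",
    "Nice office.",
]
coverage = keyword_coverage(dental_reviews, ["teeth whitening", "emergency dentist", "invisalign"])
print(f"{coverage:.1%} keyword coverage; meets benchmark: {coverage >= KEYWORD_COVERAGE_BENCHMARK}")
```

Exact phrase matching undercounts paraphrases such as "whitened my teeth." That limitation is one reason an AI-assisted quarterly pass is still worth doing on top of it.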

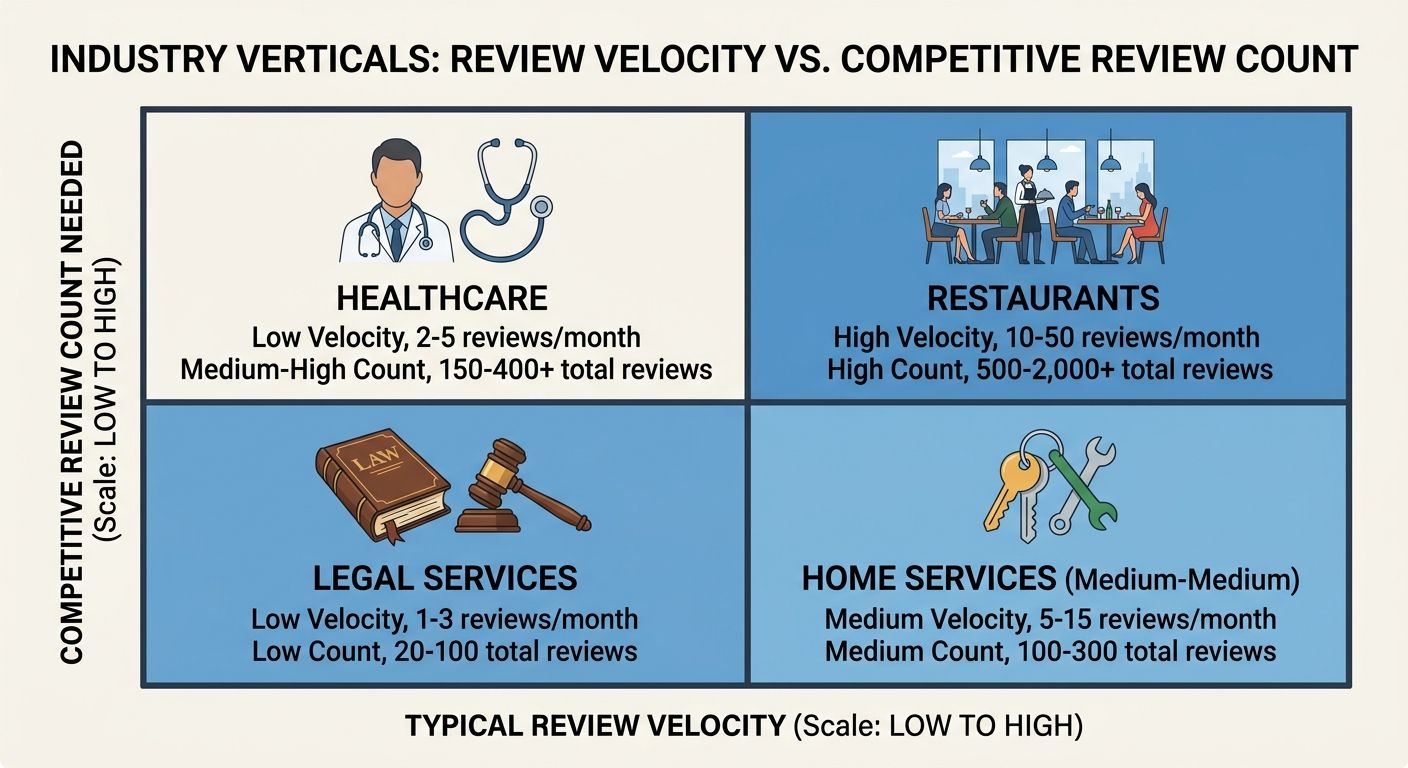

Compare against your vertical, not "all local businesses"

One of the most common mistakes I see in review benchmarking is comparing metrics against generic "local business" averages. A restaurant's review profile behaves nothing like a personal injury attorney's. Real estate SEO companies deal with review patterns that look completely different from what a plumber or dentist encounters, because real estate transactions happen less frequently but generate longer, more detailed reviews.

Vertical-specific benchmarks exist, and ignoring them makes your scorecard meaningless. Here's a rough framework based on data I've compiled across client audits and published research like WebFX's 2026 SEO benchmarks:

Restaurants and hospitality: Expect 50-200+ reviews with high velocity (10-30 per month for active locations). Star averages tend to cluster between 4.0 and 4.5. Response rate expectations from consumers are high in this space.

Home services (plumbing, HVAC, roofing): 30-100 reviews is competitive in most metros. Velocity of 4-8 per month puts you in the upper tier. Keyword density in reviews is often low, which means optimization here creates outsized gains.

Healthcare and dental: Review counts vary widely. 20-80 reviews is typical for a single-practitioner office. Patient privacy norms mean reviews tend to be shorter, so the ones that do contain detail carry extra weight.

Legal services: Lowest average review counts of any major local vertical. 15-40 reviews is often enough to be competitive. But the reviews that exist tend to be high-value, long-form narratives that carry substantial keyword signal.

B2B and professional services: Reviews matter less for direct Local Pack ranking in most B2B contexts, but B2B SEO agencies increasingly recognize that Google Business Profile authority influences branded search performance even when the Local Pack isn't the primary conversion path.

Your scorecard should include a column for "vertical benchmark" next to every metric. Without it, you'll either over-invest in review generation because you're comparing a law firm to a restaurant, or under-invest because your 25 reviews look fine against a generic average that doesn't reflect your competitive reality.
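One way to build that column is to keep the vertical ranges in a lookup table and generate each row's verdict from it. The ranges below are transcribed from the rough framework above and inherit its caveats. Note that the home-services velocity band is the article's "upper tier" figure, not a typical range, and open-ended "200+" is capped at 200. The dictionary keys and function name are hypothetical.

```python
# Ranges transcribed from the rough vertical framework above (not an external dataset).
VERTICAL_BENCHMARKS = {
    "restaurants_hospitality": {"review_count": (50, 200), "monthly_velocity": (10, 30)},
    "home_services": {"review_count": (30, 100), "monthly_velocity": (4, 8)},
    "healthcare_dental": {"review_count": (20, 80)},
    "legal": {"review_count": (15, 40)},
}

def benchmark_row(vertical, metric, value):
    """Return one scorecard row: (metric, value, vertical benchmark, verdict)."""
    band = VERTICAL_BENCHMARKS[vertical].get(metric)
    if band is None:
        return (metric, value, "no benchmark", "n/a")
    low, high = band
    if value < low:
        verdict = "below"
    elif value > high:
        verdict = "above"
    else:
        verdict = "within"
    return (metric, value, f"{low}-{high}", verdict)

# The same 25 reviews read very differently depending on the vertical.
print(benchmark_row("legal", "review_count", 25))                    # within 15-40
print(benchmark_row("restaurants_hospitality", "review_count", 25))  # below 50-200
```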

Track Local Pack signals separately from organic

The Local Pack and traditional organic results use overlapping but distinct ranking algorithms. Review signals carry significantly more weight in Local Pack rankings than in standard organic positions. A business can rank on page one organically for a service keyword while being invisible in the Local Pack because its review profile is weak relative to competitors in the same geographic area.

Your scorecard needs two separate sections. For Local Pack tracking, tools like Whitespark's local rank tracker let you monitor desktop and mobile rankings across Google's Local Pack, Maps, and organic results up to 100 positions deep. You can drill down by location and group by keyword clusters to see which specific aspects of your profile are performing and where gaps exist.

For organic rankings, review signals contribute less directly, but they still matter through secondary mechanisms: review content gets indexed, review schema can generate rich snippets, and strong review profiles contribute to overall domain authority signals. The Hashmeta guide on review velocity in local search documents how Google treats review signals as one of its most influential local ranking factors. Separating these tracking dimensions is essential for diagnosing where your profile underperforms.

If your Local Pack position is weak but your organic ranking is strong, focus your review efforts on velocity and recency. If both are weak, you have a broader authority problem that reviews alone won't solve. The scorecard should flag which scenario you're in so you allocate resources to the right problem.
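The flagging logic can be expressed as a small decision function applied per keyword. This sketch assumes you export Local Pack and organic positions from your rank tracker. The "strong" cutoffs (top three map listings, organic page one) are hypothetical choices that you should tune. The combinations the rule doesn't address return a neutral label.

```python
from typing import Optional

# Hypothetical cutoffs: "strong" Local Pack = top three listings,
# "strong" organic = page one (positions 1-10).
LOCAL_PACK_STRONG_MAX = 3
ORGANIC_STRONG_MAX = 10

def diagnose(local_pack_pos: Optional[int], organic_pos: Optional[int]) -> str:
    """Map one keyword's positions (None = not ranking) to the scenarios above."""
    pack_strong = local_pack_pos is not None and local_pack_pos <= LOCAL_PACK_STRONG_MAX
    organic_strong = organic_pos is not None and organic_pos <= ORGANIC_STRONG_MAX
    if not pack_strong and organic_strong:
        return "focus reviews on velocity and recency"
    if not pack_strong and not organic_strong:
        return "broader authority problem; reviews alone won't fix it"
    return "no review-specific flag"

# Hypothetical tracker export: keyword -> (Local Pack position, organic position).
rankings = {
    "emergency plumber": (None, 4),
    "water heater repair": (12, 35),
    "drain cleaning": (2, 6),
}
for keyword, (pack, organic) in rankings.items():
    print(f"{keyword}: {diagnose(pack, organic)}")
```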

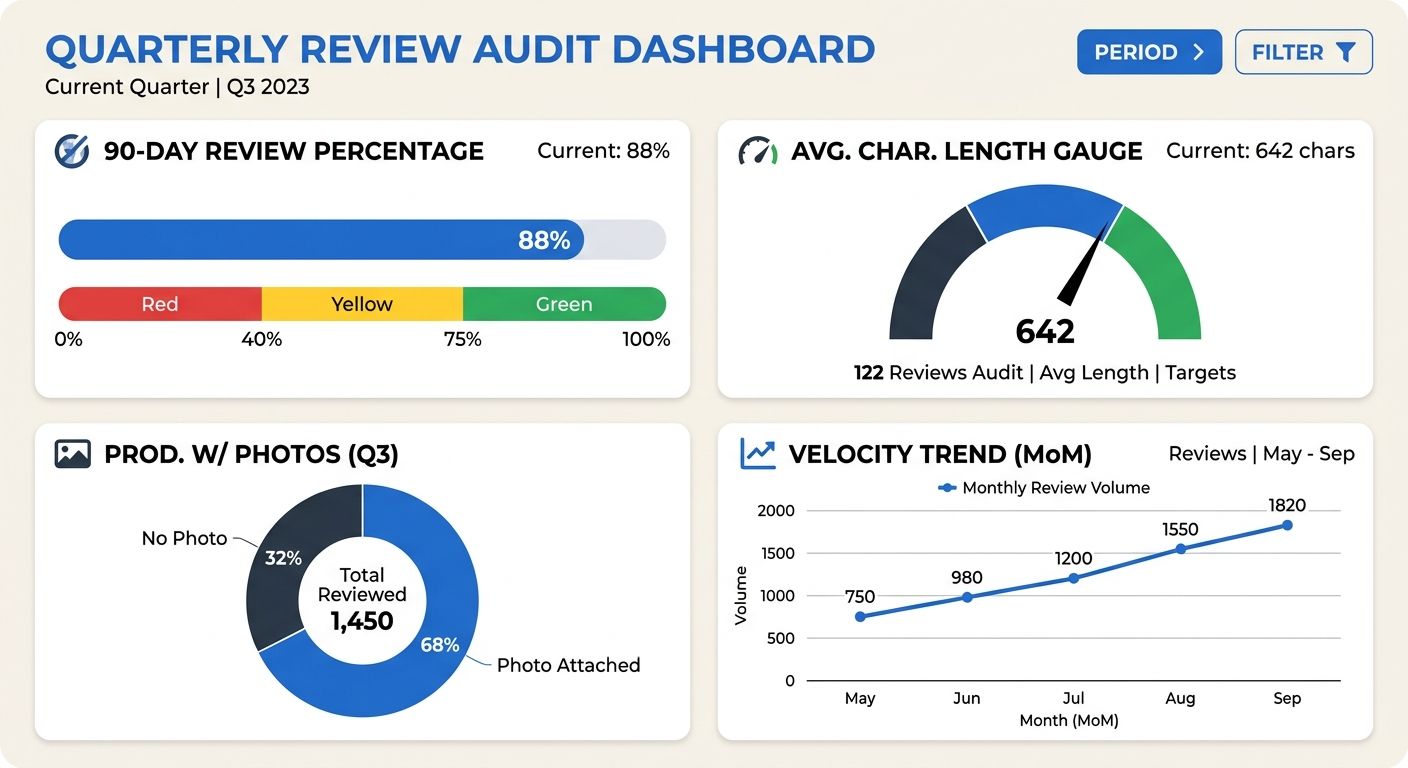

Audit review freshness and photo signals quarterly

Reviews age out of algorithmic relevance faster than most businesses realize. A profile where the most recent review is three months old sends a staleness signal that algorithms interpret as declining relevance.

Your quarterly audit should answer four questions:

What percentage of your total reviews were posted in the last 90 days?

What is the average character length of reviews received in the last 90 days? (Longer, more detailed reviews carry more signal.)

How many reviews include customer-uploaded photos?

Has your review velocity increased, decreased, or held steady compared to the prior quarter?

The answers form the freshness quadrant of your scorecard. If your 90-day review percentage is below 15% of total reviews, your generation process has stalled. If average character length is below 100 characters, your customers are leaving quick star ratings without substance. If photo-attached reviews are below 10% of the total, you're missing a quality signal that's easy to encourage by including a photo prompt in your review request flow.
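The four questions and their thresholds fit in one audit function. Below is a minimal sketch that assumes each review is exported with its posting date, text, and a photo flag. The `Review` type and flag wording are hypothetical. "Velocity" here compares review counts in the last 90 days against the 90 days before that.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class Review:
    posted: date
    text: str
    has_photo: bool = False

def freshness_audit(reviews, as_of):
    """Answer the four quarterly questions and flag the thresholds above."""
    recent_start = as_of - timedelta(days=90)
    prior_start = as_of - timedelta(days=180)
    recent = [r for r in reviews if recent_start < r.posted <= as_of]
    prior = [r for r in reviews if prior_start < r.posted <= recent_start]
    total = len(reviews)

    pct_recent = len(recent) / total if total else 0.0
    avg_length = sum(len(r.text) for r in recent) / len(recent) if recent else 0.0
    photo_share = sum(r.has_photo for r in reviews) / total if total else 0.0
    if len(recent) > len(prior):
        trend = "increased"
    elif len(recent) < len(prior):
        trend = "decreased"
    else:
        trend = "held steady"

    flags = []
    if pct_recent < 0.15:
        flags.append("generation stalled")
    if avg_length < 100:
        flags.append("reviews lack substance")
    if photo_share < 0.10:
        flags.append("add a photo prompt")
    return {
        "pct_last_90_days": pct_recent,
        "avg_chars_last_90_days": avg_length,
        "photo_share": photo_share,
        "velocity_vs_prior_quarter": trend,
        "flags": flags,
    }
```

Run it once per quarter with `as_of` set to the quarter's last day. A profile can show rising velocity and still trip all three threshold flags if a large backlog of old, short, photo-less reviews dominates the total.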

This is where concrete industry standards for online review metrics become critical for calibration. According to Rankability's benchmarks analysis, 99% of local businesses need to establish a formal review generation process, and a quarterly audit is how you determine whether the process you have is actually producing results or merely creating the illusion of activity.

Connecting review performance to actual revenue is where the scorecard gains executive credibility. If you need to make the case internally, we've written about proving that your SEO effort drives measurable revenue, and the same framework applies to review-specific metrics. The scorecard only earns its place in your reporting stack when the people signing off on budgets can see a line from review activity to pipeline impact.

When These Rules Collapse

Every rule above assumes a business operating in a competitive local market where Google's Local Pack matters. That assumption breaks in several predictable scenarios.

If your business operates primarily in B2B with long sales cycles and no meaningful local search presence, review velocity benchmarks are largely irrelevant to your SEO strategy. Your review energy is better spent on G2, Capterra, or industry-specific platforms where purchase decision-makers actually look.

If you're in a market with very few competitors (rural areas, hyperspecialized services), the benchmarks described here will feel impossibly high. That's expected. In low-competition markets, even modest review activity can dominate. Your scorecard thresholds should adjust downward based on what the top three competitors in your specific market are actually doing, not what a national benchmark prescribes.
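One way to encode that adjustment is to cap each threshold at whatever the local leaders actually achieve. This is a sketch under a stated assumption: it uses the strongest of the top three competitors as the bar. That is an illustrative choice, and the competitor median from the velocity rule is a gentler alternative. The function name is hypothetical.

```python
def local_threshold(national_benchmark, top_three_values):
    """Lower a scorecard threshold to what the local top three competitors
    actually achieve, but never raise it above the national/vertical benchmark.
    Using the strongest local competitor as the bar is an illustrative choice."""
    if not top_three_values:
        raise ValueError("need at least one local competitor value")
    return min(national_benchmark, max(top_three_values))

# Rural plumber: 4 reviews/month is the article's upper-tier home-services figure,
# but the three local leaders earn 1, 2, and 0 reviews per month.
print(local_threshold(4, [1, 2, 0]))
```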

And if AI Overviews continue to reshape how local search results appear (and they will), the relationship between review signals and visibility may shift in ways that require scorecard updates. AI systems already pull review sentiment into generated answers, which means the qualitative content of your reviews may grow more important than the quantitative metrics tracked here. Keep the scorecard structure. Expect the specific thresholds to evolve as Google's AI integration deepens.

The scorecard's value is the discipline it imposes: measuring specific signals at regular intervals against relevant comparisons. The numbers will change year over year, vertical by vertical, market by market. The habit of tracking them with precision and context is what separates businesses that grow their local visibility from businesses that check a star rating, feel satisfied, and wonder why the phone stopped ringing.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
