The Technical SEO Workflow Gap: Why Agencies Miss Critical Infrastructure Tasks Before Publishing

Content published without pre-flight technical checks is the single largest source of fixable ranking failures I find when auditing white-label SEO operations. Not thin content, not bad link profiles, not poor keyword targeting. The pages themselves ship with broken canonical tags, missing schema markup, uncompressed images, and orphaned URL structures because the production workflow never included a gate where someone with technical SEO knowledge actually reviewed the page before it went live.

I've evaluated over 200 agencies' delivery processes, and the pattern repeats with uncomfortable consistency. The content team produces the article. The reseller or client approves the draft. Someone hits publish. Then, weeks later, a technical audit surfaces fifteen fixable issues per page that should have been caught on day zero. This is the technical SEO workflow gap, and it's baked into how most white-label providers structure their fulfillment operations.

The conventional wisdom says you publish content first and optimize it later. That approach is wrong, and I'm going to walk through three specific bodies of evidence that demonstrate why.

White-Label Fulfillment Splits Content From Infrastructure By Design

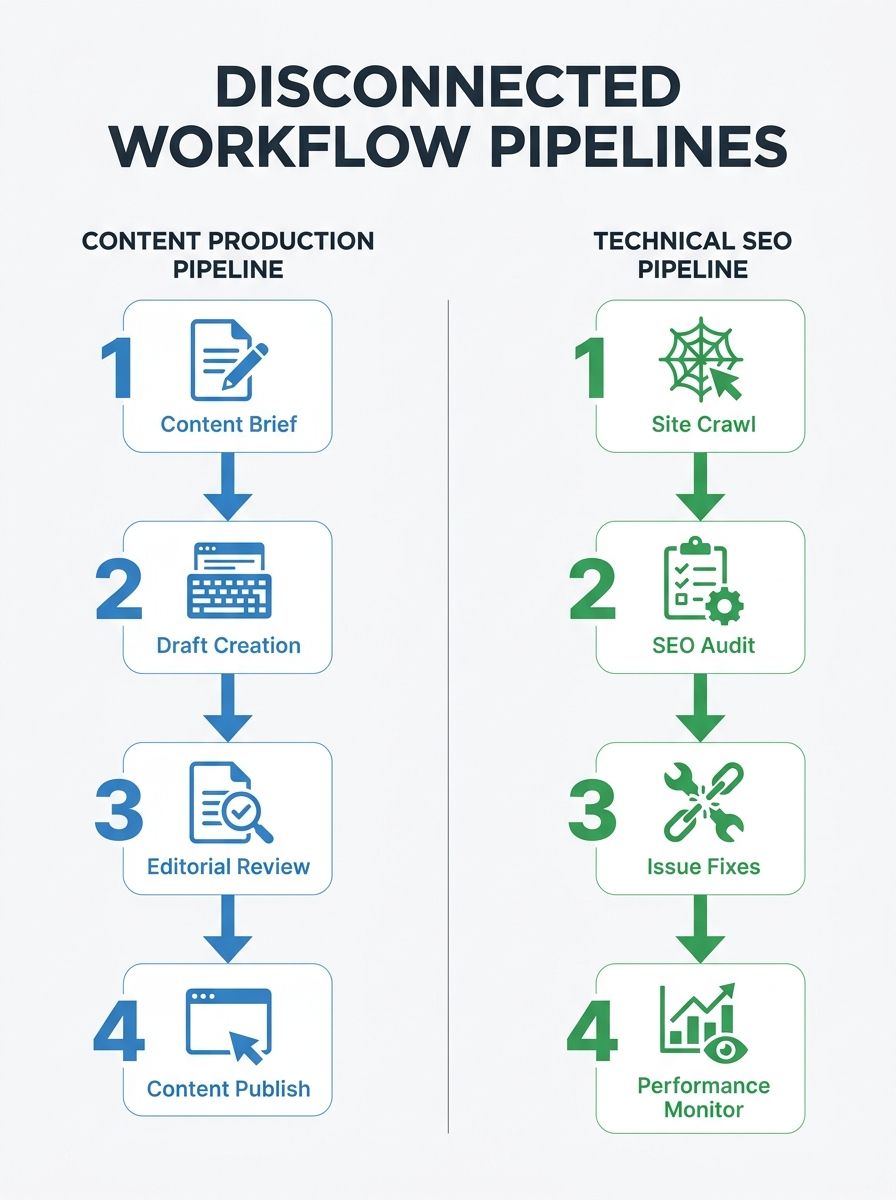

The root cause of agency SEO process gaps in pre-publication work is structural, not personnel. White-label SEO providers typically organize their teams into content production units and technical SEO units that operate on separate timelines, with separate project management tools, and often separate reporting lines. A content writer drafting blog posts for a reseller client never opens a crawl report. A technical SEO analyst running a quarterly audit never sees the editorial calendar.

This separation exists because white-label models are built for throughput. When you're fulfilling work across dozens of reseller accounts, you need standardized processes that move fast. Content briefs go into the pipeline, drafts come out, they pass through a quality review focused on readability and keyword usage, and they ship. The quality review almost never includes technical checks because the people conducting it don't have the tools or training to perform them.

As Shivang Rathod wrote in a widely circulated analysis of SEO infrastructure, a mistake many teams make is assuming SEO equals publishing. Publishing is the last step. Everything that matters for search performance happens in the layers beneath the content: URL structure, internal linking topology, page speed configuration, structured data, crawl accessibility. When white-label providers treat the article itself as the deliverable and ignore the page environment it lands in, the deliverable arrives broken.

I audited a mid-size white-label provider's process documentation earlier this year and counted the handoff points between their content and technical teams. There was exactly one: a quarterly technical audit that retroactively flagged issues on pages published weeks or months earlier. Everything published between audits shipped with zero technical review. Their reseller clients were paying $3,000–$5,000 per month for content that often sat unindexed for weeks because the XML sitemap wasn't updating automatically and nobody on the content side knew to check.

This is the kind of disconnect that creates compounding technical debt. As Growth Rocket noted in their analysis of SEO operations failures, missed optimizations create technical debt that compounds over time. The cost isn't one bad month. It's a steady accumulation of underperforming pages that drag down crawl efficiency and dilute the authority signals you're trying to build across a site.

If you're running a white-label operation or buying fulfillment from one, the absence of pre-publication SEO tasks in the delivery workflow should be a red flag. The content quality might be fine. The infrastructure around it probably isn't.

Three Infrastructure Tasks That Get Skipped Almost Every Time

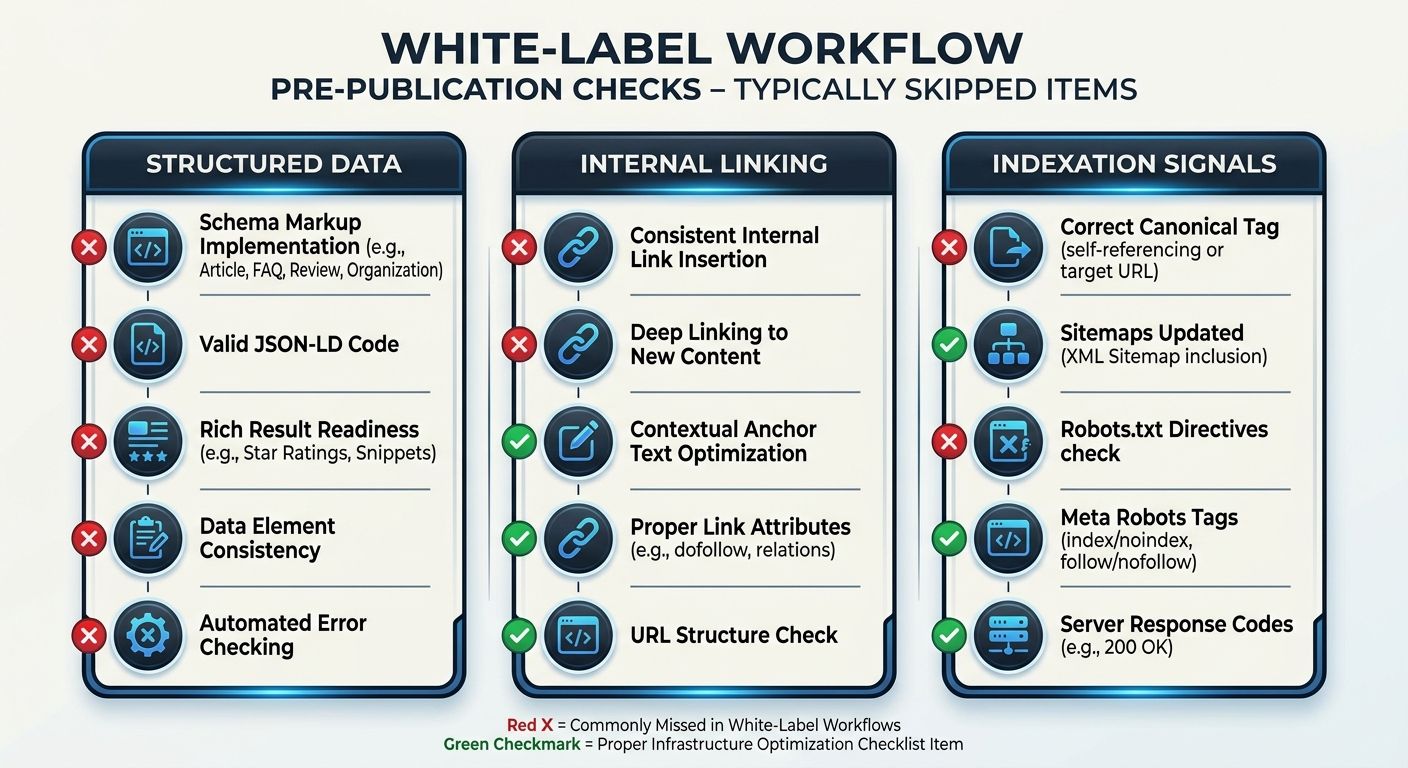

After reviewing technical audit reports across dozens of white-label engagements, I've identified three specific infrastructure tasks that get skipped before publication with near-universal consistency. These aren't obscure edge cases. They're foundational elements covered in every reputable technical SEO checklist available, and yet they fall through the cracks because no one in the publishing workflow owns them.

Structured Data Implementation

JSON-LD schema markup is supposed to be applied at the page level before a page goes live. In practice, white-label content teams almost never touch it. They rely on whatever default schema the CMS theme provides, which is frequently misconfigured, outdated, or absent entirely. I routinely see blog posts published with Article schema that references the wrong author, the wrong datePublished value, or a headline that doesn't match the actual H1 on the page.

The stakes here have increased considerably since AI-powered search engines started relying on structured data for retrieval. If your client's pages are going to appear in AI Overviews or get cited by answer engines, the structured data needs to be accurate and complete at the moment of publication, not patched in three months later during a technical audit cycle. For agencies thinking about how AI crawlers interact with page infrastructure, there's a detailed breakdown of how to prepare site architecture for AI search that covers this exact problem.
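To make the mismatches described above concrete, here is a minimal Python sketch, using only the standard library, that compares a page's Article JSON-LD against its rendered H1, an expected author, and an expected publication date. The names `PageScanner` and `check_article_schema` are hypothetical. The sketch assumes the schema sits in a plain `<script type="application/ld+json">` block, not inside an `@graph` array or injected by JavaScript. Treat it as a pre-flight sanity check, not a replacement for Google's Rich Results Test or the Schema Markup Validator.

```python
import json
from html.parser import HTMLParser


class PageScanner(HTMLParser):
    """Collects the first <h1> text and the raw body of every JSON-LD script."""

    def __init__(self):
        super().__init__()
        self.h1_text = None
        self.jsonld_blocks = []
        self._capture = None  # "h1" or "jsonld" while inside one of those elements
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "h1" and self.h1_text is None and self._capture is None:
            self._capture, self._buffer = "h1", []
        elif tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capture, self._buffer = "jsonld", []

    def handle_data(self, data):
        if self._capture:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "h1" and self._capture == "h1":
            # Collapse whitespace so "New\n  Title" compares equal to "New Title".
            self.h1_text = " ".join("".join(self._buffer).split())
            self._capture = None
        elif tag == "script" and self._capture == "jsonld":
            self.jsonld_blocks.append("".join(self._buffer))
            self._capture = None


def check_article_schema(html, expected_author, expected_date):
    """Return a list of problems with the page's Article/BlogPosting JSON-LD."""
    scanner = PageScanner()
    scanner.feed(html)
    problems, articles = [], []
    for block in scanner.jsonld_blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            problems.append("a JSON-LD block is not valid JSON")
            continue
        items = data if isinstance(data, list) else [data]
        articles += [i for i in items
                     if isinstance(i, dict) and i.get("@type") in ("Article", "BlogPosting")]
    if not articles:
        return problems + ["no Article/BlogPosting JSON-LD found"]
    article = articles[0]
    if article.get("headline") != scanner.h1_text:
        problems.append(f"headline {article.get('headline')!r} does not match H1 {scanner.h1_text!r}")
    author = article.get("author")
    author_name = author.get("name") if isinstance(author, dict) else None
    if author_name != expected_author:
        problems.append(f"author {author_name!r}, expected {expected_author!r}")
    # Prefix match so "2024-05-01T09:00:00Z" satisfies an expected "2024-05-01".
    if not str(article.get("datePublished", "")).startswith(expected_date):
        problems.append(f"datePublished {article.get('datePublished')!r}, expected {expected_date!r}")
    return problems
```

An empty list means the three fields line up. Anything else is a reason to hold the page in QA rather than publish it and wait for the next audit cycle.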

Internal Link Insertion With Topical Context

White-label content workflows typically include a step for adding internal links, but it's usually handled by the content writer, who places two or three links to whatever pages they can find that seem vaguely related. There's no reference to the site's actual authority flow, no consideration of which pages need link equity, and no awareness of orphan page problems.

This is a massive gap. Internal linking done well requires knowledge of the full site architecture, not just the page being published. I've written before about how agencies should approach mapping authority flow before touching a client's site, and the same principle applies to every individual page that goes live. When a white-label writer adds links without that context, they're either linking to pages that already have plenty of equity or, worse, creating links to pages that have been deprecated or redirected.
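A rough sketch of the site-level context a writer is missing. It assumes someone can export the site's internal link graph and redirect map (for example, from a crawler) as Python dictionaries. `audit_internal_links` and its `min_inbound` threshold of two are hypothetical choices for illustration, not an established metric.

```python
from collections import Counter


def audit_internal_links(link_graph, redirects, priority_pages, min_inbound=2):
    """Flag stale links, orphan pages, and under-linked priority pages.

    link_graph:     {live_url: [internal URLs that page links to]}
    redirects:      {redirected_or_deprecated_url: destination_url}
    priority_pages: URLs the site owner wants to receive link equity
    min_inbound:    hypothetical threshold for "enough" inbound internal links
    """
    inbound = Counter()
    stale_links = []
    for source, targets in link_graph.items():
        for target in sorted(set(targets)):  # count each source -> target pair once
            if target in redirects:
                # Linking to a redirected URL wastes a hop; record where it should point.
                stale_links.append((source, target, redirects[target]))
            elif target != source:
                inbound[target] += 1
    # A live page that no other page links to is an orphan.
    orphans = sorted(url for url in link_graph if inbound[url] == 0)
    underlinked = sorted(url for url in priority_pages if inbound[url] < min_inbound)
    return {"stale_links": stale_links, "orphans": orphans, "underlinked_priority": underlinked}
```

Run before publication, the report tells the writer which priority pages actually need the new page's links and which existing links already point at redirected URLs.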

Indexation Signal Configuration

This includes canonical tags, meta robots directives, XML sitemap inclusion, and (increasingly) IndexNow pings for Bing, Yandex, and ChatGPT-connected crawlers. On a properly configured CMS, some of these are automated. On the custom or semi-custom WordPress builds that dominate the agency landscape, many require manual configuration or plugin settings that need to be verified per page.
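IndexNow itself is a simple protocol: one JSON POST lists new or changed URLs for a host and is authenticated by a key file that the site hosts. The sketch below builds that request with Python's standard library. The helper names are hypothetical. The sketch assumes the key file is already published on the site and posts to the shared `api.indexnow.org` endpoint. Check the current IndexNow documentation before relying on the exact field rules.

```python
import json
import re
import urllib.request
from urllib.parse import urlsplit

# Shared endpoint; participating engines (Bing, Yandex and others) exchange submissions.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"
# Per the IndexNow spec, keys are 8-128 characters from a-z, A-Z, 0-9 and "-".
KEY_PATTERN = re.compile(r"[A-Za-z0-9-]{8,128}")


def build_indexnow_request(urls, key, key_location=None):
    """Build (but do not send) a POST request announcing newly published URLs."""
    if not urls:
        raise ValueError("urlList must not be empty")
    if not KEY_PATTERN.fullmatch(key):
        raise ValueError("invalid IndexNow key format")
    hosts = {urlsplit(u).netloc for u in urls}
    if len(hosts) != 1:
        raise ValueError("every URL in one submission must share the same host")
    payload = {"host": hosts.pop(), "key": key, "urlList": list(urls)}
    if key_location:  # only needed when the key file is not at https://<host>/<key>.txt
        payload["keyLocation"] = key_location
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


def submit(request, timeout=10):
    """Send the request; urlopen raises HTTPError for 4xx/5xx responses."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status  # 200 or 202 means the submission was received
```

In a publishing hook, this would run once the new URL returns a 200, for example `submit(build_indexnow_request([url], key))`. Building and sending are kept separate so the payload can be inspected in QA without hitting the network.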

White-label providers routinely publish pages with self-referencing canonical tags that point to the wrong URL pattern (HTTP vs. HTTPS, trailing slash vs. no trailing slash, www vs. non-www). They publish pages that aren't included in the XML sitemap because the sitemap generator hasn't been triggered. They publish pages without submitting to IndexNow, leaving 30% of the non-Google search market waiting for organic discovery.
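The URL-pattern mistakes listed above are easy to catch mechanically. This minimal sketch assumes the site has one preferred pattern: always HTTPS, a fixed www or non-www choice, and a fixed trailing-slash policy. It also assumes canonicals should carry no query string. `expected_canonical` and `canonical_problems` are hypothetical names. Sites that serve file-style paths such as `.html` would need a different slash rule.

```python
from urllib.parse import urlsplit


def expected_canonical(live_url, prefer_www=True, trailing_slash=True):
    """Rewrite a live URL into the site's single preferred pattern."""
    parts = urlsplit(live_url)
    host = parts.netloc.lower()
    if prefer_www and not host.startswith("www."):
        host = "www." + host
    elif not prefer_www and host.startswith("www."):
        host = host[len("www."):]
    path = parts.path or "/"
    if trailing_slash and not path.endswith("/"):
        path += "/"
    elif not trailing_slash and path != "/":
        path = path.rstrip("/")
    # Scheme is forced to HTTPS; query strings and fragments are dropped.
    return f"https://{host}{path}"


def canonical_problems(canonical, live_url, prefer_www=True, trailing_slash=True):
    """List each way the page's canonical tag deviates from the preferred pattern."""
    expected = expected_canonical(live_url, prefer_www, trailing_slash)
    if canonical == expected:
        return []
    got, want = urlsplit(canonical), urlsplit(expected)
    problems = []
    if got.scheme != want.scheme:
        problems.append(f"scheme {got.scheme!r}, expected {want.scheme!r}")
    if got.netloc.lower() != want.netloc:
        problems.append(f"host {got.netloc!r}, expected {want.netloc!r}")
    if got.path != want.path:
        problems.append(f"path {got.path!r}, expected {want.path!r}")
    if got.query or got.fragment:
        problems.append("canonical carries a query string or fragment")
    # Fall back to a whole-URL message for differences not covered above.
    return problems or [f"canonical {canonical!r}, expected {expected!r}"]
```

A canonical of `http://example.com/blog/post` on a site that standardizes on `https://www.example.com/blog/post/` fails on all three counts the article mentions: scheme, www, and trailing slash.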

None of these are difficult to fix individually. The problem is that fixing them after publication means someone has to go back and re-audit every page that shipped without checks, prioritize the fixes, implement them, and then wait for recrawling. That cycle adds weeks or months of delay to pages that should have been performing from day one.

The Compounding Cost That White-Label Clients Never See

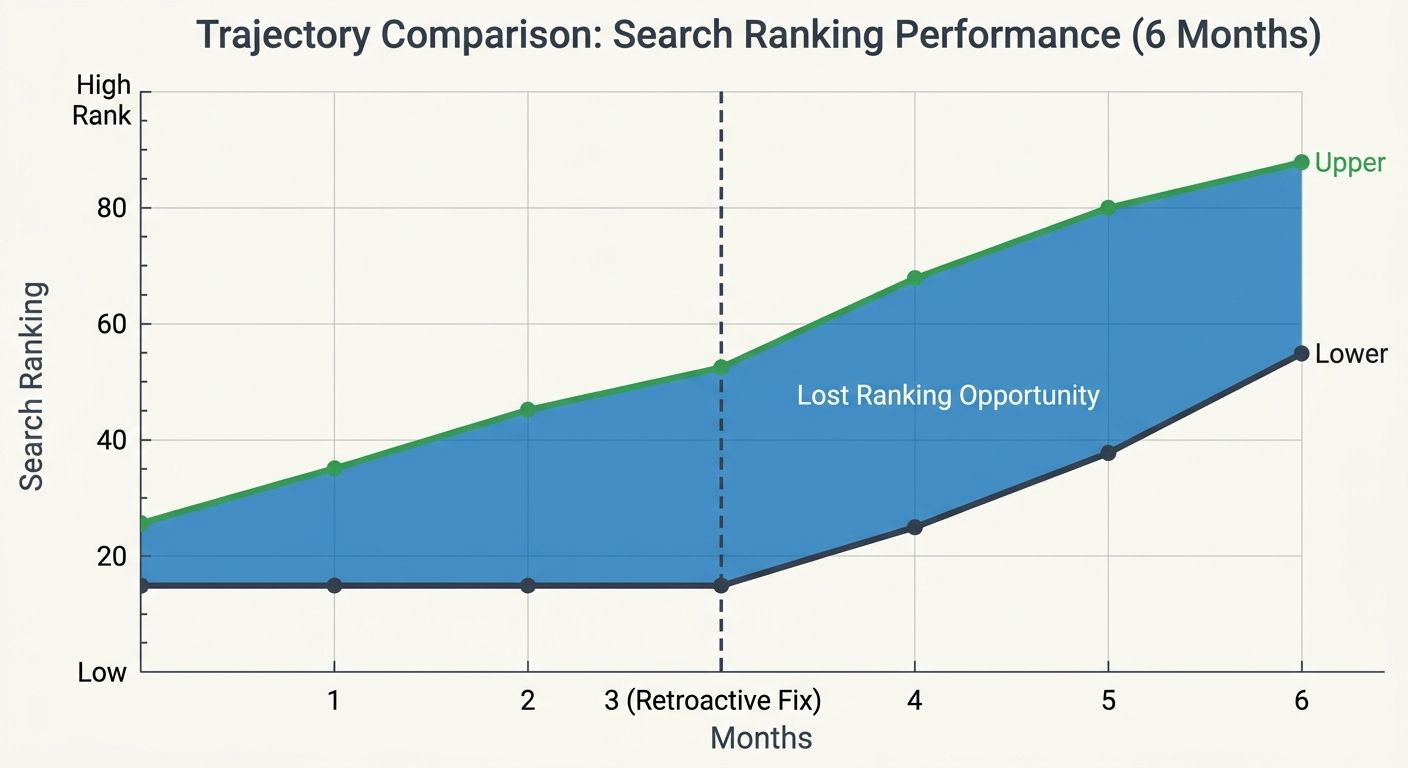

Here's where the technical SEO workflow gap becomes genuinely expensive. When pre-publication infrastructure tasks get deferred to post-publication audits, the cost per fix increases with every week of delay, and the opportunity cost of lost indexation and ranking potential accumulates silently in the background.

Consider the math on a typical white-label engagement producing 12 pages per month at $250–$400 per page. Over a six-month contract, that's 72 pages. If each page ships with an average of three infrastructure issues (a conservative estimate based on my audits), that's 216 discrete technical problems that need remediation. At five minutes per fix on average, that's 18 hours of work, which at an agency billing rate of $150/hour for technical SEO comes to roughly $2,700 in remediation labor. But that assumes the fixes are straightforward, which they often aren't once pages have been live long enough to accumulate backlinks, get cached by AI systems, or develop redirect chains.
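The remediation estimate above is easy to reproduce, and writing it down makes the assumptions easy to vary. The inputs in this Python sketch are the article's own figures. `remediation_cost` is a hypothetical helper, and the result is only a labor floor that ignores the ranking delay discussed next.

```python
def remediation_cost(pages_per_month, months, issues_per_page, minutes_per_fix, hourly_rate):
    """Return (pages, issues, hours, dollars) for post-publication fixes."""
    pages = pages_per_month * months
    issues = pages * issues_per_page
    hours = issues * minutes_per_fix / 60
    return pages, issues, hours, hours * hourly_rate


# The article's scenario: 12 pages/month for 6 months, 3 issues/page, 5 min/fix, $150/hour.
pages, issues, hours, cost = remediation_cost(12, 6, 3, 5, 150)
print(pages, issues, hours, cost)  # 72 216 18.0 2700.0
```

Raising the per-fix time to a hypothetical 15 minutes, to reflect fixes that are not straightforward, triples the bill to $8,100 for the same 216 issues.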

The real cost is in ranking delays. Monday.com's analysis of SEO workflows found that structured workflows reduce decision fatigue and rework by standardizing how work moves from research to publication. When pre-publication checks are absent, the rework happens downstream where it's more expensive and less effective. A page that launches with correct schema, proper canonicalization, and solid internal linking starts competing for rankings immediately. A page that launches broken and gets fixed two months later has already lost the window where Google's freshness signals would have given it a boost.

This is where the conversation about an infrastructure optimization checklist becomes practical rather than aspirational. If you're a reseller buying white-label fulfillment, you need to know whether your provider's workflow includes a technical review step between content approval and publication. If it doesn't, you need to either add one yourself or factor the remediation cost into your budget.

And if you're the white-label provider, the argument for building pre-publication checks into your pipeline is straightforward: your reseller clients will eventually notice that pages aren't ranking. When they run their own audit or hire someone to diagnose visibility drops, the trail leads back to infrastructure problems that existed from day one. That's a client retention problem with a known, preventable cause.

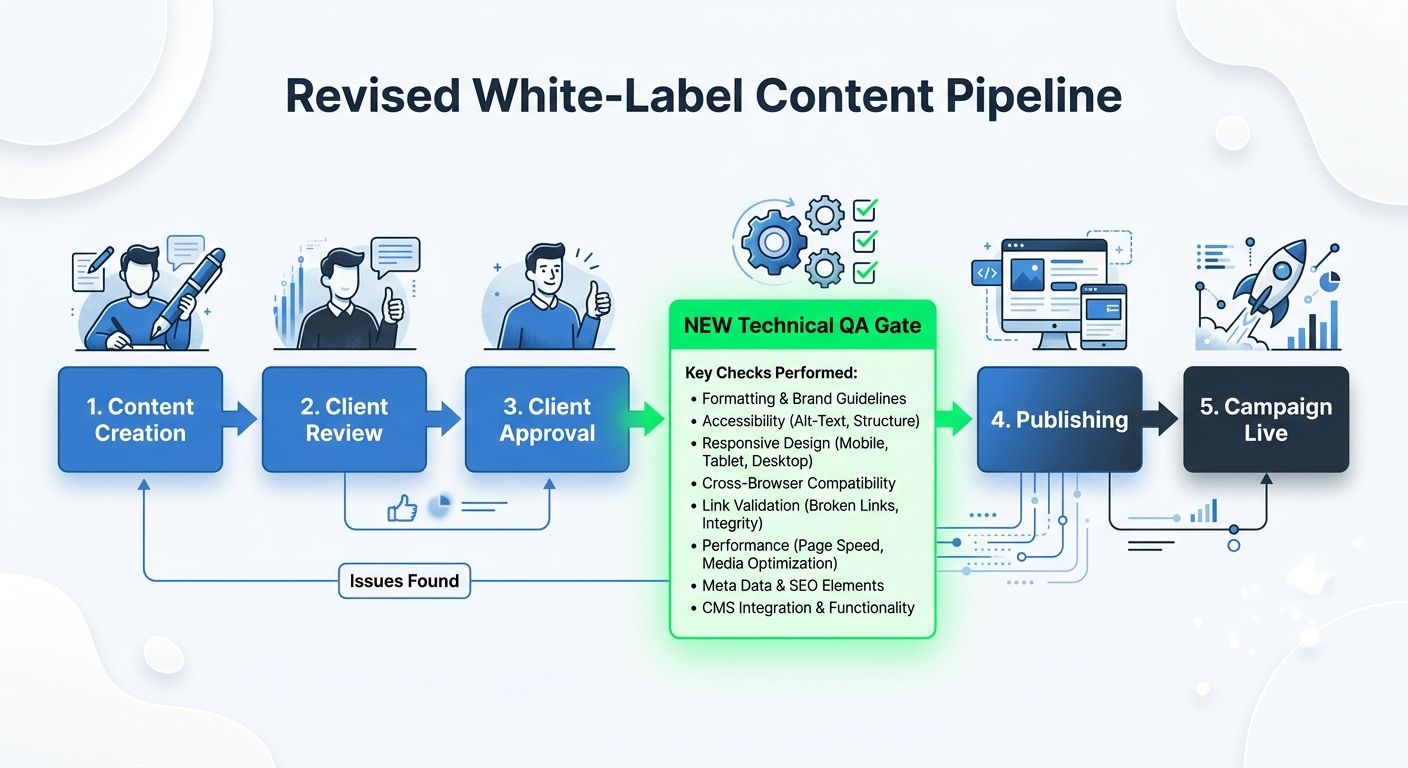

What a Pre-Publication Technical Gate Actually Looks Like

Building pre-publication SEO tasks into a white-label workflow doesn't require hiring a dedicated technical SEO analyst for every piece of content. It requires a checklist and someone trained to use it. The checklist should cover, at minimum:

Canonical tag verification: Does the canonical URL match the intended live URL pattern exactly?

Schema markup review: Does the JSON-LD reflect the correct page type, author, date, and headline?

Internal link audit: Do the internal links connect to active, high-priority pages within the site's topical architecture?

XML sitemap inclusion: Will the page appear in the sitemap within 24 hours of publication?

Core Web Vitals spot-check: Does the page template pass LCP, CLS, and INP thresholds with the actual content loaded?

IndexNow submission: Is the page's URL submitted through IndexNow at publication, so participating engines such as Bing and Yandex are notified?

Image optimization: Are all images compressed, properly dimensioned, and carrying descriptive alt attributes?
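One way to turn the checklist into a repeatable gate is to express each item as a small function that returns a list of problems, and to block publication when any list is non-empty. This Python sketch covers the canonical, sitemap, Core Web Vitals, image alt text, and IndexNow items. Schema and internal-link checks would plug into the same list. `PageSnapshot` and the check functions are hypothetical, and collecting the field values (lab metrics, sitemap XML, image list) is assumed to happen elsewhere. The Core Web Vitals limits are Google's published "good" thresholds, applied here to a single measurement rather than field data.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass, field

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
# Google's "good" thresholds: LCP <= 2.5 s, CLS <= 0.1, INP <= 200 ms.
CWV_GOOD = {"lcp_s": 2.5, "cls": 0.1, "inp_ms": 200}


@dataclass
class PageSnapshot:
    """Hypothetical pre-publication snapshot of one page."""
    url: str
    canonical: str
    sitemap_xml: str  # assumed to be a plain <urlset>, not a sitemap index
    lcp_s: float
    cls: float
    inp_ms: float
    images: list = field(default_factory=list)  # dicts with "src" and "alt"
    indexnow_configured: bool = False


def check_canonical(page):
    return [] if page.canonical == page.url else [f"canonical {page.canonical} != {page.url}"]


def check_sitemap(page):
    root = ET.fromstring(page.sitemap_xml)
    locs = {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text}
    return [] if page.url in locs else ["URL missing from XML sitemap"]


def check_cwv(page):
    return [f"{metric} {getattr(page, metric)} exceeds {limit}"
            for metric, limit in CWV_GOOD.items() if getattr(page, metric) > limit]


def check_images(page):
    return [f"image {img['src']} has no alt text"
            for img in page.images if not img.get("alt", "").strip()]


def check_indexnow(page):
    return [] if page.indexnow_configured else ["IndexNow submission not configured"]


CHECKS = [check_canonical, check_sitemap, check_cwv, check_images, check_indexnow]


def run_gate(page):
    """Run every check; the page is cleared to publish only if this returns []."""
    return [problem for check in CHECKS for problem in check(page)]
```

Keeping each check independent means a content editor can see exactly which item failed, and new items can be appended to `CHECKS` without touching the others.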

This adds roughly 15–20 minutes per page. At white-label content volumes of 10–15 pages per week, that's between 2.5 and 5 hours of additional labor per week. Compare that against the remediation costs I outlined above, and the ROI is obvious.
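The weekly overhead range follows from multiplying the per-page time by the weekly volume. `weekly_gate_hours` is a hypothetical helper, and the inputs are the per-page and volume ranges given above.

```python
def weekly_gate_hours(pages_per_week, minutes_per_page):
    """Hours per week spent running the pre-publication checklist."""
    return pages_per_week * minutes_per_page / 60


low = weekly_gate_hours(10, 15)   # lightest volume, fastest checks
high = weekly_gate_hours(15, 20)  # heaviest volume, slowest checks
print(low, high)  # 2.5 5.0
```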

The agencies I've seen implement this well typically train their content QA team to run the checklist rather than pulling from their technical SEO bench. A content editor with a documented process and a browser extension for validating schema can catch 80% of the issues I'm describing. The remaining 20% — complex JavaScript rendering problems, crawl budget considerations on large sites, and edge cases with internationalized URLs — still need periodic technical audits. But the volume of post-publication remediation drops dramatically.

For agencies scaling operations across many concurrent campaigns, this kind of pre-publication gate is the difference between a clean portfolio and a growing backlog of technical fixes that never quite gets resolved.

The Claim, Revisited

The conventional wisdom that content should go live quickly and get optimized later made sense in an era when Google's crawling was slow and ranking signals accumulated gradually. That era ended. Crawlers from Google, Bing, and AI-powered search systems now evaluate pages rapidly after publication, and the technical signals present at launch carry measurable weight in early ranking decisions.

White-label SEO providers that separate content production from technical implementation will continue shipping pages that underperform, creating a cycle of audits, fixes, and client frustration that eats into margins and damages reseller relationships. The agencies that close this workflow gap by inserting a structured technical gate before publication will deliver better results per page, reduce remediation costs, and build the kind of operational credibility that keeps reseller partners from shopping for alternatives.

If you're evaluating whether your current provider falls into the first camp or the second, the fastest test is to look at the first 72 hours after any page publishes. Check whether it's in the sitemap. Check whether the schema validates. Check whether the canonical resolves cleanly. If any of those fail, you've found your gap, and you know exactly where the process broke down.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
