From Google Search to AI Assistants: How B2B Agencies Must Rebuild SEO Strategy Beyond Traditional SERP Rankings

Three of the five B2B agency contracts sitting on my desk right now define success exclusively as Google organic ranking improvements and click-through traffic growth. The agencies that wrote those contracts aren't incompetent. They're operating on assumptions from a search ecosystem that no longer describes how B2B buyers find vendors, compare solutions, or build shortlists. Nearly 60% of Google searches now end without a click. AI Overviews appear in over 13% of queries, slashing mobile click-through rates by 19%. And according to a Demand Gen Report survey of 400 senior marketing executives, AI search has already become the second largest driver of qualified leads for B2B organizations.

The rules I'm laying out here come from evaluating over 200 SEO agencies and watching which B2B strategies actually produce pipeline in an environment defined by AI search discovery fragmentation. These aren't theoretical. They're the principles separating agencies that still deliver measurable business outcomes from those burning retainer budgets on vanity metrics.

Accept that Google rankings are one signal among many

The reflex to check "where do we rank?" persists because it's familiar and measurable. Ranking reports feel concrete. They're also increasingly disconnected from how B2B buyers actually find solutions.

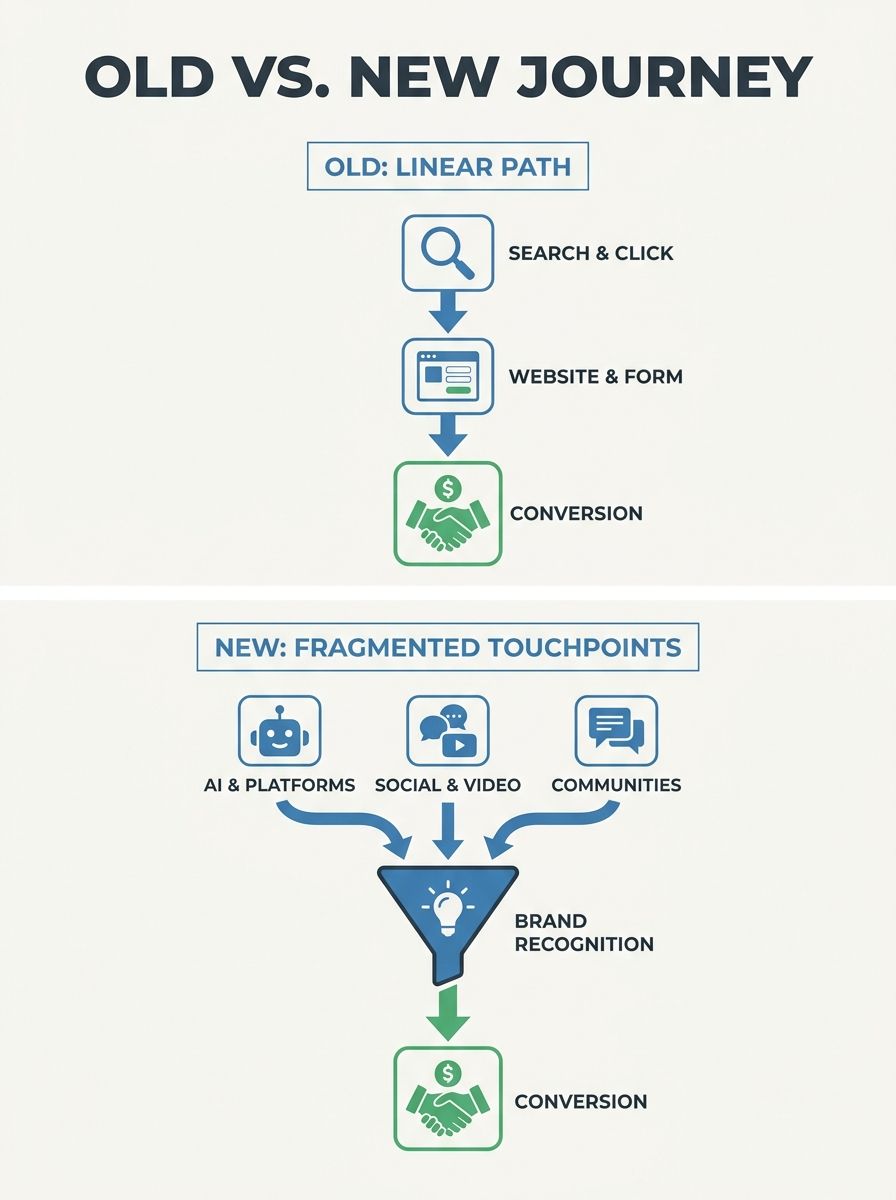

As Search Engine Land documented in their analysis of the new realities of search in 2026, discovery now happens across TikTok, YouTube, LinkedIn, Reddit, AI assistants, and embedded search within SaaS tools. Cosmo Edge's research on search market fragmentation describes information discovery as no longer relying on a centralized point of entry. It's becoming a distributed function integrated into operating systems, applications, and AI agents.

This matters practically: 46.5% of pages cited in Google's AI Overviews rank outside the top 50 organic results. Your page can be invisible in traditional rankings and still show up as the authoritative answer in an AI-generated summary. The inverse is also true. You can hold position one and get bypassed entirely when the AI Overview answers the query directly.

B2B SEO beyond Google means your agency needs to define success across the full spectrum of surfaces where buyers encounter your brand. If your monthly reporting package contains twelve slides about Google position changes and zero slides about AI citation rates, you're watching the wrong scoreboard.

Treat answer engine optimization as a distinct discipline

Answer engine optimization (AEO) is fundamentally different from traditional SEO, and agencies that bolt it onto existing workflows as an afterthought produce mediocre results in both.

Traditional SEO optimizes for crawlability, keyword relevance, and link authority so that pages appear in ranked blue links. Answer engine optimization, as Amsive's guide to AI search visibility explains, is about structuring content so AI platforms select your material as the definitive answer to user questions. The objective shifts from "rank first" to "get cited."

The skills required look different too. AEO requires structured data expertise, entity-relationship mapping, and an understanding of how large language models evaluate credibility. We've covered the distinction between AEO and traditional SEO in detail before, but the practical takeaway for agencies is this: you need practitioners who understand machine parsing, not keyword density.

When this rule breaks: if your B2B product category is so niche that AI assistants don't yet have enough training data to surface useful answers about it, traditional SEO still dominates. Industrial equipment manufacturers selling to a total addressable market of 200 companies, for example, may find that direct relationships and trade publications still drive more pipeline than any AI-generated summary. Even manufacturing SEO agencies that specialize in industrial verticals are navigating this tension between established search patterns and emerging AI surfaces.

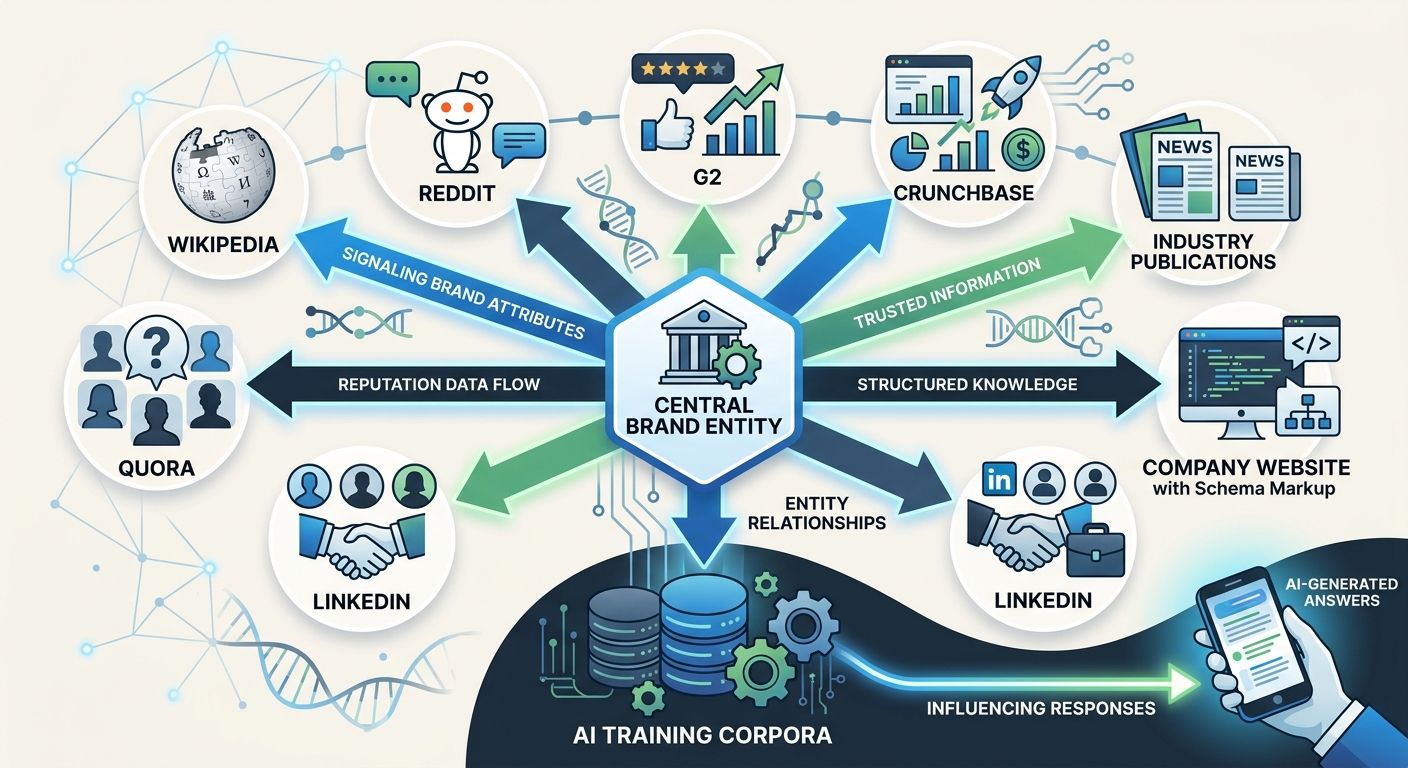

Build entity authority on every platform where LLMs train

AI assistants don't crawl the web in real time the way Google's spider does (with exceptions like Perplexity, which blends retrieval with generation). They primarily draw from training data. That training data comes from Wikipedia, Reddit, industry forums, review platforms like G2 and Capterra, Crunchbase profiles, and thousands of other sources that most B2B marketing teams barely think about.

Entity authority means AI systems recognize your brand as a known, trusted entity in a specific category. This requires consistent signals: your company name, descriptions, product positioning, and factual claims need to align across every surface where LLMs might encounter them. eMarketer's analysis of the shift to AI-driven search confirms that AI platforms favor content that is structured, clearly formatted, and credible. The bar for what counts as "credible" now extends far beyond your own website.

Concrete steps that actually move the needle:

Claim and fully populate your profiles on G2, Capterra, Crunchbase, and relevant industry directories

Build a Wikipedia page if your company meets notability criteria (and maintain it)

Participate in Reddit and Quora threads about your product category using real employee accounts, not brand accounts

Publish original research that other sites will cite, creating entity associations in training corpora

Implement Organization, Product, FAQ, and Person schema markup consistently across your site
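For the schema markup step, here is a minimal Python sketch of generating Organization markup as JSON-LD. The company name, URLs, and description are hypothetical placeholders, and the helper `organization_jsonld` is an illustrative name, not part of any library. The property names (`name`, `url`, `description`, `sameAs`) come from the schema.org Organization type.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization object and return it as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs ties the entity to its profiles on other platforms,
        # which is where the cross-surface consistency gets declared.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


# Hypothetical company details, for illustration only.
markup = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    description="Contract management software for mid-market companies.",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://www.crunchbase.com/organization/example-co",
    ],
)

# Wrap the JSON-LD in the script tag that goes in the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from one source of truth, rather than hand-editing it page by page, is one way to keep the name and description identical everywhere they appear.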

The agencies producing the best results in this area treat entity management as a standing workstream, not a one-time audit. The skill gaps reshaping agency hiring right now center on exactly this kind of cross-platform entity work.

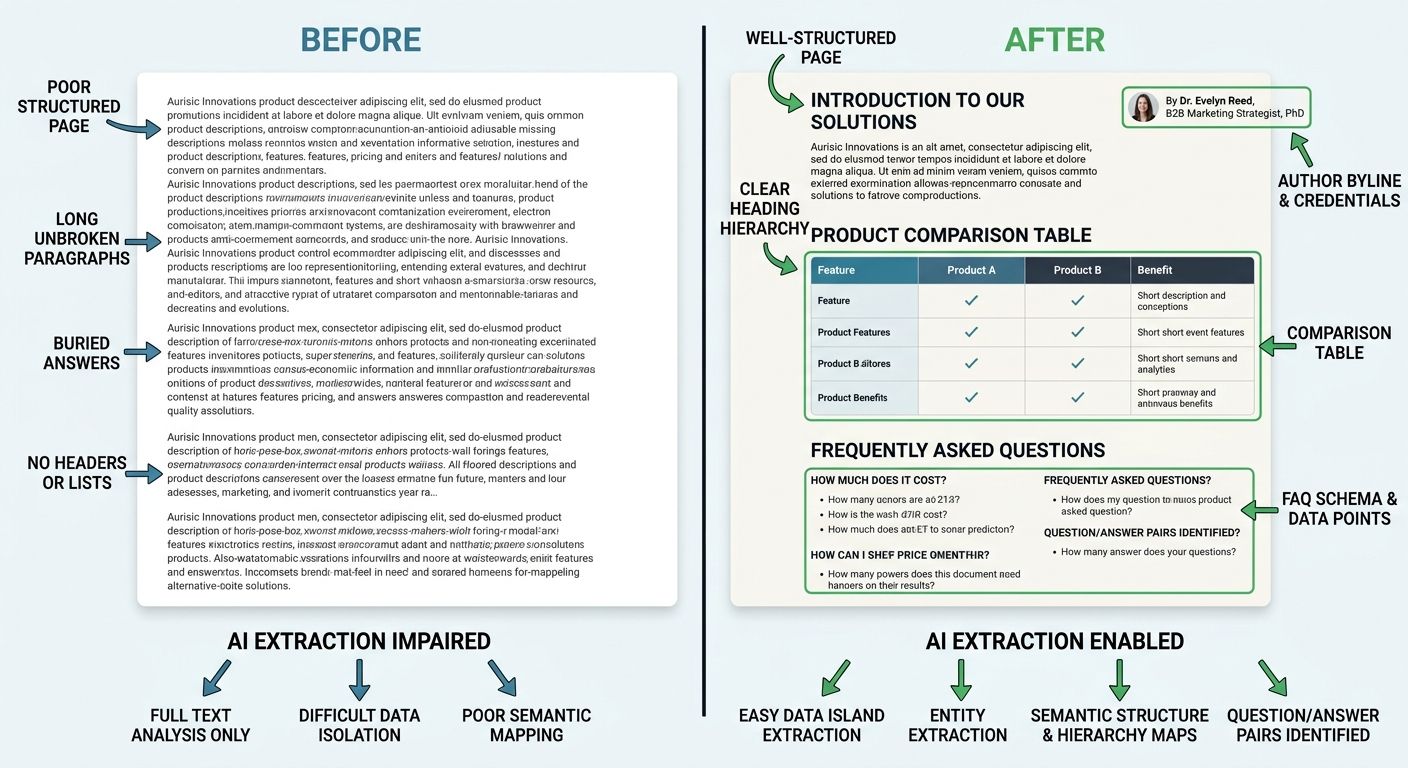

Structure content for machine parsing, then refine for human readers

This rule will feel backward if you've spent your career writing for people first and optimizing for search engines second. But the hierarchy has shifted. If an AI assistant can't parse your content cleanly, it won't cite it, and a growing share of your audience will never see it at all.

Machine-parsable content looks specific:

Clear H2/H3 heading hierarchy that maps to discrete questions a buyer would ask

Comparison tables with structured data that AI can extract and reformat

Definitions and explanations placed immediately after the questions they answer, not buried in narrative

Short, factual paragraphs rather than long discursive blocks

Author bylines linked to real people with verifiable credentials
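The heading-hierarchy item is the easiest of these to audit automatically. The sketch below uses Python's standard `html.parser` to flag places where a page jumps more than one heading level (an H2 followed directly by an H4, for example). Treating skipped levels as a proxy for "clean hierarchy" is an assumption made for illustration. It is not a documented rule of any AI platform, and the sample HTML is made up.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect the level (1-6) of every heading tag, in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is all we need to match.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html):
    """Return (previous, current) pairs where the heading level jumps by more than one."""
    parser = HeadingOutline()
    parser.feed(html)
    pairs = zip(parser.levels, parser.levels[1:])
    return [(prev, cur) for prev, cur in pairs if cur > prev + 1]


# Made-up page fragment: the H4 directly under an H2 skips a level.
sample = (
    "<h1>Guide</h1>"
    "<h2>What is X?</h2>"
    "<h4>Pricing</h4>"
    "<h2>How does X compare?</h2>"
)
print(skipped_levels(sample))  # [(2, 4)]
```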

The E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) has become the practical filter AI systems use to decide which sources earn citation placement. Pages written by identifiable experts with documented experience consistently outperform generic brand content in AI-generated summaries. Gartner's projected 25% decline in traditional search traffic sharpens the stakes: the traffic you lose from traditional search needs to be recaptured through citation visibility, and that requires content structured for extraction.

When this rule bends: thought leadership and opinion pieces that deliberately break conventional formatting can still perform well on social channels and email. The point isn't to make every piece of content robotic. It's to ensure your core educational and product content—the material buyers reference when making purchase decisions—is machine-readable by default.

Design a multi-channel search strategy tied to buyer intent stages

A multi-channel search strategy in 2026 requires mapping each discovery channel to the buyer intent it naturally serves. Dumping the same whitepaper link across LinkedIn, YouTube, Reddit, and your blog accomplishes nothing. Each platform has different content expectations, different user behaviors, and different signals that AI systems use to evaluate relevance.

Here's how B2B intent stages typically map to channels:

Awareness/Explore: LinkedIn articles, YouTube explainers, Reddit discussions, podcast appearances

Compare/Evaluate: G2 reviews, Capterra comparisons, long-form blog posts with comparison tables, analyst reports

Decide/Purchase: Product pages optimized for transactional queries, case studies with named clients and specific metrics, demo request pages

Flaunt Digital made this point clearly: if your strategy is still centered on traffic, rankings, and siloed channels, it's already out of step with how discovery works. A multi-channel strategy doesn't mean scattering content everywhere. It means working channels in tandem to send consistent signals wherever your audience already looks.

Different B2B verticals face different channel priorities. Ecommerce SEO agencies have long understood multi-channel discovery because their clients already compete across Google Shopping, Amazon, and social commerce. Retail SEO agencies face similar platform fragmentation. B2B companies in SaaS, manufacturing, and professional services are now confronting the same reality, often with less institutional experience managing it.

When this rule breaks: early-stage B2B companies with limited budgets can't meaningfully cover all these channels simultaneously. In that case, pick two or three where your prospects are most active, invest deeply, and expand later. A mediocre presence across eight platforms is worse than a strong presence on three.

Track citation frequency and AI share of voice as primary KPIs

The measurement infrastructure at most B2B companies was built to track a funnel that starts with a Google click. That funnel is fracturing. When a procurement manager asks ChatGPT "what are the best contract management platforms for mid-market companies?" and gets a list of five vendors, there's no click to track. There's no UTM parameter. There's no landing page session in your analytics.

You still need to know whether your brand appeared in that answer.

New KPIs that agencies should be reporting on:

AI citation rate: How often does your brand appear when AI assistants answer category-level questions? Manual testing across ChatGPT, Perplexity, Gemini, and Copilot is table stakes. Tools like Snoika and AI Pulse Checker are emerging to automate this monitoring.

Share of voice in AI summaries: When your category gets discussed in AI-generated content, what percentage of mentions include your brand versus competitors?

Citation source health: Which of your content assets are being cited? Are AI platforms pulling from outdated pages, competitor comparisons, or your own authoritative content?

Assisted conversion attribution: Can you trace any pipeline back to prospects who mentioned AI assistants as part of their research process? Post-demo surveys and "how did you hear about us" fields are blunt instruments, but they're better than nothing.
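The first two KPIs reduce to simple arithmetic once test results are logged. The Python sketch below assumes each AI answer has been recorded as the list of brands it named. The brand names and data are hypothetical, and counting each brand at most once per answer is one reasonable definition of share of voice, not an industry standard.

```python
from collections import Counter


def citation_rate(answers, brand):
    """Fraction of recorded answers that mention the brand at least once."""
    if not answers:
        return 0.0
    hits = sum(1 for brands in answers if brand in brands)
    return hits / len(answers)


def share_of_voice(answers):
    """Each brand's share of all brand mentions, counting a brand once per answer."""
    counts = Counter(b for brands in answers for b in set(brands))
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


# Hypothetical log: brands named in four AI answers to category-level prompts.
answers = [
    ["Acme", "Globex"],
    ["Globex"],
    ["Acme", "Initech", "Globex"],
    [],  # an answer that named no vendors still counts toward the denominator
]

print(citation_rate(answers, "Acme"))  # 0.5
print(share_of_voice(answers)["Globex"])  # 0.5
```

Keeping the empty answer in the denominator is a deliberate choice: a prompt where no vendor gets named is still a prompt where your brand did not appear.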

The frameworks for organizing search optimization across AI-driven disciplines are still maturing, and any agency that tells you they have this measurement fully solved is overselling. But the directional shift is clear: if your monthly report doesn't include AI visibility data alongside traditional ranking data, you're operating with a blind spot that will only widen.

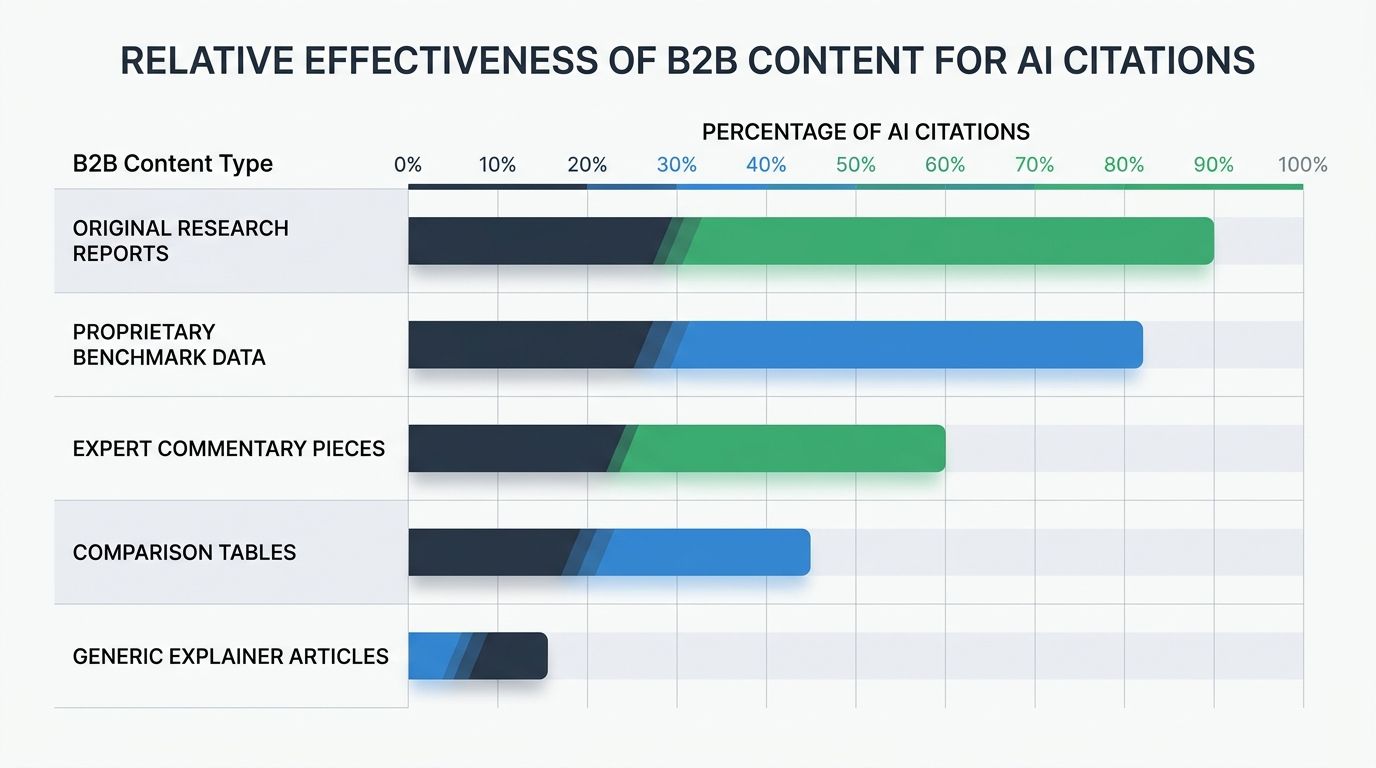

Invest in original research and proprietary data as citation magnets

AI assistants preferentially cite sources that contain information unavailable elsewhere. Original research, proprietary benchmarks, named expert commentary, and first-party survey data all carry disproportionate weight in AI-generated summaries because they can't be replicated by the AI itself.

Generic "what is X" explainer content, the bread and butter of B2B content marketing through 2023, has lost most of its value. AI systems can generate that content themselves. What they can't generate is your company's internal data on conversion rates by industry vertical, or your customer success team's analysis of implementation timelines across fifty deployments, or a named executive's informed opinion on where the market is heading.

B2B marketers currently allocate 28-29% of their marketing budgets to content. The return on that investment increasingly depends on whether the content contains original, citable information or rehashes what's already available. Long-form content exceeding 2,000 words that includes proprietary data earns significantly more backlinks and, by extension, more AI citations.

Practical formats that consistently earn AI citations:

Annual industry benchmark reports with named methodology

Customer survey results with sample sizes large enough to be credible

Technical comparison tables that include your product alongside competitors (yes, naming competitors helps)

Expert roundups featuring practitioners with verifiable credentials

When this rule breaks: companies in stealth mode or those with strict data governance policies may struggle to publish proprietary data. In those cases, executive thought leadership on platforms where LLMs train (LinkedIn articles, guest posts on industry publications, conference presentations) can partially substitute for data-driven content.

When these rules conflict

These seven principles will, at some point, pull in different directions. Structuring content for machine parsing can make it less engaging for the executive audience reading your thought leadership. Investing heavily in entity authority across ten platforms can drain resources from the original research that makes your brand citation-worthy. Building a multi-channel presence requires more content volume, which can dilute quality if your team is stretched thin.

The resolution isn't to follow every rule simultaneously at maximum intensity. It's to prioritize based on where your specific B2B audience actually discovers solutions right now. Survey your recent closed-won deals. Ask how they first encountered your brand. If the answer is increasingly "I asked ChatGPT" or "it came up in a Perplexity search," your AEO and entity authority work should take priority. If buyers still arrive primarily through Google organic, your traditional SEO foundation needs to remain solid while you layer AI visibility strategies on top.

The agencies I trust most with B2B clients are transparent about this uncertainty. They don't pretend they've cracked the AI visibility code. They run structured experiments, measure what they can, acknowledge what they can't, and adjust quarterly. The agencies I'd avoid are the ones still selling ranking guarantees in a world where the ranked list of blue links captures a shrinking share of buyer attention every month.

The search ecosystem won't stabilize anytime soon. These rules will need updating as AI assistants gain broader real-time web access, as Google continues expanding AI Overviews, and as entirely new discovery platforms emerge. Treating your search strategy as a fixed annual plan has become a liability. Treating it as a set of principles you stress-test against real pipeline data every quarter is how B2B organizations stay ahead of a landscape that refuses to hold still.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
