Five Major AI Search Engines Return Completely Different Answers to Identical Query in New Test

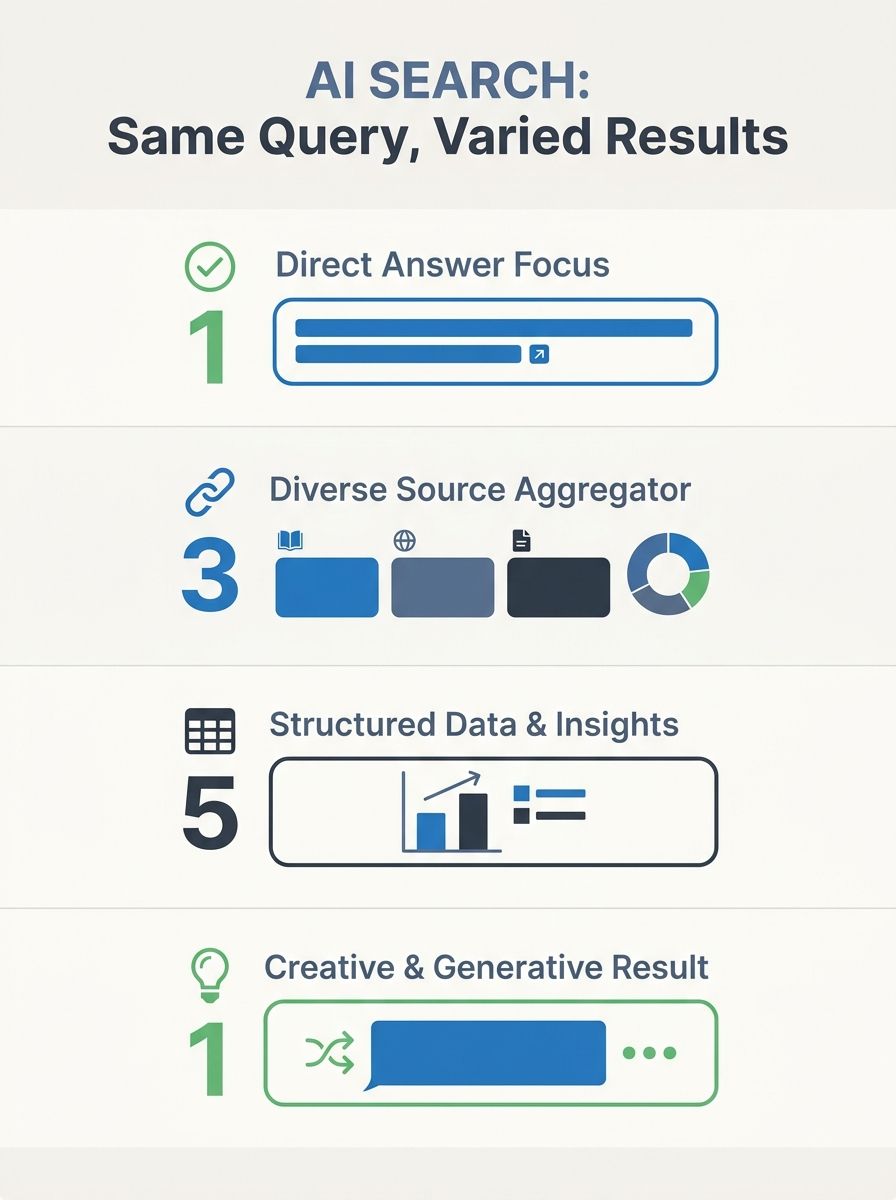

A developer who put the same question to five AI search engines received five distinct answers citing entirely different sources, according to a report published today on DEV Community. Google AI Overviews recommended GitHub Actions, ChatGPT suggested GitLab CI, Perplexity cited an unfamiliar 2026 benchmark blog, Gemini referenced a YouTube tutorial, and Claude responded with follow-up questions instead of a direct answer.

The divergence stems from fundamental architectural differences in how each platform indexes content, applies ranking logic, and selects sources. Google AI Overviews operates on the standard Google search index, ChatGPT combines a Bing index with GPTBot crawl data, Perplexity queries Brave Search plus proprietary crawl data, Gemini integrates Google Search with YouTube and Scholar, and Claude relies primarily on training data without real-time web search.

The test highlights a growing challenge for agencies managing client visibility: traditional SEO focused solely on Google rankings no longer guarantees presence across AI-powered search experiences that collectively serve hundreds of millions of queries daily.

Platform-Specific Indexing Creates Citation Fragmentation

The 5W AI Platform Citation Source Index 2026, which analyzed over 680 million citations across the five engines, found that sources cited by Google AI Overviews overlap approximately 54 percent with traditional organic rankings, according to the report. The remaining 46 percent of AI-cited content diverges from standard search results.
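An overlap figure like this can be reproduced on a small scale for a single client query. The following is a minimal sketch, assuming overlap means the share of AI-cited URLs that also appear in the organic results; the report does not describe its own method, and the function names and `example.*` URLs are hypothetical.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal.

    Lowercases the whole URL, which also folds path case (a simplification).
    """
    parts = urlsplit(url.strip().lower())
    host = parts.netloc.removeprefix("www.")
    path = parts.path.rstrip("/")
    return f"{host}{path}"


def organic_overlap(cited_urls: list[str], organic_urls: list[str]) -> float:
    """Share of AI-cited URLs that also appear in the organic results."""
    cited = {normalize(u) for u in cited_urls}
    organic = {normalize(u) for u in organic_urls}
    if not cited:
        return 0.0
    return len(cited & organic) / len(cited)


# Hypothetical data for one query, not taken from the report.
cited = [
    "https://example.com/ci-guide",
    "https://www.example.org/benchmarks/",
    "https://example.net/video-tutorial",
    "https://example.edu/paper",
]
organic = [
    "https://www.example.com/ci-guide/",
    "https://example.org/benchmarks",
    "https://another.example/page",
]

share = organic_overlap(cited, organic)
print(f"overlap: {share:.0%}, divergent: {1 - share:.0%}")
# prints "overlap: 50%, divergent: 50%"
```

Dividing by the number of cited URLs, rather than by the union of both sets, keeps the result comparable to the "54 percent overlap, 46 percent divergent" framing above.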

Google AI Overviews now appears on roughly 48 to 60 percent of U.S. queries, up from 13 percent in early 2025. AI Overview presence on education queries jumped from 18 percent to 83 percent, and on B2B technology queries from 36 percent to 82 percent.

ChatGPT captures 60.7 percent of all AI search traffic as of February 2026, but activates its search feature on only 34.5 percent of queries. The remaining 65.5 percent of queries draw answers from training data alone, so content must exist both on the live web and within the training corpus to be surfaced in either mode. The platform concentrates citations heavily on Wikipedia, Reddit, Forbes, and Business Insider, and 56 percent of its journalism citations point to articles from the past 12 months.

Citation Transparency Varies Dramatically Across Platforms

Perplexity provides numbered source citations for every answer, creating measurable traffic referrals to cited sources, the report notes. The platform queries the Brave Search index combined with proprietary crawl data, favoring authoritative domains, structured answers, fresh content with explicit timestamps, hard data, and original research.

Gemini integrates multimodal content across Google's full stack, including YouTube, Google Scholar, and standard search results. A written tutorial may be passed over in favor of video content covering the same topic because Gemini processes both formats.

Claude differs fundamentally by operating without real-time web search, relying instead on training data plus document uploads and tool integrations. The platform prioritizes conceptual understanding over fresh citations.

These architectural differences create optimization requirements that go beyond the answer engine optimization approaches agencies currently deploy in Google-focused strategies.

Experience Signals Separate Human Content From AI-Generated Noise

Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) applies more stringently to AI Overviews than to traditional search results, according to the analysis. The "Experience" dimension specifically distinguishes content created by authors who built, tested, or used the subject matter from content generated without direct involvement.

Content characteristics that secure citations on Perplexity include authoritative domains such as official documentation and peer-reviewed publications, structured Q&A formats, numbered lists, explicit publication dates, quantitative benchmarks, and proprietary data unavailable elsewhere.
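One common way to make a Q&A structure and an explicit publication date machine-readable is schema.org `FAQPage` markup in JSON-LD. The sketch below assumes that approach; the report does not say Perplexity or any other engine reads JSON-LD specifically, and the helper name `faq_jsonld`, the sample question, and the date are hypothetical.

```python
import json
from datetime import date


def faq_jsonld(pairs: list[tuple[str, str]], published: date) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        # Explicit ISO 8601 publication date.
        "datePublished": published.isoformat(),
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(doc, indent=2)


# Hypothetical content; embed the output in a
# <script type="application/ld+json"> element on the page.
print(
    faq_jsonld(
        [("Which CI tool did the benchmark favor?", "See the results table.")],
        date(2026, 1, 15),
    )
)
```

Each answer is kept as a self-contained string so it can be quoted without the rest of the page.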

ChatGPT's dual-system ranking combines two sets of signals: Bing-side signals, including domain authority, backlinks, keyword relevance, and click-through rates, and ChatGPT-side signals focused on training data quality, contextual understanding, and conversational fit.

The Apple Intelligence integration announced for iOS 18.2 directs Siri queries to ChatGPT, with WWDC 2026 expected to deepen the handoff, expanding ChatGPT's reach beyond direct platform users.

Services Implications

Agencies operating under traditional SEO contracts focused exclusively on Google rankings face immediate strategic gaps when AI search engines surface client competitors through entirely separate indexing systems. The test demonstrates that page-one Google visibility no longer guarantees citation across platforms capturing the majority of AI-assisted search traffic.

Practical optimization now requires platform-specific approaches: ensuring GPTBot access for ChatGPT, optimizing for Brave Search to reach Perplexity, strengthening first-hand experience signals for Google AI Overviews, and producing multimodal content formats for Gemini. Agencies must audit client robots.txt files, verify crawler permissions across multiple bots, and implement structured data that supports extraction as standalone answers rather than full-page experiences.
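The robots.txt audit can be sketched with Python's standard-library parser. This is a minimal example, not a complete audit: GPTBot is the only crawler token named in the report, and the other tokens in `CRAWLERS`, the sample rules, and the `client.example` URL are assumptions for illustration that should be checked against each vendor's current documentation.

```python
from urllib.robotparser import RobotFileParser

# GPTBot comes from the report; the remaining tokens are assumptions.
CRAWLERS = ("GPTBot", "PerplexityBot", "Googlebot", "Bingbot")


def audit_robots(robots_txt: str, page_url: str, crawlers=CRAWLERS) -> dict[str, bool]:
    """Return, per crawler token, whether robots.txt permits fetching page_url."""
    parser = RobotFileParser()
    # Parse text directly so the check works offline on a saved copy.
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, page_url) for bot in crawlers}


# Hypothetical robots.txt that blocks GPTBot site-wide.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

report = audit_robots(SAMPLE, "https://client.example/blog/ci-comparison")
for bot, allowed in report.items():
    print(f"{bot:15} {'allowed' if allowed else 'BLOCKED'}")
```

With the sample rules, GPTBot is reported as blocked while the other three crawlers fall through to the wildcard group and are allowed. To audit a live site, `RobotFileParser.set_url()` followed by `read()` fetches the file instead of parsing a string.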

The divergence in citation sources reveals new competency requirements for agency teams beyond keyword research and backlink analysis. Technical teams need visibility tracking across five separate platforms, content teams require format-specific optimization frameworks, and client reporting must expand beyond Google Analytics to include citation monitoring across fragmented AI search experiences where traditional conversion attribution breaks down entirely.
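Citation monitoring across platforms can start as a simple tally of how often a client's domain is cited per engine. The sketch below assumes the citations have already been collected by some other means; the `Observation` type, the function name, and all sample data are hypothetical.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass(frozen=True)
class Observation:
    """One answer from one platform, with the URLs it cited."""
    platform: str
    query: str
    cited_urls: tuple[str, ...]


def cites_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain or one of its subdomains."""
    host = urlsplit(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def visibility_by_platform(observations, client_domain: str) -> dict[str, float]:
    """Share of observed answers per platform that cite the client domain."""
    totals: dict[str, int] = {}
    hits: dict[str, int] = {}
    for obs in observations:
        totals[obs.platform] = totals.get(obs.platform, 0) + 1
        if any(cites_domain(u, client_domain) for u in obs.cited_urls):
            hits[obs.platform] = hits.get(obs.platform, 0) + 1
    return {p: hits.get(p, 0) / totals[p] for p in totals}


# Hypothetical observations, not data from the report.
observations = [
    Observation("Google AI Overviews", "best ci tool",
                ("https://client.example/ci", "https://other.example/a")),
    Observation("ChatGPT", "best ci tool", ("https://other.example/a",)),
    Observation("Perplexity", "best ci tool",
                ("https://blog.client.example/bench",)),
    Observation("ChatGPT", "ci pricing", ("https://client.example/pricing",)),
]

for platform, share in visibility_by_platform(observations, "client.example").items():
    print(f"{platform:22} {share:.0%}")
```

Reporting a per-platform share rather than a single blended number keeps the fragmentation visible: here the hypothetical client appears in every Google AI Overviews and Perplexity answer but in only half of the ChatGPT answers.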

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
