Search Engine Journal Publishes 90-Day Framework for AI Search Optimization as $750 Billion Shifts to Generative Platforms

Search Engine Journal published a 90-day implementation framework for Generative Engine Optimization on April 30, positioning the discipline as a response to the $750 billion in consumer spending that has shifted toward AI-powered search platforms, according to the guide.

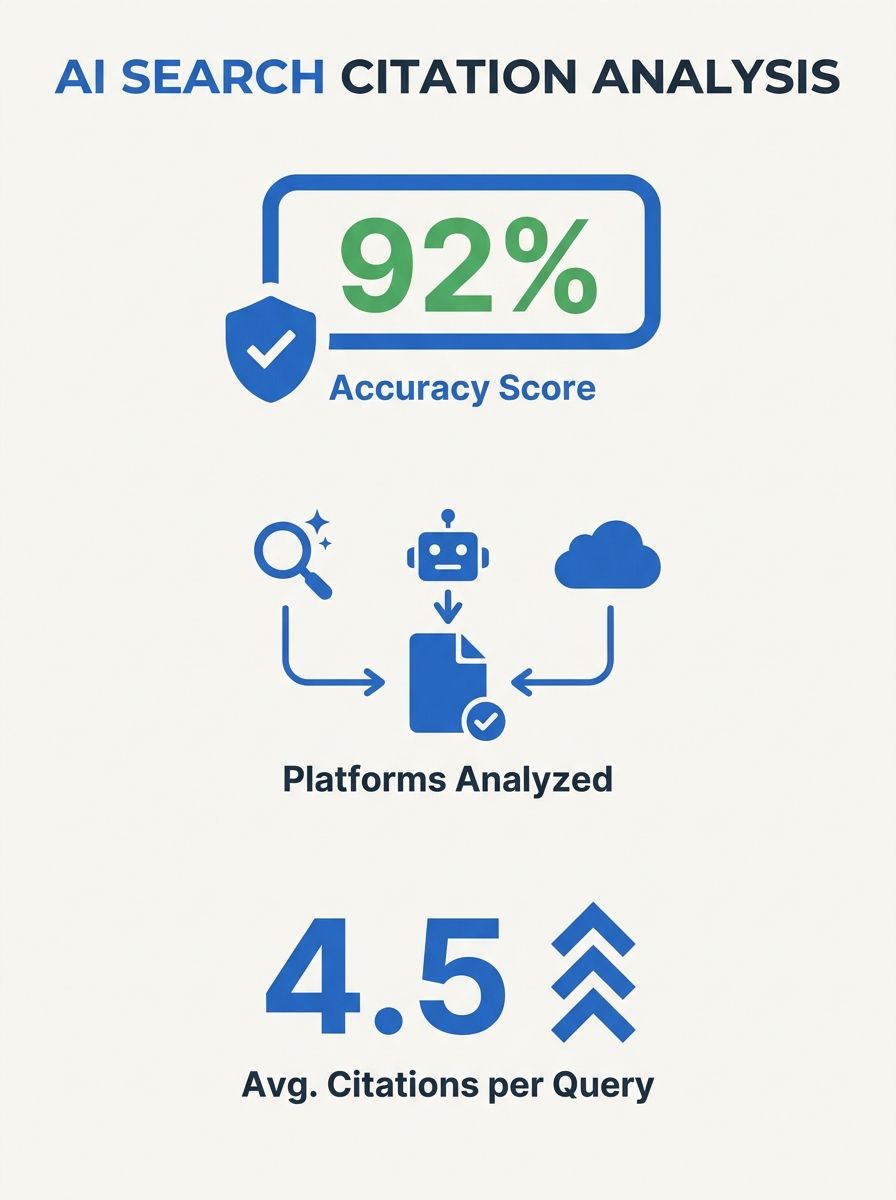

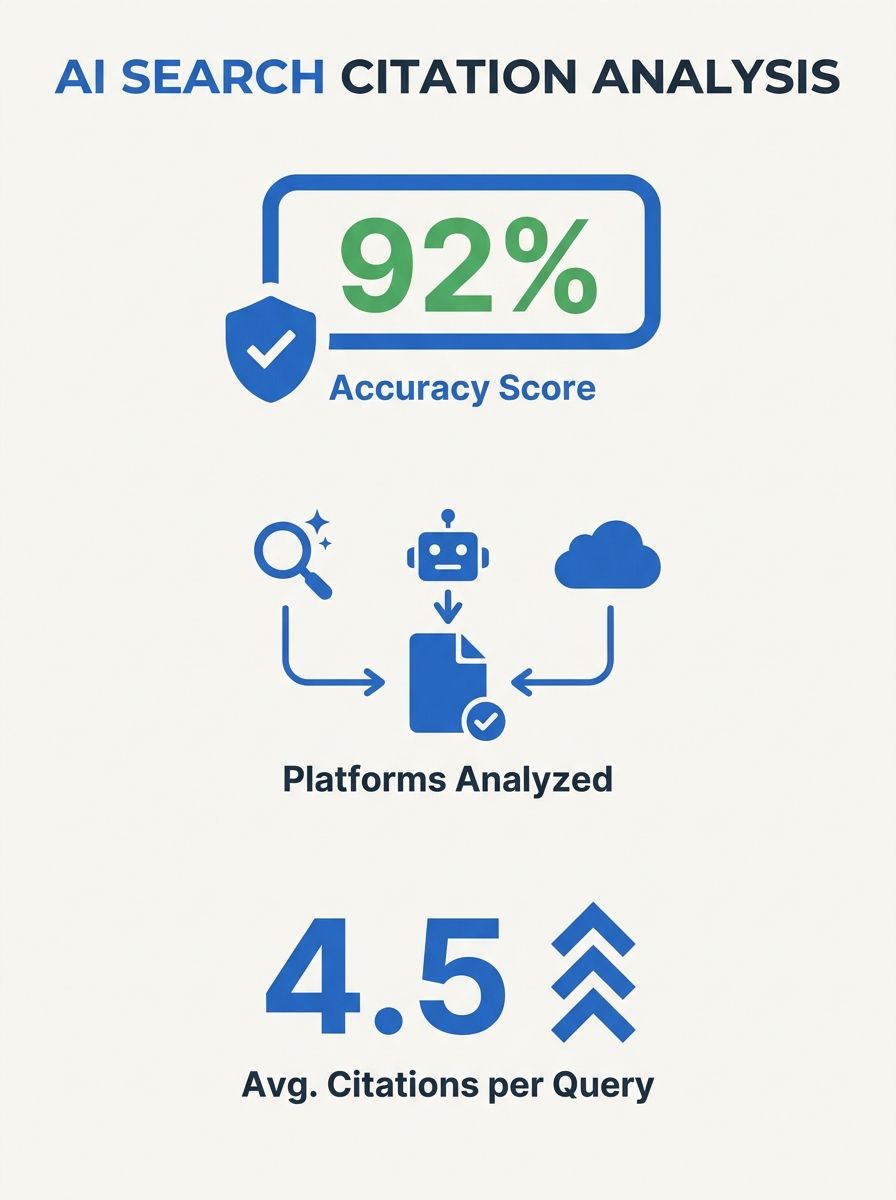

The framework addresses three data points from research conducted by sponsor Uberall: 60 percent of searches now conclude without a click to any website, 68 percent of brands are absent from AI engine recommendations in their category, and AI-powered search systems have captured an estimated $750 billion in consumer transaction volume over the past 18 to 24 months.

The guide defines GEO as an optimization approach that targets AI recommendations rather than traditional search rankings. Where search engine optimization focused on page-level signals to earn placement in results lists, generative engine optimization focuses on entity-level signals to earn citations when AI systems synthesize answers.

Three-Pillar Framework

The published framework structures GEO around three operational pillars.

The first pillar, labeled "source of truth," requires businesses to synchronize basic facts—name, address, phone number, hours, services—across every platform an AI model might reference during training or inference. Inconsistent data across Google Business Profiles, Apple Maps, Yelp, Bing Places, and aggregator networks signals lower entity confidence to machine learning systems, the guide states.
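The guide does not publish tooling for this check. The following is a minimal sketch of a "source of truth" audit, assuming each platform's listing has already been exported into a plain record. The business, the platform values, and the field names are all hypothetical. It normalizes cosmetic differences such as punctuation and phone formatting, then flags any field where platforms still disagree.

```python
import re
from collections import defaultdict

# Hypothetical listing data for a fictional business. In practice each record
# would come from a platform export or API; the field names are assumptions.
LISTINGS = {
    "Google Business Profile": {
        "name": "Example Orthodontics",
        "address": "123 N. Clark St., Chicago, IL",
        "phone": "(312) 555-0100",
        "hours": "Mon-Fri 8-17",
    },
    "Apple Maps": {
        "name": "Example Orthodontics",
        "address": "123 N Clark St, Chicago, IL",
        "phone": "+1 312 555 0100",
        "hours": "Mon-Fri 8-17",
    },
    "Yelp": {
        "name": "Example Orthodontics",
        "address": "123 N Clark St, Chicago, IL",
        "phone": "312-555-0100",
        "hours": "Mon-Fri 8-18",
    },
    "Bing Places": {
        "name": "Example Orthodontics",
        "address": "125 N Clark St, Chicago, IL",
        "phone": "312.555.0100",
        "hours": "Mon-Fri 8-17",
    },
}


def normalize(field: str, value: str) -> str:
    """Strip cosmetic differences so only substantive mismatches remain."""
    if field == "phone":
        # Keep digits only and compare the last 10 (drops a leading +1).
        return re.sub(r"\D", "", value)[-10:]
    # Lowercase, drop periods and commas, collapse runs of whitespace.
    cleaned = value.lower().replace(".", "").replace(",", "")
    return re.sub(r"\s+", " ", cleaned).strip()


def find_inconsistencies(listings: dict) -> dict:
    """Return {field: {normalized value: [platforms]}} for every field
    that has more than one distinct normalized value across platforms."""
    by_field = defaultdict(lambda: defaultdict(list))
    for platform, record in listings.items():
        for field, value in record.items():
            by_field[field][normalize(field, value)].append(platform)
    return {f: dict(v) for f, v in by_field.items() if len(v) > 1}


if __name__ == "__main__":
    for field, groups in find_inconsistencies(LISTINGS).items():
        print(f"{field}:")
        for value, platforms in groups.items():
            print(f"  {value!r} <- {', '.join(platforms)}")
```

In this sample, the name and phone number agree once they are normalized. The address (Bing Places) and the hours (Yelp) are the kind of conflicting signals the guide says lower entity confidence.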

The second pillar, "context engineering," directs content creation toward the questions customers actually ask rather than toward keyword clusters. The framework recommends conversational answers structured as direct responses to spoken queries, citing tests showing that AI engines prefer to extract complete, factual answers rather than keyword-dense text that delays the response.

The third pillar, "orchestration," establishes measurement protocols around citation rates rather than ranking positions. Teams track where brands appear in AI-generated recommendations, refresh content based on citation gaps, and compound visibility across multiple generative platforms over quarterly cycles.
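The guide frames measurement as citation rates rather than rankings but does not define a formula. One plausible reading is the share of tested prompts whose AI answer cited the brand, computed per platform, with the uncited prompts treated as the gaps to refresh. The sketch below assumes that definition and a hypothetical `PromptResult` record filled in by hand after each test. It is an illustration, not the guide's own metric.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PromptResult:
    """One manual test: a customer prompt run on one AI platform."""
    platform: str
    prompt: str
    brand_cited: bool


def citation_rates(results: list) -> dict:
    """Fraction of tested prompts, per platform, whose answer cited the brand."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for r in results:
        totals[r.platform] += 1
        hits[r.platform] += int(r.brand_cited)
    return {p: hits[p] / totals[p] for p in totals}


def citation_gaps(results: list) -> dict:
    """Map each prompt to the platforms where the brand was NOT cited."""
    gaps = defaultdict(list)
    for r in results:
        if not r.brand_cited:
            gaps[r.prompt].append(r.platform)
    return dict(gaps)


# Hypothetical results from one testing cycle.
SAMPLE = [
    PromptResult("ChatGPT", "best orthodontist near Lincoln Park", True),
    PromptResult("ChatGPT", "orthodontist open Saturdays in Lincoln Park", False),
    PromptResult("Perplexity", "best orthodontist near Lincoln Park", True),
    PromptResult("Perplexity", "orthodontist open Saturdays in Lincoln Park", True),
    PromptResult("Gemini", "best orthodontist near Lincoln Park", False),
    PromptResult("Gemini", "orthodontist open Saturdays in Lincoln Park", False),
]

if __name__ == "__main__":
    for platform, rate in citation_rates(SAMPLE).items():
        print(f"{platform}: {rate:.0%}")
    for prompt, platforms in citation_gaps(SAMPLE).items():
        print(f"gap: {prompt!r} on {', '.join(platforms)}")
```

Re-running the same prompt set each cycle and comparing `citation_rates` outputs gives the quarter-over-quarter trend the orchestration pillar describes.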

90-Day Implementation Schedule

The framework divides implementation into three sequential phases.

Week one focuses on data hygiene. Teams audit NAP (name, address, phone number) details across major platforms, verify structured data implementation on location and product pages, and document citation gaps by testing customer queries in ChatGPT, Gemini, Perplexity, and Google AI Overviews. The guide notes that structured data clarity matters more than volume: recent tests indicate that large language models parse schema markup much like standard on-page text, but structured formats reduce entity ambiguity.
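To make "verify structured data implementation" concrete, the sketch below renders one canonical business record as schema.org JSON-LD and compares a page's existing markup against it. The guide does not name a schema type, so using `LocalBusiness` with `PostalAddress` and `OpeningHoursSpecification` is an assumption. The record values and helper names (`CANONICAL`, `build_local_business_jsonld`, `markup_mismatches`) are hypothetical.

```python
import json

# Hypothetical canonical record: the single "source of truth" that every
# listing and every page's markup should agree with. Values are illustrative.
CANONICAL = {
    "name": "Example Orthodontics",
    "street": "123 N Clark St",
    "city": "Chicago",
    "region": "IL",
    "country": "US",
    "phone": "+1-312-555-0100",
    "days": ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"],
    "opens": "08:00",
    "closes": "17:00",
}


def build_local_business_jsonld(rec: dict) -> dict:
    """Render the canonical record as schema.org LocalBusiness JSON-LD."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": rec["name"],
        "telephone": rec["phone"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": rec["street"],
            "addressLocality": rec["city"],
            "addressRegion": rec["region"],
            "addressCountry": rec["country"],
        },
        "openingHoursSpecification": [
            {
                "@type": "OpeningHoursSpecification",
                "dayOfWeek": rec["days"],
                "opens": rec["opens"],
                "closes": rec["closes"],
            }
        ],
    }


def markup_mismatches(markup: dict, rec: dict) -> list:
    """List the name, phone, and address fields where a page's existing
    JSON-LD disagrees with the canonical record."""
    expected = build_local_business_jsonld(rec)
    problems = [k for k in ("name", "telephone") if markup.get(k) != expected[k]]
    page_address = markup.get("address", {})
    for key, value in expected["address"].items():
        if page_address.get(key) != value:
            problems.append(f"address.{key}")
    return problems


if __name__ == "__main__":
    jsonld = build_local_business_jsonld(CANONICAL)
    print('<script type="application/ld+json">')
    print(json.dumps(jsonld, indent=2))
    print("</script>")
```

Generating markup from the same record that feeds the listings keeps page-level structured data and platform data from drifting apart, which is the ambiguity the guide warns about.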

Days seven through 30 shift to content production. For each documented citation gap, teams build or optimize pages that answer specific prompts. The framework recommends one prompt per page, direct factual responses over long-form guides, and explicit local details, dates, and named authors to increase citation confidence. This phase typically reveals differences between content optimized for traditional rankings and content AI systems actively cite, according to the guide.
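The "one prompt per page" rule can be checked mechanically against a content plan. This minimal sketch assumes the plan is kept as a hypothetical mapping from page path to the prompts that page targets. It reports pages that try to answer more than one prompt and citation gaps that no page covers yet. The paths and prompts are illustrative.

```python
def check_content_plan(gaps: list, plan: dict) -> tuple:
    """gaps: prompts the brand is not yet cited for.
    plan: {page path: [prompts that page targets]} (hypothetical structure).
    Returns (pages targeting more than one prompt, gaps no page covers)."""
    overloaded = {page: prompts for page, prompts in plan.items() if len(prompts) > 1}
    covered = {p for prompts in plan.values() for p in prompts}
    uncovered = [g for g in gaps if g not in covered]
    return overloaded, uncovered


# Hypothetical citation gaps and draft plan.
GAPS = [
    "best orthodontist near Lincoln Park",
    "orthodontist open Saturdays in Lincoln Park",
    "orthodontist consultation cost in Lincoln Park",
    "emergency orthodontist in Lincoln Park",
]
PLAN = {
    "/orthodontist-lincoln-park": [
        "best orthodontist near Lincoln Park",
        "orthodontist consultation cost in Lincoln Park",
    ],
    "/saturday-hours": ["orthodontist open Saturdays in Lincoln Park"],
}

if __name__ == "__main__":
    overloaded, uncovered = check_content_plan(GAPS, PLAN)
    for page, prompts in overloaded.items():
        print(f"split {page}: targets {len(prompts)} prompts")
    for prompt in uncovered:
        print(f"no page yet for: {prompt!r}")
```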

Days 30 through 60 target off-page authority. The framework advises teams to identify sites that already rank for customer prompts and appear frequently in AI citations, then pursue mentions on those domains. The guide argues that topical authority outweighs domain authority for citation rates—a specialist publication with category relevance often generates more AI citations than larger general-audience publishers.

Research Methodology and Sponsorship

Uberall sponsored the guide's publication. The company provides location marketing software for multi-location businesses. The $750 billion figure, 60 percent zero-click rate, and 68 percent brand absence statistics derive from Uberall research into AI search behavior, though the guide does not specify sample size, time period, or methodology for those measurements.

The framework's structural resemblance to traditional SEO implementation schedules—audit, content optimization, authority building—reflects deliberate design. The guide positions GEO as an evolution of existing search optimization practices rather than a replacement discipline, arguing that the compounding visibility model remains valid while the surfaces AI systems read from have changed.

Local businesses and multi-location brands represent the framework's primary audience. The guide references queries like "best orthodontist near Lincoln Park" and "coffee shops in Berlin that allow dogs" as examples of the conversational prompts GEO targets, aligning with patterns documented in earlier research on how AI Overviews are reshaping local search visibility.

Citation Economics Shift

The framework identifies a structural shift in off-page authority economics. Traditional link building prioritized high-domain-authority publishers on the assumption that search engines weight those signals more heavily. For AI citations, the guide argues, relevance and existing citation rates predict inclusion better than domain metrics.

Teams following the framework re-run the Phase 1 (week one) test prompts at 30-day intervals, track which domains appear repeatedly in AI-generated citations, and fold those domains into their outreach targets. The guide describes this as a shortlist approach: fewer, more topical placements rather than volume-based campaigns.
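The shortlist step amounts to counting how often each domain is cited across repeated test runs. The sketch below assumes each 30-day run is stored as a list of the URLs cited in the AI answers. It counts a domain at most once per run, so one site with many cited pages does not dominate, and it keeps domains cited in at least `min_runs` runs. The domains use the reserved `.example` suffix and are hypothetical. `str.removeprefix` requires Python 3.9 or later.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_shortlist(runs: list, min_runs: int = 2, top_n: int = 10) -> list:
    """runs: one list of cited URLs per test run.
    Returns [(domain, runs citing it)] for domains cited in >= min_runs runs,
    most frequent first (ties broken alphabetically), capped at top_n."""
    counts = Counter()
    for cited_urls in runs:
        # A set, so each domain counts at most once per run.
        domains = {urlparse(u).netloc.lower().removeprefix("www.") for u in cited_urls}
        counts.update(domains)
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(d, n) for d, n in ranked if n >= min_runs][:top_n]


# Hypothetical citations collected in three runs, 30 days apart.
RUNS = [
    ["https://www.localparents.example/best-orthodontists",
     "https://www.bignews.example/health",
     "https://orthoreview.example/chicago"],
    ["https://localparents.example/best-orthodontists",
     "https://orthoreview.example/lincoln-park",
     "https://orthoreview.example/chicago"],
    ["https://orthoreview.example/chicago",
     "https://cityblog.example/post"],
]

if __name__ == "__main__":
    for domain, n in domain_shortlist(RUNS):
        print(f"{domain}: cited in {n} of {len(RUNS)} runs")
```

In this made-up data, the specialist site outranks the general news domain on repeat citations, which mirrors the guide's argument that topical relevance predicts inclusion better than domain size.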

The shift mirrors broader changes in how AI search systems evaluate authority signals, where models trained on diverse data sources prioritize factual consistency across multiple mentions over single high-authority citations.

What Happens Next

Agencies implementing the framework face a measurement challenge: citation tracking across generative platforms requires manual prompt testing or specialized software, neither of which integrates cleanly with existing SEO reporting stacks. The 90-day timeline assumes teams can dedicate resources to query testing, gap documentation, and iterative content updates without disrupting existing optimization work.

The framework's emphasis on entity-level optimization rather than page-level signals aligns with technical shifts documented in recent analyses of AI crawler behavior, where models increasingly parse business information as structured entities rather than keyword-bearing documents. Businesses that have already invested in local SEO data hygiene—consistent NAP, verified business profiles, structured markup—enter GEO implementation with foundational work complete.

The $750 billion figure, if validated, suggests AI-powered search has moved beyond experimental adoption into material commercial impact. For agencies evaluating whether to add GEO services, the decision depends less on whether AI search matters and more on how quickly client revenue concentrates in zero-click, AI-mediated transactions where traditional visibility metrics no longer apply.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
