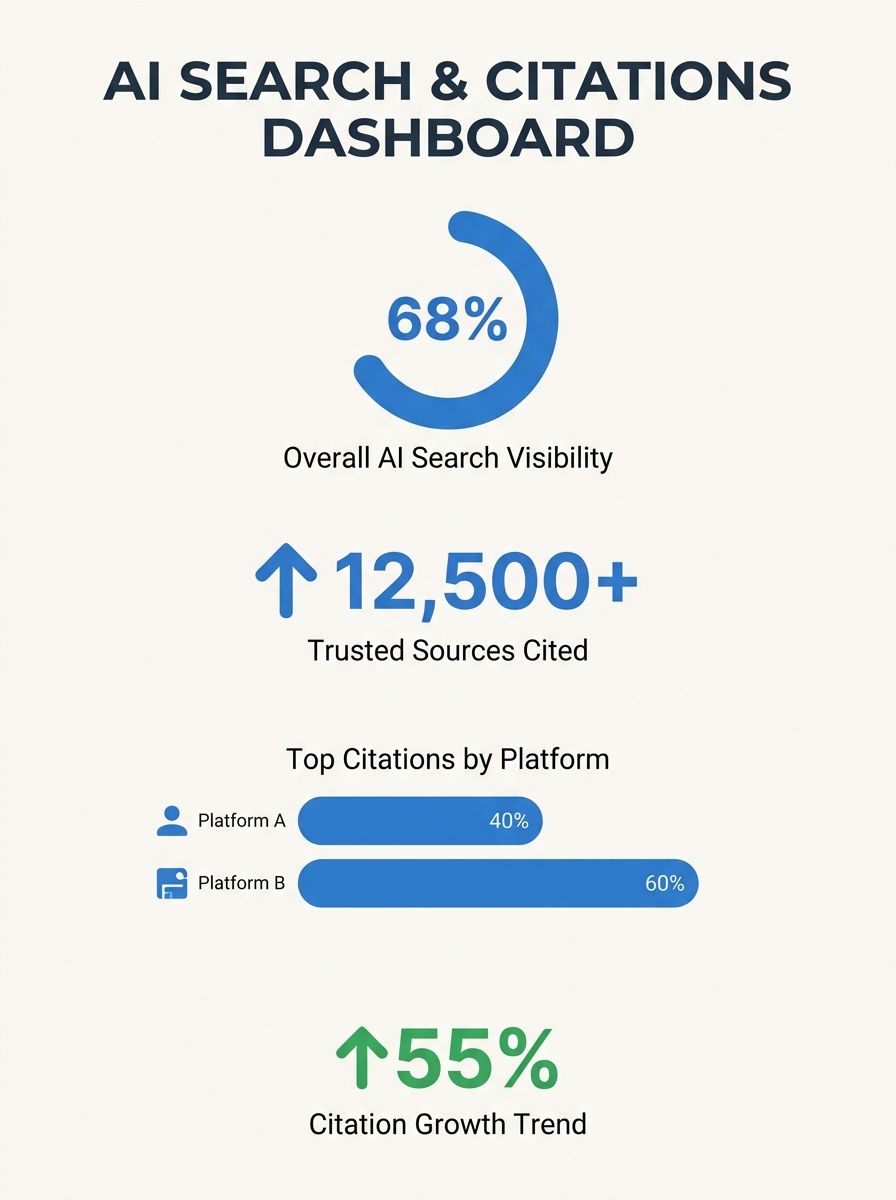

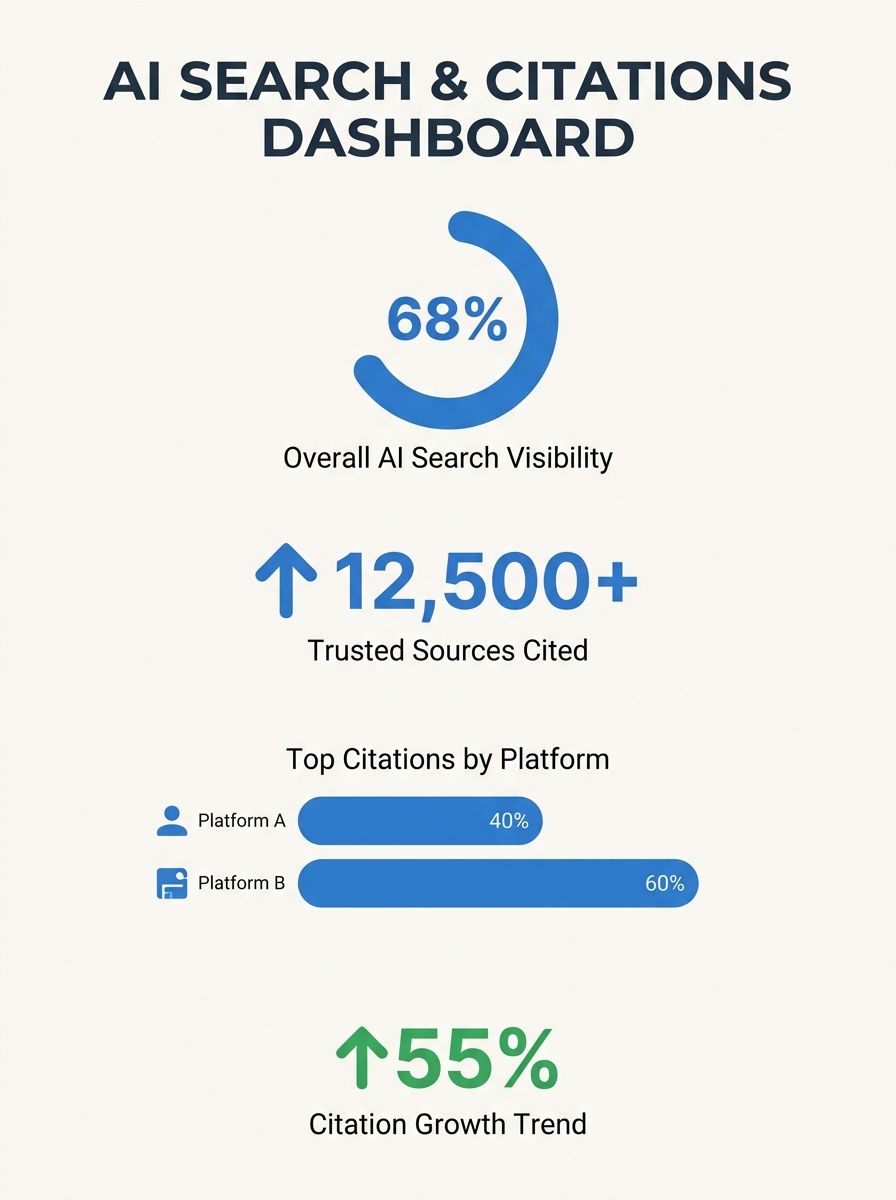

Search Engine Journal Releases 90-Day GEO Framework as 68% of Brands Vanish from AI Search Recommendations

Nearly seven in ten brands are absent from AI-generated search recommendations in their own categories, prompting Search Engine Journal to publish a 90-day implementation framework for Generative Engine Optimization (GEO) on April 30. The playbook addresses what the publication characterizes as a "fast-moving visibility problem," citing research showing that $750 billion in consumer spending has shifted toward AI-powered search platforms.

According to data from Uberall cited in the framework, approximately 60 percent of searches now conclude without a single click to an external website, while 68 percent of brands fail to appear when AI engines generate category recommendations. The framework positions GEO as a discipline distinct from traditional SEO: it optimizes entities for recommendation rather than pages for ranking.

Three-Phase Implementation Structure

The published framework divides the 90-day period into three distinct phases. The first phase, in week one, focuses on foundational data analysis, requiring teams to audit NAP consistency (name, address, phone) across Google Business Profiles, Apple Maps, Yelp, and Bing Places. The framework instructs practitioners to test unbranded queries, such as "best orthodontist near Lincoln Park" or "coffee shops in Berlin that allow dogs," across ChatGPT, Gemini, Perplexity, and Google AI Overviews to identify citation gaps.
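
To make the NAP audit concrete, here is a minimal Python sketch of a consistency check. It is an illustration and not part of the published framework: the function names, the sample listings, and the normalization rules (including the assumption of 10-digit North American phone numbers) are all hypothetical.

```python
import re
from collections import defaultdict

FIELDS = ("name", "address", "phone")

def _clean_text(value):
    """Lowercase, drop punctuation, and collapse runs of whitespace."""
    no_punct = re.sub(r"[^\w\s]", "", value.lower())
    return re.sub(r"\s+", " ", no_punct).strip()

def normalize_nap(name, address, phone):
    """Reduce one listing to a comparable (name, address, phone) tuple."""
    digits = re.sub(r"\D", "", phone)
    # Assumption: 10-digit North American numbers, so a leading country code is dropped.
    return _clean_text(name), _clean_text(address), digits[-10:]

def find_nap_mismatches(listings):
    """Return each field whose normalized value differs between platforms.

    `listings` maps a platform label to a dict with "name", "address", "phone".
    The result maps field -> {normalized value: [platforms using that value]}.
    """
    seen = {field: defaultdict(list) for field in FIELDS}
    for platform, record in listings.items():
        normalized = normalize_nap(record["name"], record["address"], record["phone"])
        for field, value in zip(FIELDS, normalized):
            seen[field][value].append(platform)
    return {field: dict(values) for field, values in seen.items() if len(values) > 1}

# Hypothetical listings for a single location, entered by hand for illustration.
listings = {
    "google": {"name": "Lincoln Park Orthodontics",
               "address": "123 Example St., Chicago, IL", "phone": "(312) 555-0100"},
    "apple_maps": {"name": "Lincoln Park Orthodontics",
                   "address": "123 Example St, Chicago, IL", "phone": "+1 312-555-0100"},
    "yelp": {"name": "Lincoln Park Orthodontics",
             "address": "123 Example Street, Chicago IL", "phone": "312.555.0100"},
}

mismatches = find_nap_mismatches(listings)
for field, values in mismatches.items():
    print(field, values)
```

In this sample the three phone formats normalize to the same digits, so only the address is flagged, because "St" and "Street" are treated as different strings. Whether such abbreviations count as a real inconsistency is a judgment call that a fuller audit would need to settle.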

Days 7 through 30 shift to what the framework terms "context engineering," building content structured around complete question-answer pairs rather than keyword density. The playbook specifies one dedicated page per customer prompt, with direct factual responses prioritized over lengthy guides. According to the framework, AI engines extract complete answers and reward specificity over vague claims.

The final phase, spanning days 30 through 60, targets off-page authority through what the framework calls "surgical placement." Rather than pursuing high-domain-authority publishers, the playbook directs teams toward sites already cited by AI engines in their category. The framework notes that topical authority from specialist publications often generates more AI citations than placements on larger generic platforms.

Citation Economics vs. Traditional Link Building

The framework draws explicit distinctions between GEO and traditional link-building strategies. Where SEO practitioners historically pursued top-tier publisher placements based on domain authority metrics, the playbook argues that AI engines source from credible, topical publications regardless of overall site size. The framework recommends practitioners re-run Phase 1 prompts to identify which domains appear consistently in AI-generated citations, using that list as a targeting guide.
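
The targeting step described above can be sketched as a simple tally. The Python below is a hedged illustration, not the framework's own tooling: it assumes the cited URLs from each prompt run have already been collected by hand or by some other means, and the function names, the `min_share` threshold, and the sample URLs are all hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

def domain_of(url):
    """Extract the host from an absolute URL, without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host

def consistent_domains(citations_by_run, min_share=0.5):
    """Rank domains by the share of runs in which they were cited.

    `citations_by_run` holds one list of cited URLs per (prompt, engine) run.
    A domain is counted once per run, however many of its pages were cited.
    Only domains cited in at least `min_share` of the runs are returned.
    """
    counts = Counter()
    for urls in citations_by_run:
        counts.update({domain_of(url) for url in urls})
    total = len(citations_by_run)
    return [(domain, n / total) for domain, n in counts.most_common()
            if n / total >= min_share]

# Hypothetical citations recorded from four prompt runs.
runs = [
    ["https://www.example-dental-journal.com/a", "https://example-reviews.org/x"],
    ["https://example-dental-journal.com/b"],
    ["https://example-reviews.org/y", "https://www.example-dental-journal.com/c"],
    ["https://example-news.com/z"],
]

for domain, share in consistent_domains(runs):
    print(f"{domain}: cited in {share:.0%} of runs")
```

Counting each domain once per run keeps a single response with many links to one site from dominating the list, which fits the framework's emphasis on domains that appear consistently rather than frequently within one answer.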

The playbook organizes GEO work around three structural pillars: source of truth (consistent NAP data across platforms), context engineering (content matching natural language queries), and orchestration (ongoing measurement and refinement). The framework states that inconsistent business signals across platforms train AI engines to assign lower confidence scores to entities, reducing citation likelihood.

Related guidance on AI search optimization frameworks and local SEO visibility tactics has emerged across industry publications in recent months as generative platforms reshape search economics.

Context and Outlook

The framework's April 30 release positions GEO as a response to structural changes in search behavior documented over the past 18 to 24 months. The 68 percent invisibility rate among brands suggests most multi-location businesses remain optimized for ranking algorithms rather than generative recommendation systems, creating what the playbook terms a "blind spot" in current marketing strategies.

For CMOs evaluating SEO agencies, the framework's publication signals a discipline shift requiring new measurement approaches. Traditional ranking metrics do not capture citation frequency in AI-generated responses, while NAP consistency and structured data quality, historically considered foundational hygiene, now directly influence whether generative engines surface a brand at all. Agencies demonstrating fluency in prompt testing and citation tracking may offer more relevant capabilities than those optimizing exclusively for SERP position.

The 90-day timeline reflects the framework's emphasis on iterative testing over comprehensive launches. By structuring phases around specific visibility gaps identified through prompt testing, the playbook provides multi-location businesses a method for entering AI recommendation ecosystems without requiring complete content inventories or site rebuilds. Whether the approach proves scalable beyond early adopters will depend on how quickly generative platforms standardize their sourcing criteria and whether traditional ranking factors retain influence alongside citation-based visibility.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.
