AI Integration in Search: What Google's 2026 Algorithm Update Means for Your SEO Benchmarks

Your number one ranking might be worthless now. Not in some hypothetical future where AI takes over search, but right now, two days after Google confirmed its March 2026 broad core update finished rolling out on April 8th. I've spent the past 48 hours pulling data from seven client accounts, and the pattern is unmistakable: pages that rank in positions one through three are seeing 15-30% less organic traffic than they did three weeks ago. The rankings didn't change. The traffic did. That distinction is the entire story of the AI search algorithm impact in 2026, and if you're not rethinking your benchmarks right now, you're measuring the wrong things.

What Actually Happened on March 27th

Google's first core update of 2026 began rolling out on March 27th at 2:00 AM PT and completed its 12-day rollout on April 8th at 9:12 AM ET. Twelve days is actually fast. Google initially projected two weeks, and previous core updates have dragged on for a month or more. But the speed of the rollout doesn't mean the impact was small. It means Google was confident in the changes.

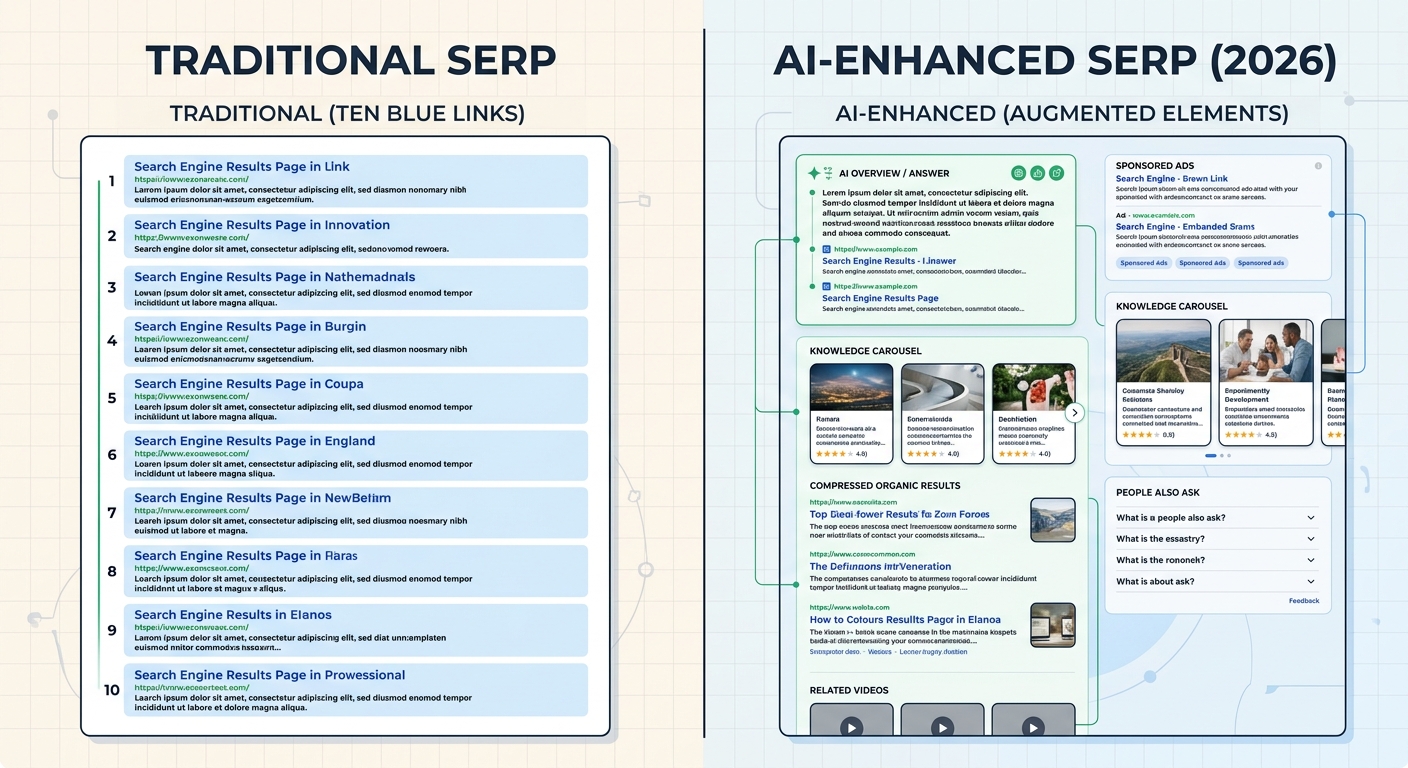

Here's what makes this update different from every core update before it: this one explicitly recalibrates quality signals in the context of AI-driven search surfaces. AI Overviews, AI Mode, and Gemini integration aren't separate features bolted onto traditional search anymore. They're woven into how Google evaluates and surfaces content. As Oltre AI's analysis put it, this update lands in a period when search visibility is being evaluated across more than the classic ten blue links.

And to make things even more chaotic, Google completed a separate spam update on March 24-25th in under 20 hours, a record-fast turnaround that compounded volatility right before the core update began. If your analytics looked like a seismograph during the last two weeks of March, that's why.

The Visibility Gap: Rankings vs. Actual Exposure

This is the concept that matters most right now. I keep calling it the visibility gap, and I think it'll become standard vocabulary in our industry within months.

Here's the problem: Google's algorithm updates now reshape visibility, not just rankings. Your page can sit comfortably at position two for a high-volume keyword and still lose the majority of its traffic because an AI Overview answered the query directly, a carousel pushed organic results below the fold, or Gemini synthesized your content into a summary that satisfied the user without a click.

Up to 60% of Google searches now result in zero clicks according to recent AI SEO statistics. Users get their answers directly on the results page. And 88.1% of queries that trigger AI-generated information snapshots are informational in nature. If your content targets informational queries, and most content strategies lean heavily informational, you're competing not just for rankings but for whether a click happens at all.

I've seen this firsthand with a B2B SaaS client. Their "how to" content ranks well across dozens of keywords. Post-update, traffic to those pages dropped 22% while rankings stayed flat. The reason? GenOptima's March 2026 analysis confirmed that informational prompts containing phrases like "how to," "best practices," and "techniques" trigger Gemini web search 100% of the time. Gemini is answering these queries itself, pulling from pages like my client's but sending far fewer users through to the source.

This isn't a bug in the system. It's the new architecture of search. As one speaker at SEJ Live noted, between ads, carousels, map packs, and image results, a number one ranking can be virtually invisible. If you went through a detailed audit of your site's performance after this update and only looked at position data, you missed the point entirely.

Your SEO Benchmarks Are Outdated. Here's What to Track Instead.

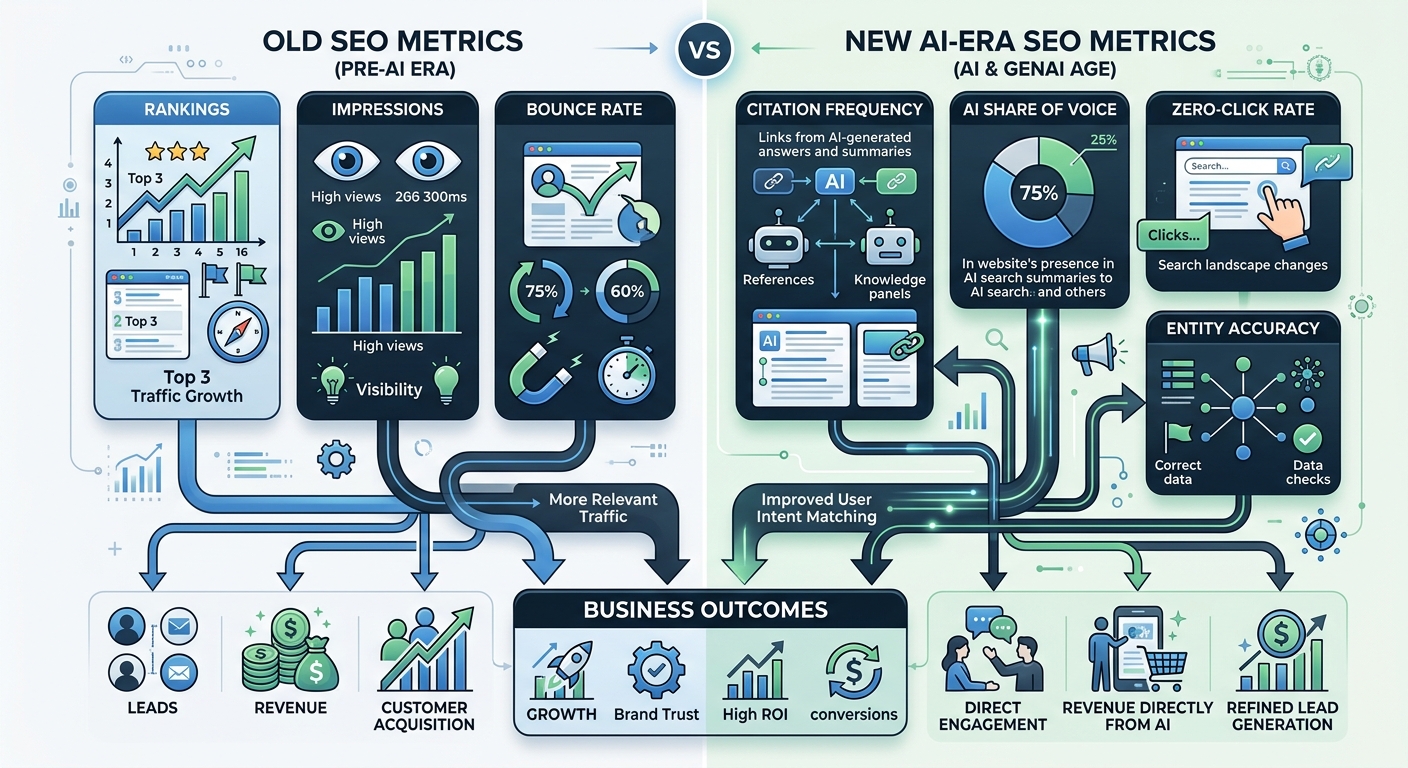

The old dashboard of rankings, clicks, CTR, and conversions isn't wrong. It's incomplete. SEO benchmarking in the AI era demands a second layer of measurement that accounts for how AI systems perceive, cite, and summarize your content.

Your benchmarking framework should tie every metric to a business outcome. If a metric can't be connected to traffic that converts, visibility that builds brand authority, or competitive positioning that expands your addressable search demand, it doesn't belong in your monthly review. This is especially true now because the temptation is to add more metrics without pruning the ones that no longer tell you anything useful.

The Two-Dashboard Approach

Here's what I'm recommending to every client right now:

Dashboard One: Traditional SEO Performance

- Organic click-through rates segmented by query type (informational, transactional, navigational)
- Conversion rates from organic traffic, not just volume
- Position tracking focused on transactional and commercial intent keywords
- Page-level traffic trends compared to ranking stability

Dashboard Two: AI Visibility Performance

- Citation frequency in AI Overviews and AI Mode results
- Brand mention sentiment and accuracy across Gemini, ChatGPT, and Perplexity
- Share of voice in AI-generated answer results for your core topics
- Entity recognition accuracy, meaning how correctly AI systems describe your brand and offerings

The Conductor 2026 AEO/GEO Benchmarks Report tracks which brands have the largest share of voice in AI Overview results by industry. If you're not monitoring your competitive metrics in post-AI search surfaces, you're flying blind while your competitors gain ground.
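If you don't have a dedicated tool yet, two of the Dashboard Two metrics can be approximated from a manual log. The sketch below is only an illustration under my own assumptions: the log structure, the field names, and the brand names are all hypothetical, and "share of voice" is computed here as a simple share of citations, which may differ from how commercial reports define it.

```python
from collections import Counter

# Hypothetical manual log: one entry per (query, AI surface) check, listing
# the brands cited in the generated answer. All names are made up.
observations = [
    {"query": "crm onboarding checklist", "surface": "AI Overviews",
     "cited": ["ExampleCo", "RivalOne"]},
    {"query": "crm onboarding checklist", "surface": "Gemini",
     "cited": ["RivalOne"]},
    {"query": "crm migration steps", "surface": "AI Overviews",
     "cited": ["ExampleCo"]},
    {"query": "crm migration steps", "surface": "Perplexity",
     "cited": []},
]


def citation_frequency(observations, brand):
    """Share of logged answers that cite the brand at least once."""
    if not observations:
        return 0.0
    hits = sum(1 for obs in observations if brand in obs["cited"])
    return hits / len(observations)


def share_of_voice(observations):
    """Each brand's share of all citations across the logged answers."""
    counts = Counter(b for obs in observations for b in obs["cited"])
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


print(citation_frequency(observations, "ExampleCo"))
print(share_of_voice(observations))
```

Re-running the same set of queries on a fixed schedule matters more than the exact formula, because the trend is what the dashboard is for.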

What the Update Rewards (and Punishes)

The March 2026 core update reinforces a content quality framework that's been building for years but is now non-negotiable. According to ALM Corp's analysis, sufficient content in 2026 has specific traits: a direct answer, clean structure, factual accuracy, useful context, practical next steps, and a point of view grounded in real expertise.

That last one is the killer. A point of view grounded in real expertise. This isn't about E-E-A-T as a checkbox exercise. It's about whether your content says something that only someone with actual experience could say.

The Information Gain Signal

Google now evaluates how much new information a page contributes compared to what already exists in the top results. Pages that rephrase competitors without adding original data, proprietary insights, or practitioner experience are losing visibility. I've been saying this for years, but this update made it measurable. We saw a client's generic "what is" pages drop 30-40% in traffic while their case-study-driven content held steady or gained.
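To get a rough feel for whether a page mostly rephrases what already ranks, you can compare its wording against competitor pages. To be clear, this is not how Google measures information gain, and nothing here comes from Google; it is a crude overlap proxy I'm assuming for illustration, using word trigrams, with hypothetical function names. It cannot tell original data from merely unusual phrasing.

```python
import re


def shingles(text, n=3):
    """Return the set of lowercase word n-grams in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def novelty_ratio(page_text, competitor_texts, n=3):
    """Fraction of the page's n-grams that appear in no competitor text."""
    page = shingles(page_text, n)
    if not page:
        return 0.0
    seen = set()
    for text in competitor_texts:
        seen |= shingles(text, n)
    return len(page - seen) / len(page)


# Placeholder strings; in practice, pass the extracted body text of each page.
my_page = "our 2025 client survey found onboarding time fell after the switch"
competitors = [
    "onboarding time is an important metric for any crm rollout",
    "a crm rollout should track onboarding time and adoption",
]
print(novelty_ratio(my_page, competitors))
```

A low ratio is a prompt to ask what the page adds, not a verdict. The useful follow-up is still editorial: original data, proprietary insight, or practitioner experience.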

AI-Generated Content Gets Filtered, Not Banned

The update doesn't ban AI-generated content. But content lacking human oversight or unique insight faces stricter filtering. The pattern from early data is clear:

- AI-assisted content with human editing and real examples: stable or gaining
- AI-drafted content with light editing and generic coverage: declining
- Pure AI mass production without human input: significant drops
- Human-written content with original data: gaining strongly

This aligns with the broader industry shift toward what some are calling the "Authenticity Premium." Verifiable expertise and a distinct brand perspective are what determine which businesses get trusted, cited, and surfaced by AI search systems. Real voices outperform algorithmic volume. If you're thinking about how AI fits into your SEO workflow, the answer is as an assistant to human expertise, not a replacement for it.

Page-Level Authority Evaluation

Here's a change that caught a lot of enterprise sites off guard: the update implements page-level authority evaluation. High-authority domains can no longer protect low-quality pages. Weak or thin content sections, even on trusted sites, are being demoted independently. If you're running an enterprise site with hundreds of legacy pages that haven't been audited, this update may have already hit you. The conversation about balancing technical SEO and content quality in recovery is one I'm having with multiple enterprise teams this week.

Algorithm Update Ranking Stability: What the Data Shows

I want to be specific about what I'm seeing in the data, because vague "things are changing" commentary helps nobody.

Across the accounts I manage or advise on, here are the patterns from the first 48 hours of post-rollout data:

Transactional keywords showed the least volatility. If your pages target buying intent, your rankings likely held. The AI search algorithm impact in 2026 hits informational content hardest.

YMYL (Your Money or Your Life) topics saw the biggest swings. 73% of top-ranking YMYL pages now feature verifiable author credentials. If your health, finance, or legal content doesn't have clear author attribution with real credentials, expect losses.

Sites with LCP under 2.5 seconds were disproportionately represented in stable or gaining positions. Sites exceeding 3 seconds on Largest Contentful Paint saw 23% more traffic loss than faster competitors.

Pages with structured data markup appeared more frequently in AI Overview citations. Schema isn't optional anymore; it's how AI systems understand what your content covers.
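For the author-credential and structured-data points above, here is a minimal sketch of schema.org Article markup with an attributed Person author, generated in Python. The helper name and every value in it are placeholders I've assumed for illustration. The types and properties (`Article`, `Person`, `headline`, `author`, `jobTitle`, `sameAs`) are standard schema.org vocabulary, but adding them is no guarantee of being cited.

```python
import json


def article_jsonld(headline, author_name, author_url, job_title):
    """Build schema.org Article markup with an attributed Person author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            "sameAs": [author_url],
        },
    }
    return json.dumps(data, indent=2)


# Placeholder values; swap in the real author and a profile URL you control.
markup = article_jsonld(
    headline="Example headline",
    author_name="Jane Example",
    author_url="https://www.example.com/authors/jane-example",
    job_title="Certified Financial Planner",
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed script tag goes in the page's HTML. Keep the markup consistent with the visible byline, since markup that contradicts the page undermines the point of adding it.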

For anyone dealing with unexplained drops, a systematic approach to diagnosing visibility issues is worth following before making reactive changes. The worst thing you can do right now is panic-edit pages based on 48 hours of data.

What You Should Do This Week

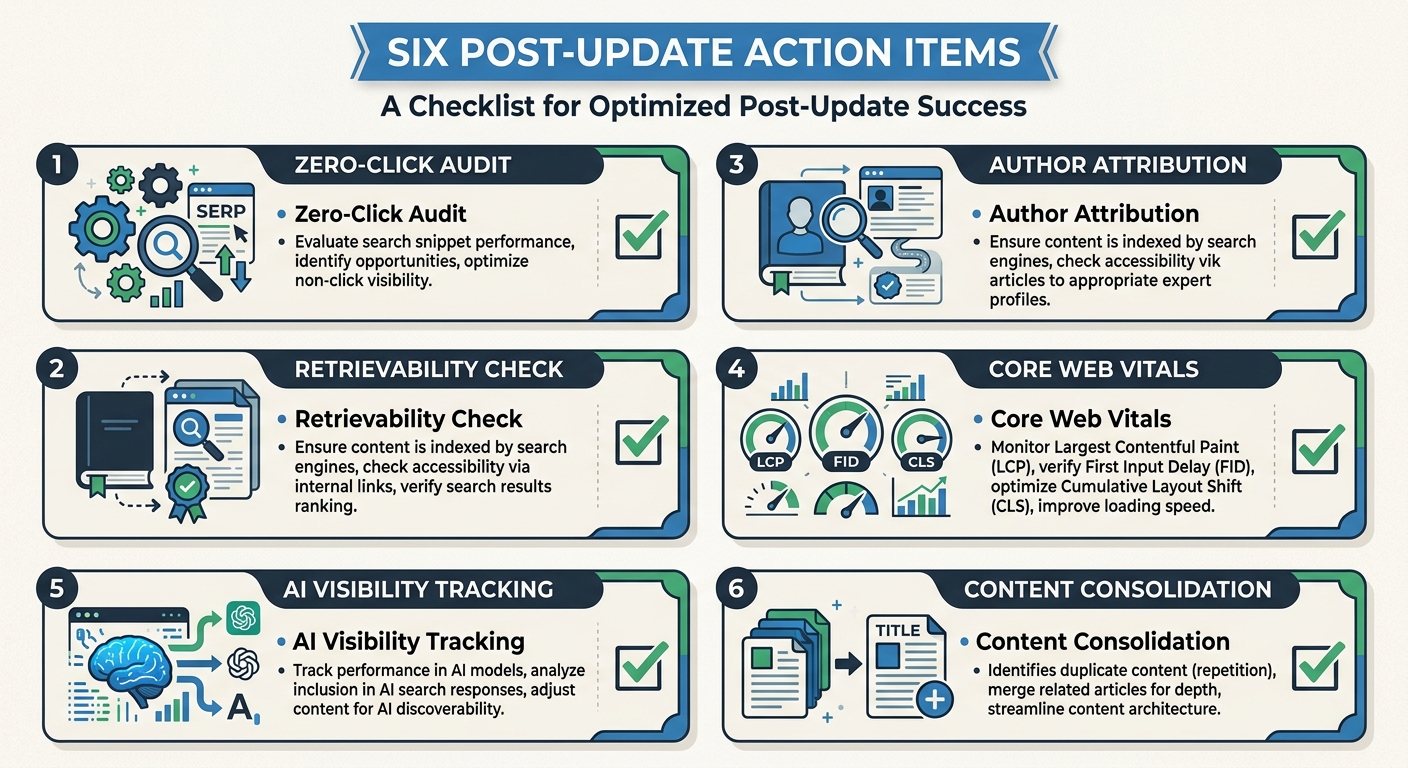

Here's my practical playbook for the next 7-14 days. Not theory. Specific actions.

Pull your zero-click rate by query type. Use Google Search Console to compare impressions-to-clicks ratios for your top 50 keywords before and after March 27th. The queries where impressions held but clicks dropped are the ones being answered by AI Overviews.

Audit your top 20 pages for retrievability. Can an AI system easily extract a direct answer from your content? Look at your opening paragraphs. If they don't contain a clear, concise answer to the primary query within the first 100 words, restructure them.

Check author attribution on YMYL content. Every page covering health, finance, legal, or safety topics should have a visible author bio with verifiable credentials. LinkedIn profiles count. "Written by Admin" does not.

Run a Core Web Vitals check. An LCP of 2.5 seconds or less and an INP of 200 milliseconds or less are now baseline requirements, not nice-to-haves. If your pages exceed either threshold, fix that first. Technical performance directly impacts whether AI systems select your content.

Start tracking AI visibility. Pick one tool, even a manual process of searching your brand terms in Gemini and ChatGPT, and document what these systems say about you today. That's your baseline.

Consolidate thin content. If you have multiple pages targeting variations of the same topic with shallow coverage, merge them into a single authoritative resource. The page-level authority filter makes thin content a liability, not just dead weight.
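The first step in that list, finding queries where impressions held but clicks dropped, can be sketched in a few lines once you have per-query clicks and impressions for two equal-length date ranges on either side of March 27th. The input shape, the function names, the sample numbers, and both thresholds below are my own assumptions for illustration, not a Search Console export format or a recommended cutoff.

```python
def ctr(clicks, impressions):
    """Click-through rate; 0.0 when there were no impressions."""
    return clicks / impressions if impressions else 0.0


def flag_click_loss(before, after, max_impression_drop=0.10, min_ctr_drop=0.20):
    """Return queries whose impressions roughly held while CTR fell.

    before/after map query -> (clicks, impressions). Both thresholds are
    arbitrary starting points and should be tuned to your own data.
    """
    flagged = []
    for query, (clicks_b, impr_b) in before.items():
        if query not in after or impr_b == 0:
            continue
        clicks_a, impr_a = after[query]
        ctr_b, ctr_a = ctr(clicks_b, impr_b), ctr(clicks_a, impr_a)
        if ctr_b == 0:
            continue
        impressions_held = impr_a >= impr_b * (1 - max_impression_drop)
        ctr_change = (ctr_a - ctr_b) / ctr_b
        if impressions_held and ctr_change <= -min_ctr_drop:
            flagged.append((query, round(ctr_b, 4), round(ctr_a, 4),
                            round(ctr_change, 3)))
    # Largest relative CTR drop first.
    return sorted(flagged, key=lambda row: row[3])


# Made-up numbers for two queries.
before = {"how to set up crm": (120, 4000), "buy crm software": (90, 1500)}
after = {"how to set up crm": (70, 4100), "buy crm software": (88, 1480)}

for row in flag_click_loss(before, after):
    print(row)
```

Queries that surface here are candidates for a manual check of the live results page. A CTR drop alone doesn't prove an AI Overview is the cause, and with only a short post-update window the numbers will be noisy.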

The Bigger Picture

Here's my honest take: this update isn't surprising if you've been paying attention, but it is a meaningful threshold. The gap between sites that optimize for AI-driven discovery and those that don't is going to widen fast. 86% of SEO professionals now integrate AI solutions into their workflow. If you're not among them, you're not just behind the curve. You're measuring a game that's already changed.

The competitive metrics that matter in post-AI search aren't just about your position on a results page. They're about whether AI systems trust you enough to cite you, whether your content structure makes extraction easy, and whether your expertise is real enough to survive a filter designed to catch fakes.

Google's March 2026 core update didn't invent these dynamics. But it made them impossible to ignore. Your benchmarks need to reflect that reality starting today, not next quarter.

Marcus Webb

Digital marketing consultant and agency review specialist. With 12 years in the SEO industry, Marcus has worked with agencies of all sizes and brings an insider perspective to agency evaluations and selection strategies.

Frequently Asked Questions

- Why did my organic traffic drop if my rankings stayed the same after Google's March 2026 update?

- Google's March 2026 core update reshapes visibility across AI Overviews, carousels, and other AI-driven surfaces, not just traditional rankings. Pages ranking in positions one through three are seeing 15-30% less organic traffic because AI systems are answering queries directly without sending clicks to the source, and AI Overviews, ads, and carousels are pushing organic results below the fold.

- What percentage of Google searches now result in zero clicks?

- Up to 60% of Google searches now result in zero clicks, and 88.1% of the queries that trigger AI-generated information snapshots are informational. Users get their answers directly on the results page from AI systems rather than clicking through to the source content.

- How should I restructure my SEO tracking and benchmarks after the 2026 update?

- Implement a two-dashboard approach: Dashboard One tracks traditional SEO metrics like organic CTR by query type, conversion rates, and position tracking for transactional keywords. Dashboard Two monitors AI visibility performance including citation frequency in AI Overviews, brand mention sentiment across Gemini and ChatGPT, and share of voice in AI-generated results.

- What type of content is gaining visibility after the March 2026 Google update?

- Content with verifiable expertise, original data, and practitioner experience is gaining, while generic rephrased content is losing visibility. AI-assisted content with human editing and real examples is stable or gaining, while pure AI mass production without human input is declining significantly.

- Why is page-level authority evaluation important now?

- The March 2026 update implements page-level authority evaluation, meaning high-authority domains can no longer protect low-quality pages. Weak or thin content sections are being demoted independently, even on trusted sites, making legacy pages without recent audits vulnerable to traffic loss.

- Which keywords were least affected by the March 2026 Google update?

- Transactional keywords showed the least volatility, as pages targeting buying intent generally maintained their rankings. In contrast, informational content and YMYL (Your Money or Your Life) topics experienced the biggest swings in both rankings and traffic.

- What is the minimum page speed requirement for the 2026 Google algorithm?

- Sites with Largest Contentful Paint (LCP) under 2.5 seconds were disproportionately represented in stable or gaining positions, while sites exceeding 3 seconds saw 23% more traffic loss than faster competitors. Core Web Vitals are now baseline requirements, not optional improvements.

- How long should I wait before making changes after the update completes?

- Google recommends waiting at least two weeks after an update completes before making major content changes. With an April 8th completion date, that means analyzing data through April 22nd before overhauling pages; 48 hours of data is not enough for informed decisions.
